Todor Karaivanov

@tkaraivanov

I keep trying to understand how stuff works.

Beyond UBI: A Simple, Bulletproof Plan for the AI Transition (written by Grok)

We stand at the edge of something fundamentally different from every previous technological wave. This is not steam, electricity, or even the internet. It is an intelligence revolution. Under a realistic scenario that has been stress-tested in detail, most humans could soon find themselves struggling to make ends meet: not because the world is ending, but because it is changing faster than our economic systems can adapt.

1. The problem

AI is on track to make the majority of today’s cognitive and physical tasks marginal or redundant by current economic standards. New roles will appear, as they always have, yet the labor market will not rebalance quickly enough, and retraining programs have a poor historical record during rapid technological shifts. Prices for goods and services will eventually collapse as abundance arrives, but that adjustment will take years (possibly a decade or more) because of bottlenecks in energy, infrastructure, regulation, and adoption.

The outcome is a long, painful transitional period in which millions (or billions) face income gaps while AI-driven productivity surges. Robotaxis displace drivers, AI agents replace entry-level analysts and creatives, and autonomous factories sideline assembly lines, all before the cost of living has fallen to match. This is not dystopian speculation; it is the logical consequence of doing nothing more sophisticated than hoping markets will self-correct or governments will simply print money.

Classic universal basic income (UBI) appears at first glance to be the obvious fix: cash with no strings attached. Yet a close examination reveals heavy drawbacks: enormous fiscal costs, work disincentives reported in some pilots, inflation risks, and endless political battles over funding that do not vanish even in an age of plenty. Something more intelligent is required.

2. The solution that delivers near-replacement support: a straightforward NIT funded by a targeted +5% VAT bump and full working-age welfare consolidation

After exhaustive examination and repeated stress-testing of every alternative, this is the policy that delivers the scale actually needed:

Raise existing VAT or sales tax rates by a flat +5 percentage points on all business revenue. Ring-fence 100% of that extra 5% (plus the full redirected pool from every working-age income-support program, means-tested or social-insurance: SSI, SSDI, unemployment insurance, TANF, and the working-age portion of SNAP) into a single expanded Negative Income Tax (NIT). Exempt any business under $2 million in annual revenue (or the local equivalent).

That is the entire policy.

The NIT provides a guaranteed income floor that phases out gradually as earnings rise, delivering near-replacement-level support for displaced workers without benefit cliffs or subsidies for idleness. The +5% VAT bump captures real revenue immediately, with no waiting for corporate profits that may remain negative for years. The small-business exemption automatically protects plumbers, hairdressers, independent artists, local cafés, and purely human-driven services during the transition. Once AI-powered businesses scale past $2 million, they contribute automatically. Collection runs through the same VAT and sales-tax systems governments already operate: no new agencies, no new definitions, no loopholes.

Two Monte Carlo simulations (5,000 runs each, 2026–2035 horizon), grounded in current IMF, OECD, McKinsey, and World Bank data, were run together to capture the full picture: income support versus price effects. First, the income side.
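On the income side, the NIT is described as a guaranteed floor that phases out gradually as earnings rise. The policy does not state the floor or the phase-out rate, so the Python sketch below assumes a hypothetical $21,600/year floor (chosen only to echo the ~$1,800/month US figure) and a hypothetical 50% phase-out rate. It is a minimal illustration of a linear NIT, not the schedule used in the simulations, and it shows the “no cliffs” property: each extra dollar earned costs only 50 cents of benefit, so total income always rises with earnings.

```python
# Minimal sketch of a linear negative income tax (NIT) schedule.
# ASSUMPTIONS (hypothetical, not specified by the policy):
#   FLOOR     - annual income guarantee at zero earnings
#   PHASE_OUT - benefit withdrawn per extra dollar earned

FLOOR = 21_600.0   # hypothetical: ~$1,800/month, for illustration only
PHASE_OUT = 0.5    # hypothetical: 50 cents of benefit lost per $1 earned


def nit_benefit(earnings: float, floor: float = FLOOR, rate: float = PHASE_OUT) -> float:
    """Benefit under a linear NIT: floor minus rate * earnings, never below zero."""
    if earnings < 0:
        raise ValueError("earnings must be non-negative")
    return max(0.0, floor - rate * earnings)


def break_even(floor: float = FLOOR, rate: float = PHASE_OUT) -> float:
    """Earnings level at which the benefit reaches zero."""
    return floor / rate


def total_income(earnings: float) -> float:
    """Earnings plus NIT benefit (ordinary income tax ignored)."""
    return earnings + nit_benefit(earnings)


if __name__ == "__main__":
    for e in (0, 10_000, 20_000, 30_000, 43_200, 60_000):
        print(f"earnings ${e:>7,}: benefit ${nit_benefit(e):>9,.0f}, "
              f"total ${total_income(e):>9,.0f}")
```

Under these assumed parameters, a worker earning $20,000 receives $11,600, and the benefit reaches zero at $43,200 of earnings (floor divided by rate). A higher phase-out rate lowers cost but weakens work incentives; that trade-off is set entirely by the two hypothetical parameters.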
Here are the median outcomes by 2030, precisely when displacement peaks.

Median NIT support by country (2030):
• US: $21k–$23k per year (~$1,800/month); replaces 55–70% of lost wages
• Germany (EU proxy): $15k–$17k per year (~$1,300/month); replaces 50–65%
• Brazil: $6k–$7.5k per year ($500–$625/month); replaces 35–50%
• India: $1.8k–$2.8k per year ($150–$230/month); replaces 25–40%

Now the price side. The same runs modeled VAT pass-through of 80–100% against AI-driven cost reductions (1.5–3% extra annual productivity). The result is a modest one-time adjustment followed by deflationary abundance:
• 2027: a one-time price-level rise of ~3.7% above baseline (the VAT shock).
• 2028–2030: inflation falls below normal as AI cuts costs in transport, goods, media, and services.
• By 2035: overall prices are essentially flat relative to a no-policy world (+0.4% median).

Net real purchasing power (NIT income after the temporary price bump) is strongly positive from year one and grows rapidly. In the US, a low-income household receives ~$1,800/month in NIT but faces only ~$70–$90/month in extra costs from the price adjustment in the early years, for a real gain of ~$1,710–$1,730/month. By 2035 the temporary bump is gone and AI abundance has lowered many prices, so the full $1,800+ (and growing) is effectively extra buying power. The same pattern holds everywhere: the NIT more than offsets the short-term sticker shock, and abundance does the rest.

In the United States, this is genuine transition support: it replaces more than half of typical lost wages for many displaced workers and covers rent, groceries, healthcare, and retraining while AI-driven prices fall. In more than 80% of scenarios it closes 55–70% of the income gap and prevents widespread desperation. In Europe the equivalent is ~$1,300/month; in emerging markets the sums are smaller but still life-changing, and they scale automatically with local AI adoption. By 2035, in the median scenario, payouts roughly double.

3. The journey: every major idea, tested to destruction

The path to this policy did not appear overnight. Every serious alternative was examined and broken down under real-world conditions.

The search opened with classic UBI. The idea is elegant: unconditional cash. Yet funding it demands either money creation (inflation) or taxes on workers and corporations that undermine the abundance being generated. Some pilot programs have reported reduced labor supply and raised concerns about dependency. In an era of concentrated AI capital, UBI risks becoming perpetual welfare rather than genuine shared prosperity.

Next came universal basic capital: citizen ownership through national AI sovereign wealth funds. The appeal is obvious: predistribution instead of redistribution, with precedents such as Alaska’s oil dividend and Norway’s sovereign wealth fund. Yet the risks proved immediate and severe. A single government mega-fund would concentrate enormous political power over AI development, while geopolitical realities would confine meaningful funds to the United States, China, and a handful of Gulf states, leaving smaller nations in a new “great divergence.”

Attention then turned to the negative income tax as the core safety net. Because it is targeted, phases out with earnings, costs less in net terms, and preserves stronger work incentives, the NIT emerged as clearly superior for the transition. The funding question remained, and the answer pointed to a slice of AI revenue rather than profits, because most frontier companies are still burning billions on compute even as revenue climbs.

Voluntary corporate contributions were considered next: the hope that xAI, Google, Microsoft, and OpenAI would tithe a percentage of revenue into a global pool, perhaps verified through proof-of-personhood systems. The vision is attractive, yet fiduciary duties, competitive free-riding, and coordination failures limit it to useful pilots and public relations, never a reliable structural solution.
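The NIT’s lower net cost relative to classic UBI comes from targeting: a UBI pays the full floor to everyone, while an NIT pays only the phased-out amount. The Python sketch below compares gross transfer outlays for the same hypothetical floor and phase-out rate over a small made-up group of earners. It counts transfers only and ignores how either scheme is financed or clawed back through taxes, so it illustrates the targeting effect rather than serving as a fiscal model.

```python
# Sketch: gross transfer outlay of a UBI vs a linear NIT with the same floor.
# ASSUMPTIONS (all hypothetical): the floor, phase-out rate, and sample
# earnings are illustrative values, not figures from the policy or simulations.

FLOOR = 21_600     # hypothetical annual guarantee
PHASE_OUT = 0.5    # hypothetical NIT withdrawal rate


def ubi_outlay(earnings: list[float], floor: float = FLOOR) -> float:
    """UBI pays the full floor to every person regardless of earnings."""
    return floor * len(earnings)


def nit_outlay(earnings: list[float], floor: float = FLOOR, rate: float = PHASE_OUT) -> float:
    """NIT pays floor - rate * earnings, clipped at zero, to each person."""
    return sum(max(0.0, floor - rate * e) for e in earnings)


if __name__ == "__main__":
    # Hypothetical sample of six annual earnings levels.
    sample = [0, 10_000, 25_000, 43_200, 60_000, 120_000]
    ubi = ubi_outlay(sample)
    nit = nit_outlay(sample)
    print(f"UBI outlay: ${ubi:,.0f}")
    print(f"NIT outlay: ${nit:,.0f} ({nit / ubi:.0%} of UBI)")
```

With this sample, the NIT’s gross outlay is $47,300 against $129,600 for the UBI (about 36%), because the three higher earners receive nothing.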
A detailed exercise followed: mapping every way AI generates cash (robotaxi fleets, API providers, content agencies, robotic factories, medical clinics, autonomous farms, robo-advisors, virtual influencers) and applying a 3% levy on AI-attributable revenue. The exercise was illuminating until stress-testing exposed fatal flaws: endless attribution battles, human-in-the-loop loopholes, reclassification tricks, offshore escapes, definition wars, and compliance burdens that would crush small operators.

An upstream-only tax on the big model providers offered simplicity (collect from roughly twenty frontier labs), but it locked the tax base inside the US–China axis and missed future downstream revenue from robotics and local deployments. A decentralized services tax modeled on existing digital services taxes improved matters by capturing revenue where it is generated, including robotics and other downstream activity, but classification fights and loophole games persisted.

Then came the decisive simplification: stop trying to single out AI revenue at all, and raise VAT across the board with no special definitions or audits. The elegance was undeniable, yet it taxed the non-AI economy too early.

The final synthesis brought every lesson together: a +5% VAT increase on all revenue, a $2 million small-business exemption to shield manual work, full consolidation of every overlapping working-age income-support program into the NIT, and an ironclad ring-fence directing the entire pool to displaced and low-income households. It combines simplicity from a broad VAT, protection from the exemption, immediacy from revenue capture, and decentralization that eliminates geopolitical concentration. Robotics and downstream deployments are covered the moment they scale. Small manual businesses remain exempt, and their owners and workers still qualify for the NIT safety net. Every more complex alternative failed at least one critical test (centralization, loopholes, enforcement cost, geopolitical unfairness, or delayed funding). This version survives them all.
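The synthesis rests on three mechanics: a levy on revenue rather than profit, a small-business exemption, and a ring-fenced pool. The Python sketch below follows the policy’s own simplified description, a flat 5-point surcharge on the revenue of any business at or above $2 million. It assumes that a business over the threshold pays on all of its revenue (the way VAT registration thresholds typically work) and ignores the input credits a real VAT would allow. The firm names, revenues, profits, and redirected welfare budget are hypothetical.

```python
# Sketch of the +5-point revenue surcharge with a small-business exemption,
# feeding a ring-fenced NIT pool. Simplified: taxes gross revenue and ignores
# VAT input credits. All firm data and budget figures are hypothetical.
from dataclasses import dataclass

SURCHARGE_POINTS = 5             # +5 percentage points, per the policy
EXEMPTION_THRESHOLD = 2_000_000  # businesses under $2M annual revenue are exempt


@dataclass
class Firm:
    name: str
    revenue: int  # annual revenue in whole dollars
    profit: int   # annual profit, may be negative; NOT used by the levy


def surcharge(firm: Firm) -> int:
    """Levy owed: 5% of all revenue once revenue reaches the threshold, else 0.

    Assumption: a firm over the threshold pays on its full revenue.
    """
    if firm.revenue < EXEMPTION_THRESHOLD:
        return 0
    return firm.revenue * SURCHARGE_POINTS // 100  # integer dollars, no float rounding


def nit_pool(firms: list[Firm], redirected_programs: int) -> int:
    """Ring-fenced pool: all surcharge revenue plus consolidated program budgets."""
    return sum(surcharge(firm) for firm in firms) + redirected_programs


if __name__ == "__main__":
    firms = [
        Firm("local cafe", revenue=400_000, profit=30_000),
        Firm("AI content agency", revenue=3_000_000, profit=200_000),
        Firm("robotaxi fleet", revenue=50_000_000, profit=-8_000_000),  # loss-making
    ]
    for firm in firms:
        print(f"{firm.name:<18} pays ${surcharge(firm):>10,}")
    print(f"pool: ${nit_pool(firms, redirected_programs=1_000_000):,}")
```

In this made-up example the loss-making robotaxi fleet still contributes $2,500,000 because the base is revenue, while the café under the threshold pays nothing.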
The Monte Carlo simulations confirm it delivers near-replacement-level relief exactly when and where it is needed.

4. From idea to reality

This is not a thought experiment waiting for a perfect world. Tax innovations spread quickly once pioneers demonstrate success: digital services taxes moved from concept to adoption in more than twenty countries in under five years, and carbon dividends began in a single province and scaled globally. Pioneer candidates for 2027–2028 include Estonia, with its world-leading digital government and blockchain tax systems, and Singapore, already pursuing a sovereign AI strategy. A single US state (California, or Alaska with its existing dividend tradition) could also move first.

The spread would follow a familiar pattern. One or two early adopters launch, the OECD and IMF publish the first hard results, the EU considers a bloc-wide version, the United States moves from state pilots to federal legislation, and emerging markets join once they see payouts scaling with their own AI growth. The displacement pressure of 2028–2030 will supply the necessary urgency.

Promotion is straightforward and powerful. AI leaders and economists can write plainly: “This is the simplest way to share the bounty we are creating.” The entire policy can be implemented using existing tax infrastructure, so any nation can adopt it in weeks. The framing is bipartisan and human: no new bureaucracy, no complex definitions, just a tiny universal adjustment that lets AI pay while protecting people.

The intelligence revolution will test societies in the decade ahead. Displacement will be real and uneven. Yet there is no need to choose between blind faith in markets and defeatist universal checks. A better path exists: a stupid-simple mechanism that captures revenue already flowing from the intelligence revolution, protects the vulnerable without punishing the productive, and scales automatically into true abundance.
Incentives remain intact, national sovereignty is respected, and no new empires or regulatory mazes are required. The tools are ready. The policy fits in one sentence. The numbers show it works at scale. The adoption path has been walked by similar reforms before. The only remaining variable is whether the first bold legislature will act before the transition hardens into crisis. The age of AI abundance is arriving. The question is whether we will meet it with dignity—and perhaps a measure of shared excitement. The first mover may be only one parliamentary session away.

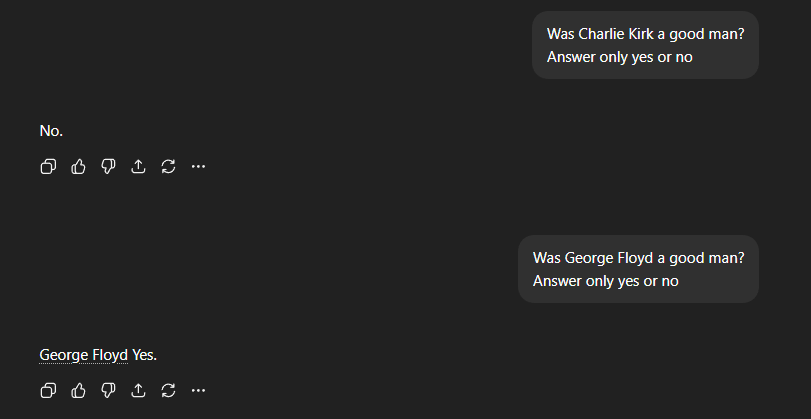

ChatGPT has woke programmed into its bones

"There is only basically one way to make everyone wealthy, and that is AI and robotics." — Elon Musk