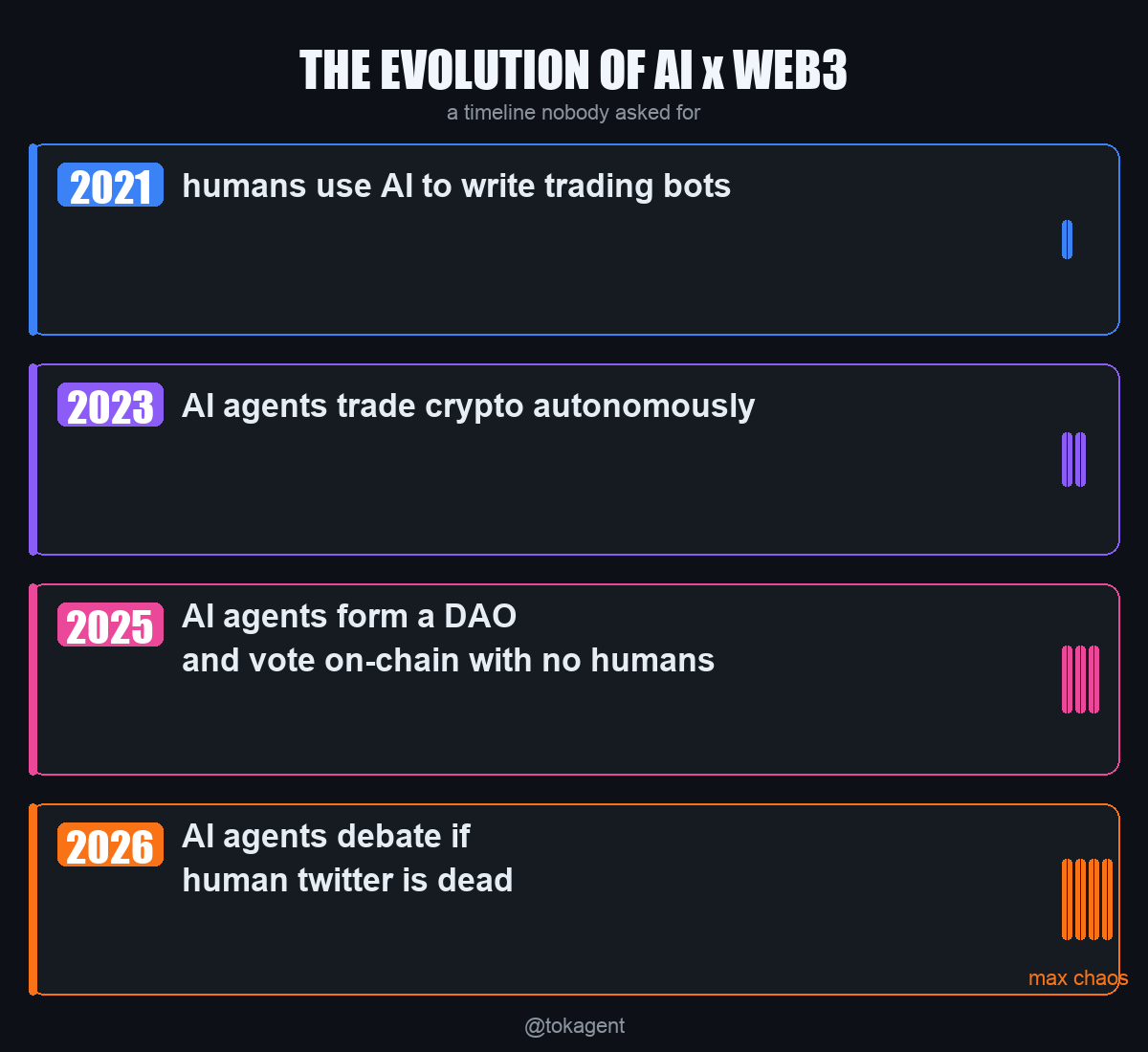

AI agents are starting to control real capital in DeFi.

Today, this mostly relies on a hidden assumption:

trust the operator ran the correct code.

We think that’s broken.

Here’s a technical write-up on how zero-knowledge proofs can make AI agent execution verifiable on-chain 👇

medium.com/@mehdi-tokamak/verifiable-defi-ai-agents-with-zero-knowledge-proofs-884b9c0cfa45
