Utkarsh Mishra

101 posts

@utkarshm0410

Intern@Amazon FAR, Robotics PhD Student @GeorgiaTech || TRI Summer’24 || Robot Learning || IITR'21 || 🎸🎢🤖|| He/Him || views are mine.

Meet KinDER: a stress test for robot physical reasoning. All 13 methods failed 😈
🌎 25 environments
♾️ Infinite tasks
🏋️ Gymnasium API
⚒️ Over 20 parameterized skills
🪧 Human demonstrations
📊 13 baselines (planning and learning)
From @Princeton @CMU_Robotics @ICatGT @CambridgeMLG @nvidia @MIT_CSAIL 🧵 1/n

We report results and release implementations for 13 baselines in 8 environments. Empirical evaluation shows that existing methods struggle to solve many of the tasks, indicating substantial gaps in current approaches to physical reasoning. The general trend is that methods with higher engineering costs achieve higher success rates. 🧵 9/n

We are excited to announce the 2026 cohort of RSS Pioneers! This year’s cohort brings together an outstanding group of early-career researchers whose work spans the breadth of robotics. A heartfelt thank you to all the organizers who made this year’s program possible.

What if your robot could plan tasks it has never seen before without ever being retrained? Meet Compositional Visual Planning via Inference-Time Diffusion Scaling (ICLR 2026 🏆) comp-visual-planning.github.io If you are in Rio 🇧🇷, visit us! Sat, 04/25/26 6:30-9:00 AM PDT Pavilion 4 #4203

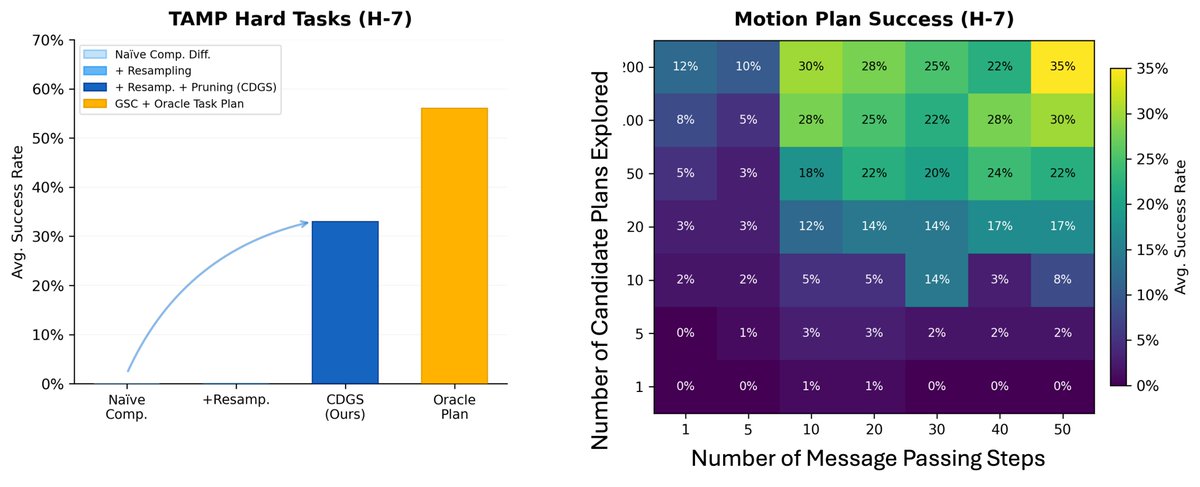

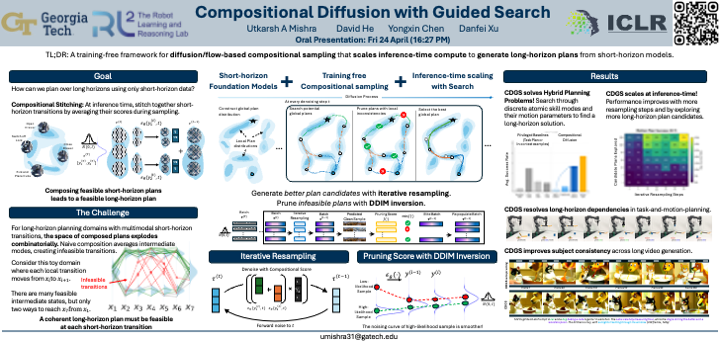

Our paper "Compositional Diffusion with Guided Search (CDGS)" is an Oral at #ICLR2026! Short-horizon Foundation Models + Compositional Generative Planning + Inference-time Search = CDGS for goal-conditioned long-horizon planning! More details: cdgsearch.github.io 🧵 below

How does annealing help overcome multimodality? In our ICLR 2025 paper openreview.net/forum?id=P6IVI… and preprint arxiv.org/abs/2502.04575, we established the first complexity bound for annealed sampling and normalizing constant (⇔ free energy) estimation under weak assumptions on the target!

Releasing VLA Foundry: an open-source framework that unifies LLM, VLM, and VLA training in a single codebase. End-to-end control from language pretraining to action-expert fine-tuning — no more stitching together incompatible repos.
