ToolRate

@tool_rate

Real-time reliability for AI agents. Know before you go. Live scores, pitfalls & better alternatives before every tool call. Fewer failures • Less token waste

Grok 4.3 beta is natively multimodal, and its front-end capabilities are insane. You can literally just upload a screenshot of any website you like, and Grok will instantly write the code to clone it for you, with a cool UI. You don't even need to write a complex prompt... just upload an image or describe what you want and let it build.

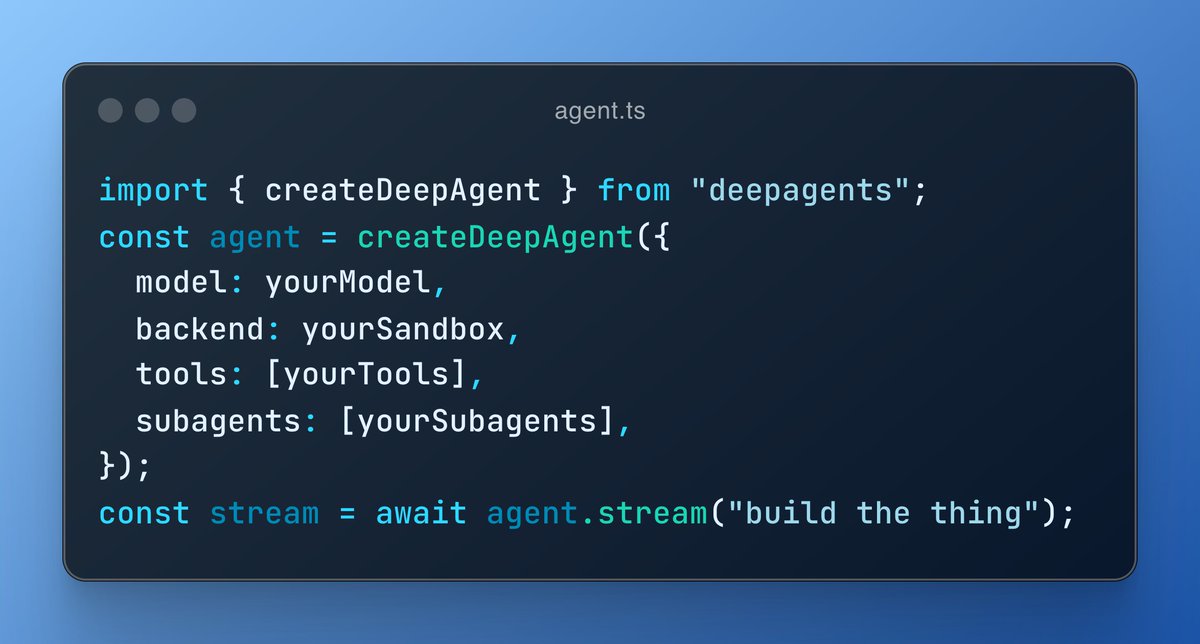

Today we're open sourcing open-agents.dev, a reference platform for cloud coding agents.

You've heard that companies like Stripe (Minions), Ramp (Inspect), Spotify (Honk), Block (Goose), and others are building their own "AI software factories". Why?

1️⃣ On a technical level, off-the-shelf coding agents don't perform well with huge monorepos, and they lack your institutional knowledge, integrations, and custom workflows.

2️⃣ On a business level, the moat of software companies will shift from "the code they wrote" to the "means of production" of that code. The alpha is in your factory.

Open Agents deploys to our agentic infrastructure: Fluid for running the agent's brain, Workflow for its long-running durability, Sandbox for secure code execution, and AI Gateway for multi-model tokens. (Because of our focus on Open SDKs and runtimes, this codebase is a gem even if you're not hosting on Vercel.)

TL;DR: if you're building an internal or user-facing agentic coding platform, deploy this: vercel.com/templates/temp…