Rasel Khan

10.3K posts

@trkweb3

Finding early airdrops & real alpha in Web3

GMGN CT ☕️
Most DeFi protocols quietly depend on user anxiety. They make more money when people keep moving capital, chasing yields, rotating pools, and constantly reopening risk.
That’s why the latest @TermMaxFi numbers are so interesting. TVL is still up around 2% over the last 7 days. Fees are down roughly 37%. In most protocols, that would look bearish. In fixed-rate markets, it may actually be a maturity signal.
Because once users lock duration, lock funding costs, and lock predictable yield, the protocol no longer needs hyperactive capital to survive. Capital stops bouncing. It starts sitting. And honestly, that changes the business model of DeFi more than people realize.
Most lending protocols monetize uncertainty. Floating rates, constant repricing, endless repositioning. Activity becomes revenue. Fixed-rate flips that logic. The healthier the market gets, the less users need to touch their positions.
That’s a weird thing to say in crypto, where “engagement” is usually treated like proof of growth. But if users can enter a position, understand the risk upfront, and comfortably hold through maturity, lower interaction volume may actually mean the system is working.
A lot of TermMax’s architecture already points in that direction. Fixed maturities reduce reflexive decision-making. Vaults reduce active management. Range Orders smooth pricing into a curve instead of forcing constant repricing. Isolated collateral keeps risk contained instead of socialized.
So the protocol starts optimizing for something different: not how often capital moves, but how confidently capital stays.
Obviously, one week of fee compression alone proves nothing. Market conditions, gas patterns, and user behavior still matter. But if fixed-rate DeFi keeps evolving this way, the next generation of protocols may stop competing for the most active users. They may start competing for the users who feel the least need to act at all.

GMGN CT ☕️
I think a lot of people still misunderstand what products like @TermMaxFi are actually changing. It’s not just fixed-rate lending. It’s the removal of forced decisions.
Most people don’t blow up because they’re stupid. They blow up because DeFi keeps forcing them to act under pressure. Rates spike. Collateral drops. Funding changes. Positions need refinancing. Something suddenly demands a decision right now. And humans are terrible at making decisions when the clock is attacking them. That’s why so many users end up trapped in a loop of reacting instead of planning.
What TermMax is building feels different because both sides of the system reduce that pressure at the same time. Alpha no-liquidation positions define the worst-case loss upfront through a fixed premium. No traditional margin calls. No random liquidation panic halfway through the trade. Fixed-rate lending locks borrowing cost and maturity from day one, so users are not waking up to floating-rate chaos or unexpected refinancing stress.
The psychological effect is bigger than people realize. Your brain stops thinking: “How fast do I need to react?” And starts thinking: “Can this position survive until maturity?” That sounds small. It’s not. That is a completely different relationship with risk.
A lot of DeFi was designed around constant stimulation. Constant movement. Constant intervention. The protocols made money when users stayed nervous. But low-volatility markets are changing user behavior now. People are getting tired of managing positions every hour just to protect yield that disappears anyway. Predictability is starting to feel valuable again.
That’s why terms like “fixed duration,” “known cost,” and “no liquidation stress” keep showing up in community discussions around TermMax. Not because people suddenly became conservative. Because exhaustion eventually changes behavior.
Of course, none of this means “risk-free.” Premiums can still be lost. Fixed maturities still require planning. Complex structures still depend on execution holding up under stress. But the direction feels important.
Old DeFi rewarded the fastest reaction time. The next phase may reward the people who need to react the least.

Most DeFi lending still works like this: You deposit capital into a pool and accept whatever rate the market hands you. @TermMaxFi is quietly breaking that model. Range Orders let lenders quote rates instead of blindly taking them. That changes everything.
Once lenders can choose where liquidity sits on the curve, fixed income stops being a passive pool and starts becoming a real market. Lower rates fill first. Higher rates wait for demand. Capital no longer moves as one blob chasing APY. It starts behaving like an order book.
Uniswap V3 gave LPs control over price ranges. TermMax is giving lenders control over rate ranges. Same market structure shift. Different asset: time.
And honestly, I think most people still underestimate how big that is. Because once lenders gain pricing power, yield stops being purely “market-driven.” It becomes partially “liquidity-positioned.” Returns start depending on curve placement, fill quality, duration demand, and patience. That’s much closer to fixed-income trading than traditional DeFi lending.
Which is also why Vaults matter more at scale. At that point, users are no longer outsourcing yield generation. They’re outsourcing duration management, liquidity routing, and rate positioning to curators who actively manage the curve for them.
The interesting part is that this only really becomes visible once TVL gets large enough. Small systems can survive on APY. Large systems eventually need market structure. Feels like DeFi lending is slowly crossing that line right now.
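
To make the "lower rates fill first, higher rates wait for demand" idea concrete, here is a rough sketch of rate-priority filling. It is a toy model, not TermMax's actual Range Order mechanism: `LenderQuote`, `fill_borrow`, `average_rate_bps`, and the sample lenders and numbers are all made up for illustration, and real Range Orders spread liquidity along a curve rather than quoting at single points.

```python
from dataclasses import dataclass


@dataclass
class LenderQuote:
    lender: str     # hypothetical lender label
    rate_bps: int   # quoted fixed rate, in basis points
    size: float     # liquidity offered at that rate


def fill_borrow(quotes, amount):
    """Fill a borrow request against lender quotes, cheapest rate first.

    Returns (fills, unfilled): fills is a list of (lender, rate_bps, size)
    tuples, unfilled is whatever demand the quotes could not cover.
    """
    fills = []
    remaining = amount
    for quote in sorted(quotes, key=lambda q: q.rate_bps):
        if remaining <= 0:
            break  # demand is met; higher-rate quotes keep waiting
        take = min(quote.size, remaining)
        fills.append((quote.lender, quote.rate_bps, take))
        remaining -= take
    return fills, remaining


def average_rate_bps(fills):
    """Size-weighted average rate the borrower ends up paying."""
    total = sum(size for _, _, size in fills)
    if total == 0:
        return 0.0
    return sum(rate * size for _, rate, size in fills) / total


# Assumed sample book: three lenders quoting 5.00%, 6.50%, and 8.00%.
quotes = [
    LenderQuote("carol", 800, 100.0),
    LenderQuote("alice", 500, 100.0),
    LenderQuote("bob", 650, 100.0),
]
fills, unfilled = fill_borrow(quotes, 150.0)
print(fills)                    # alice fills fully, bob partially, carol waits
print(average_rate_bps(fills))  # blended borrow rate in basis points
```

In this toy book, a lender's return depends on where the quote sits: alice is filled in full at the lowest rate, bob is half filled, and carol earns nothing until demand reaches her level. That is the curve placement versus fill quality trade-off described above.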

DeFi is quietly splitting into two eras. The first era was built on promises. The next one will be built on evidence.
@TermMaxFi accidentally showed the difference this week. After the Puzzle Task bug, they didn’t just push a backend fix and call it a day. The recovery process itself became publicly verifiable: historical Dual Vault deposits, another 5-day Earn Vault hold, wallet-by-wallet checks, then unlocks for the 88 qualified addresses.
Messy? Yeah. But that’s exactly why it mattered. Because most protocols only look transparent when nothing breaks. The real test is whether users can independently verify what happened after things go wrong.
That’s where most DeFi systems still fall apart. Support tickets replace proofs. Spreadsheets replace state. “Trust the team” quietly becomes the final layer of infrastructure.
TermMax took a different route here. Not perfect. Still partially manual. Some wallets failed verification. But the important part is that the protocol exposed the receipts instead of hiding the process behind ops language.
And honestly, I think this becomes a much bigger theme over the next cycle. The winners probably won’t be the protocols with the cleanest marketing or the loudest “community-first” slogans. They’ll be the ones that leave behind auditable evidence when something breaks.
Because eventually, every protocol hits failure. What changes the game is whether users are asked to trust the explanation... or verify the record.

MEXC LISTING CONFIRMED
With $1.4M daily on-chain volume already, AtlasOra now goes live on @MEXC, one of the biggest exchanges, home to 40M+ users.
Deposits: open now
Trading: 26 May, 13:00 UTC
Pair: $AORA / USDT
MEXC is the first centralised venue for $AORA, opening centralised liquidity alongside the Aerodrome onchain market.
Contract address (Base): 0x6E84030FA86EBf585E3E18fe557e5612f7e93Bff
We are just getting started this week.

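It is already May 25, and the last month of Q2 is almost here. TermMaxFi originally said it expected a Q1 TGE. Then, because early market conditions were poor, the whitepaper formally set the TGE for Q2. If there are no further delays, the TGE should come in mid-June, or at the latest by the end of next month. If it slips again, there really will be no end in sight!!
I have been writing for almost 6 months now. I have honestly never seen a project run a post-to-earn campaign this long. Complaints aside, the writing has to continue.
DeFi's past looked more like a "liquidity era". The market revolved around:
- liquidity mining
- high-frequency capital flows
- short-term yield competition
- real-time price speculation
That model drove the industry's boom, but it also kept the whole market stuck in a high-volatility, high-emotion state.
TermMaxFi represents a different direction: time-based finance, structured finance, long-term capital finance.
Fixed rates and defined maturities look like a mere change in lending rules, but what they really change is the operating logic of the whole market. Once capital starts being organized around time, the market gradually develops:
- stable yield structures
- long-term capital allocation
- risk tranching
- credit pricing capability
- a yield curve
These are the real foundations of a mature financial system.
More importantly, TermMaxFi is moving DeFi from being "variable-driven" toward being "structure-driven".
In the past, much of the yield came from:
- sentiment
- volatility
- capital flows
- market narratives
In the future, more and more yield may come from:
- rate spreads
- cycle management
- cash flows
- term structure
- capital allocation skill
That means on-chain finance will look more and more like a real financial system rather than just a trading market.
At the same time, the growth of RWA ecosystems such as OndoFinance makes this trend even clearer. As tokenized stocks and real-world assets move on-chain, the market needs fixed rates, term structure, and long-term financing capacity all the more. Real-world finance is, at its core, built on time and credit.
What TermMaxFi is doing is adding that layer to the on-chain world. It is not just building a lending protocol. It is closer to laying the financial foundation for the next generation of DeFi.
The DeFi of the future may no longer be only about "who has the highest yield", but about whose structure is more stable, whose pricing of time is more mature, and who can actually carry long-term capital.
And this shift may be the real turning point that decides the industry's next ten years.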

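Wall Street has closing hours, but at Huobi HTX, we never close! 🚀
The TradFi zone is officially live. For US stock futures trading, come to HTX!
Trade popular US stock contracts such as Tesla, NVIDIA, and commercial aviation directly with USDT, around the clock 24/7, long or short as you choose.
Update the app now to try it 👇 #TradFi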

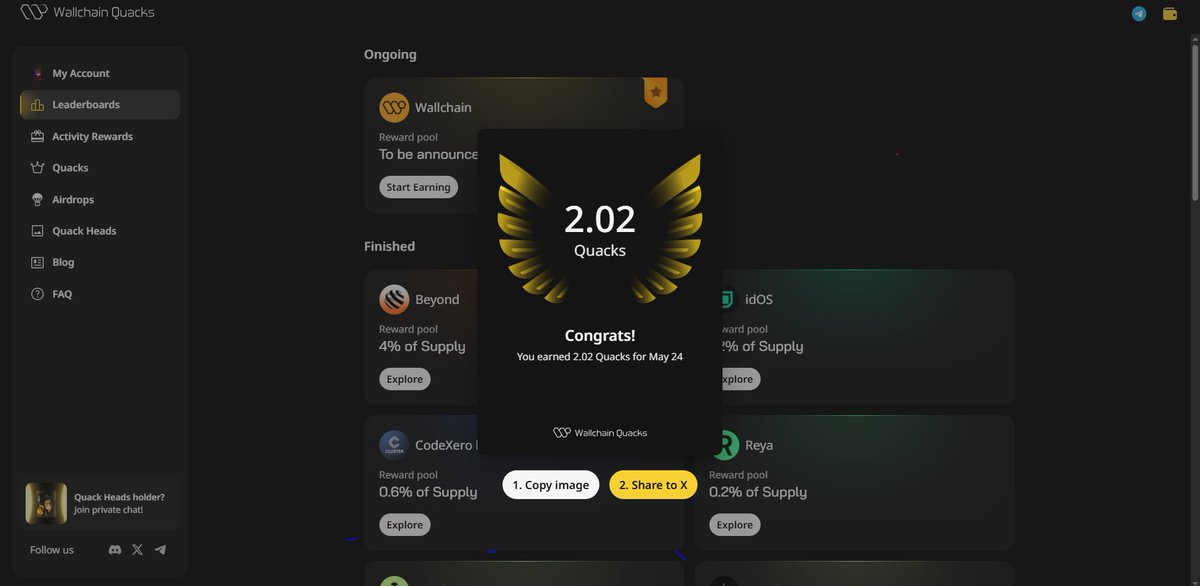

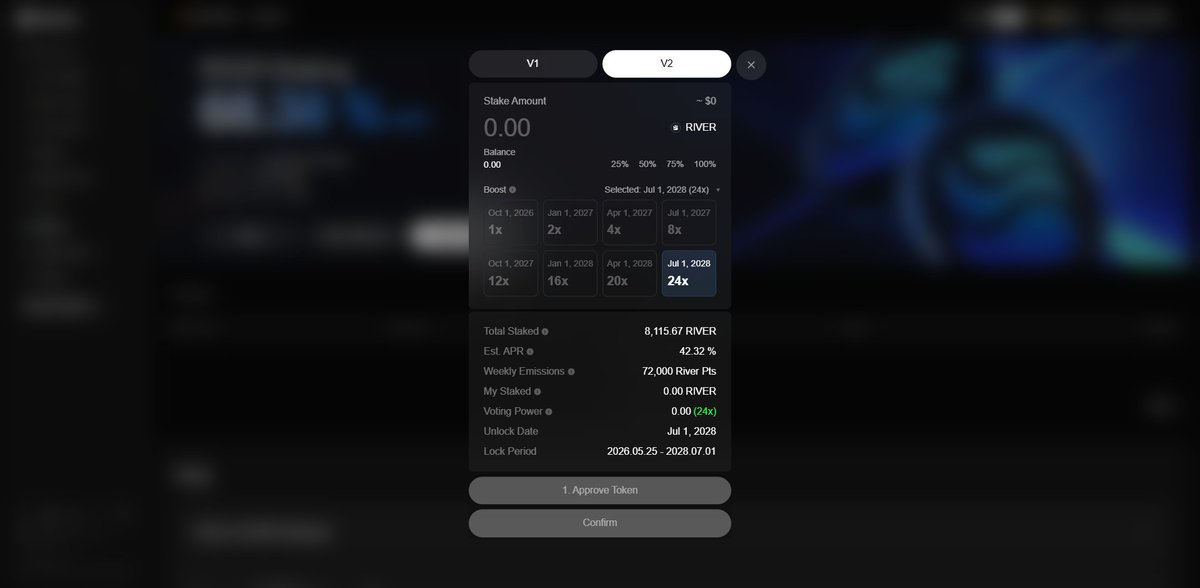

Today I earned 3.76 Quack, and lately I’ve been steadily conquering the goals I set for myself on @wallchain. And today, I officially completed one out of the five goals I set for myself: earning my first 100 Quack. Yes… today is a special day for me because I finally completed 1/5 of my Wallchain journey goals. So let me summarize all five goals again below:
1⃣/ Reach 50 X Score
> Current progress: 29 X Score
> I’ll continue pushing myself every day to increase it even higher.
> Completion progress: 48%
2⃣/ Earn my first 100 Quack
> Current progress: 100% COMPLETE
=> New goal unlocked:
> Earn 500 Quack
3⃣/ Appear on the leaderboards for the next Wallchain campaign
> Progress: 0%
> Still waiting for the next Wallchain campaign announcement
4⃣/ Own 10 Quack Heads NFT
> Current progress: #1179 #1482 #1386 #223
> Total owned: 4 NFTs
> Completion progress: 40%
5⃣/ Reach Top 1-100 on both the 7D and 30D leaderboards
> Current rankings: #174 on the 7D leaderboard, #197 on the 30D leaderboard
> Completion progress: 0%
=> Because I still haven’t officially entered the Top 100 on both leaderboards yet.
I’m still very optimistic about Wallchain and my daily Quack journey. I’ll keep pushing harder and stay consistent all the way through until I complete the remaining four goals I’ve set for myself.
And what about @River4fun from @RiverdotInc? Honestly, I still haven’t made any major progress there yet. After earning another 10.67 Pts, it immediately dropped back down to zero again. My current ranking is now around #1070, which is quite a drop, and my total balance is currently 234 Pts. And with only 37 days left before $RIVER Season 5 ends, I honestly don’t know whether I’ll be able to reach 1000 Pts or not… But that’s my goal for the next 37 days. And yes, despite everything, I’m still optimistic about River too.

"The Quacker's adventures begin..." 🦆 What happens next? You decide. Create your own continuation and quote this post. 🏆 3 best videos win 3 NFTs. How to enter: - create your own continuation of the story - quote this post with your AI video, however you imagine it Let the Quacker lore expand.

Good night, my CT! Let's count our rewards today:
@wallchain: bagged 6.26 Quack
@XOOBNetwork: climbed up to 5867 pts
@3look_io: just saw a new campaign, @Appreciators_IO, with only 8 days
@quipnetwork x @TheARCTERMINAL: my ranking this week is at 2xx with both projects
@NucleusCodes x @sleepagotchi: luckily I saw my ranking today
Finally, @0G_labs: not giving up yet
What's yours, my bosses?

We just launched the ability to build native Android apps directly in Google AI Studio for free! Since launch last week, people have created more than 250,000 Android apps. Likely >99% of these folks never built an Android app before. Everyone can now build, no coding required!

Get 50% of your losses covered on EVEDEX 🪂
- Deposit $500+
- Hit the trading volume target
- Loss coverage applies automatically
With @rhinofi your deposit converts and lands instantly from 60+ networks.