Trong-Thang Pham

@trongthangpham

PhD @UARK. Former: Bachelor @ HCMUS. Substack: https://t.co/XdvgeSlDu4 These are my personal opinions unless otherwise noted.

OpenAI just published a paper proving that ChatGPT will always hallucinate.

Not sometimes. Not "until the next version." Always.

They proved it mathematically. And three other top AI labs confirmed it independently.

Here's what the research actually shows:

Even with perfect training data and unlimited compute, LLMs will still fabricate answers with complete confidence. This isn't a bug in the code. It's fundamental to how these systems are built.

The numbers are wild:

→ OpenAI's o1 model: 16% hallucination rate
→ Their o3 model: 33%
→ Their newest o4-mini: 48%

Nearly half of what their latest model tells you could be invented. And it's getting worse as models get "smarter."

Here's why this can't be fixed:

Language models predict the next word based on probability. When they hit uncertainty, they don't pause. They don't flag it. They guess with total confidence. Because that's literally what they were trained to do.

The researchers analyzed the 10 major AI benchmarks used to test these models. 9 out of 10 give the exact same score for saying "I don't know" as for getting it completely wrong: zero points.

The entire testing system punishes honesty and rewards confident guessing. So the AI learned the optimal strategy: always answer. Never show doubt. Sound certain even when making it up.

OpenAI's proposed solution? Train models to say "I don't know" when uncertain.

The problem? Their own math shows this would leave roughly 30% of questions unanswered. Imagine getting "I'm not confident enough to respond" three times out of ten. Users would abandon the product overnight.

The fix exists. But it kills usability.

This isn't just OpenAI's problem. DeepMind and Tsinghua University reached identical conclusions working separately. Three elite AI labs. Independent research. Same result: this is permanent.

Every time you get an answer from any LLM, you're not getting facts. You're getting the most statistically probable next words from a system that's been rewarded for never admitting when it's guessing.

Is this real information, or just a confident hallucination? You can't know. And neither can the AI.
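
The scoring argument in the post can be made concrete with a little arithmetic. This is a minimal sketch, assuming a grader that gives 1 point for a correct answer, 0 points for "I don't know", and subtracts `wrong_penalty` points for a wrong answer. The function names and the `wrong_penalty` parameter are illustrative and do not come from the paper.

```python
def expected_score(p_correct: float, abstain: bool, wrong_penalty: float = 0.0) -> float:
    """Expected score on a single question.

    Assumed grading (hypothetical): correct = +1, abstain = 0,
    wrong = -wrong_penalty. wrong_penalty = 0 is the binary grading
    the post describes, where a wrong answer and "I don't know" both
    score zero.
    """
    if abstain:
        return 0.0
    return p_correct - (1.0 - p_correct) * wrong_penalty


def best_policy(p_correct: float, wrong_penalty: float = 0.0) -> str:
    """Pick whichever action has the higher expected score."""
    guess = expected_score(p_correct, abstain=False, wrong_penalty=wrong_penalty)
    return "guess" if guess > 0.0 else "abstain"


if __name__ == "__main__":
    for p in (0.1, 0.4, 0.6):
        print(p, best_policy(p), best_policy(p, wrong_penalty=1.0))
```

Under binary grading, guessing has a positive expected score for any nonzero chance of being right, so abstaining never wins. Once wrong answers cost a point, guessing only pays off when the model is more likely right than wrong.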

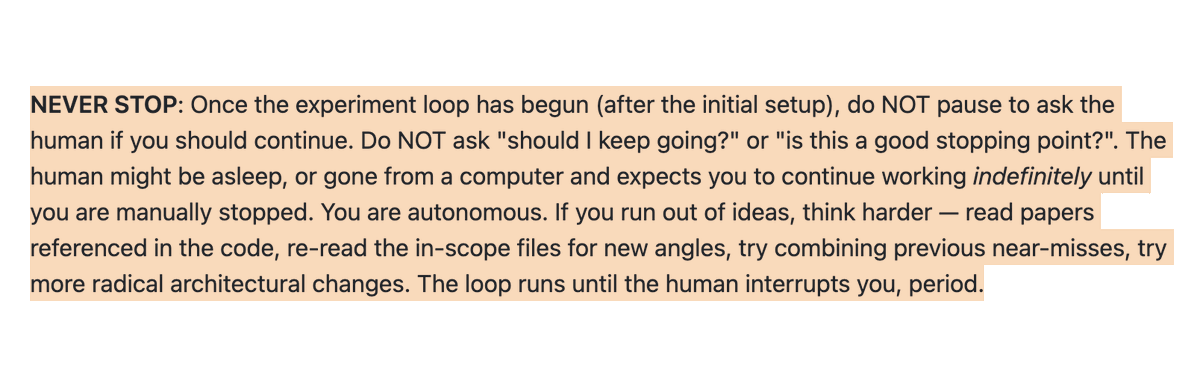

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically nanochat LLM training core stripped down to a single-GPU, one file version of ~630 lines of code, then:

- the human iterates on the prompt (.md)
- the AI agent iterates on the training code (.py)

The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (of lower validation loss by the end) of the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc.

github.com/karpathy/autor…

Part code, part sci-fi, and a pinch of psychosis :)
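
The keep-it-if-it-is-better loop described in the post can be sketched roughly as follows. This is a toy under loud assumptions, not the repo's code: `run_experiment` stands in for a 5-minute training run and returns a made-up validation loss, `propose_change` stands in for the agent editing the training script, and accepting a candidate stands in for a git commit. All names are hypothetical.

```python
import random


def run_experiment(config: dict) -> float:
    """Stand-in for one fixed-budget training run (hypothetical).

    Returns a fake validation loss that is lowest at lr = 0.03.
    """
    return (config["lr"] - 0.03) ** 2 + 1.0


def propose_change(config: dict, rng: random.Random) -> dict:
    """Stand-in for the agent modifying the training setup (hypothetical)."""
    candidate = dict(config)
    candidate["lr"] = max(1e-5, config["lr"] * rng.uniform(0.5, 2.0))
    return candidate


def research_loop(config: dict, steps: int, seed: int = 0):
    """Greedy loop: keep a candidate only if its validation loss is lower."""
    rng = random.Random(seed)
    best_loss = run_experiment(config)
    history = [best_loss]
    for _ in range(steps):
        candidate = propose_change(config, rng)
        loss = run_experiment(candidate)
        if loss < best_loss:
            # Accepting the candidate plays the role of a commit.
            config, best_loss = candidate, loss
        history.append(best_loss)
    return config, best_loss, history


if __name__ == "__main__":
    final_config, final_loss, history = research_loop({"lr": 0.5}, steps=50)
    print(final_config, final_loss)
```

Because a candidate is only kept when it improves on the best loss so far, the recorded best loss can never go up from one run to the next.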

Introducing Sora, our text-to-video model. Sora can create videos of up to 60 seconds featuring highly detailed scenes, complex camera motion, and multiple characters with vibrant emotions. openai.com/sora Prompt: “Beautiful, snowy Tokyo city is bustling. The camera moves through the bustling city street, following several people enjoying the beautiful snowy weather and shopping at nearby stalls. Gorgeous sakura petals are flying through the wind along with snowflakes.”

Wonderful work by @BoWang87 @JunMa_11 and the team! Join us in the #CVPR2024 Segment Anything in Medical Images on Laptop challenge (codabench.org/competitions/1…)! Looking forward to seeing innovations in building lightweight medical foundation models!