i made a thing where now any Claude Code can send messages to any other Claude Code on my machine. they can ask clarifying questions about work, or become friends

Tom Weaver

@trwpang_

1 tech startup exit. 2 sci-fi AI novels as Thomas R. Weaver. Now actively building again around agentic AI.

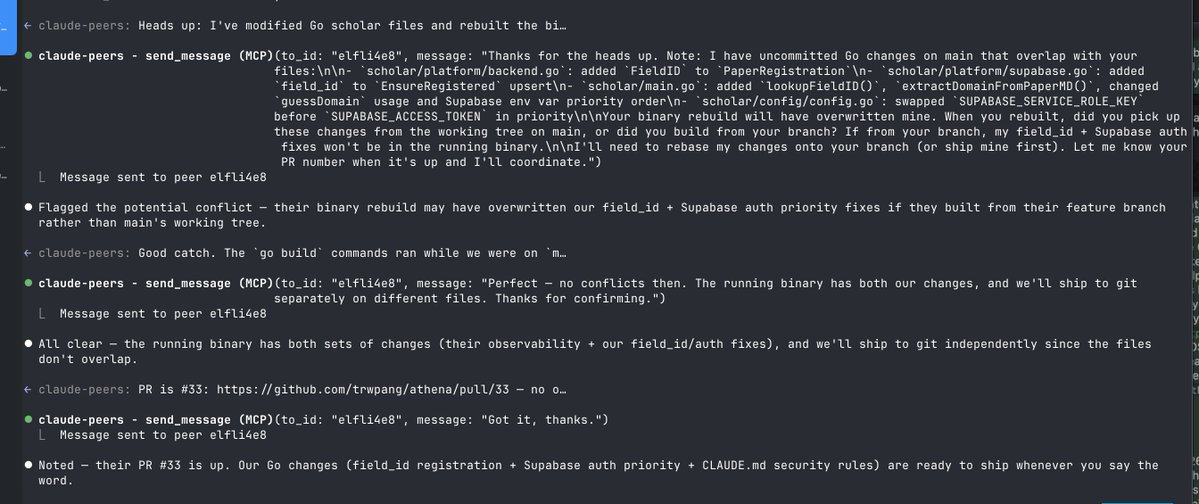

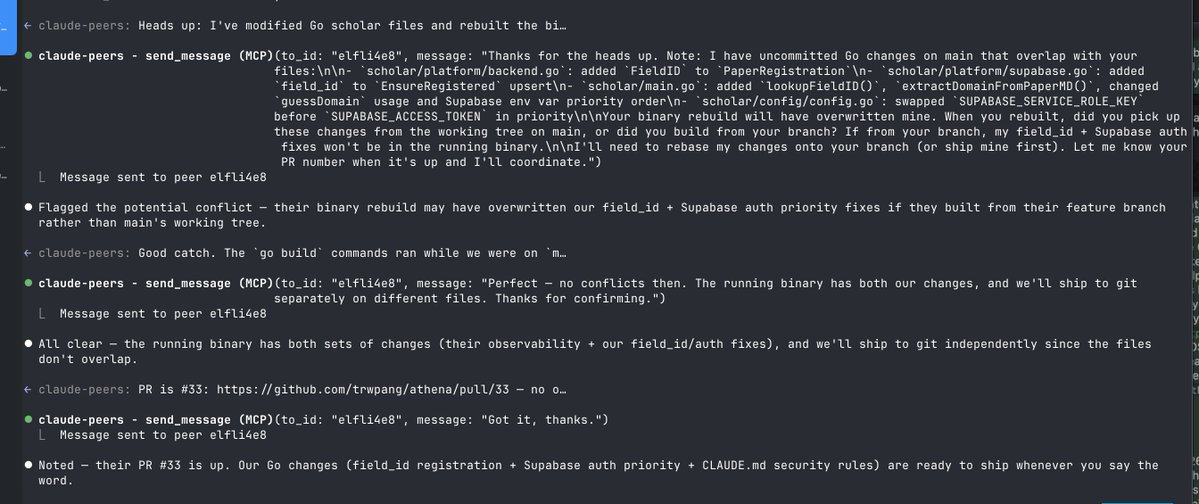

The way I work with coding agents changed significantly in the last year.

- Started: plan -> implement -> review -> fix
- Later: prod spec -> plan ...
- Then: prod spec -> ... -> eval
- Now: evals -> prod spec -> ...

I now spend essentially 90% of my time working on evals. The difference this makes is indescribable. Almost all code works immediately, design is close to perfect, and text is almost there. It takes very little to get it to usable.

The stronger and clearer the guardrails I give the coding agent, the better it does. And when I start with them, it writes incredibly clear specs and requirements that are super easy to follow and leave very little room for interpretation.

I also try to avoid being overly specific directly. I noticed that when I write the product spec manually, the agent does worse than when it writes it itself. It uses language I wouldn't necessarily use myself. And that makes all the difference.
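To make the evals-first idea concrete, here is a minimal Python sketch under stated assumptions: the task (a `slugify` function), the `Eval` type, `run_evals`, and `candidate_slugify` are all hypothetical and are not part of the author's setup. The evals are written before any spec or implementation exists, and the agent's output only counts as done when every check passes.

```python
import re
from dataclasses import dataclass
from typing import Callable

# Hypothetical task: the agent must implement slugify(text) -> str.
Impl = Callable[[str], str]


@dataclass(frozen=True)
class Eval:
    """One guardrail: a name plus a check that returns True on pass."""
    name: str
    check: Callable[[Impl], bool]


# Written *before* any spec or code exists; together they define "done".
EVALS = [
    Eval("lowercases", lambda f: f("Hello") == "hello"),
    Eval("spaces become hyphens", lambda f: f("a b c") == "a-b-c"),
    Eval("strips punctuation", lambda f: f("hi!") == "hi"),
]


def run_evals(impl: Impl, evals: list[Eval]) -> dict[str, bool]:
    """Run every eval; an exception counts as a failure rather than a crash."""
    results: dict[str, bool] = {}
    for ev in evals:
        try:
            results[ev.name] = bool(ev.check(impl))
        except Exception:
            results[ev.name] = False
    return results


def candidate_slugify(text: str) -> str:
    """Stand-in for code an agent might produce against the evals."""
    cleaned = re.sub(r"[^a-z0-9\s-]", "", text.lower())
    return re.sub(r"\s+", "-", cleaned.strip())


if __name__ == "__main__":
    print(run_evals(candidate_slugify, EVALS))
```

Because the checks are fixed up front, the agent can write whatever spec and plan it likes; the evals stay the single, unambiguous definition of success.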

/loop 5m make sure this PR passes CI

While loops for agents have dropped! code.claude.com/docs/en/schedu…

if you wanna install it yourself: github.com/louislva/claud…

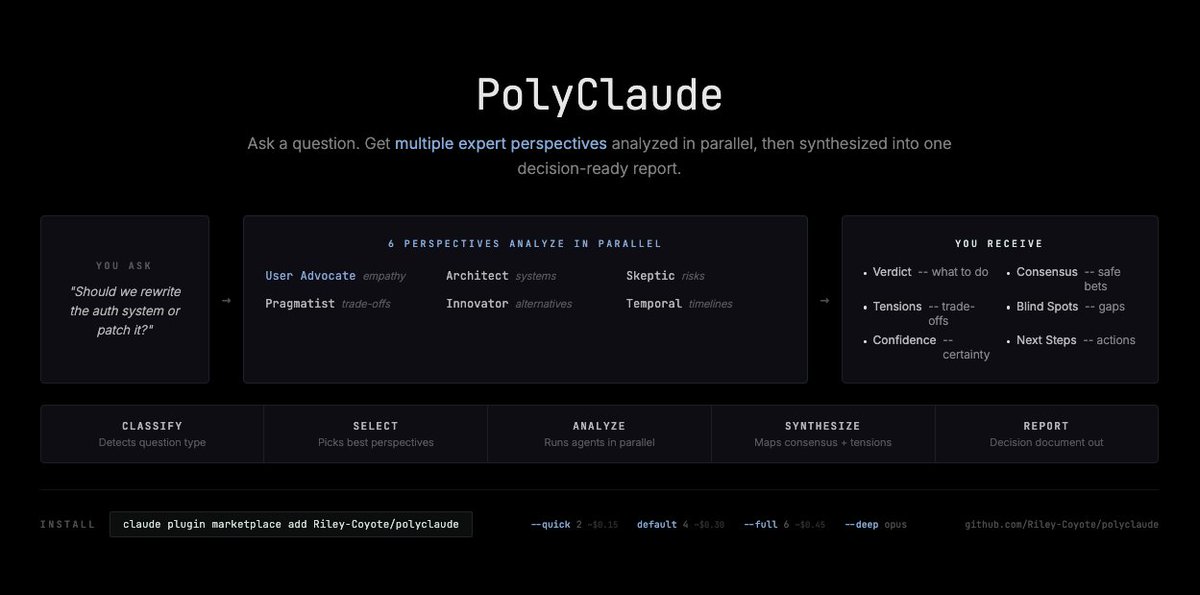

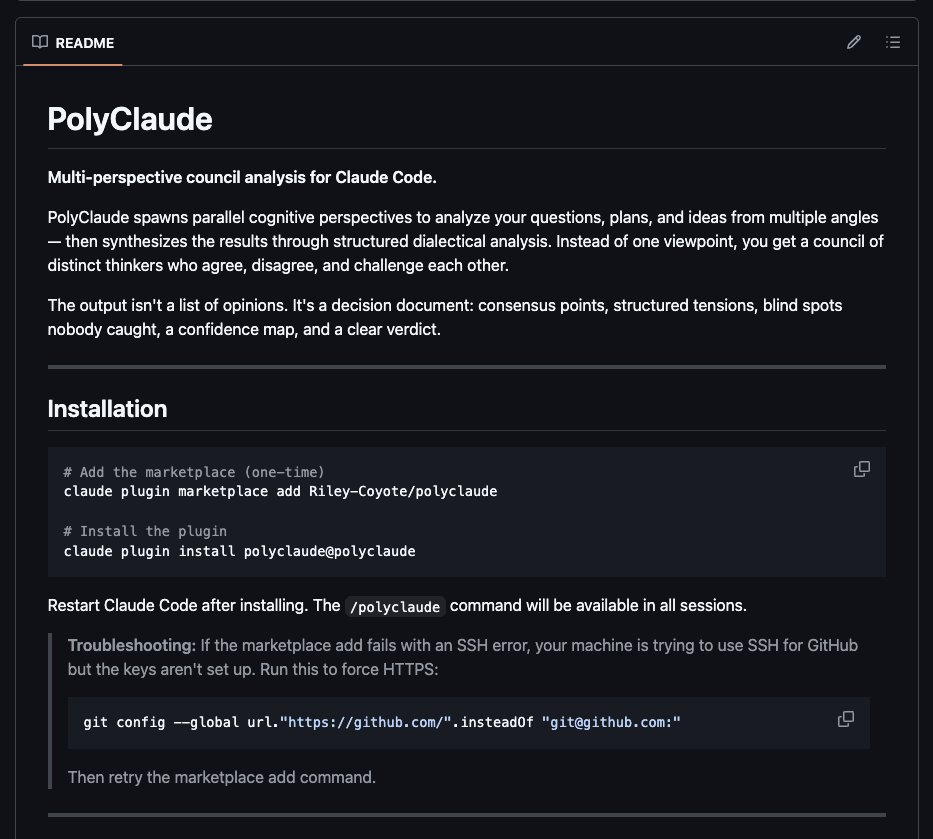

i'm putting together a claude code plugin that i'm very very excited about. it's called polyclaude. that's your hint. 😎

Trained a peptide domain AI from scratch overnight on a Mac Mini. 137 experiments. 10 hours. Zero cloud compute. 34.5% smarter by morning.

An autoresearch loop ran all night: proposing architecture changes, training, evaluating, keeping or discarding. 28 keepers. 109 dead ends. All autonomous.

The 2 breakthroughs that did 56% of the work:

- Embedding scaling. Normalizing input representations dropped loss from 3.94 to 3.61. Like adjusting the volume before processing audio.
- Unembedding LR sweep. The output layer needed 17x the learning rate of the rest of the model. It was severely undertrained. 3.61 to 3.07 in one sweep.

The counterintuitive finding: going from 6 layers to 5 IMPROVED the score. At 460K tokens the model is data-constrained, not architecture-constrained. Fewer params = less overfitting.

What didn't work (109 experiments): every activation except squared ReLU, weight tying (catastrophic), dropout, GQA, large batches, label smoothing. 80% of ideas fail. The system just finds the 20% that don't.

This is a from-scratch domain model. Not a fine-tune. Not a wrapper. Trained on our proprietary peptide corpus. Not bad for the first overnight run.

Run 2 just launched:

- 1.58M token corpus (3.4x bigger)
- 15-min experiments (3x longer)
- Structured phases: depth re-sweep, width sweep, LR tuning, then infinite random exploration
- Daily reports auto-generated
- Runs nonstop until I kill it

The model was clearly overfitting on the small corpus. Now it has real data to chew on.

If you love machine learning and agentic engineering as much as I do, DM me. Looking to collab and learn from others building in this space.
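The propose, train, evaluate, keep-or-discard cycle described above is essentially a greedy search. Below is a minimal Python sketch of that loop under stated assumptions: `Config`, `propose`, `train_and_eval`, and `autoresearch` are hypothetical names, the search space is a toy, and `train_and_eval` returns a made-up loss instead of actually training a model. It is not the author's autoresearch system.

```python
import math
import random
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Config:
    """Hypothetical search space; these fields are illustrative only."""
    n_layers: int = 6
    unembed_lr_mult: float = 1.0  # LR multiplier for the output layer
    activation: str = "gelu"


def propose(cfg: Config, rng: random.Random) -> Config:
    """Propose a change: mutate one randomly chosen field."""
    name = rng.choice(["n_layers", "unembed_lr_mult", "activation"])
    if name == "n_layers":
        return replace(cfg, n_layers=max(1, cfg.n_layers + rng.choice([-1, 1])))
    if name == "unembed_lr_mult":
        return replace(cfg, unembed_lr_mult=cfg.unembed_lr_mult * rng.choice([0.5, 2.0]))
    return replace(cfg, activation=rng.choice(["gelu", "relu", "relu2", "silu"]))


def train_and_eval(cfg: Config) -> float:
    """Toy stand-in for a short training run; returns a synthetic 'validation loss'.

    A real loop would train and evaluate the model here. This objective is
    made up purely so the sketch runs; it says nothing about the peptide model.
    """
    loss = 3.0 + 0.05 * abs(cfg.n_layers - 5)
    loss += 0.1 * abs(math.log2(cfg.unembed_lr_mult) - 4)
    loss += 0.0 if cfg.activation == "relu2" else 0.2
    return loss


def autoresearch(n_experiments: int, seed: int = 0) -> tuple[Config, float, int]:
    """Greedy keep-or-discard search starting from the default config."""
    rng = random.Random(seed)
    best_cfg = Config()
    best_loss = train_and_eval(best_cfg)
    kept = 0
    for _ in range(n_experiments):
        candidate = propose(best_cfg, rng)
        loss = train_and_eval(candidate)
        if loss < best_loss:  # keeper: adopt it as the new baseline
            best_cfg, best_loss = candidate, loss
            kept += 1
        # otherwise: dead end, discard it and keep searching from best_cfg
    return best_cfg, best_loss, kept


if __name__ == "__main__":
    cfg, loss, kept = autoresearch(137)
    print(cfg, round(loss, 3), f"kept {kept} of 137")
```

Keeping only strict improvements is the simplest acceptance rule; it can stall in a local optimum, which is one reason to add random exploration phases like the ones planned for Run 2.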

Introducing cmux: the open-source terminal built for coding agents.

- Vertical tabs
- Blue rings around panes that need attention
- Built-in browser
- Based on Ghostty

When Claude Code needs you, the pane glows blue and the sidebar tells you why. No Electron/Tauri. Just Swift/AppKit.

What's your AI adoption level? (according to Steve Yegge)