mrs.oliver 🤍 to you

83K posts

@tsymonevisuals

✨ 29 | art + design + books + certified yapper RG: 10/85📚✨

Orlando, FL · Joined March 2013

3K Following · 1.2K Followers

mrs.oliver 🤍 to you retweeted

hearing that “it’s Leo” turn me up fr

Lex P🪬 @LexP__

Yall need to be careful when you ask Mona Leo for a feature. She be tearin yall up omg

mrs.oliver 🤍 to you retweeted

She be losing her top like sista this is not your song😂🔥!!

Lex P🪬 @LexP__

Yall need to be careful when you ask Mona Leo for a feature. She be tearin yall up omg

mrs.oliver 🤍 to you retweeted

think about the world dialectically and you won’t be wrong about anything either 🤓

#DesignPolitix

mrs.oliver 🤍 to you retweeted

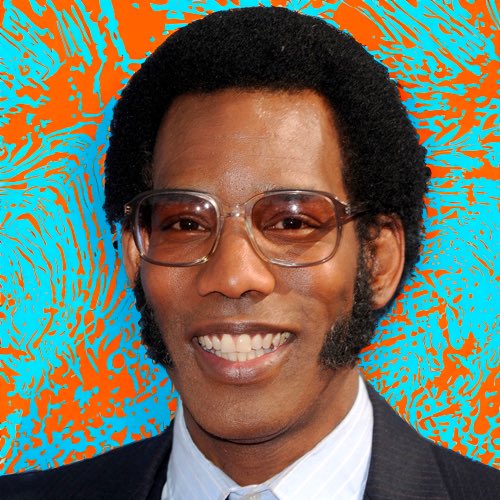

We used to take 3D glasses from the movie theater, pop the lens out and wear the glasses to school the next day

AuxGod @AuxGod_

Fake glasses era had NBA players in a headlock

mrs.oliver 🤍 to you retweeted

I’d rather have daycare, Medicaid, and Medicare than fighting wars

unusual_whales @unusual_whales

Trump: We can't take care of daycare. We're a big country. We're fighting wars. It's not possible for us to take care of daycare, Medicaid, Medicare, all these things.
