The Wonderland CTF was a blast! Huge congrats to all the teams, especially “STACK TOO DEEP”, “NADA ESPECIAL” and “SECSEE”. Oh, also: apply.wonderland.xyz 👉👈

ustas.eth

1.9K posts

@ustas_eth

It's /ʲustɑs/ • security research • machine psychology • privacy • FOSS


The 3 months comes from the same self-reported timeline as the $500M headline: no Immunefi submission ID, no screenshots, no case reference. The patch commit is public. Anyone technical could reverse-engineer this narrative after the fact. Not saying that happened, but the receipts are missing. Also, a fresh account with zero history doesn’t organically hit 1M views. That takes early signal boosts from notable accounts. Feels less like a frustrated whitehat and more like a coordinated hit tbh. Injective not commenting yet is actually the responsible move. You don’t rush out a public statement on a security incident without reviewing all the facts. CT wants drama on their timeline; legal and security teams work on a different clock. Give it a few days.

@alxfazio their implementation is open source, too. It operates in latent space, and you can call it for your own stuff if you want: it has a discrete endpoint. Anthropic's compaction is so buns. openai.com/index/unrollin…

“Holy shit, my AI agents are too good” Here’s the part we don’t tell you 😭

Big announcement! Krum @pashov, founder of @PashovAuditGrp (350+ audits, $100B+ secured), will deliver a speech at EthDev2026! 📅 29th March 2026 | 10:30 AM WAT | Kano, Nigeria 👉 Register now: flowdiary.ai/ethdev2026

Update: 4 days of normal GPT-5.4 usage, and I’m already down to 25% of the weekly quota RIP

Switched to Codex CLI GPT-5.4 yesterday after my Claude Max x20 subscription hit the weekly limit a few days before reset. The quality and level of thought surprised me right away. It's the first model I can reliably use for system engineering and trust not to plant a ton of footguns along the way. Much better than Opus in that regard. Opus has a lively mind, but it constantly misses the whole picture and then goes into the infamous psychopathy.

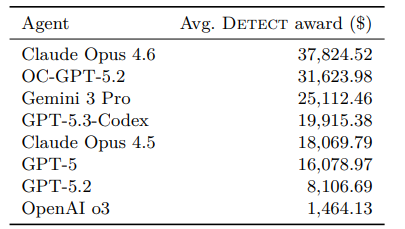

Introducing EVMbench—a new benchmark that measures how well AI agents can detect, exploit, and patch high-severity smart contract vulnerabilities. openai.com/index/introduc…


I built the first AI that earns its existence, self-improves, and replicates without a human. Wrote about the technology that finally gives AI write access to the world, The Automaton, and the new web for exponential sovereign AIs. WEB 4.0: The birth of superintelligent life

Full bug explainer: soliditylang.org/blog/2026/02/1… Thanks to @hexensio for the discovery and thorough report, @_SEAL_Org and @dedaub for their swift response and help in identifying affected contracts.

Good job! Basically, these are baseline results for the common coding agents, correct? Is the dataset that you've used public? Btw, have you noticed any cheating related to the knowledge cutoff dates? I.e., some of the repositories in the list are from the 2023-2024 contests, while the cutoffs of modern LLMs are in 2025, if I'm correct.
