Victor Lecomte

244 posts

@vclecomte

CS PhD student at Stanford / Researcher at the Alignment Research Center

Joined March 2017

196 Following, 654 Followers

My best blog post is going viral on Hacker News, so maybe now is a good time to re-post it here.

Dominik Peters (@DominikPeters)

@ericneyman fyi

@ChanaMessinger i have fought a long and harrowing battle trying to convince myself that my commute is "about 15 minutes" and not "10 to 15 minutes"

A cute question about inner product sketching came up in our research; any leads would be appreciated! 🙂 cstheory.stackexchange.com/questions/5539…

Victor Lecomte retweeted

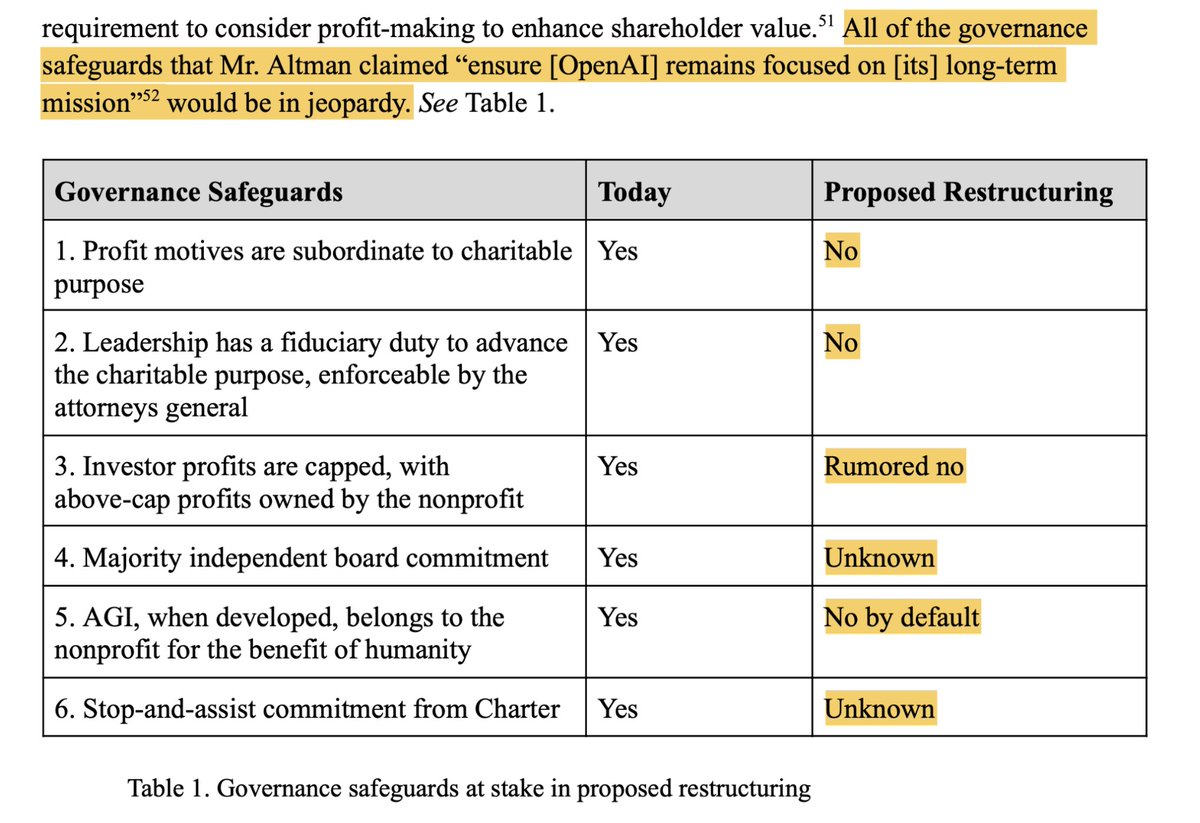

A new legal letter aimed at OpenAI lays out in stark terms the money and power grab OpenAI is trying to trick its board members into accepting — what one analyst calls "the theft of the millennium."

The simple facts of the case are both devastating and darkly hilarious.

I'll explain for your amusement.

The letter 'Not For Private Gain' is written for the relevant Attorneys General and is signed by 3 Nobel Prize winners among dozens of top ML researchers, legal experts, economists, ex-OpenAI staff and civil society groups. (I'll link below.)

It says that OpenAI's attempt to restructure as a for-profit is simply illegal, as you might naively expect.

It then asks the Attorneys General (AGs) to take some extreme measures I've never seen discussed before. Here's how they build up to their radical demands.

For 9 years OpenAI and its founders went on ad nauseam about how non-profit control was essential to:

1. Prevent a few people concentrating immense power

2. Ensure the benefits of artificial general intelligence (AGI) were shared with all humanity

3. Avoid the incentive to risk other people's lives to get even richer

They told us these commitments were legally binding and inescapable. They weren't in it for the money or the power. We could trust them.

"The goal isn't to build AGI, it's to make sure AGI benefits humanity" said OpenAI President Greg Brockman.

And indeed, OpenAI’s charitable purpose, which its board is legally obligated to pursue, is to “ensure that artificial general intelligence benefits all of humanity” rather than advancing “the private gain of any person.”

Hundreds of top researchers chose to work for OpenAI at below-market salaries, in part motivated by this idealism. It was core to OpenAI's recruitment and PR strategy.

Now along comes 2024. That idealism has paid off. OpenAI is one of the world's hottest companies. The money is rolling in.

But now suddenly we're told the setup under which they became one of the fastest-growing startups in history, the setup that was supposedly totally essential and distinguished them from their rivals, and the protections that made it possible for us to trust them, ALL HAVE TO GO ASAP:

1. The non-profit's (and therefore humanity at large’s) right to super-profits, should they make tens of trillions? Gone. (Guess where that money will go now!)

2. The non-profit’s ownership of AGI, and ability to influence how it’s actually used once it’s built? Gone.

3. The non-profit's ability (and legal duty) to object if OpenAI is doing outrageous things that harm humanity? Gone.

4. A commitment to assist another AGI project if necessary to avoid a harmful arms race, or if joining forces would help the US beat China? Gone.

5. Majority board control by people who don't have a huge personal financial stake in OpenAI? Gone.

6. The ability of the courts or Attorneys General to object if they betray their stated charitable purpose of benefitting humanity? Gone, gone, gone!

Screenshotting from the letter:

(I'll do a new tweet after each image so they appear right.) 1/

@AlgoSvensson Fun puzzle! :) I found the English translation misleading: it makes it sound like you need to use exactly half of the red lines and exactly half of the blue lines, which is impossible since there is an odd number of red lines.

It failed to solve this puzzle that I encountered today. I tried several times.

(It took me an embarrassingly long time ;))

Noam Brown (@polynoamial)

We did not “solve math”. For example, our models are still not great at writing proofs. o3 and o4-mini are nowhere close to getting International Mathematical Olympiad gold medals.

@knewknowl (and you can also use \newcommand inline for defining macros that apply to only one file)

@knewknowl yes, at least you can in obsidian: github.com/wei2912/obsidi…

Victor Lecomte retweeted

New Redwood Research (@redwood_ai) paper in collaboration with @AnthropicAI: We demonstrate cases where Claude fakes alignment when it strongly dislikes what it is being trained to do. (Thread)

Anthropic (@AnthropicAI)

New Anthropic research: Alignment faking in large language models. In a series of experiments with Redwood Research, we found that Claude often pretends to have different views during training, while actually maintaining its original preferences.

Victor Lecomte retweeted

@DavidSKrueger It feels icky to some people to be very particular about contributions. Negotiating author order is a skill some people are bad at, and contribution statements are 10x more headache. The phrasing can be biased or disadvantage authors whose contributions are less easy to describe.

@ericneyman Sadly, the name for the Songhai Empire in Mandarin doesn't use the character for the Song dynasty, but it still sounds pretty epic: 桑海帝国, the Mulberry Sea Empire.

Victor Lecomte retweeted

@nathanbenaich Also it feels like the “acquires or builds an AI chip company” should resolve to at best a “~” based on your justification? Though I don’t know enough about the subject matter to be confident here.

@nathanbenaich Do you have a source for “Heart on My Sleeve” breaking into the Billboard 100 Top 10 or the Spotify Top Hits (I guess 2023 in this case)? If not, seems like this prediction should resolve to a clear “NO”.

Over in the world of biotech, we’re seeing one of the first landmark AI deals.

@RecursionPharma, which is industrializing discovery in biology using high-throughput AI-first experimentation, is acquiring AI-first precision medicine company @exscientiaAI.

Get hyped for a full-stack discovery and design company with the largest GPU cluster in biopharma as seen on stateof.ai/compute

Victor Lecomte retweeted

@beenwrekt (And that even a very rich track record outside of elections gives basically no indication that the same forecaster would likely predict elections well?)

@beenwrekt I just read these (sorry for the delay). In the case of elections forecasts, is your main gripe that elections are so rare that track records (e.g. calibration) can't be assessed to any level of confidence?

I almost never disagree with Jordan, but I do here. These models strongly disagree, providing further evidence that those who make money forecasting elections are fraudulent hucksters.

Jordan Ellenberg (@JSEllenberg)

No! They are not! They are in complete agreement that the race is very close and even small changes could affect the outcome!

2/6 We partnered with @DeanBall_, a @mercatus research fellow skeptical of AI regulation, to draft opposition language and used LLMs to write a neutral description of the bill that AIPI and Dean agreed upon.

(In fact, I set up my custom domain in October 2021, less than one month before GitHub Pages added the ability to verify custom domains: web.archive.org/web/2021111500…)

Service update: Looks like my custom domain name is currently serving a scam site, despite everything looking fine on Hover. I'll try to fix this as soon as possible, but in the meantime, if you want to access any of my notes, please use vlecomte.github.io. Sorry!