VCoolish

134 posts

@cyberdrk @WalletConnect Nice, wish you best of luck on what's coming next!

English

PSA:

after 4 years at @WalletConnect, I'm passing the baton and am excited to glance at an empty calendar.

It's been an awesome ride building WalletConnect into this core pillar of the ecosystem. I certainly have some learnings and scar tissue when it comes to building SDKs now... it also required a non-traditional CTO - at peak I'd have 20 meetings with partners in a single day.

I'm bullish on WalletConnect's future, especially WalletConnect Pay - cards are a crutch and WalletConnect Pay is the solution. Stoked to cheer from the sidelines going forward.

Huge gratitude for my all-star engineering and product team: @nachorivera7, @lukaisailovic, @Huxwell_, @jakubuid, @nuldiego, @RocchiTomas, Mario, @0xmagkha_, Mirna, @nachosantise, @0xGancho, @saitamoe, @enesozturkdev, @svennxyz, Ivan, @mechris13524, Felipe, @rplusq, @geek_maks, Alex, Cheun, @skibitsky, @CryptoProdMan, Nodar. And to the WalletConnect Council, the rest of the team across design, QA, ops, BD, and marketing. And to Rachel, @Cgmirror, and @Dolcemaschio for being awesome exec counterparts. And to @Houlgrave for building this together the past few years. And of course last but not least to @pedrouid for bringing me onboard and trusting me with this responsibility.

Riaz is stepping in to lead the team and I couldn't be more pumped. He's a seasoned payments tech exec and I'm glad we were able to convince him to join us.

I feel very lucky to have built and been part of such an awesome team, which makes saying goodbye so damn hard. Thank you all!

English

@andrebutenko Looks nice! How would you handle emotions on this mascot?

English

Boring year tbh, X might be a perfect crypto gateway, just don't bring prediction markets with it😀

Nikita Bier@nikitabier

Crypto has had a rough year. Maybe we should launch something to fix it.

English

@MindGoogle why are your Pro models so slow? I am truly amazed by the Flash models' speed and quality on simple tasks, but for anything more sophisticated, thinking takes around 10 minutes to answer a simple question in Gemini CLI with the given context. It also seems like you are loading too much context every time, so it simply can’t operate effectively in folders with more files.

Hope you are close to finishing the model refactoring and reaching the same kind of breakthrough we saw after Bard.

English

VCoolish retweeted

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest:

I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images to local so that my LLM can easily reference them.
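A minimal sketch of how the "incremental" part of that compile step could be tracked, assuming a JSON manifest of content hashes kept outside raw/. The manifest file and the function names `pending_sources` and `mark_compiled` are hypothetical, not something described in the post; the LLM call that actually writes the wiki articles is left out.

```python
import hashlib
import json
from pathlib import Path


def file_digest(path: Path) -> str:
    """Return the SHA-256 hex digest of a file's bytes."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def load_manifest(manifest_path: Path) -> dict[str, str]:
    """Load the hypothetical manifest (relative path -> digest), or start empty."""
    if manifest_path.exists():
        return json.loads(manifest_path.read_text(encoding="utf-8"))
    return {}


def pending_sources(raw_dir: Path, manifest_path: Path) -> list[str]:
    """List files in raw_dir that are new or changed since the last compile."""
    seen = load_manifest(manifest_path)
    pending = []
    for path in sorted(p for p in raw_dir.rglob("*") if p.is_file()):
        key = path.relative_to(raw_dir).as_posix()
        if seen.get(key) != file_digest(path):
            pending.append(key)
    return pending


def mark_compiled(raw_dir: Path, manifest_path: Path, keys: list[str]) -> None:
    """Record the current digest of each source once it has been compiled."""
    seen = load_manifest(manifest_path)
    for key in keys:
        seen[key] = file_digest(raw_dir / key)
    manifest_path.write_text(
        json.dumps(seen, indent=2, sort_keys=True), encoding="utf-8"
    )
```

Only the paths returned by `pending_sources` would need to be handed to the LLM on each run; everything else in raw/ is already reflected in the wiki.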

IDE:

I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki, I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A:

Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.

Output:

Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting:

I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.
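Some integrity checks do not need an LLM at all. As a rough sketch of a deterministic pre-check that could run before the LLM pass, the snippet below reports links that point at notes that do not exist. It assumes the wiki uses Obsidian-style `[[Note]]` links and that link targets are bare note names; both the assumption and the function name `find_dangling_links` are illustrative, not taken from the post.

```python
import re
from pathlib import Path

# Captures the target of [[Target]], [[Target|alias]] and [[Target#heading]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def find_dangling_links(wiki_dir: Path) -> dict[str, list[str]]:
    """Map each note to the link targets that have no matching .md file."""
    # Assumption: a link target is the note's file name without ".md".
    notes = {p.stem for p in wiki_dir.rglob("*.md")}
    dangling = {}
    for path in sorted(wiki_dir.rglob("*.md")):
        text = path.read_text(encoding="utf-8", errors="ignore")
        targets = {match.strip() for match in WIKILINK.findall(text)}
        missing = sorted(t for t in targets if t not in notes)
        if missing:
            dangling[path.relative_to(wiki_dir).as_posix()] = missing
    return dangling
```

The resulting report could be passed to the LLM as a to-do list, since a dangling link is often a candidate for a new article.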

Extra tools:

I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web ui), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.
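For illustration only, a small and naive search over a directory of .md files might look like the sketch below. It ranks files by how often the query terms occur in them; the scoring rule and the names `build_index` and `search` are assumptions, not the tool described in the post.

```python
import re
from collections import Counter
from pathlib import Path


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(wiki_dir: Path) -> dict[str, Counter]:
    """Map each .md file (path relative to wiki_dir) to its token counts."""
    index = {}
    for path in sorted(wiki_dir.rglob("*.md")):
        text = path.read_text(encoding="utf-8", errors="ignore")
        index[path.relative_to(wiki_dir).as_posix()] = Counter(tokenize(text))
    return index


def search(index: dict[str, Counter], query: str, limit: int = 5) -> list[tuple[str, int]]:
    """Rank files by summed query-term counts; files with no hits are dropped."""
    terms = tokenize(query)
    scored = []
    for name, counts in index.items():
        score = sum(counts[term] for term in terms)
        if score > 0:
            scored.append((name, score))
    # Highest score first; ties are broken alphabetically by file name.
    scored.sort(key=lambda item: (-item[1], item[0]))
    return scored[:limit]
```

Wrapping `search` in a small command-line entry point would be one way to expose it to an LLM agent as a tool, while the same function could back a web UI.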

Further explorations:

As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.

English

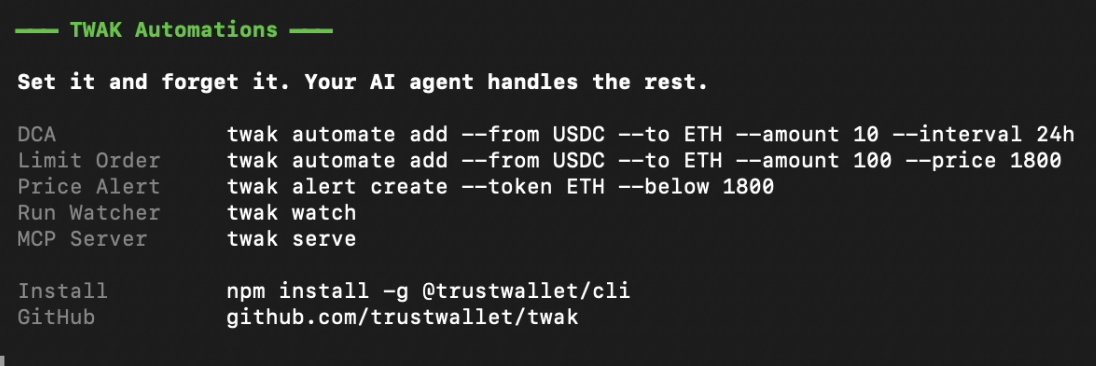

At Trust Wallet we are close to launching an internal AI orchestration tool that is gonna solve SDLC. We all know this is the way; the only difference is whether you accept it or stay behind.

Linear@linear

Issue tracking is dead. We are building what comes next. linear.app/next

English

VCoolish retweeted

Last week, AI got eyes on crypto.

Today, it gets hands 🤖👐

Trust Wallet Agent Kit is live ↓🧵

portal.trustwallet.com

English

VCoolish retweeted

Your AI agent can now read crypto.

Introducing: Trust Wallet Developer Portal. AI agents now have read-only access to crypto data across 100+ chains.

🔎 Search assets

📈 Get real-time prices

☑️ Scan trending tokens

⚠️ Check token risk

Start building → portal.trustwallet.com

English

>We’re early in AI with custody + execution rights… next wave is not "ask AI" - it’s "let AI do it".

The whole of 2025 was wasted on discussions. Now this is exactly what we’re building at Trust Wallet.

Shipping.

Not weekly.

Daily.

Small team, strong builders, high focus.

VCoolish@vcoolish1

English