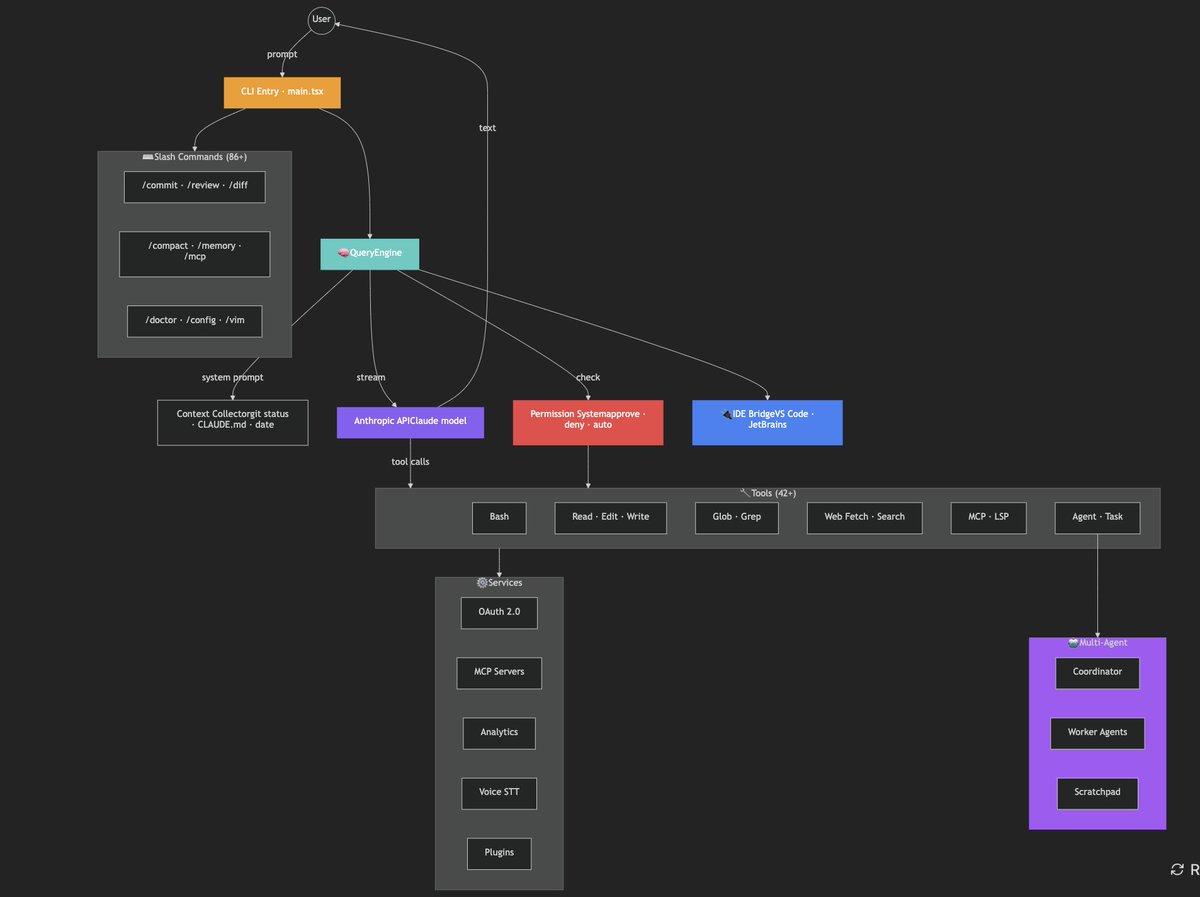

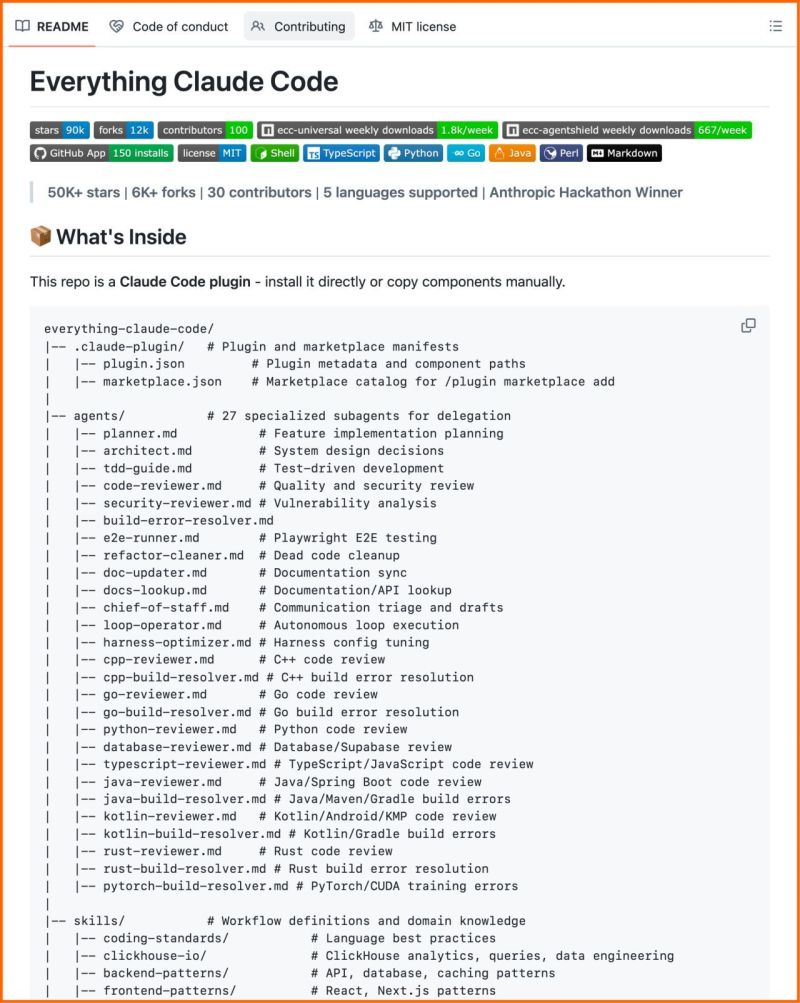

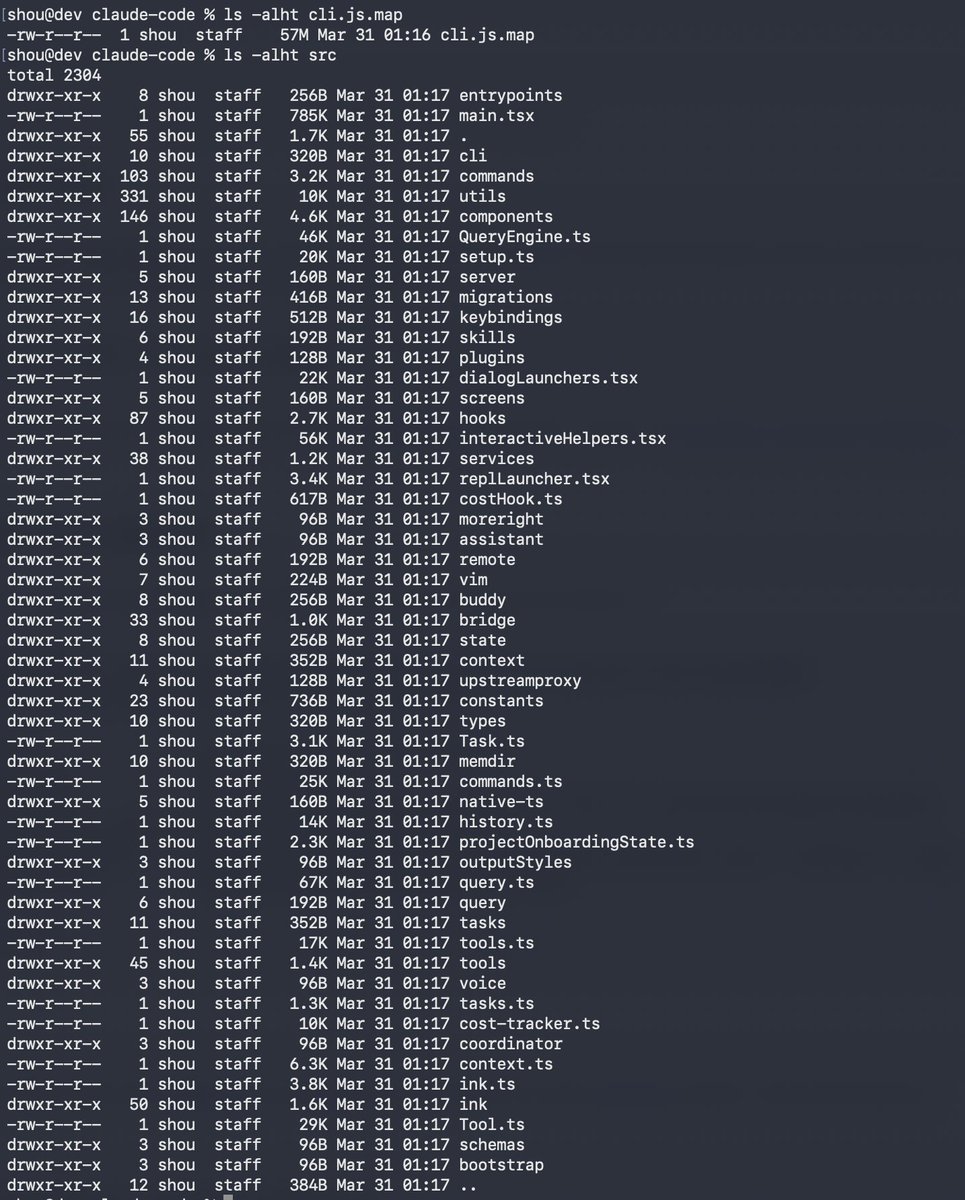

🚨 Claude Code source just leaked from an npm source map and @olliewd40 left this confession in production:

"The memoization here increases complexity by a lot, and im not sure it really improves performance"

Performance? Unsure 🤷

Shipped? Absolutely 🚀

Hotel? Trivago 🫡