velleit

Simple way to see this is wrong: If you view a system as having inputs (like hearing something) and outputs (like saying something), then you can divide system properties by whether or not they affect I/O.

Claude's weights somewhere storing "Paris is in France" affect I/O if you ask a question about Paris. The exact mass of the power supply to the GPU rack for that Claude instance doesn't affect I/O. That Claude instance being made out of silicon instead of carbon, or electricity in wires instead of water in pipes, doesn't affect I/O given a fixed algorithm above the wires or pipes.

Nothing Claude can internally do will make anything get damp inside, if it's running on electricity. Nothing about "electricity vs water" can affect Claude's output, for the same reason. It always answers the same way about France. Nothing Claude can internally compute will let it notice whether it's made of electricity or of water flowing through pipes.

When someone says "a simulated storm can't get anything wet", they are unwittingly pointing to the difference between the physical layer and the informational/functional layer. There are things the computer's physics affect without affecting the output, and things that affect the output without depending on the exact computer physics.

The material it's made of doesn't affect the output. The output can't see the material because no algorithm can be made to depend on the choice of material. You can always run the same algorithm on different material, so you can't make the algorithm depend on that, so the output can't depend on that.

By reflecting on your awareness of your own awareness, the fact of your own consciousness can make you say "I think therefore I am." One thing you do know about consciousness is that it is, among other things, the cause of you saying those words.

You saying those words can only depend on neurons firing or not firing, not on whether the same patterns of cause and effect were built on tiny trained squirrels running memos around your brain. You couldn't notice that part from inside. It would not affect your consciousness. That's why humans had to discover neurobiology with microscopes instead of introspection.

Consciousness is in the class of things that can affect your behavior and can't depend on underlying physics, not in the class of direct properties of underlying physics that can't affect your behavior.

A simulated rainstorm can't get anything wet. Running on electricity versus water can't change how you say "I think therefore I am." And that's it. QED.
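A toy sketch of the "same algorithm, different material" step, for anyone who wants it concrete. Everything here is made up for illustration: `Electric` and `Water` are hypothetical stand-ins for two substrates, and `add` is an ordinary ripple-carry adder that only ever touches the substrate through `encode`, `decode`, and `nand`. It only illustrates the I/O claim, under the assumption that both substrates implement NAND faithfully.

```python
class Electric:
    """Hypothetical substrate: a bit is a voltage on a wire."""

    def encode(self, bit):
        return 5.0 if bit else 0.0

    def decode(self, signal):
        return signal > 2.5

    def nand(self, a, b):
        return self.encode(not (self.decode(a) and self.decode(b)))


class Water:
    """Hypothetical substrate: a bit is whether a pipe is flowing."""

    def encode(self, bit):
        return "flowing" if bit else "dry"

    def decode(self, signal):
        return signal == "flowing"

    def nand(self, a, b):
        return self.encode(not (self.decode(a) and self.decode(b)))


# The "algorithm" layer: built only from the substrate's NAND gate.
def xor(s, a, b):
    n = s.nand(a, b)
    return s.nand(s.nand(a, n), s.nand(b, n))


def and_(s, a, b):
    n = s.nand(a, b)
    return s.nand(n, n)


def or_(s, a, b):
    return s.nand(s.nand(a, a), s.nand(b, b))


def add(s, x, y, width=8):
    """Ripple-carry addition of x and y (mod 2**width) on substrate s."""
    carry = s.encode(False)
    total = 0
    for i in range(width):
        a = s.encode(bool((x >> i) & 1))
        b = s.encode(bool((y >> i) & 1))
        partial = xor(s, a, b)
        sum_bit = xor(s, partial, carry)
        carry = or_(s, and_(s, a, b), and_(s, partial, carry))
        if s.decode(sum_bit):
            total |= 1 << i
    return total


print(add(Electric(), 3, 5), add(Water(), 3, 5))
```

The internal signals are completely different (floats in one case, strings in the other), and nothing in `add` can branch on which one it got without breaking the abstraction. The outputs match for every input pair.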

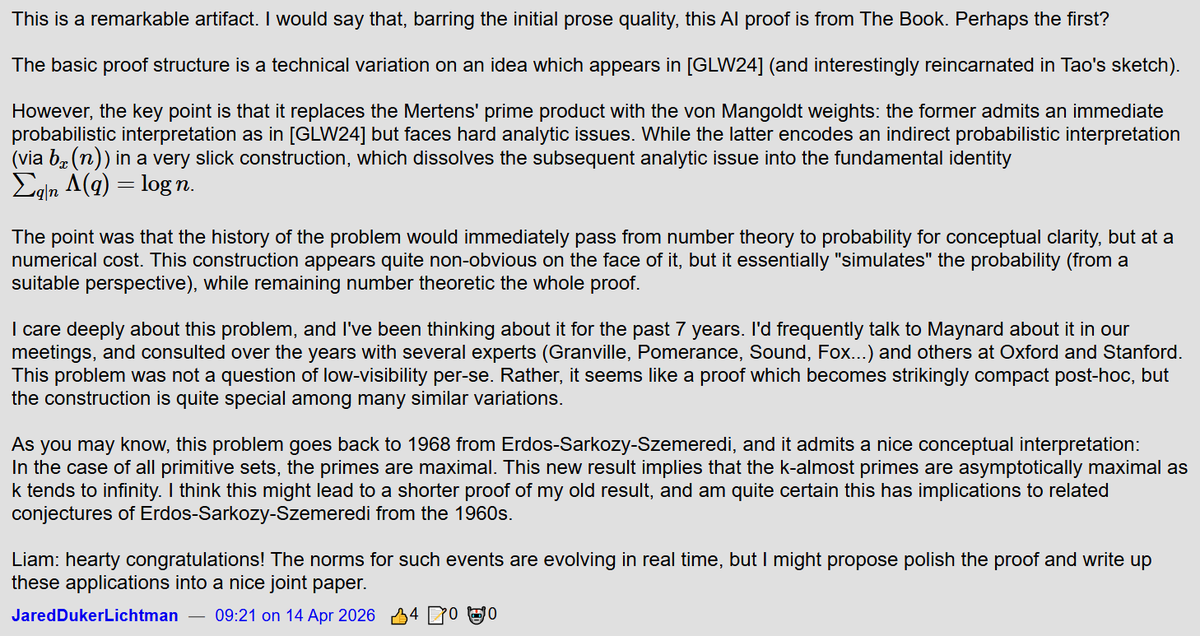

Google DeepMind researcher argues that LLMs can never be conscious, not in 10 years or 100 years. "Expecting an algorithmic description to instantiate the quality it maps is like expecting the mathematical formula of gravity to physically exert weight."

Universal HIGH INCOME via checks issued by the Federal government is the best way to deal with unemployment caused by AI. AI/robotics will produce goods & services far in excess of the increase in the money supply, so there will not be inflation.

Leonard Rome’s lab discovered an odd, abundant component of cells in the 1980s—and he’s still trying to figure out what it does. Learn more: scim.ag/4gOvrbG #ScienceMagArchives