Jianyang Gu

@vimar_gu

Postdoc @ the Ohio State University

Introducing @NeoCognition, the agent lab for specialized intelligence. Everyone needs experts, but human expertise does not scale. Backed by $40M seed funding, we build self-learning agents that specialize across domains to make expertise abundant.

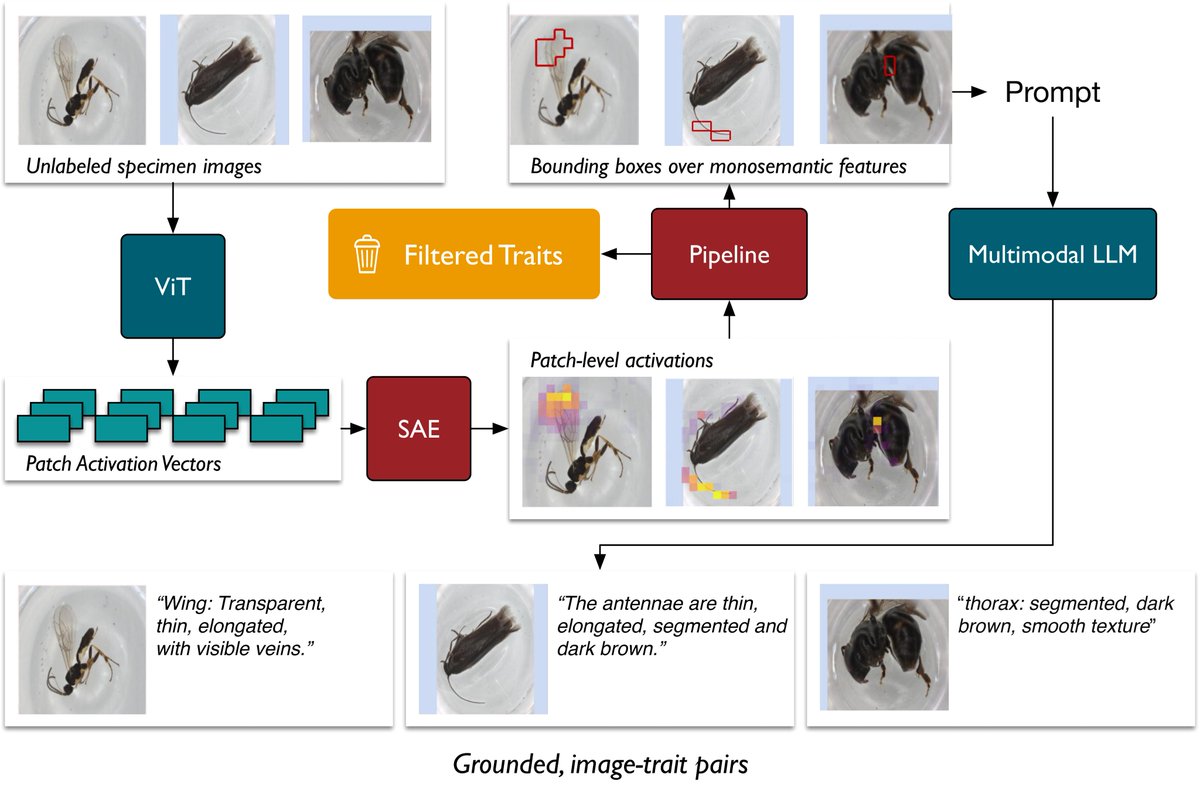

AI is helping scientists see nature in entirely new ways. 🔍 In collaboration with @OhioState, BioCLIP2 runs on NVIDIA accelerated computing to identify over a million species and reveal hidden patterns that support conservation and ecosystem health worldwide. 👉 nvda.ws/4v1RK5p

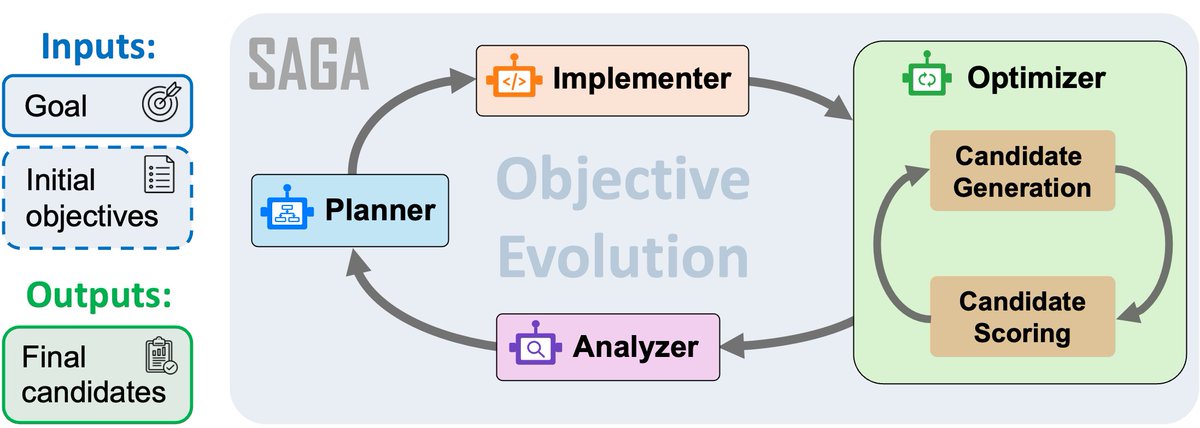

❓How can we build AI agents that do what scientists actually do? Is scientific discovery merely a search problem? 🚀 Meet SAGA: Scientific Autonomous Goal-evolving Agents. Five discovery tasks across chemistry, biology & materials science, with wet-lab validation.

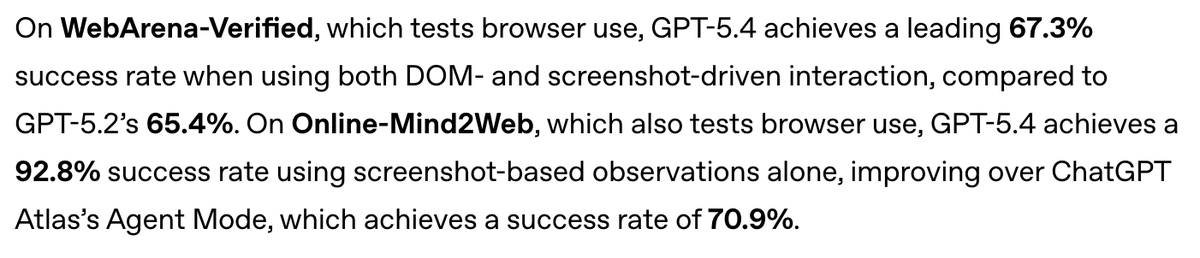

GPT-5.4 Thinking and GPT-5.4 Pro are rolling out now in ChatGPT. GPT-5.4 is also now available in the API and Codex. GPT-5.4 brings our advances in reasoning, coding, and agentic workflows into one frontier model.

How was the show Silicon Valley so ahead of its time?

As you write your #CVPR2026 rebuttal, please note the policies below. Good luck ✍️

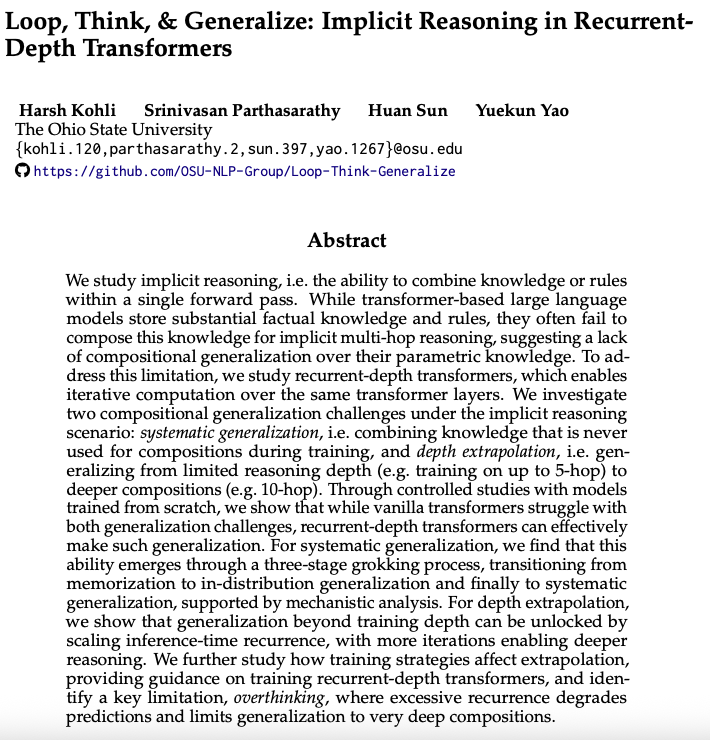

@ShumingHu @YouJiacheng fyi thanks for the original discussion arxiv.org/abs/2512.10794 TL;DR: my earlier take did not hold up, but the outcome led to a much deeper understanding; see the acknowledgments as well 🫡