Ellen Vitercik

19 posts

@vitercik

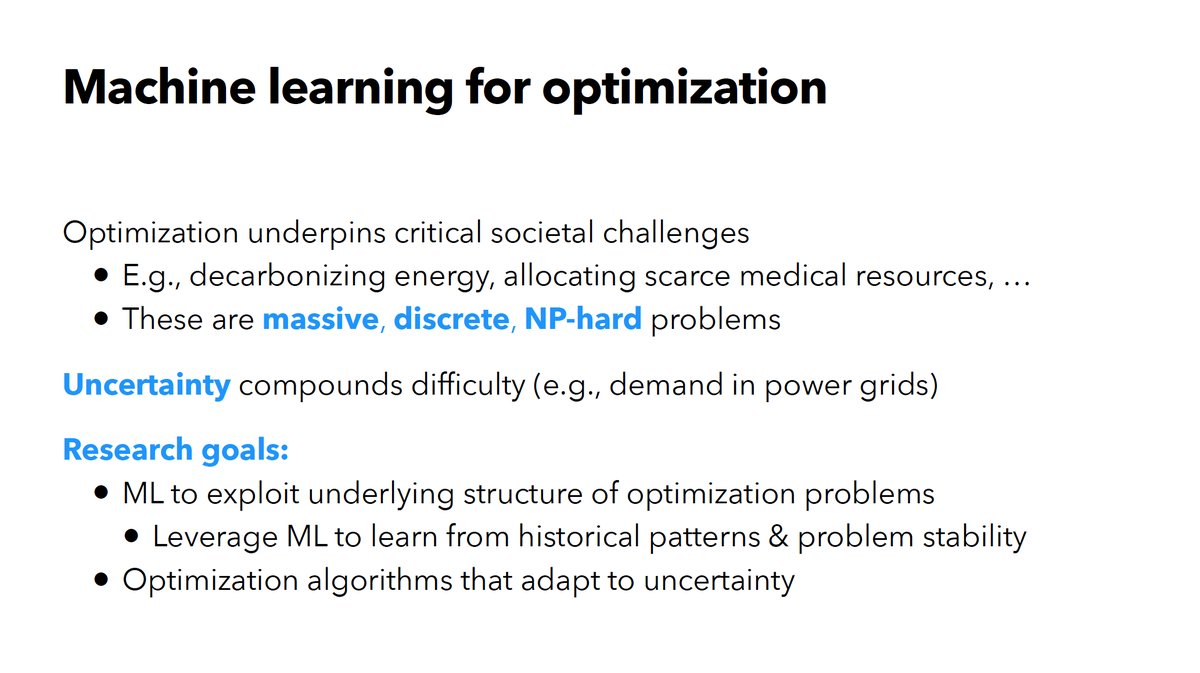

Assistant Professor @Stanford Management Science & Engineering and Computer Science | Machine Learning & Optimization

🏅 Runners-up:
- @xizhi_tan (Drexel), advised by Vasilis Gkatzelis, for: "Learning-augmented mechanism design"
- Yifan Wu (Northwestern), advised by @jasondhartline, for: "Trustworthy AI: Foundations from Proper Scoring Rules"

📢 New position paper: "Neural Algorithmic Reasoning Requires a Clear Scope, Theoretical Foundations, and Empirical Rigor"

When and where should we use Neural Algorithmic Reasoning (NAR)? NAR is an exciting emerging area at the intersection of classical algorithm design and neural computation, but its foundations are still underdeveloped. In this paper, we argue that the field needs:
- ✨ a sharper definition of what NAR is (and is not), including clearer distinctions from neighboring paradigms,
- 📐 stronger theory for expressivity and generalization,
- 🧪 more rigorous benchmarks and connection to high-impact applications.

[0/n] Can LLMs actually reason about structure: order, hierarchy, connectivity, and how parts fit together? 🧩 We introduce DSR-Bench, a data-structure benchmark designed to test this without tools. Even SOTA LLMs still struggle in the hardest settings.