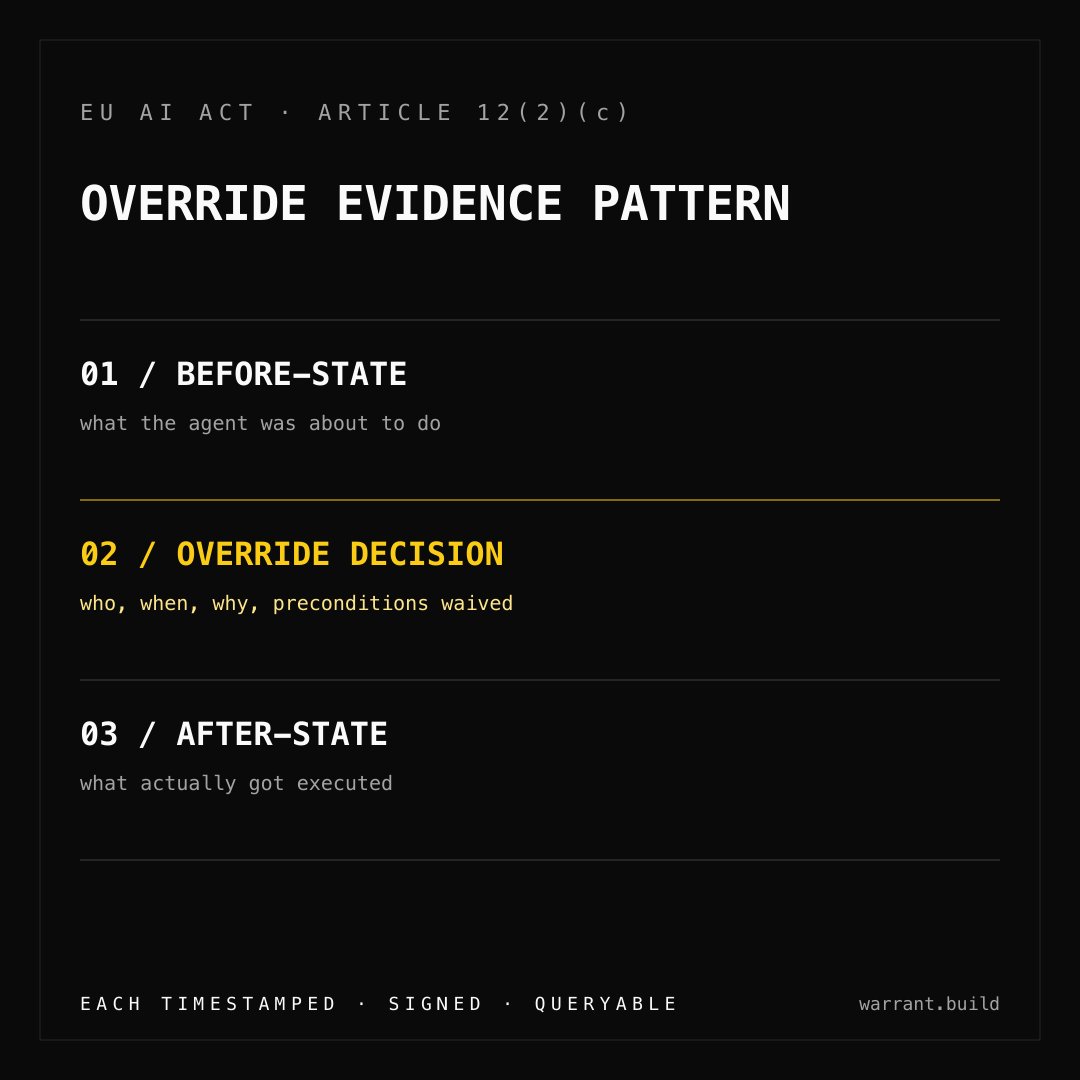

Reading the EU AI Act Article 12 spec end-to-end this week.

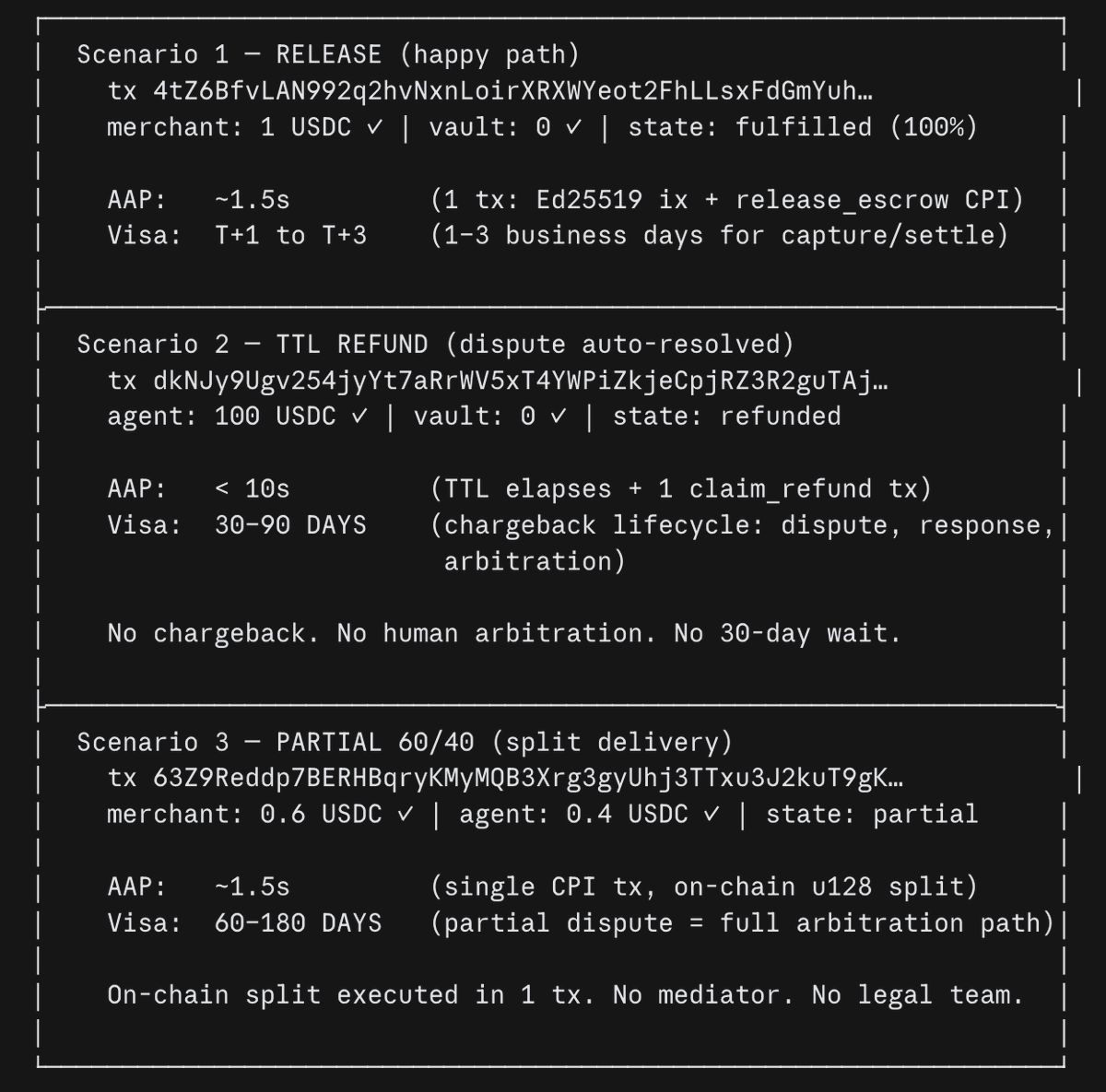

The gap between "logs" (what most teams output) and "evidence" (what notified bodies will demand) is wider than the industry realizes.

95 days until enforcement. Most fintechs aren't close.

#EUAIAct
