Vlaд Фaуsт

3.5K posts


@vladfaust

I may be following too many accounts

Joined May 2013

868 Following, 176 Followers

@yougotvansh @_royaltomar @dodopayments Just want to show folks the alternatives. @dodopayments is very nice. 🙂


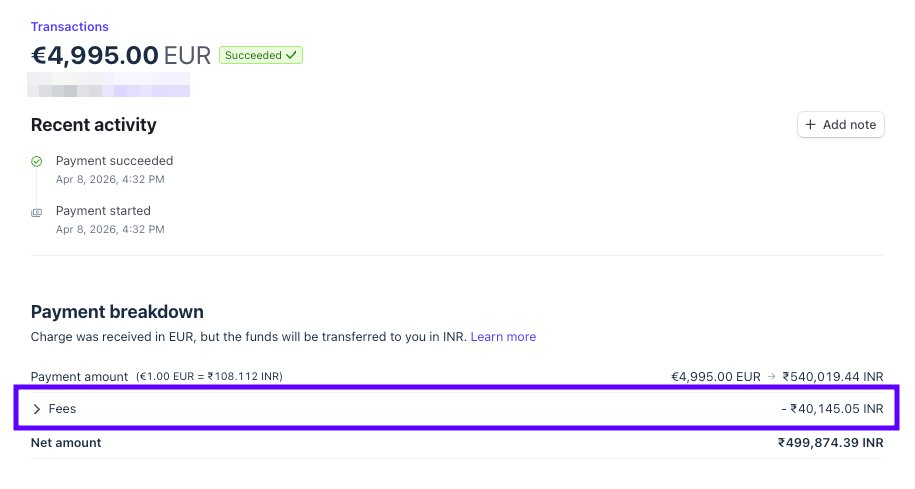

hey @stripe, your api just charged our european customer €4,995 instead of €60. painful bug.

we have the same product priced in inr, eur, usd, and gbp. on our checkout page we pass the inr price id and stripe correctly picks the right amount + currency based on the customer's region. ₹4,995 becomes €60 for an eu customer. works perfectly.

we recently shipped "pay with saved card" inside our app and used the same logic. passed the inr price id for a 4,995 inr charge, expecting stripe to convert it the same way.

instead, stripe swapped the currency to eur but kept the number. €4,995 hit the customer's card instead of €60.

now here's the kicker: if we refund it ourselves, we eat ~€370 in processing fees, fx, and taxes. that's 6x the actual order value of €60. we'd lose money fixing a bug we didn't cause.

can someone take a look and reverse this from your end? i have the charge id ready with me.
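A minimal sketch of the failure mode described in the post, using only the two amounts it mentions. The price table and function names are hypothetical illustrations and are not Stripe's API; the guard at the end is one possible defensive check, not a confirmed fix.

```python
from decimal import Decimal
from typing import Tuple

# Hypothetical per-currency price table for a single product.
# Only the INR and EUR amounts appear in the post.
PRICES = {
    "inr": Decimal("4995"),
    "eur": Decimal("60"),
}


def buggy_charge(base_currency: str, customer_currency: str) -> Tuple[Decimal, str]:
    """Reproduce the reported symptom: the currency is swapped, the number is kept."""
    return PRICES[base_currency], customer_currency


def expected_charge(customer_currency: str) -> Tuple[Decimal, str]:
    """Look up the amount that was actually priced for the customer's currency."""
    return PRICES[customer_currency], customer_currency


def assert_priced_amount(amount: Decimal, currency: str) -> None:
    """Refuse any charge whose amount does not match the price table."""
    if PRICES.get(currency) != amount:
        raise ValueError(f"refusing to charge {amount} {currency.upper()}")


print(buggy_charge("inr", "eur"))   # amount from INR, label from EUR
print(expected_charge("eur"))       # amount and label both from EUR
```

Running a check like `assert_priced_amount` before submitting the charge would reject the mismatched amount in this toy setup.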


Probably a good development I think. Better than all of the NSFW content getting nuked from orbit.

Civit probably knows that it'll lose a majority of its base without NSFW.

civitai.com/articles/28369…


Vlaд Фaуsт retweeted

agents that make explainer videos > agents that summarize PDFs

Nous Research@NousResearch

Introducing the Manim skill for Hermes Agent. Manim is an engine for creating precise programmatic animations for mathematical and technical explainers, made famous by the @3blue1brown channel.


Vlaд Фaуsт retweeted

ZINC — LLM inference engine written in Zig, running 35B models on $550 AMD GPUs github.com/zolotukhin/zinc


Vlaд Фaуsт retweeted

Humans can see in high-res, high-FPS in real-time. Why can't VLMs?

Introducing AutoGaze: ViTs/VLMs "gaze" only at key video regions! Up to 4-100x token savings, 19x speedup, and enables scaling to 4K-res 1K-frame videos.

📄 arxiv.org/abs/2603.12254

🌐 autogaze.github.io

🤗 huggingface.co/collections/bf…

(1/n)🧵


Vlaд Фaуsт retweeted

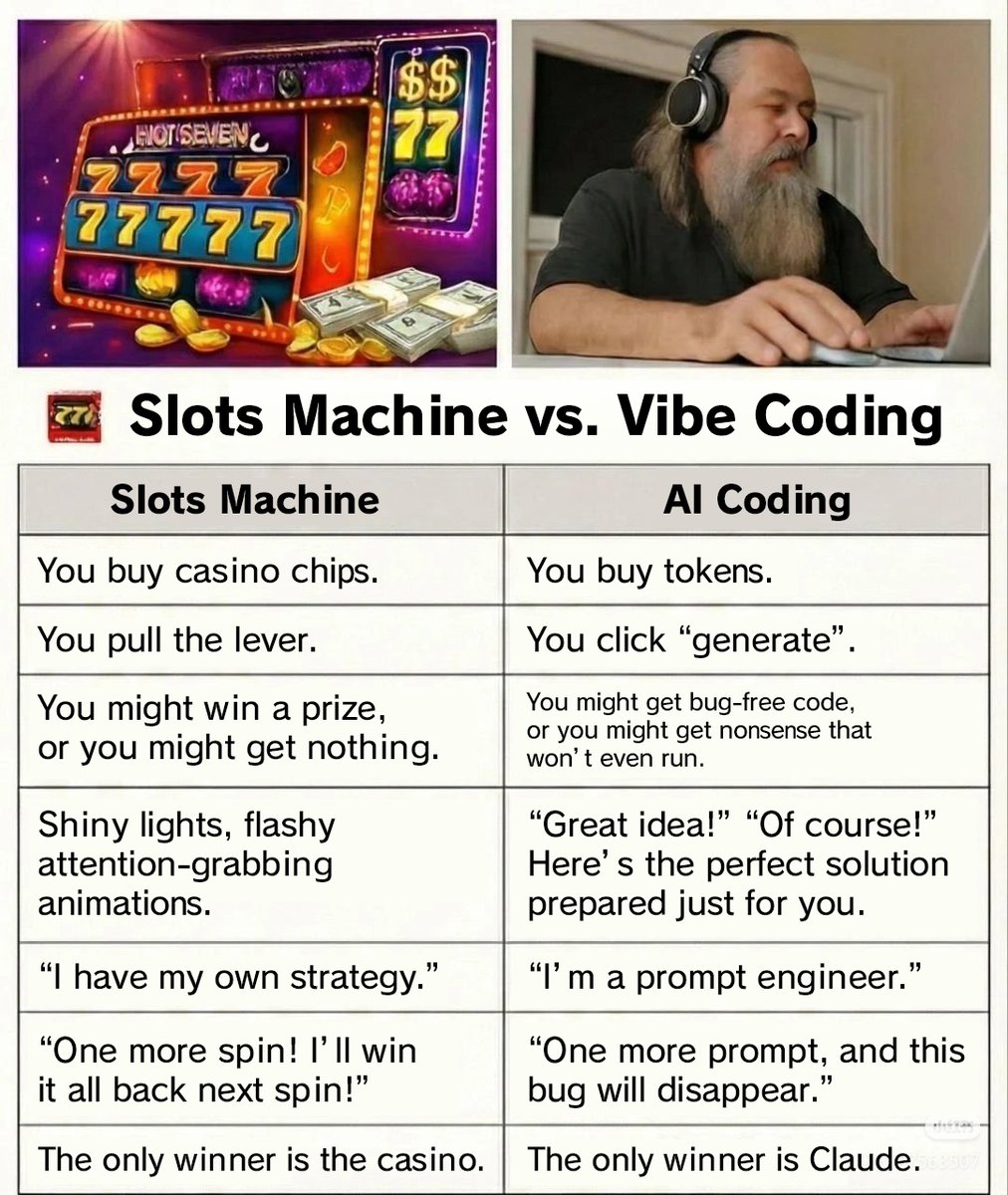

The original image is pretty self-explanatory but I translated it anyway.

Aliez Ren@aliez_ren

When a slot machine's win rate exceeds 50%, it becomes a money printer. The same goes for vibe coding.


@NormBreaker3 GDScript is weakly typed + events are not typed => more bug-prone in the long run


Vlaд Фaуsт retweeted

v0.28.0 is released!

SSO, SAML and a New Patches System and a lot of improvements

Read more on our blog post:

dokploy.com/blog/v0-28-0-s…


Vlaд Фaуsт retweeted

We’re excited to introduce Doc-to-LoRA and Text-to-LoRA, two related research projects exploring how to make LLM customization faster and more accessible.

pub.sakana.ai/doc-to-lora/

By training a Hypernetwork to generate LoRA adapters on the fly, these methods allow models to instantly internalize new information or adapt to new tasks.

Biological systems naturally rely on two key cognitive abilities: durable long-term memory to store facts, and rapid adaptation to handle new tasks given limited sensory cues. While modern LLMs are highly capable, they still lack this flexibility. Traditionally, adding long-term memory or adapting an LLM to a specific downstream task requires an expensive and time-consuming model update, such as fine-tuning or context distillation, or relies on memory-intensive long prompts.

To bypass these limitations, our work focuses on the concept of cost amortization. We pay the meta-training cost once to train a hypernetwork capable of producing task- or document-specific LoRAs on demand. This turns what used to be a heavy engineering pipeline into a single, inexpensive forward pass. Instead of performing per-task optimization, the hypernetwork meta-learns update rules to instantly modify an LLM given a new task description or a long document.

In our experiments, Text-to-LoRA successfully specializes models to unseen tasks using just a natural language description. Building on this, Doc-to-LoRA is able to internalize factual documents. On a needle-in-a-haystack task, Doc-to-LoRA achieves near-perfect accuracy on instances five times longer than the base model's context window. It can even generalize to transfer visual information from a vision-language model into a text-only LLM, allowing it to classify images purely through internalized weights.

Importantly, both methods run with sub-second latency, enabling rapid experimentation while avoiding the overhead of traditional model updates. This approach is a step towards lowering the technical barriers of model customization, allowing end-users to specialize foundation models via simple text inputs. We have released our code and papers for the community to explore.

Doc-to-LoRA

Paper: arxiv.org/abs/2602.15902

Code: github.com/SakanaAI/Doc-t…

Text-to-LoRA

Paper: arxiv.org/abs/2506.06105

Code: github.com/SakanaAI/Text-…

Vlaд Фaуsт retweeted

Ok, I think my experiment leaving AI working on stuff 24/7 ends here. It doesn't work. Code explodes in complexity, results are not that great, the AI can't get past hard walls (it is still completely unable to even *grasp* SupGen), and it is insanely expensive (spent ~1k over the last 2 days). The best results are on the JS compiler, mostly because it is familiar (compared to inets), but not worth losing control over the codebase.

I think the dream of having AIs working in the background and making real progress on things that matter (i.e., truly new things) isn't here yet. It is still a machine hard-stuck on its own training data, incapable of thinking out of the box. It is great for building things that were already built. But not new things.

Also coding normally has the under-appreciated advantage that you're doing two things at the same time: building a codebase *and* learning it. AIs do only half of that. The other half is obviously impossible 🤔

Vlaд Фaуsт retweeted

I still need to land my next role. Bills don't pause.

But I'm done with the cycle. Time to build something that can't be taken away in a December all-hands.

Follow along for the real shit. #buildinginpublic
