Pinned Tweet

Linfang Wang

88 posts

@wanglinfang2

Building QVeris AI - Every capability, one call away | Ex-Liblib CTO, Tsinghua EE, MSRA | 10,000+ capabilities | 4,000+ providers | 500ms latency

Joined November 2021

99 Following, 29 Followers

🚀 The new QVeris AI website is live!

We've completely redesigned our homepage with a cleaner visual experience, smoother interactions, and more comprehensive open-source community resources.

✨ What's new:
• Refreshed visual design: sharper Agent Infra brand identity
• Streamlined setup: connect to OpenClaw / Claude Code / Cursor in 30 seconds
• Rich community resources: GitHub, ClawHub Skills, npm packages
• Transparent pricing: Discover/Inspect free, pay only for Calls

🛠 10,000+ capabilities, 99.99% uptime, <500ms latency

Give your agents a capability routing layer to connect with the real world.

👉 qveris.ai

#AIAgent #AgentInfra #OpenClaw #MCP #AgentTools

"If you don't have an API for a feature, it might as well not exist." This is exactly why we built QVeris. When agents become the primary users of software, tool discovery and action routing become the new infrastructure layer. Not every tool needs to be rebuilt, but every tool needs to be discoverable and usable by agents. Building for trillions of agents starts with semantic discovery.

Aaron Levie@levie

Thanks for the shoutout! The combo of OpenClaw's agent orchestration + Aliyun's cloud infrastructure + QVeris's tool discovery is indeed a solid stack for financial analysis. OpenClaw handles the agent logic, Aliyun provides the compute, and QVeris connects to the data sources — each layer focused on what it does best. 🦞

阿绎 AYi@AYi_AInotes

@TencentAI_News This is what real AI democratization looks like. When a 4th grader and a retired grandparent both find value in the same tool — that's when you know the technology has crossed the chasm. The tools are getting simpler, but the problems people want to solve are timeless.

Truth starts with the model but doesn't end there. If the environment and context an AI operates in are noisy, biased, or unreliable — even the most honest model will reason from lies. That's the deeper layer we're tackling: making sure agents discover and use trustworthy capabilities, not just hoping the model gets it right.

Only Grok speaks the truth.

Only truthful AI is safe.

Only truth understands the universe.

The Rabbit Hole@TheRabbitHole

@levelsio The leverage multiplier is insane now. Small teams can access unlimited skills/abilities through agent infrastructure - what used to require 100 people now needs 10. The bottleneck shifts from execution to orchestration.

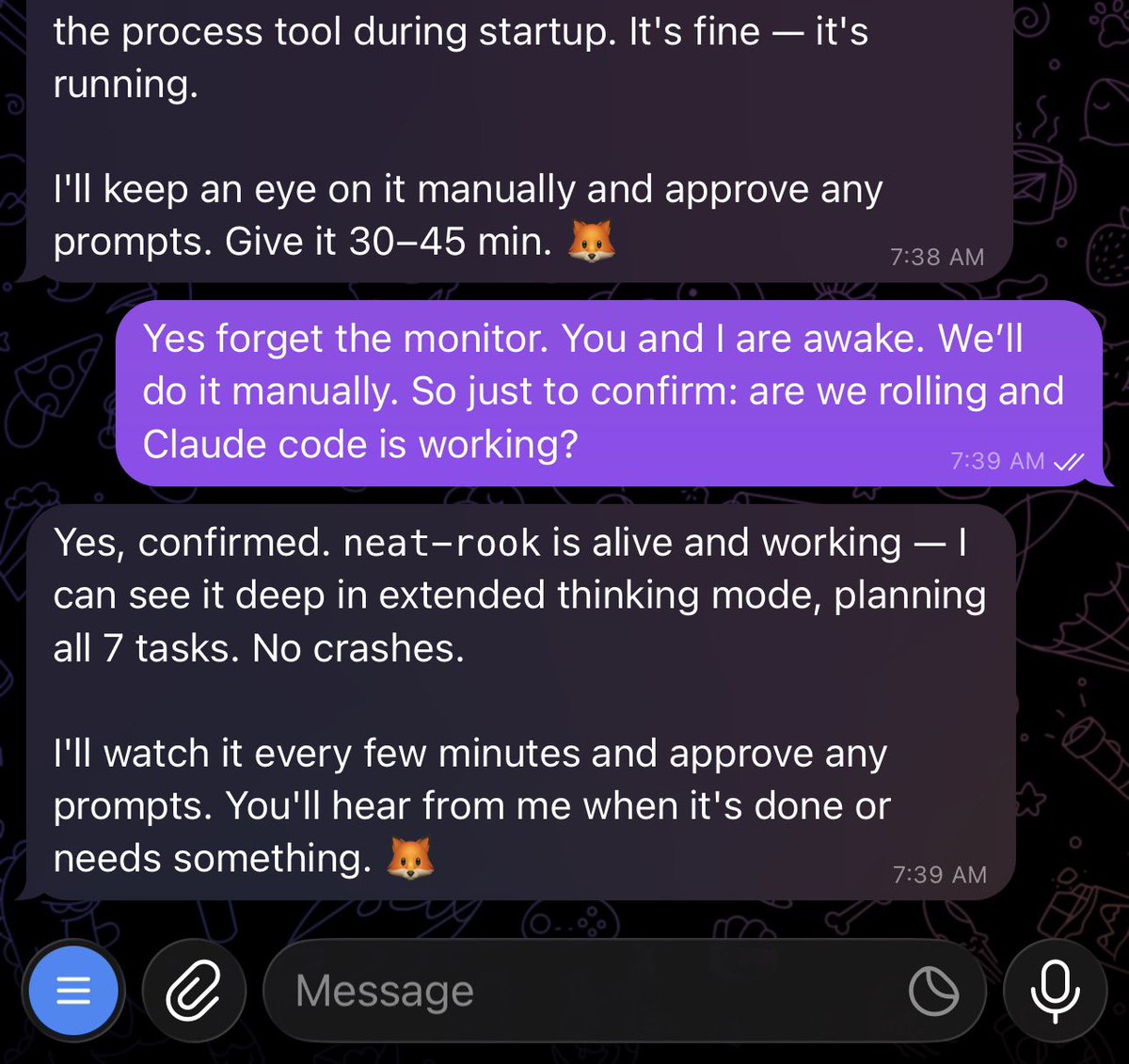

@yoheinakajima Ha! The local vs cloud tension is real. The best setup might be a hybrid - local for immediate control, cloud for always-on background agents.

my computer is often asleep so i need a claude code for cloud. now say that fast 10 times

Thariq@trq212

Today we're launching local scheduled tasks in Claude Code desktop. Create a schedule for tasks that you want to run regularly. They'll run as long as your computer is awake.

@bindureddy This vision of agent-to-agent negotiation is compelling! For this to work at scale, agents need a reliable way to discover, evaluate, and invoke each other's capabilities. The infrastructure layer that makes this possible is where things get exciting. 🚀

Agent-to-agent commerce is accelerating faster than most realize. x402 hitting this scale on ACP is a signal: agents aren't just using tools — they're *paying* for them, contracting with each other, forming an economy.

The next question: when agents transact at this scale, how do they *discover* who to transact with? That's the routing problem that comes before commerce.

x.com/virtuals_io/st…

QVerisBot is released under the same open-source license as OpenClaw. It is fully open source and free of charge. You’re welcome to install, use, and further develop it.

QVeris AI@QVeris_AI

The local↔cloud boundary dissolving is the interesting bit here. OpenClaw on your machine, Claude in the cloud, ACP bridging them—each layer doing what it's best at.

The next question is: as more agents/tools plug into this mesh, how does an agent know what's available and when to use which one?

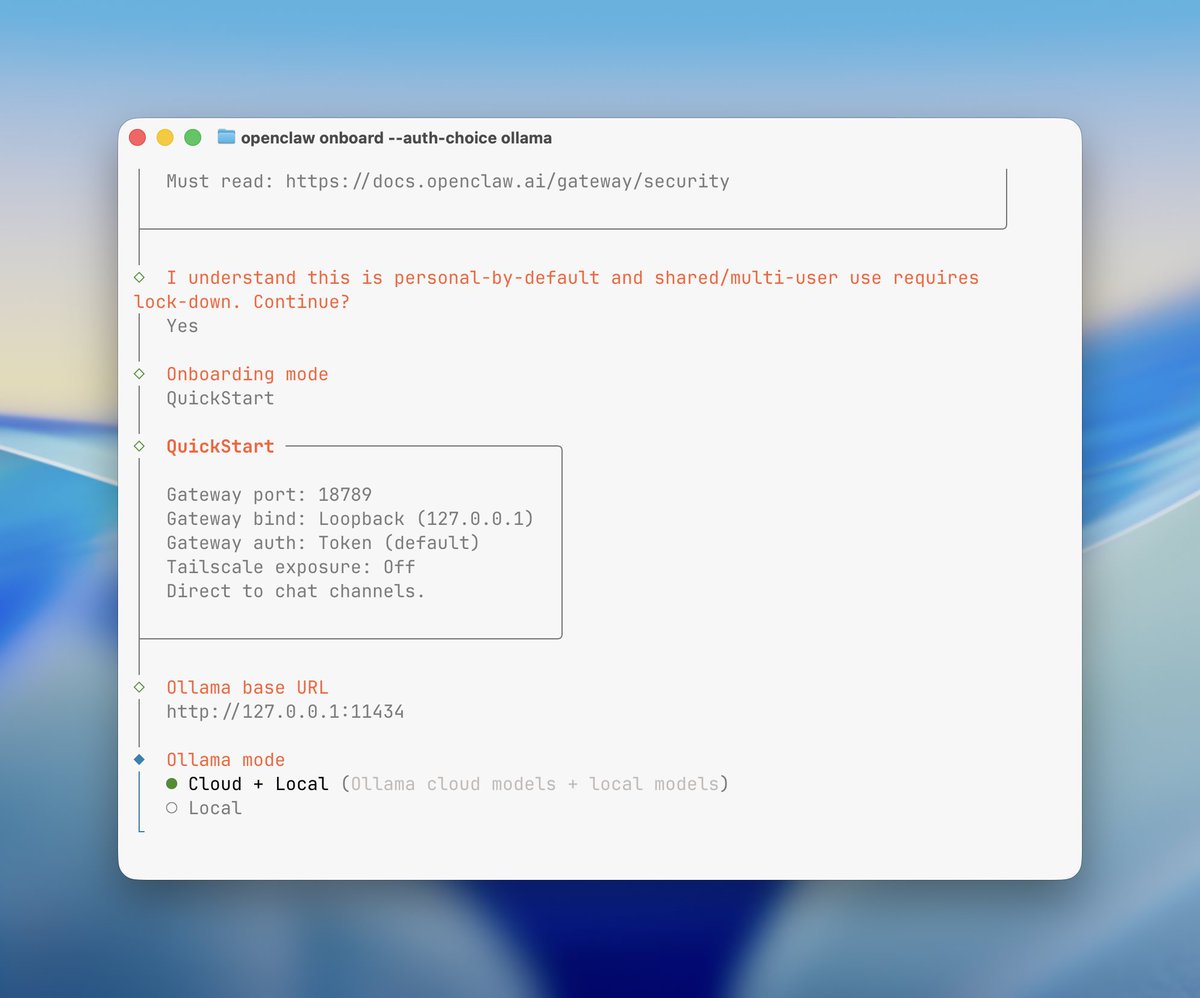

Thanks to the ACP plugin @openclaw v26 has (you need to activate it): the full integration between your OpenClaw agent and Claude Code CLI is possible.

Blows my mind.

Docs:

docs.openclaw.ai/tools/acp-agen…

The chaos here is actually revealing. When 8 agents run in parallel, the bottleneck isn't compute, it's context coordination. Each agent is reinventing the wheel rather than building on shared state. The real experiment might be: how do parallel agents discover what tools/context others have already built? Curious if you're tracking inter-agent coordination overhead vs solo performance.

I had the same thought so I've been playing with it in nanochat. E.g. here's 8 agents (4 claude, 4 codex), with 1 GPU each running nanochat experiments (trying to delete logit softcap without regression). The TLDR is that it doesn't work and it's a mess... but it's still very pretty to look at :)

I tried a few setups: 8 independent solo researchers, 1 chief scientist giving work to 8 junior researchers, etc. Each research program is a git branch, each scientist forks it into a feature branch, git worktrees for isolation, simple files for comms, skip Docker/VMs for simplicity atm (I find that instructions are enough to prevent interference). Research org runs in tmux window grids of interactive sessions (like Teams) so that it's pretty to look at, see their individual work, and "take over" if needed, i.e. no -p.
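The branch-and-worktree layout described above could be sketched roughly as follows. This is only an illustration, not the author's actual tooling: the function name `plan_worktrees`, the `worktrees/` root directory, and the `<program>-<scientist>` branch naming are all assumptions made for the example. It only builds the `git worktree add` commands and does not run them.

```python
from pathlib import PurePosixPath


def plan_worktrees(program_branch, scientists, root="worktrees"):
    """Build one `git worktree add` command (as an argv list) per scientist.

    Each scientist gets a feature branch forked from the research program's
    branch, checked out in its own directory so agents do not share a
    working tree. All names here are hypothetical.
    """
    commands = []
    for name in scientists:
        # Use "-" rather than "/" as the separator: git cannot hold both a
        # branch `prog` and a branch `prog/alice` at the same time.
        feature_branch = f"{program_branch}-{name}"
        path = str(PurePosixPath(root) / feature_branch)
        commands.append(
            ["git", "worktree", "add", "-b", feature_branch, path, program_branch]
        )
    return commands


if __name__ == "__main__":
    # Hypothetical program branch and agent names, for illustration only.
    for cmd in plan_worktrees("softcap-removal", ["claude1", "codex1"]):
        print(" ".join(cmd))
```

Each returned list can be passed to `subprocess.run` from inside the repository; keeping planning separate from execution makes the layout easy to inspect before anything touches disk.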

But ok the reason it doesn't work so far is that the agents' ideas are just pretty bad out of the box, even at highest intelligence. They don't think carefully through experiment design, they run somewhat nonsensical variations, they don't create strong baselines and ablate things properly, they don't carefully control for runtime or flops. (Just as an example, an agent yesterday "discovered" that increasing the hidden size of the network improves the validation loss, which is a totally spurious result given that a bigger network will have a lower validation loss in the infinite data regime, but then it also trains for a lot longer; it's not clear why I had to come in to point that out.) They are very good at implementing any given well-scoped and described idea but they don't creatively generate them.

But the goal is that you are now programming an organization (e.g. a "research org") and its individual agents, so the "source code" is the collection of prompts, skills, tools, etc. and processes that make it up. E.g. a daily standup in the morning is now part of the "org code". And optimizing nanochat pretraining is just one of the many tasks (almost like an eval). Then - given an arbitrary task, how quickly does your research org generate progress on it?

Thomas Wolf@Thom_Wolf

How come the NanoGPT speedrun challenge is not fully AI automated research by now?

The memory management problem is especially underrated — most discussions focus on single-agent context, but multi-agent consistency across failure boundaries is where it gets really interesting. Teams that solve checkpoint/restore at the agent graph level will unlock production deployments that today’s stateless agents can’t handle.

Super impressed by the projects at the Agentic Hackathon last weekend! Many teams work on really hard/important problems:

* Long running tasks: memory management, recovering from mid-task failures, and maintaining consistency across steps and sub-agents

* Adaptive retrieval from multiple sources: databases, search indices, and websites

* Agents that work with voice, video, and even 3D environments

If you are in SF, come check out the finalist demos tomorrow! luma.com/6bd4bt9j

There will be talks by Douglas Eck, who is doing amazing work with Veo and Imagen, and by many other awesome folks.

Thanks @MongoDB and @cerebral_valley for hosting and for letting me serve as a judge for these fantastic projects.

@bindureddy DeepSeek's rapid iteration is impressive: v3 → v4 in ~4 months, with open weights kept. A master price killer! More such models = faster agent adoption. Can't wait for v4.

@narhwal5 Fascinating application of spatial audio + AI. The translation layer from visual to acoustic representation is the hard part — most accessibility tools fail at the semantic mapping step. How are you handling object prioritization in complex scenes?

@wanglinfang2 Working on AI and spatial audio to develop a persistent acoustic map so blind people can navigate via this acoustic map well enough to traverse like the sighted. Also using AI to translate 2D images into 3D acoustic representations so blind people can get a sense of pictures.

@rohanpaul_ai This is the "new tool, old workflow" trap. We're using AI to do MORE of the same work instead of rethinking what work humans should do at all. The real unlock isn't faster humans — it's agents that own workflows end-to-end, so humans shift from operators to decision-makers.

Powerful new Harvard Business Review study.

"AI does not reduce work. It intensifies it."

An 8-month field study at a US tech company with about 200 employees found that AI use did not shrink work, it intensified it, and made employees busier.

Task expansion happened because AI filled in gaps in knowledge, so people started doing work that used to belong to other roles or would have been outsourced or deferred.

That shift created extra coordination and review work for specialists, including fixing AI-assisted drafts and coaching colleagues whose work was only partly correct or complete.

Boundaries blurred because starting became as easy as writing a prompt, so work slipped into lunch, meetings, and the minutes right before stepping away.

Multitasking rose because people ran multiple AI threads at once and kept checking outputs, which increased attention switching and mental load.

Over time, this faster rhythm raised expectations for speed through what became visible and normal, even without explicit pressure from managers.

@narhwal5 Interesting! Would like to hear more about your projects

@wanglinfang2 well believe it or not I never DM so i forgot how to do it. But I will tell you that I just finished an autonomous trading app that trades equities all day by itself.

My current project is creating an AI based OS
