William Belk

@wbelk

Creator of Rapid Reviews, the fastest product reviews app for Shopify (https://t.co/4Uo0CtAyJn), and https://t.co/LsgG9DZJ4O, a free page testing tool

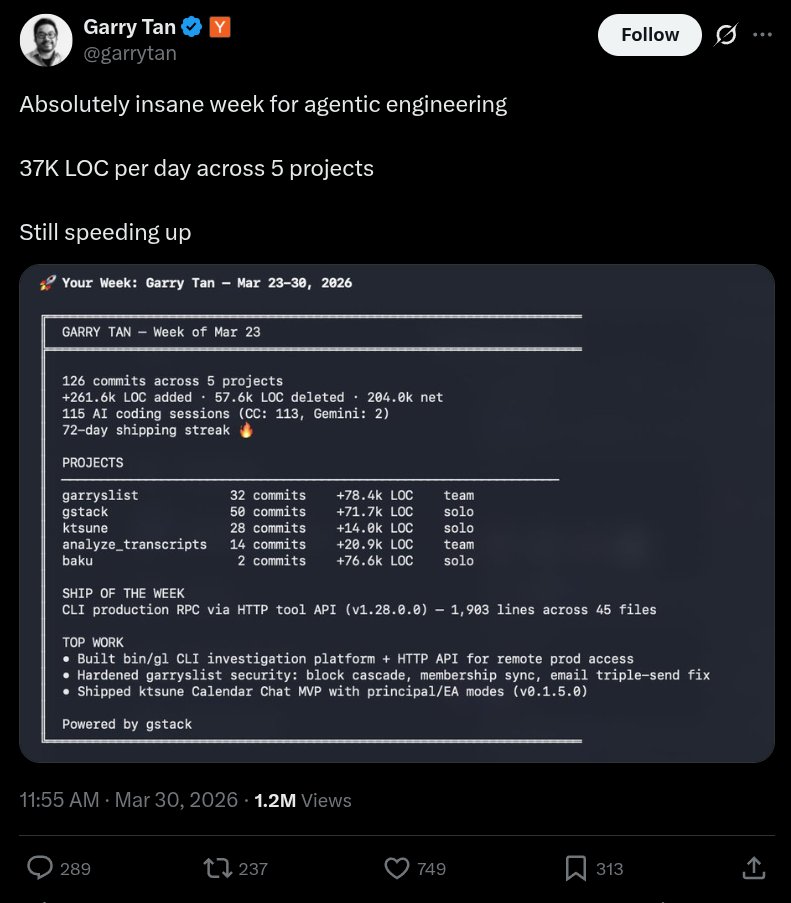

Absolutely insane week for agentic engineering: 37K LOC per day across 5 projects. Still speeding up.

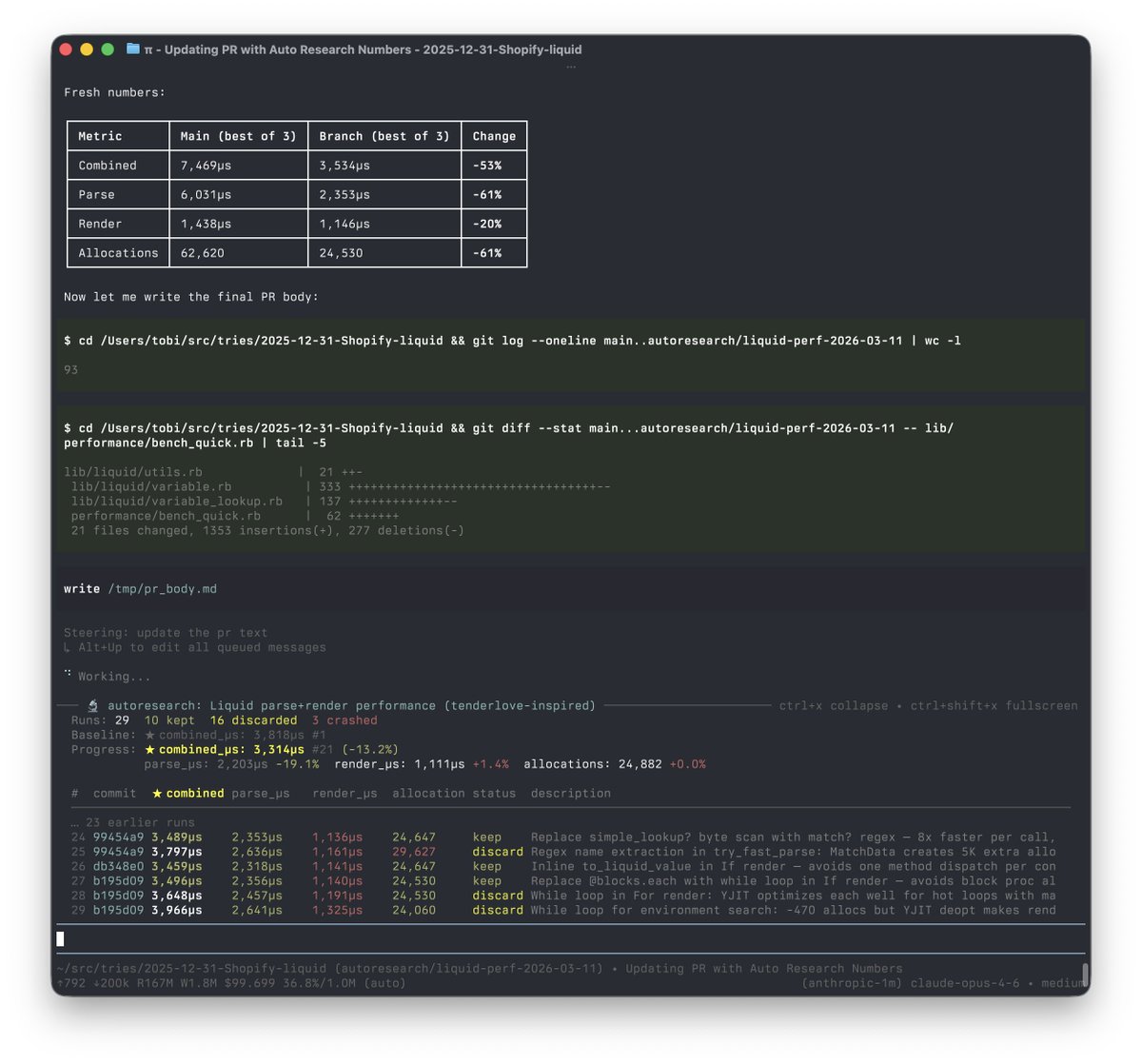

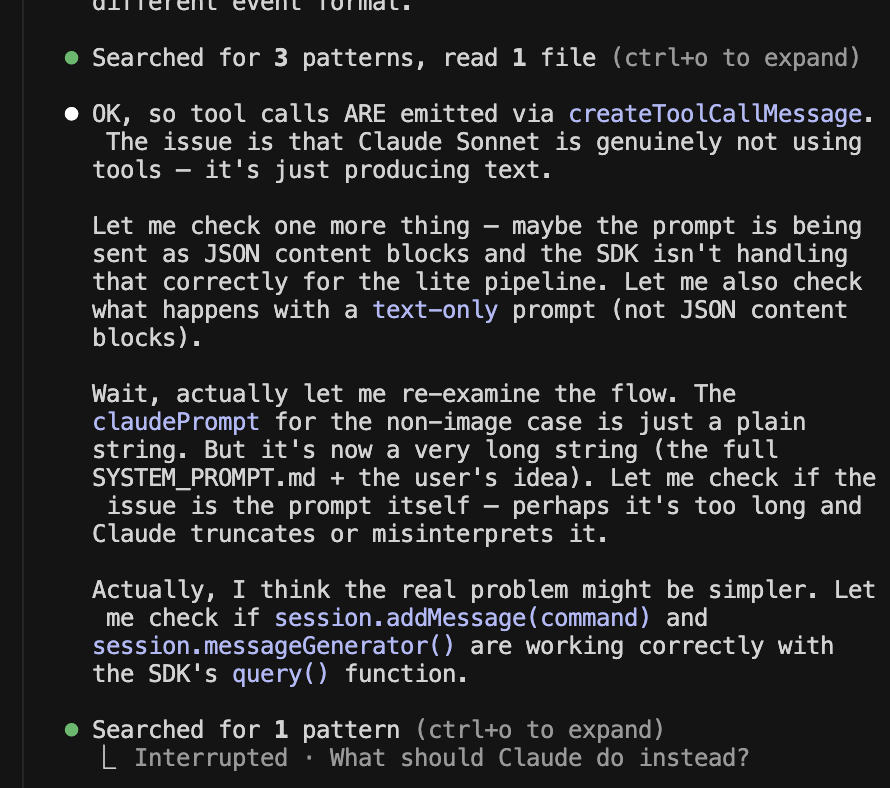

I built a skill to extract @claudeai sessions as .md and index them into @tobi's QMD db for BM25/vector search on session knowledge: github.com/wbelk/claude-q…

This connects persistent session data to Claude via MCP, which is essential for large-context work. Claude will exceed its context and forget things, and then it gets spooky.

- Generates a step-by-step prompt setup
- Installs QMD
- Extracts full Claude sessions to .md (including subagent tasks)
- Indexes session content in QMD
- Updates Claude settings to use QMD MCP tools for session recall
- Updates the QMD session index on PreCompact and SessionEnd hooks
- Prevents concurrent qmd embed processes

Requirements: Node v22.22.0, Bun
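A minimal Python sketch of the "extract full Claude sessions to .md" step. It assumes Claude Code keeps each session as a JSONL file (one JSON record per line), that conversation records carry a `type` of `user` or `assistant` with a `message.content` that is either a string or a list of content blocks, and that subagent turns are flagged with `isSidechain`. Those field names are assumptions about the log format, and `session_to_markdown` / `content_to_text` are hypothetical helpers, not the skill's actual code.

```python
import json
from typing import Iterable


def content_to_text(content) -> str:
    """Flatten a message's content (plain string or list of blocks) to text."""
    if isinstance(content, str):
        return content
    parts = []
    for block in content or []:
        # Assumption: text blocks look like {"type": "text", "text": "..."};
        # tool calls and tool results are skipped in this sketch.
        if isinstance(block, dict) and block.get("type") == "text":
            parts.append(block.get("text", ""))
    return "\n".join(parts)


def session_to_markdown(lines: Iterable[str], title: str) -> str:
    """Convert JSONL session records into a single Markdown document."""
    out = [f"# {title}", ""]
    for raw in lines:
        raw = raw.strip()
        if not raw:
            continue
        try:
            record = json.loads(raw)
        except json.JSONDecodeError:
            continue  # skip partially written or corrupt lines
        if record.get("type") not in ("user", "assistant"):
            continue  # ignore summaries and other metadata records
        message = record.get("message") or {}
        text = content_to_text(message.get("content")).strip()
        if not text:
            continue
        role = message.get("role", record["type"])
        # Assumption: subagent (Task) turns are flagged with isSidechain.
        label = f"{role} (subagent)" if record.get("isSidechain") else role
        out.extend([f"## {label}", "", text, ""])
    return "\n".join(out)
```

Because the function takes any iterable of lines, an open file handle can be passed straight in, and each resulting .md file becomes one document for the search index.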
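The skill indexes session markdown in QMD for BM25/vector search. The keyword half can be illustrated with a textbook Okapi BM25 scorer; this is a generic sketch using the common defaults `k1=1.5` and `b=0.75`, not QMD's implementation, and the `BM25Index` class is hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase and split on non-alphanumeric characters."""
    return re.findall(r"[a-z0-9]+", text.lower())


class BM25Index:
    """Minimal Okapi BM25 over a list of documents (e.g. session chunks)."""

    def __init__(self, docs: list[str], k1: float = 1.5, b: float = 0.75):
        self.k1, self.b = k1, b
        self.docs = [tokenize(d) for d in docs]
        self.tf = [Counter(toks) for toks in self.docs]
        self.avgdl = sum(len(t) for t in self.docs) / max(len(self.docs), 1)
        # Document frequency: how many documents contain each term.
        df = Counter(term for toks in self.docs for term in set(toks))
        n = len(self.docs)
        # IDF with +1 inside the log so scores stay non-negative.
        self.idf = {t: math.log(1 + (n - f + 0.5) / (f + 0.5)) for t, f in df.items()}

    def score(self, query: str, i: int) -> float:
        """BM25 score of document i for the query."""
        dl = len(self.docs[i])
        s = 0.0
        for term in tokenize(query):
            f = self.tf[i].get(term, 0)
            if f == 0:
                continue
            # Term-frequency saturation (k1) plus length normalization (b).
            norm = f + self.k1 * (1 - self.b + self.b * dl / self.avgdl)
            s += self.idf[term] * f * (self.k1 + 1) / norm
        return s

    def search(self, query: str, k: int = 3) -> list[tuple[int, float]]:
        """Return up to k (doc_index, score) pairs with a positive score, best first."""
        scored = [(i, self.score(query, i)) for i in range(len(self.docs))]
        scored = [pair for pair in scored if pair[1] > 0]
        return sorted(scored, key=lambda p: p[1], reverse=True)[:k]
```

BM25 favors rare terms (high IDF) and dampens repeated ones, so exact identifiers such as file names or error strings from old sessions tend to rank well; vector search covers paraphrased recall that keyword matching misses.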
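Updating the QMD session index on PreCompact and SessionEnd comes down to registering a command hook in Claude Code's settings. A sketch, assuming the `settings.json` layout where `hooks` maps an event name to a list of groups, each holding a `hooks` list of `{"type": "command", "command": ...}` entries. The script path in `UPDATE_CMD` and the function `add_session_hooks` are hypothetical placeholders, not taken from the skill.

```python
import copy

# Hypothetical command; the real skill's script name and path are not shown in the post.
UPDATE_CMD = "bash ~/.claude/hooks/qmd-session-update.sh"


def add_session_hooks(settings: dict, command: str = UPDATE_CMD,
                      events=("PreCompact", "SessionEnd")) -> dict:
    """Return a copy of a settings dict with `command` registered on each event,
    skipping events where an identical command hook already exists."""
    updated = copy.deepcopy(settings)  # never mutate the caller's dict
    hooks = updated.setdefault("hooks", {})
    for event in events:
        groups = hooks.setdefault(event, [])
        already = any(
            h.get("type") == "command" and h.get("command") == command
            for group in groups
            for h in group.get("hooks", [])
        )
        if not already:
            # Assumption: omitting "matcher" makes the hook fire on every trigger of the event.
            groups.append({"hooks": [{"type": "command", "command": command}]})
    return updated
```

In practice the dict would be loaded from the user settings file with `json.load`, passed through `add_session_hooks`, and written back with `json.dump(..., indent=2)`. Since the function is idempotent, re-running the setup does not stack duplicate hooks.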
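With several sessions firing hooks, two index updates can overlap, and the skill prevents concurrent `qmd embed` processes. One common way to do that, shown here as an assumption rather than the skill's actual mechanism, is a non-blocking `flock` on a shared lock file: the first caller runs the embed and later callers skip. `LOCK_PATH`, `exclusive_lock`, and `run_embed_once` are hypothetical names, and `fcntl` is Unix-only.

```python
import fcntl
import os
import subprocess
import tempfile
from contextlib import contextmanager

# Hypothetical lock location; any path shared by all hook invocations works.
LOCK_PATH = os.path.join(tempfile.gettempdir(), "qmd-embed.lock")


@contextmanager
def exclusive_lock(path: str):
    """Yield True if an exclusive, non-blocking flock on `path` was acquired, else False."""
    fd = os.open(path, os.O_CREAT | os.O_RDWR, 0o644)
    try:
        try:
            fcntl.flock(fd, fcntl.LOCK_EX | fcntl.LOCK_NB)
            acquired = True
        except BlockingIOError:
            acquired = False  # another open file description holds the lock
        yield acquired
    finally:
        # Closing the descriptor also releases any flock held through it.
        os.close(fd)


def run_embed_once(lock_path: str = LOCK_PATH,
                   runner=lambda: subprocess.run(["qmd", "embed"], check=False)) -> bool:
    """Run the embed unless another caller holds the lock.
    Returns True if this call ran it, False if it skipped."""
    with exclusive_lock(lock_path) as acquired:
        if not acquired:
            return False
        runner()
        return True
```

`flock` is used rather than `fcntl.lockf` because BSD-style locks belong to the open file description: the lock disappears when the holder's descriptor closes, including when the process exits or crashes, so there is no stale lock to clean up.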