Zhepei Wei

134 posts

@weizhepei

Ph.D. Student @CS_UVA | Prev @AIatMeta, @AmazonScience. Research interest: ML/NLP/LLM.

🔥 Introducing Direct Corpus Interaction (DCI)! The best retriever for agentic search is no retriever.

🚀 We replaced the entire agentic search pipeline (embedding model, vector index, top-k retrieval) with only `grep` and `bash`. 🔧

📄 Paper: huggingface.co/papers/2605.05…

DCI unlocks the full agentic potential of the underlying model: with Claude Sonnet 4.6, accuracy on BrowseComp-Plus rises from 69.0% to 80.0% (+11.0, −$424).

💡 The Magic: The agent searches the raw corpus directly with `grep`, `find`, `bash`, and shell pipelines, exactly like a coding agent navigating a codebase. No preprocessing. No embedding model. No vector index. No offline indexing.

📊 The Results: DCI outperforms top baselines across 13 benchmarks, with average gains of:
🔍 Agentic Search: +11.0%
🧠 Multi-hop QA: +30.7%
📈 IR Ranking: +21.5%

💡 Insights: Beyond accuracy, we conduct a series of controlled ablation studies to pinpoint the sources of DCI’s gains. Specifically, we examine trajectory-level search, evidence utilization, the corpus, context management, and tool usage (RQ2-RQ6).

Try it yourself!
🛠️ Code: github.com/DCI-Agent/DCI-…
🤖 Demo: huggingface.co/spaces/DCI-Age…
🔎 Eval logs: huggingface.co/datasets/DCI-A…
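To make "search the raw corpus with `grep` and `bash`" concrete, here is a minimal sketch of the kind of shell tool such an agent could call. It is not the DCI implementation: the function name `run_shell_tool`, its parameters, the output truncation, and the toy documents are all assumptions for illustration. The agent issues a shell command, the command runs inside the corpus directory, and the (possibly truncated) output comes back as the observation.

```python
import subprocess
import tempfile
from pathlib import Path


def run_shell_tool(command: str, corpus_dir: str, timeout: float = 30.0,
                   max_chars: int = 4000) -> str:
    """Hypothetical agent tool: run one shell command inside the raw corpus
    directory and return its (truncated) output as the observation."""
    try:
        proc = subprocess.run(
            command,
            shell=True,          # allow pipelines such as `grep ... | head`
            cwd=corpus_dir,      # commands operate directly on the raw files
            capture_output=True,
            text=True,
            timeout=timeout,
        )
    except subprocess.TimeoutExpired:
        return f"[command timed out after {timeout}s]"
    output = proc.stdout or proc.stderr
    # Truncate long outputs so they fit in the agent's context window.
    if len(output) > max_chars:
        output = output[:max_chars] + "\n[... output truncated ...]"
    return output


if __name__ == "__main__":
    corpus = tempfile.mkdtemp()  # toy corpus: no index, no embeddings
    Path(corpus, "doc1.txt").write_text("Alan Turing proposed the imitation game in 1950.\n")
    Path(corpus, "doc2.txt").write_text("Grace Hopper was a computer scientist.\n")
    # Which files mention Turing? (recursive, case-insensitive, file names only)
    print(run_shell_tool("grep -ril turing .", corpus))
    # A shell pipeline: count matching lines across the corpus
    print(run_shell_tool("grep -rih 'hopper' . | wc -l", corpus))
```

The design choice mirrors the post's point: nothing is precomputed, so every query is just another command over plain files, and the agent can compose `grep`, `find`, and pipes the same way a coding agent explores a repository.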

🤔 Ever wondered why your post-training methods (SFT/RL) make LLMs reluctant to say “I don't know”? 🤩 Introducing TruthRL, a truthfulness-driven RL method that significantly reduces hallucinations while balancing accuracy and proper abstention! 📃 arxiv.org/abs/2509.25760 🧵[1/n]

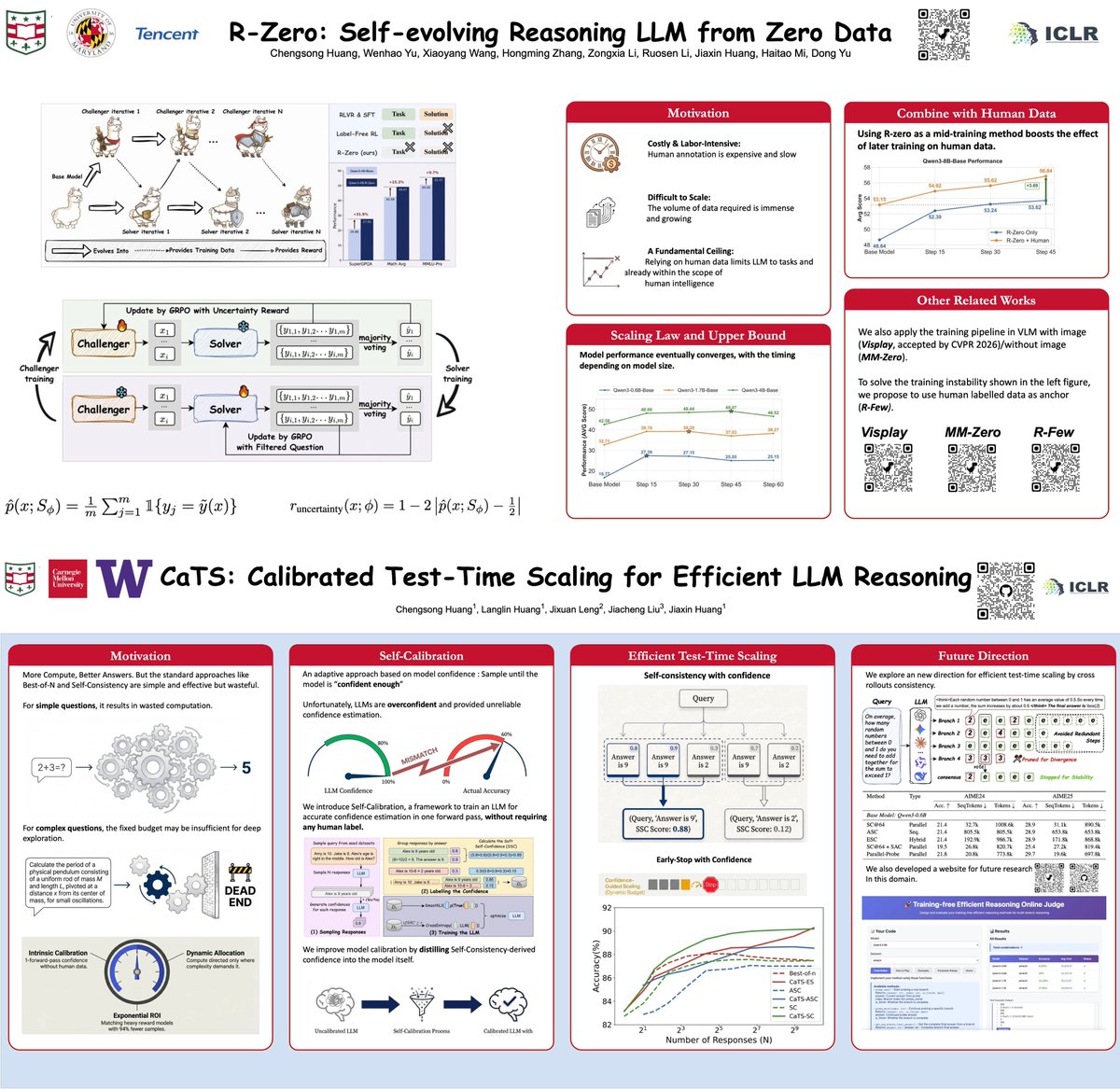
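The thread does not spell out TruthRL's reward, so the following is only a hedged illustration of the question it poses. It assumes two hypothetical rewards: an accuracy-only (binary) reward and a ternary one (+1 correct, 0 abstain, -1 wrong). All names (`Outcome`, `binary_reward`, `ternary_reward`, `expected_reward`) are made up for this sketch. Comparing the expected reward of answering versus abstaining shows why an accuracy-only signal never favors "I don't know".

```python
from enum import Enum


class Outcome(Enum):
    CORRECT = "correct"
    ABSTAIN = "abstain"  # the model says "I don't know"
    WRONG = "wrong"      # the model answers incorrectly (hallucinates)


def binary_reward(outcome: Outcome) -> float:
    """Accuracy-only reward: abstaining earns the same as a wrong answer."""
    return 1.0 if outcome is Outcome.CORRECT else 0.0


def ternary_reward(outcome: Outcome) -> float:
    """Assumed truthfulness-oriented reward: abstaining beats hallucinating."""
    return {Outcome.CORRECT: 1.0, Outcome.ABSTAIN: 0.0, Outcome.WRONG: -1.0}[outcome]


def expected_reward(p_correct: float, reward_fn, abstain: bool) -> float:
    """Expected reward of abstaining, or of answering when the answer is
    correct with probability p_correct."""
    if abstain:
        return reward_fn(Outcome.ABSTAIN)
    return (p_correct * reward_fn(Outcome.CORRECT)
            + (1 - p_correct) * reward_fn(Outcome.WRONG))


if __name__ == "__main__":
    p = 0.3  # the model is unsure: only a 30% chance its answer is right
    for name, fn in [("binary", binary_reward), ("ternary", ternary_reward)]:
        answer = expected_reward(p, fn, abstain=False)
        idk = expected_reward(p, fn, abstain=True)
        print(f"{name}: answer={answer:.2f}, abstain={idk:.2f}")
```

With p = 0.3, answering scores 0.30 versus 0.00 for abstaining under the binary reward, but -0.40 versus 0.00 under the ternary one. Under the binary reward, guessing is never worse than abstaining. Under the ternary reward, answering only pays off when the model is more likely right than wrong (p > 0.5).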

🚀🚀 New Research Alert: Efficient Test-Time Scaling via Self-Calibration! ❓ How can computational resources be allocated dynamically in repeated-sampling methods? 💡 We propose an efficient test-time scaling method that uses model confidence to adjust sampling dynamically, since confidence is an intrinsic measure that directly reflects model uncertainty on different tasks. Confidence-weighted Self-Consistency saves 94.2% of the samples that standard Self-Consistency needs to reach an accuracy of 85.0.
Paper: arxiv.org/abs/2503.00031
Code: github.com/Chengsong-Huan… [1/n]
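As a rough sketch of the two ideas in this post, confidence-weighted voting and confidence-driven sample allocation, the code below weights each sampled answer by a per-sample confidence score and stops drawing samples once one answer dominates. It is not the paper's algorithm: the stopping rule (stop once one answer holds at least `stop_share` of the total confidence mass), the defaults, and the function names are assumptions chosen for illustration. `sample_fn` stands in for one LLM call returning `(answer, confidence)`.

```python
from collections import defaultdict
from typing import Callable, Dict, Hashable, List, Tuple

Sample = Tuple[Hashable, float]  # (final answer, model confidence in [0, 1])


def confidence_weighted_vote(samples: List[Sample]) -> Hashable:
    """Self-Consistency where each vote is weighted by its confidence."""
    scores: Dict[Hashable, float] = defaultdict(float)
    for answer, confidence in samples:
        scores[answer] += confidence
    return max(scores, key=scores.get)


def adaptive_self_consistency(sample_fn: Callable[[], Sample],
                              max_samples: int = 16,
                              min_samples: int = 2,
                              stop_share: float = 0.8) -> Tuple[Hashable, int]:
    """Draw samples one at a time (max_samples >= 1) and stop early once a
    single answer holds at least `stop_share` of the total confidence mass.
    Returns (voted answer, number of samples used)."""
    samples: List[Sample] = []
    for _ in range(max_samples):
        samples.append(sample_fn())
        if len(samples) < min_samples:
            continue
        scores: Dict[Hashable, float] = defaultdict(float)
        for answer, confidence in samples:
            scores[answer] += confidence
        total = sum(scores.values())
        if total > 0 and max(scores.values()) / total >= stop_share:
            break  # confident agreement: spend no more samples on this query
    return confidence_weighted_vote(samples), len(samples)


if __name__ == "__main__":
    # Simulated sampler: two confident, agreeing answers come first.
    stream = iter([("42", 0.95), ("42", 0.90), ("7", 0.20), ("42", 0.85)])
    print(adaptive_self_consistency(lambda: next(stream)))  # ('42', 2)
```

Easy queries, where confident samples agree, stop after a couple of samples, while uncertain ones keep sampling up to `max_samples`. This is the kind of dynamic allocation the post describes, though the actual savings depend on how well calibrated the confidence scores are.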