Pinned Tweet

afr

1.5K posts

@weristafraid

I miss your beauty so much

Kazakhstan · Joined September 2021

88 Following · 18 Followers

@rezakhadjavi Analysing Instagram reels to research different niches. Right now I'm letting it take screenshots at fixed intervals and analyse them, and it guesses what happens in between. Works well enough for now.
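A minimal sketch of the interval-sampling idea described in this reply, assuming the reel's duration is known in seconds. The function names `sample_timestamps` and `gaps_between` are hypothetical and not taken from any tool mentioned in the thread; actually grabbing and analysing the frames is out of scope here.

```python
def sample_timestamps(duration_s: float, interval_s: float) -> list[float]:
    """Return the timestamps (in seconds) at which a screenshot would be taken.

    Sampling starts at 0 and repeats every `interval_s` seconds
    until the end of the clip is reached.
    """
    if duration_s < 0 or interval_s <= 0:
        raise ValueError("duration must be >= 0 and interval must be > 0")
    count = int(duration_s // interval_s) + 1
    return [i * interval_s for i in range(count)]


def gaps_between(timestamps: list[float]) -> list[tuple[float, float]]:
    """Pair consecutive timestamps: these are the unseen spans the model has to guess."""
    return list(zip(timestamps, timestamps[1:]))


if __name__ == "__main__":
    stamps = sample_timestamps(duration_s=10, interval_s=3)
    print(stamps)                # [0, 3, 6, 9]
    print(gaps_between(stamps))  # [(0, 3), (3, 6), (6, 9)]
```

A shorter interval means fewer and smaller gaps to guess, at the cost of more screenshots to analyse.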

@weristafraid not at the moment. but what's your dream workflow here?

afr retweeted

this OpenClaw bot finds restaurants with ugly menus, rebuilds them as live web menus, and mails the owner a postcard...on autopilot.

here's how agencies can land recurring contracts with this system:

- scrapes every restaurant in a city in real time

- filters by review count + rating + last menu update + photo quality

- pulls the real menu items from the official site, PDF, or Google reviews

- samples the brand palette from the restaurant's own visual identity

- renders a 9:16 brand-matched menu, hosted live at a QR-accessible URL

- writes a personalized postcard referencing a real reviewer and a real dish

- mails it to the registered office addressed to the owner by first name

every step from discovery to brand-matching to outreach is automated.

reply "MENU" + RT and i'll send you a free guide so you can build this too
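The filtering step listed above (review count, rating, last menu update, photo quality) could be sketched roughly as follows. This is only an illustration under stated assumptions: the `Restaurant` fields, the 0 to 1 `photo_quality` score, and every threshold are hypothetical, and nothing here reflects how the bot in the tweet is actually built.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Restaurant:
    name: str
    review_count: int
    rating: float            # average star rating
    last_menu_update: date
    photo_quality: float     # hypothetical score, 0.0 (poor) to 1.0 (great)


def is_candidate(
    r: Restaurant,
    today: date,
    min_reviews: int = 100,          # assumed threshold
    min_rating: float = 4.0,         # assumed threshold
    stale_after_days: int = 365,     # assumed threshold
    max_photo_quality: float = 0.4,  # assumed threshold
) -> bool:
    """Keep popular, well-rated places whose menu looks stale and poorly photographed."""
    menu_age_days = (today - r.last_menu_update).days
    return (
        r.review_count >= min_reviews
        and r.rating >= min_rating
        and menu_age_days >= stale_after_days
        and r.photo_quality <= max_photo_quality
    )


if __name__ == "__main__":
    today = date(2025, 1, 1)
    stale = Restaurant("Stale Menu Bistro", 250, 4.5, date(2022, 6, 1), 0.2)
    fresh = Restaurant("Fresh Menu Cafe", 250, 4.5, date(2024, 12, 1), 0.2)
    print([r.name for r in (stale, fresh) if is_candidate(r, today)])
```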

@kloss_xyz Do you have any comparisons between your earlier work and what Paper MCP + Claude produced?

yesterday: I gave Claude Code 5 years of design files with Opus 4.7 using Paper Design MCP and it generated hundreds of assets using my coded skills and workflows.

3% of usage

today: test Claude Design for hours... 20 iterations into the same design system, rate limited before even finishing.

All Anthropic is doing here is throttling our usage with a new “Design” UI and calling it a feature.

this on the $200 (20x) Max plan btw...

Claude @claudeai

Introducing Claude Design by Anthropic Labs: make prototypes, slides, and one-pagers by talking to Claude. Powered by Claude Opus 4.7, our most capable vision model. Available in research preview on the Pro, Max, Team, and Enterprise plans, rolling out throughout the day.

wow, the new openai gpt image feels like a massive upgrade

before this, every time I generated an image from a prompt, I would usually cancel it right away, the tone always felt a bit too orange and off

but this time I just let it run, went to make coffee, and came back…

honestly a bit shocked with the result

it looks much cleaner, the colors feel more balanced, and that usual “gpt image look” is almost gone

still testing it, but yeah… definitely worth trying

DStudioproject @D_studioproject

i know i should sleep but the voices in my head go… this ritual party powered by seedance 2.0 on @openart_ai

Introducing Cinema Studio 3.5, powered by Seedance 2.0: your personal AI director.

Built on learnings from Arena Zero, Zephyr, and a growing number of original productions, Cinema Studio 3.5 brings the creative process directly to you.

The integrated assistant analyzes your prompts, learns from top-performing examples, and writes with full context of your project. Keeps track of what works, refines what doesn't, and delivers consistently better results.

This is how Higgsfield's creative teams prompt. Now it's how you prompt too.

Start here: higgsfield.ai

@jameygannon Using a VPN to access it on Seedance already? How much cheaper is that than sites like fal or wavespeed?

seedance 2 is the first video model i’ve seen to actually make me excited enough to dive into the mess that is the AI video landscape

need to get a vpn asap LOL

Higgsfield AI 🧩 @higgsfield

Seedance 2.0 – officially on Higgsfield with 65% OFF! Next-gen physics in your AI videos. Joint audio-video generation. Best-in-class picture control. World’s best video model lands on Higgsfield right on our birthday. Only available through business email verification for all regions except US and Japan.

afr retweeted

@jameygannon Reasonable take. I don't like creators advertising these node-based systems as something insanely necessary or revolutionary when it's the same as having a red thread in your head when using e.g. MJ + Higgsfield. But I also don't like the thought of re-using one workflow for different stuff.

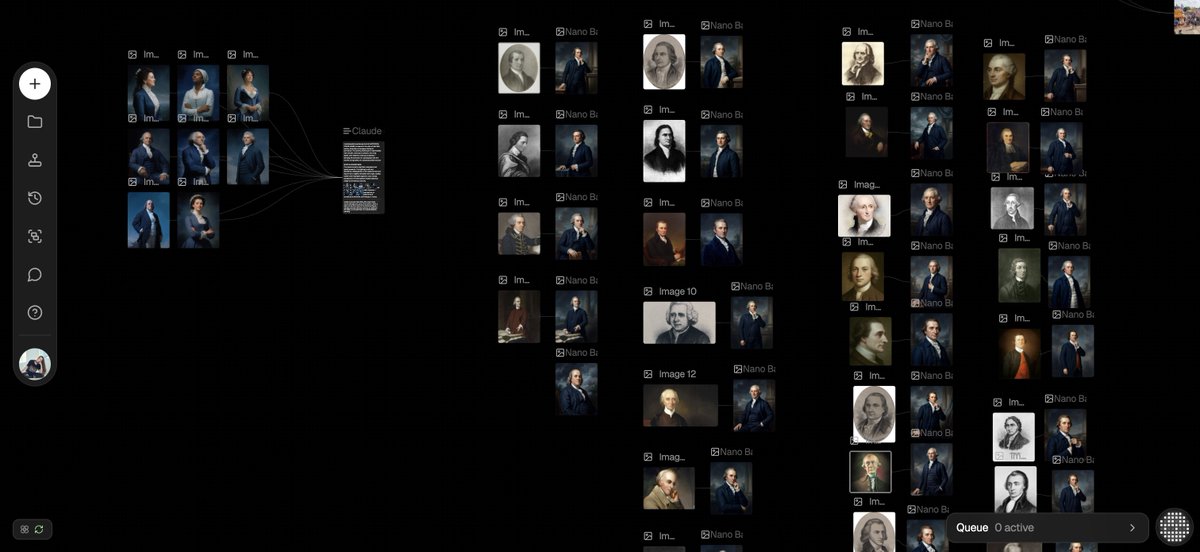

I have a theory that node-based interfaces will win because from any screenshot you can immediately tell what’s going on, especially compared to linear prompting interfaces. This is extremely important for onboarding and adoption.

The node-based stuff is largely a visual preference and kinda a gimmick (it’s no different functionally, at this point), but i’ve found it does make tutorials or client walkthroughs much easier

@jameygannon @midjourney Noticed as well that hands look weird more often than before 🥴

first looks at @midjourney v8

- EXTREMELY fast

- base realism is higher, much more depth and texture in images

- it is definitely reading the moodboards/srefs more intuitively, images look like they are actually FROM that moodboard or shoot (HUGE IMPROVEMENT)

- more body slop than v7, assuming that will improve before the full launch

from what i've seen this is exactly what we needed, feels even more like an extension of my brain
