Haohui Mai retweeted

Haohui Mai

1.9K posts

@wheat9

OS hacker+GPU optimization

Bay Area · Joined October 2009

440 Following · 236 Followers

Haohui Mai retweeted

The paper is now available: huggingface.co/papers/2602.06…

More updates coming soon!

Zhijian Liu@zhijianliu_

Holiday cooking finally ready to serve! 🥳 Introducing DFlash: speculative decoding with block diffusion.

🚀 6.2× lossless speedup on Qwen3-8B
⚡ 2.5× faster than EAGLE-3

Diffusion vs AR doesn’t have to be a fight. At today’s stage:
• dLLMs = fast, highly parallel, but lossy
• AR LLMs = accurate, sequential, but slow

DFlash = diffusion drafts, AR verifies.
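
To make "drafts, then verifies" concrete, here is a toy sketch of greedy speculative decoding. It is not DFlash's implementation: `target_next` and `draft_next` are hypothetical stand-in functions rather than real models, the drafter here is an ordinary function rather than a block diffusion model, and verification is done token by token where a real system would use one parallel forward pass of the target. What it does show is why the scheme is lossless: every emitted token is the one the target would have chosen.

```python
from typing import List

Token = int


def target_next(prefix: List[Token]) -> Token:
    """Hypothetical stand-in for the AR target model's greedy next token."""
    return (sum(prefix) * 31 + len(prefix)) % 50


def draft_next(prefix: List[Token]) -> Token:
    """Hypothetical cheap drafter: deliberately wrong at every 4th position."""
    tok = target_next(prefix)
    return tok if len(prefix) % 4 else (tok + 1) % 50


def greedy_decode(prompt: List[Token], n_new: int) -> List[Token]:
    """Baseline: plain greedy decoding with the target only."""
    out = list(prompt)
    for _ in range(n_new):
        out.append(target_next(out))
    return out


def speculative_decode(prompt: List[Token], n_new: int, block: int = 4) -> List[Token]:
    """Draft a block, keep the prefix the target agrees with, fix the first miss."""
    out = list(prompt)
    goal = len(prompt) + n_new
    while len(out) < goal:
        # 1) Drafter proposes `block` tokens.
        draft: List[Token] = []
        for _ in range(block):
            draft.append(draft_next(out + draft))

        # 2) Target checks each proposal in order.
        accepted: List[Token] = []
        for tok in draft:
            expected = target_next(out + accepted)
            if tok != expected:
                accepted.append(expected)  # replace the first mismatch, drop the rest
                break
            accepted.append(tok)
        else:
            # Whole block accepted: the target contributes one extra token.
            accepted.append(target_next(out + accepted))

        out.extend(accepted)
    return out[:goal]


if __name__ == "__main__":
    prompt = [1, 2, 3]
    print(speculative_decode(prompt, 20) == greedy_decode(prompt, 20))
```

The speedup in a real system comes from accepting several drafted tokens per target pass; this toy only demonstrates that the output matches target-only decoding.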

Haohui Mai retweeted

Sounds incredible until you read the fine print. The compiler generates less efficient code than GCC with all optimizations disabled. It doesn’t have its own assembler or linker. It can’t produce a 16-bit x86 code generator. And Carlini himself says it has “nearly reached the limits of Opus’s abilities.” New features and bugfixes kept breaking existing functionality.

So what did $20,000 and two weeks actually buy? A compiler that passes 99% of GCC’s torture tests but can’t match the output quality of a tool that’s had 37 years of human engineering. That’s the constraint nobody’s pricing in.

The real story is in the cost curve, not the capability demo. $20,000 for 100,000 lines means $0.20 per line of generated code. A senior compiler engineer costs roughly $150/hour. At maybe 50 polished lines per hour for something this complex, that’s $3/line. AI just did it at 15x cheaper, and it will only get cheaper from here.

But the code isn’t equivalent. The AI version needs a human to finish the assembler, fix the linker, optimize the output, and prevent regressions. Those are the hardest 20% of the problem, and they represent 80% of the engineering value. Anthropic built the demo. Shipping the product still requires humans.

This tells you exactly where we are in the autonomous software timeline. AI can now produce impressive first drafts of complex systems at trivial cost. Turning those drafts into production software still requires the judgment that costs $300K+ per year in compiler engineer salary. The gap between “compiles the Linux kernel” and “replaces GCC” is measured in decades of accumulated engineering wisdom that no model has internalized yet.

The companies that understand this will use agent teams to generate the 80% and hire engineers to finish the 20%. The companies that don’t will ship $20,000 compilers that produce slower code than a free tool from 1987.
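
The per-line cost comparison in the post above can be checked in a few lines of Python. The inputs are the figures quoted in the post ($20,000, 100,000 lines, $150/hour, 50 lines/hour); the variable names are illustrative only.

```python
# Figures as quoted in the post; they are the post author's estimates.
ai_total_cost_usd = 20_000
ai_lines = 100_000
engineer_rate_usd_per_hour = 150
engineer_lines_per_hour = 50

ai_cost_per_line = ai_total_cost_usd / ai_lines
human_cost_per_line = engineer_rate_usd_per_hour / engineer_lines_per_hour
ratio = human_cost_per_line / ai_cost_per_line

print(f"AI:    ${ai_cost_per_line:.2f} per line")
print(f"Human: ${human_cost_per_line:.2f} per line")
print(f"Ratio: {ratio:.0f}x")
```

The ratio is only as good as the 50 lines/hour guess, and it compares raw line counts, not the quality gap the post goes on to describe.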

Anthropic@AnthropicAI

New Engineering blog: We tasked Opus 4.6 using agent teams to build a C compiler. Then we (mostly) walked away. Two weeks later, it worked on the Linux kernel. Here's what it taught us about the future of autonomous software development. Read more: anthropic.com/engineering/bu…

@Yuchenj_UW My experience is that Codex seems to have better world knowledge, which makes it more effective at triaging and debugging. Claude Code excels at day-to-day software engineering tasks that need more automation.

Is Codex actually ahead of Claude Code now???

I tried Codex yesterday while doing some training optimizations on Andrej’s nanochat. It has a worse UI and ran my code in a CPU-only sandbox even though I have GPUs. It feels less agentic than Claude Code for sure.

Sonnet 5, I'm still patiently waiting for you...

@HotAisle For dense models, NVFP4 works out of the box (Petit). We are adding MoE support these days. Stay tuned.

That warning about gptq_gemm being buggy and suggesting Marlin/BitBLAS is largely NVIDIA advice. On MI300X, GPTQ support is much less mature; if you see GPU “memory access fault” crashes later, GPTQ kernels are a prime suspect. Practically: FP8 / BF16 tends to be the stable lane on MI300X.

Yup...

Memory access fault by GPU node-3 (Agent handle: 0x1b1c89b0) on address 0x7ee6a0009000. Reason: Unknown.

Memory access fault by GPU node-2 (Agent handle: 0xb524a50) on address 0x7f4ca9223000. Reason: Unknown.

Haohui Mai retweeted

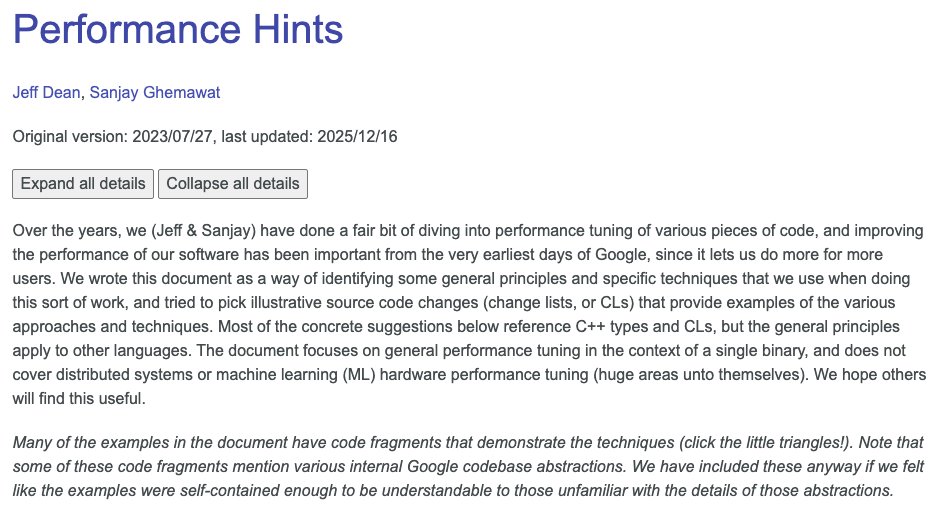

Performance Hints

Over the years, my colleague Sanjay Ghemawat and I have done a fair bit of diving into performance tuning of various pieces of code. We wrote an internal Performance Hints document a couple of years ago as a way of identifying some general principles and we've recently published a version of it externally.

We'd love any feedback you might have!

Read the full doc at: abseil.io/fast/hints.html

@tianyin_xu Sitting in Siebel Center on a snowstorm day is very peaceful and surprisingly satisfying.

@tianyin_xu In terms of producing papers / artifacts, productivity does rise. In terms of producing insights, I found not so much. AI does make people lazy in some sense :-)

That can be one of the reasons that the demand for PhDs is shrinking. I think that CS research these days goes either very fundamental or very applied. The middle ground is fading away because engineering barriers are much lower now. OTOH students are indeed much more productive now than before.

Working on recommendation letters for students applying for PhD/MS. Multiple sources say this year will be tough, as PhD/MS openings will be fewer especially in traditional CS/ECE areas, e.g., not AI related [1].

My suggestion is to apply more broadly and in a more targeted way. There are still many excellent colleagues who are looking for grad students. You can find some of them in the comments of this LinkedIn post:

linkedin.com/feed/update/ur…

[1] In fact, AI today means everything CS, and I argue that AI needs more domain experts than ever.

I really hope that AMD can spend some love on their out-of-the-box experience for inference. For example, the latest sglang v0.5.5 docker image has been broken for a whole week due to github.com/sgl-project/sg…. Maybe it's time to add some smoke tests.

Haohui Mai retweeted

🚀 End the GPU Cost Crisis Today!!!

Tired of LLMs that lock a whole GPU but leave capacity idle? Frustrated by your cluster's low utilization?

We launch kvcached, the first library for elastic GPU sharing across LLMs.

🔗 github.com/ovg-project/kv…

🧵👇 Why it matters:

Haohui Mai retweeted

How do you run FP4 models on AMD MI250/MI300 without waiting for MI350?

The CausalFlow team @wheat9 built Petit, optimized mixed-precision kernels co-designed with AMD’s MatrixCore.

Benchmarks:

🔹 1.74× faster Llama-3.3-70B inference

🔹 3.7× faster GEMM vs hipBLASLt

Open-sourced + integrated into SGLang v0.4.10. See the full blog👇

@aramh Too difficult for novice programmers, not enough knobs for experts (except having unsafe everywhere), but in general it’s still nice for average systems programming.

I'm looking for intelligent critiques of Rust (not dumb statements like "borrow checker bad").

One complaint I have is that Cell/RefCell are types instead of modes. In other words that the horizon of mutability is static instead of dynamic. It's possible to go from a mutable reference to an immutable one, but it's not fine grained enough.

Another complaint I have is that the lifetime of a reference doesn't default to its encompassing data structure.

@satnam6502 Get a MacBook Air and a powerful Linux box. That works best.

I find myself without a laptop and I am torn between a 13" MacBook Air M4 32GB 1TB vs. 14" MacBook Pro M4 Pro 32GB 1TB. It will be my main development machine (with external monitor), running SystemVerilog simulations, theorem provers like Lean4, Agda, SVA formal verification jobs using Tabby CAD from YosysHQ, ML frameworks like MLIR and OpenXLA, and some large Haskell programs. So all this points to the MacBook Pro (and the extra HDMI etc. ports are nice) but if the whole point of a laptop is to be light and portable perhaps I should get the MacBook Air and hope it has enough juice to keep me productive. In that case it might make sense to pair it with a beefy Linux machine which I can use via VS Code's remote feature (my typical mode of use recently anyway). Any advice very welcome, esp. from theorem prover and hardware CAD users.

apple.com/shop/buy-mac/m…

@tianyin_xu What a pity. It shows that the challenge of keeping systems research relevant is real. It is tough when everybody moves to “AI”…
