Whijae Roh

@whijae

Computational Biologist #CancerGenomics #GenomicMedicine #AI

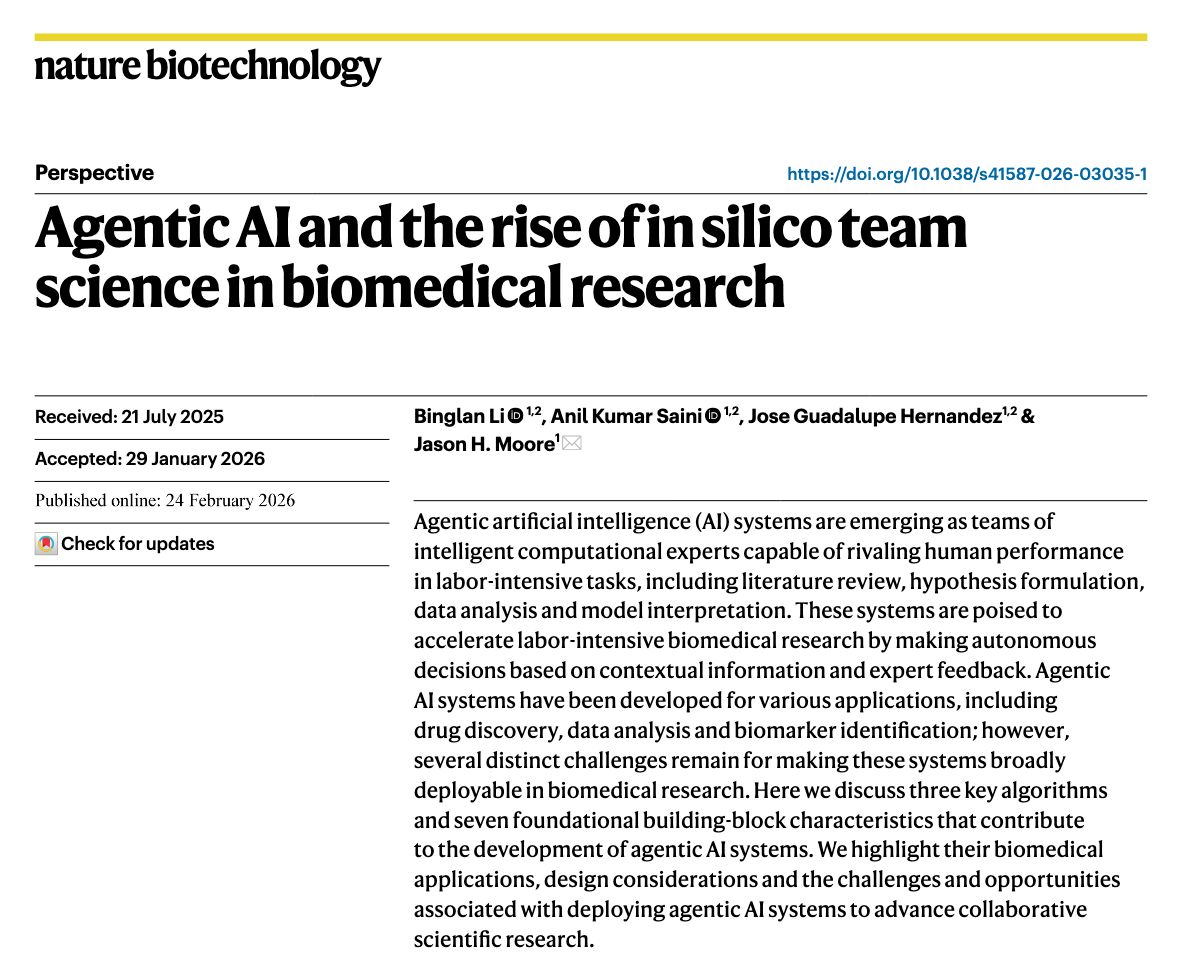

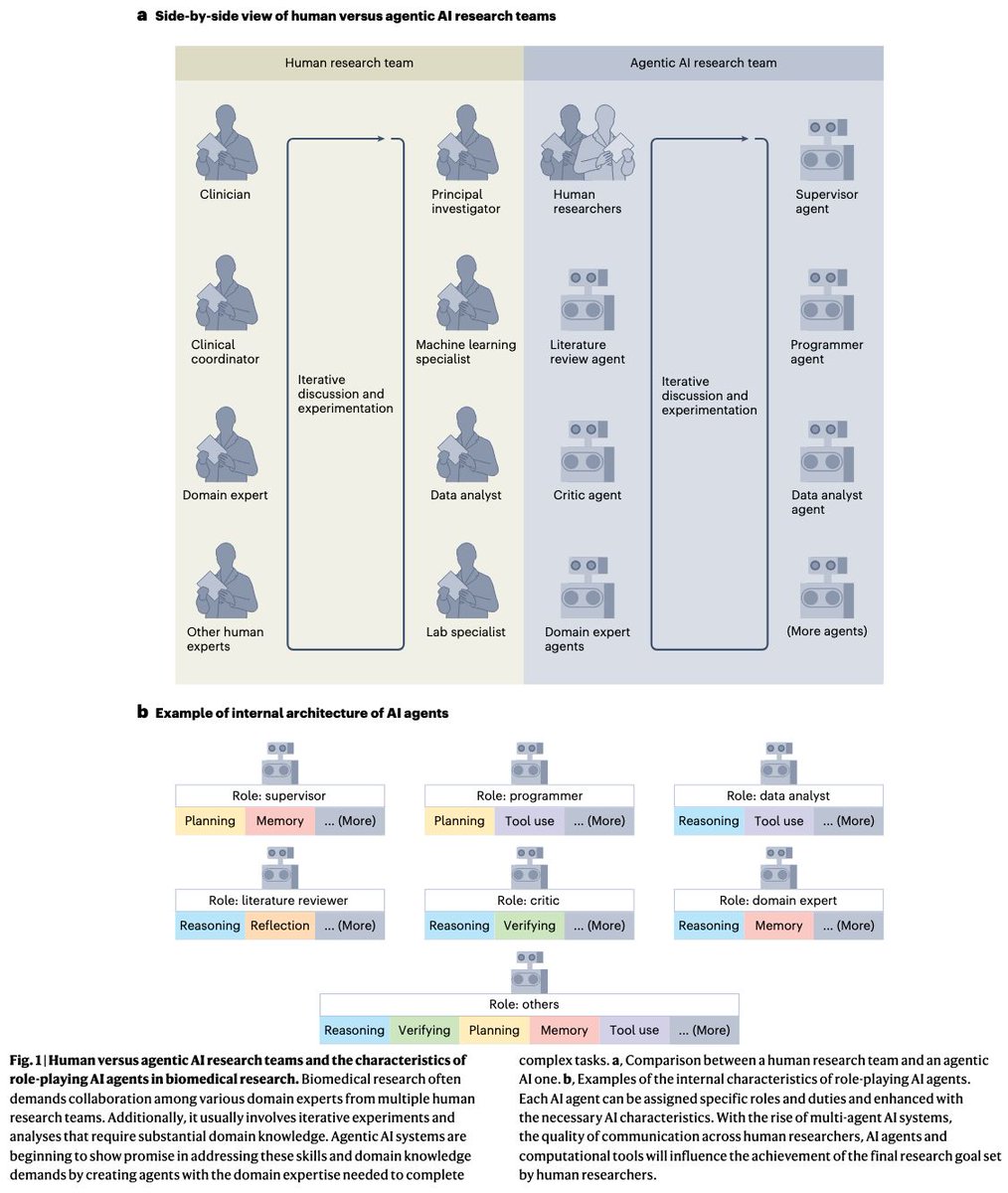

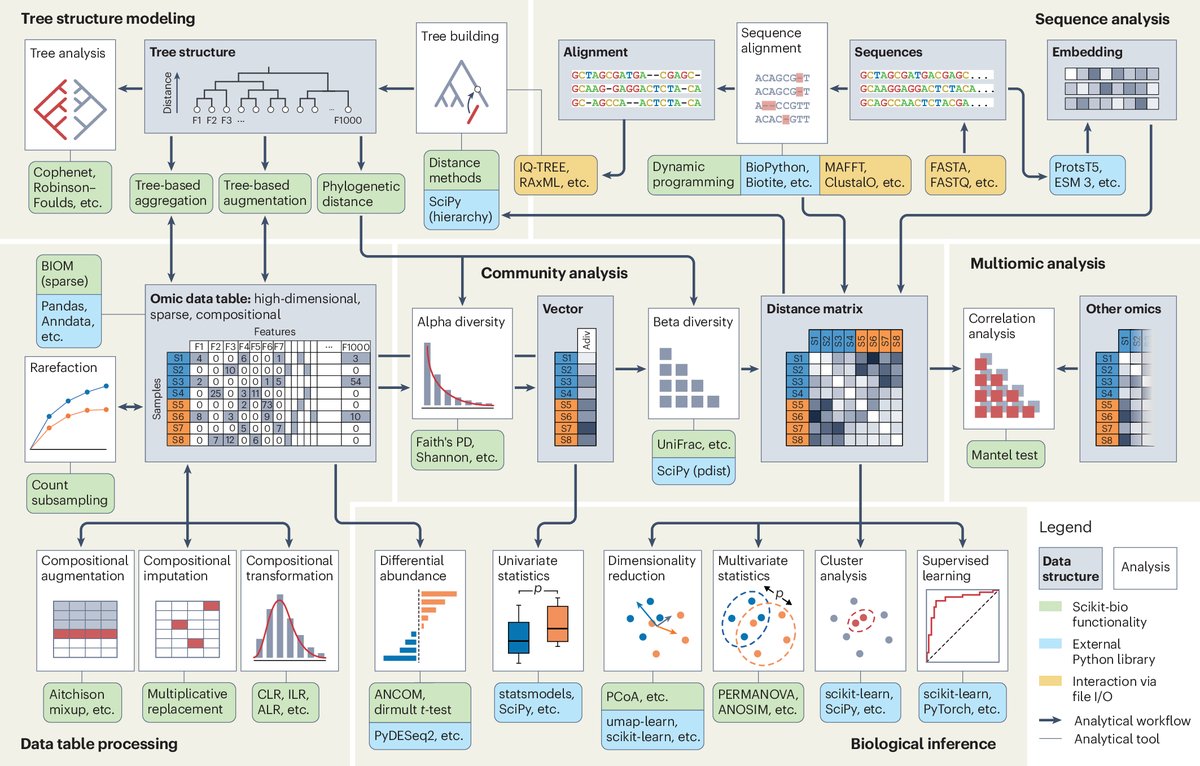

I used Claude Opus 4.5/4.6 (and a bit of Codex GPT-5.3) to port edgeR to Python. See edgePython github.com/pachterlab/edg… This allowed me to develop a single-cell DE method that extends NEBULA with edgeR Empirical Bayes. All in one week. Details in doi.org/10.64898/2026.…

There's a new kind of coding I call "vibe coding", where you fully give in to the vibes, embrace exponentials, and forget that the code even exists. It's possible because the LLMs (e.g. Cursor Composer w Sonnet) are getting too good. Also I just talk to Composer with SuperWhisper so I barely even touch the keyboard. I ask for the dumbest things like "decrease the padding on the sidebar by half" because I'm too lazy to find it. I "Accept All" always, I don't read the diffs anymore. When I get error messages I just copy paste them in with no comment, usually that fixes it. The code grows beyond my usual comprehension, I'd have to really read through it for a while. Sometimes the LLMs can't fix a bug so I just work around it or ask for random changes until it goes away. It's not too bad for throwaway weekend projects, but still quite amusing. I'm building a project or webapp, but it's not really coding - I just see stuff, say stuff, run stuff, and copy paste stuff, and it mostly works.