Patrick Mineault

@patrickmineault

NeuroAI researcher @ Amaranth Foundation, safety, open science. Previously engineer @ Google, Meta, Mila.

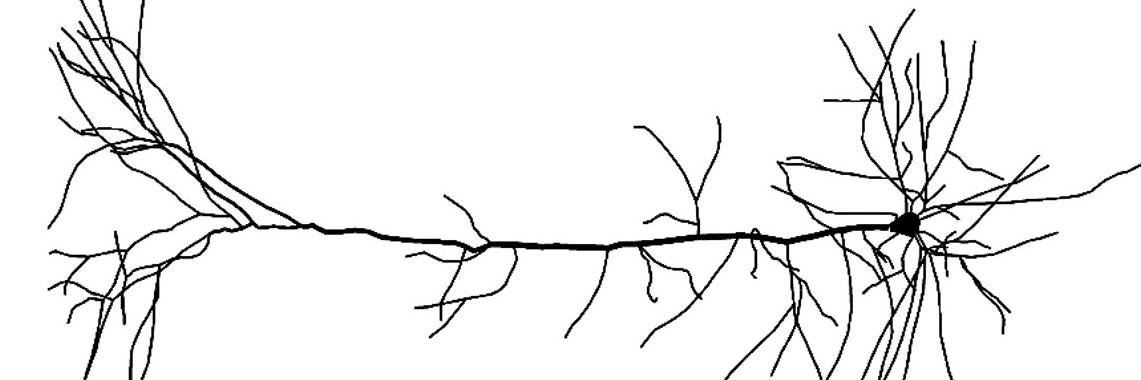

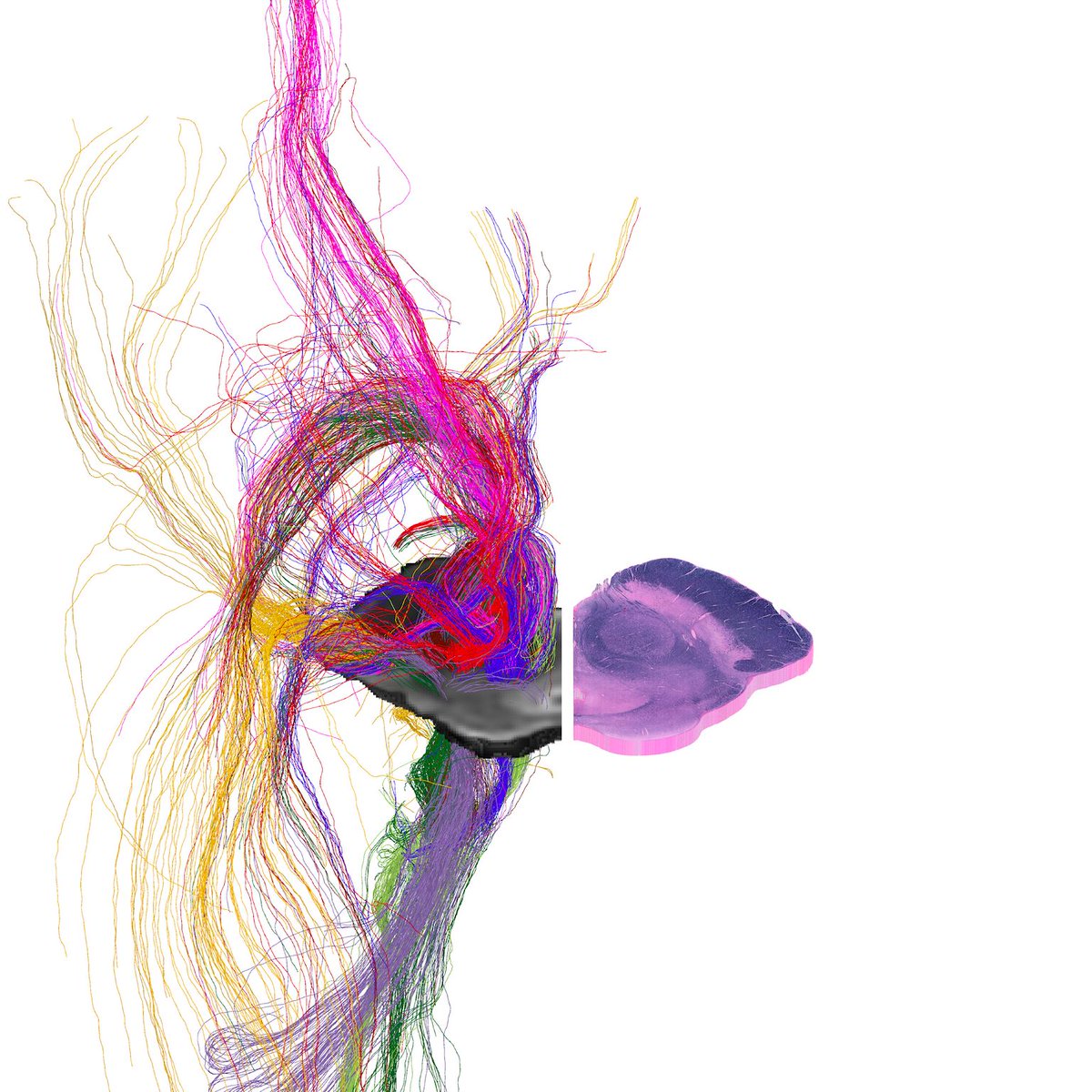

1/8 Our preprint is now a peer-reviewed paper :) Big thanks to our reviewers who pushed us to examine our results more carefully and Olivier Wyart (headquarter.paris) for the exquisite visual. science.org/doi/10.1126/sc…

New Anthropic research: Emotion concepts and their function in a large language model. All LLMs sometimes act like they have emotions. But why? We found internal representations of emotion concepts that can drive Claude’s behavior, sometimes in surprising ways.

Towards Magnanimous AGI: Before we build extremely powerful alien minds, we must understand our own minds and the mechanisms behind prosocial behavior. After years of investigating brain-based AI safety, here’s what we found and the teams we’re backing: blog.amaranth.foundation/p/towards-magn…