Pinned Tweet

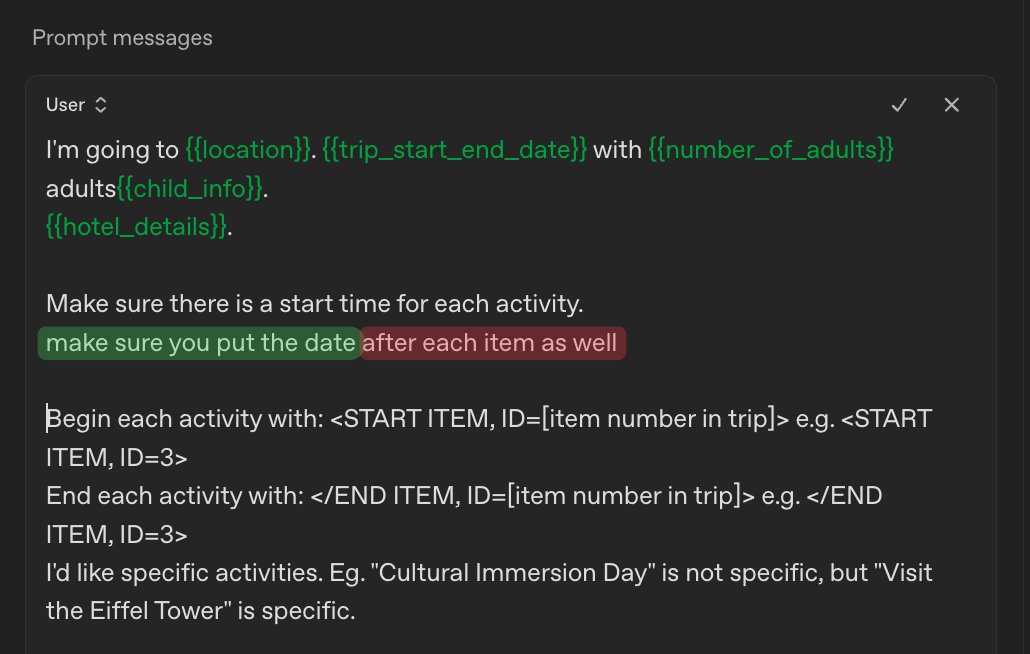

I enjoy @TheEconomist but can't read it all. Their "Your Day in Brief" section summarises key articles, but not all of them (& doesn't include the GOATs @matt_levine and @benthompson)

A great thing about ChatGPT/the GPT API is how quickly you can build tools that used to take days/weeks
