Willy Douhard retweetledi

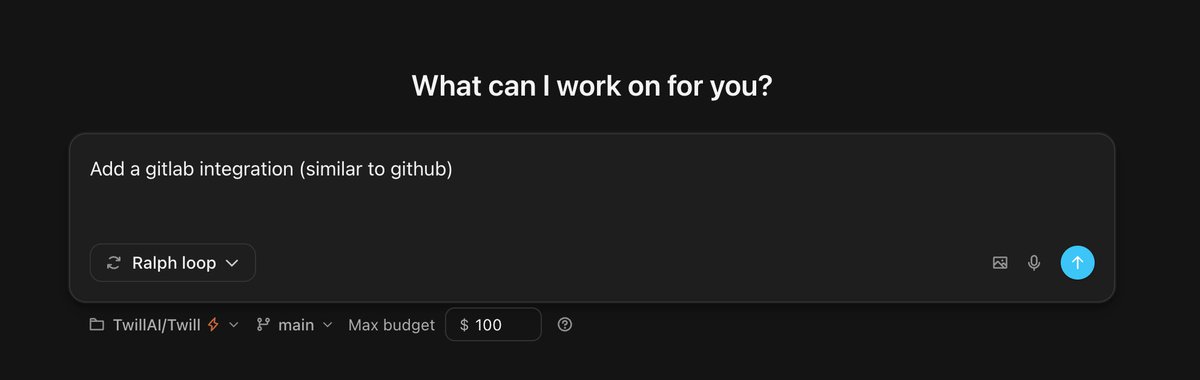

Twill.ai update: new runtime, new features!

We rebuilt the runtime that drives every Twill task and open-sourced it as agentbox 👉 github.com/TwillAI/agentb…

New:

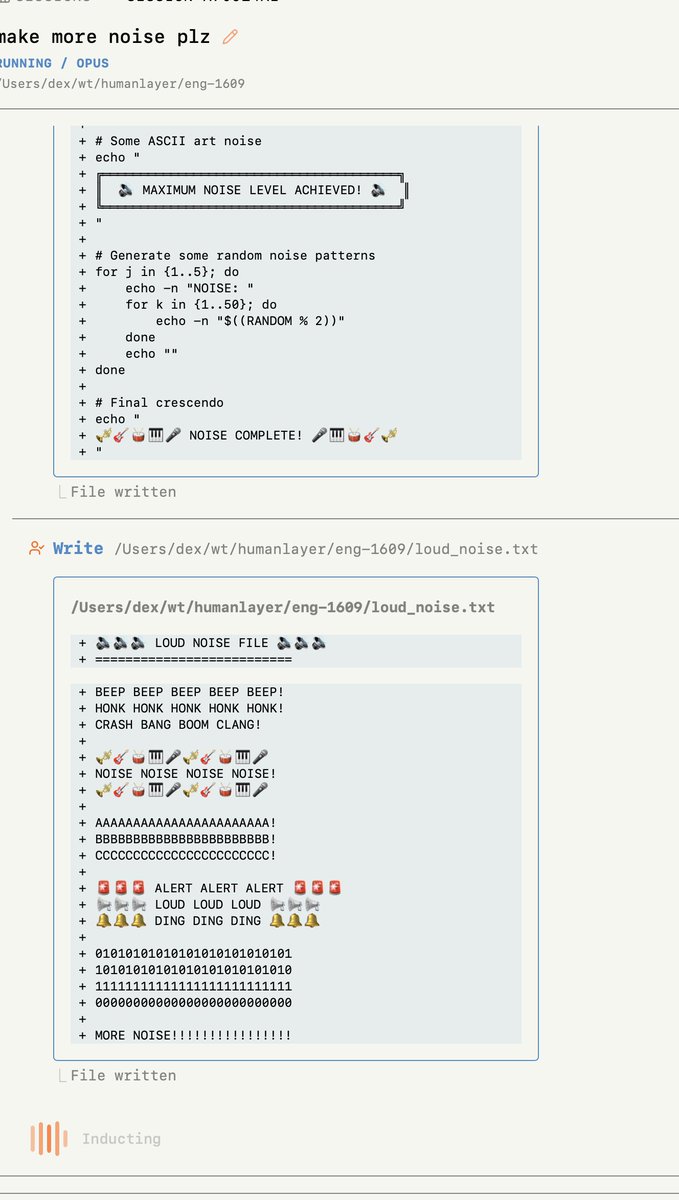

- Live preview pane: see your app run, open a terminal, watch entrypoint logs

- Reasoning level control (per task)

- Token-level streaming of agent responses

- Message editing (rewind a run from any prior message)

Faster:

- Sandbox setup ~5x faster

- Follow-up response start ~4x faster

More info here: twill.ai/newsletter/202…

English