Xiaochuan Li

51 posts

@xiaochuanlee

Ph.D. student @LTIatCMU, working with @XiongChenyan. Previously @Tsinghua_Uni @Alibaba_Qwen

Now in research preview: routines in Claude Code. Configure a routine once (a prompt, a repo, and your connectors), and it can run on a schedule, from an API call, or in response to an event. Routines run on our web infrastructure, so you don't have to keep your laptop open.

Today, we welcome the 2026 Microsoft Research Fellowship cohort, an inspiring global community of fellows and advisors helping to shape what’s next across science, technology, and society. Join us in celebrating this year’s recipients: msft.it/6013Q45bX

These contributions span the following themes:

• AI for global and societal impact
• AI fundamentals: scalable reasoning, model adaption and evaluation
• Biological and scientific modeling
• Foundational systems & infrastructure for AI
• Human-AI collaboration and interaction
• Multimodal & embodied intelligence

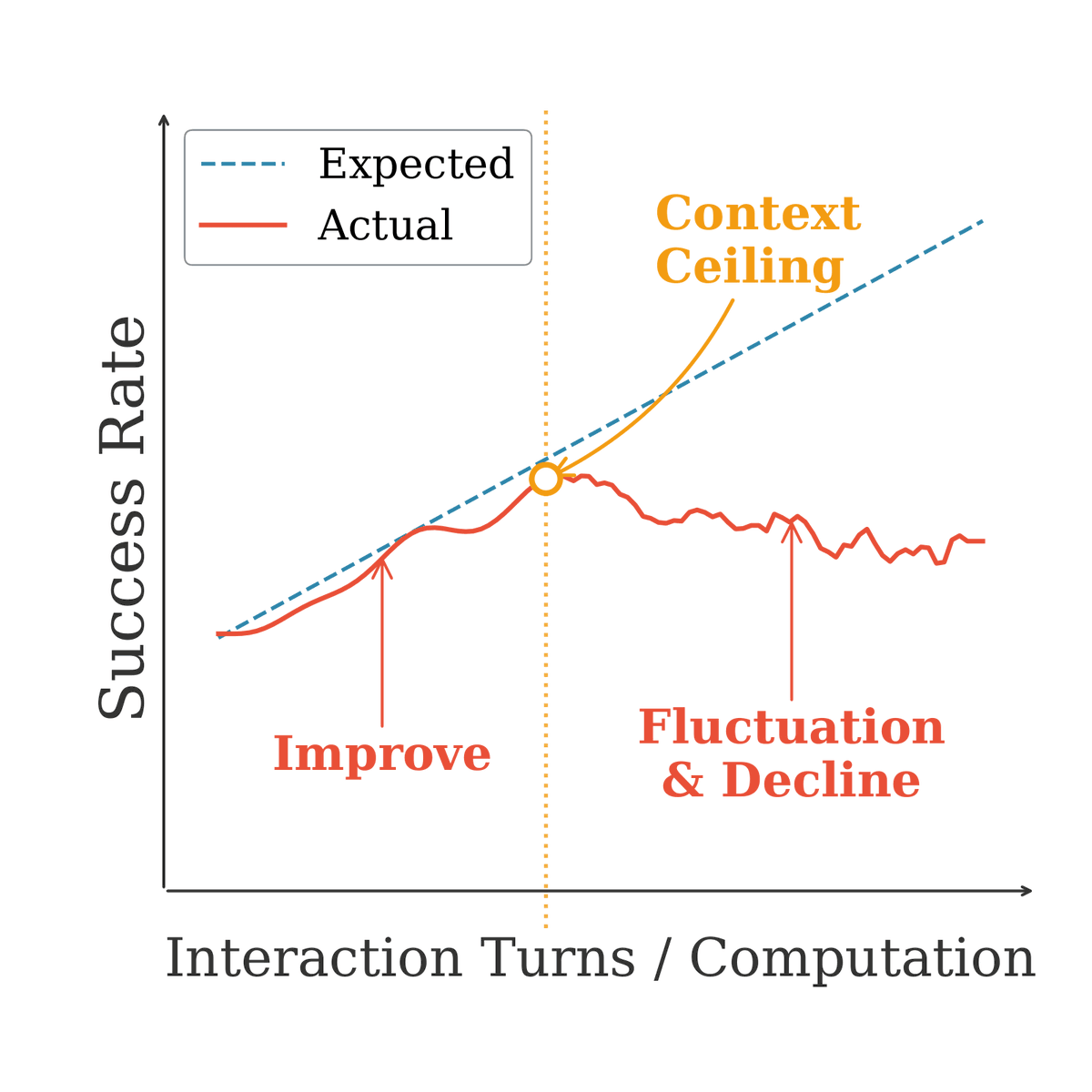

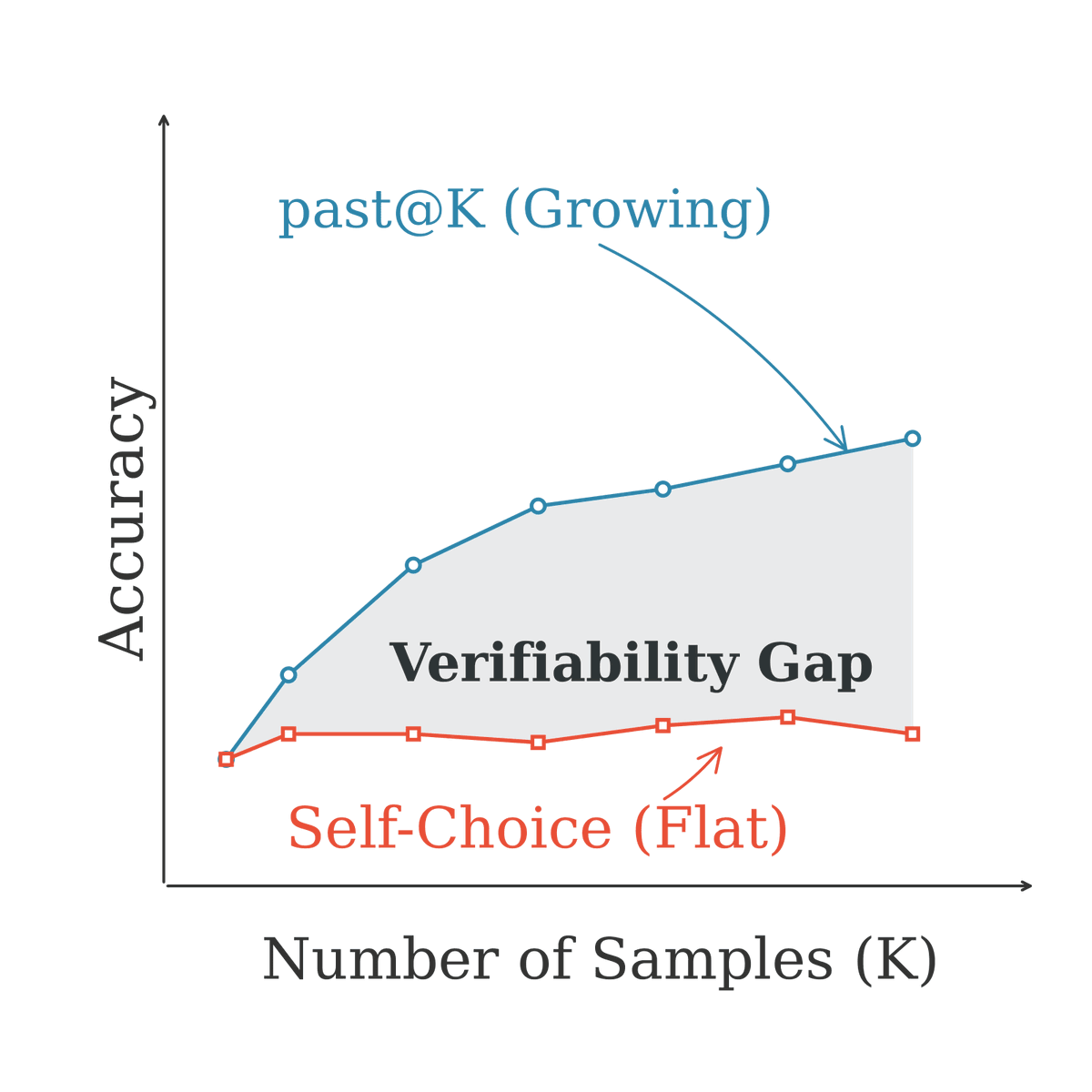

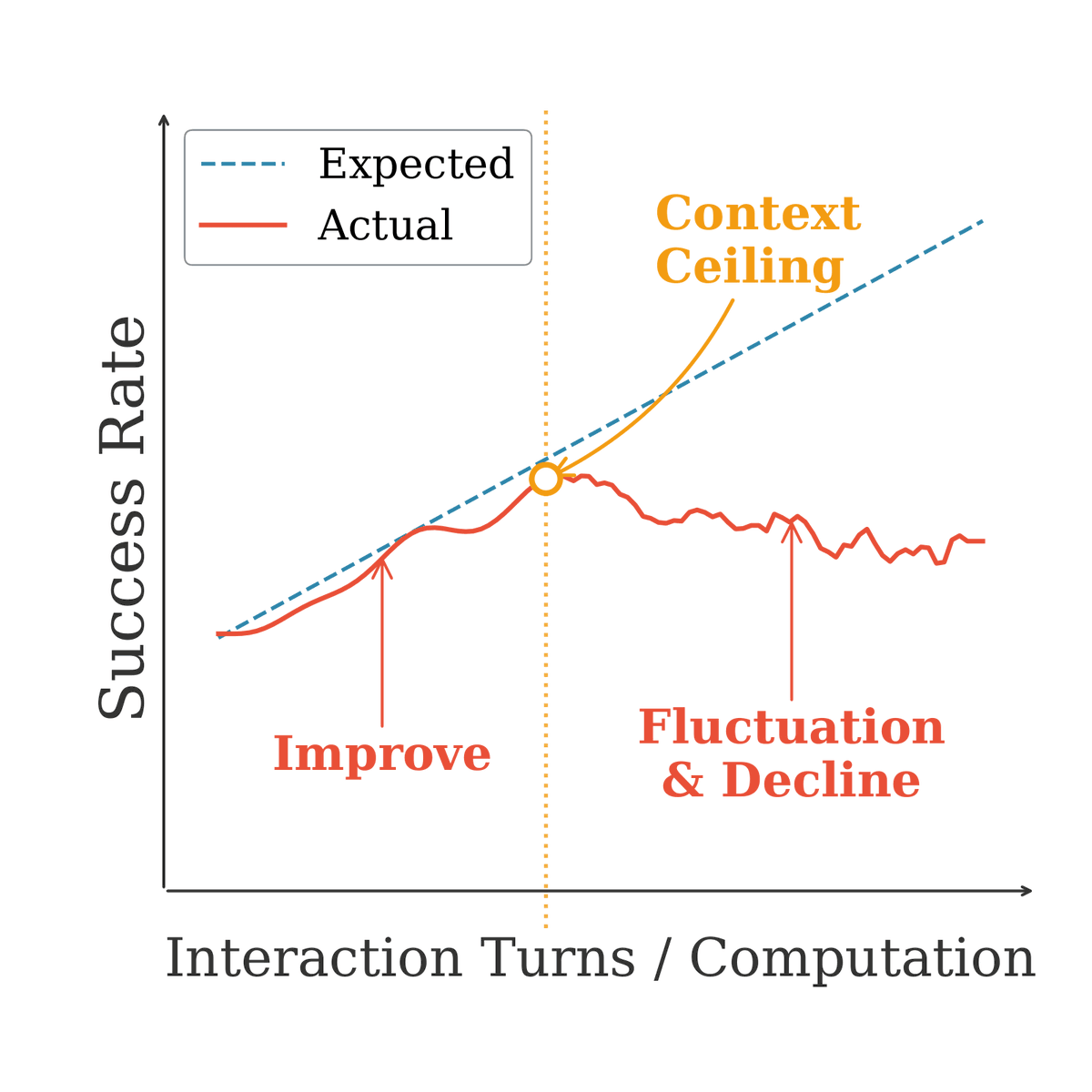

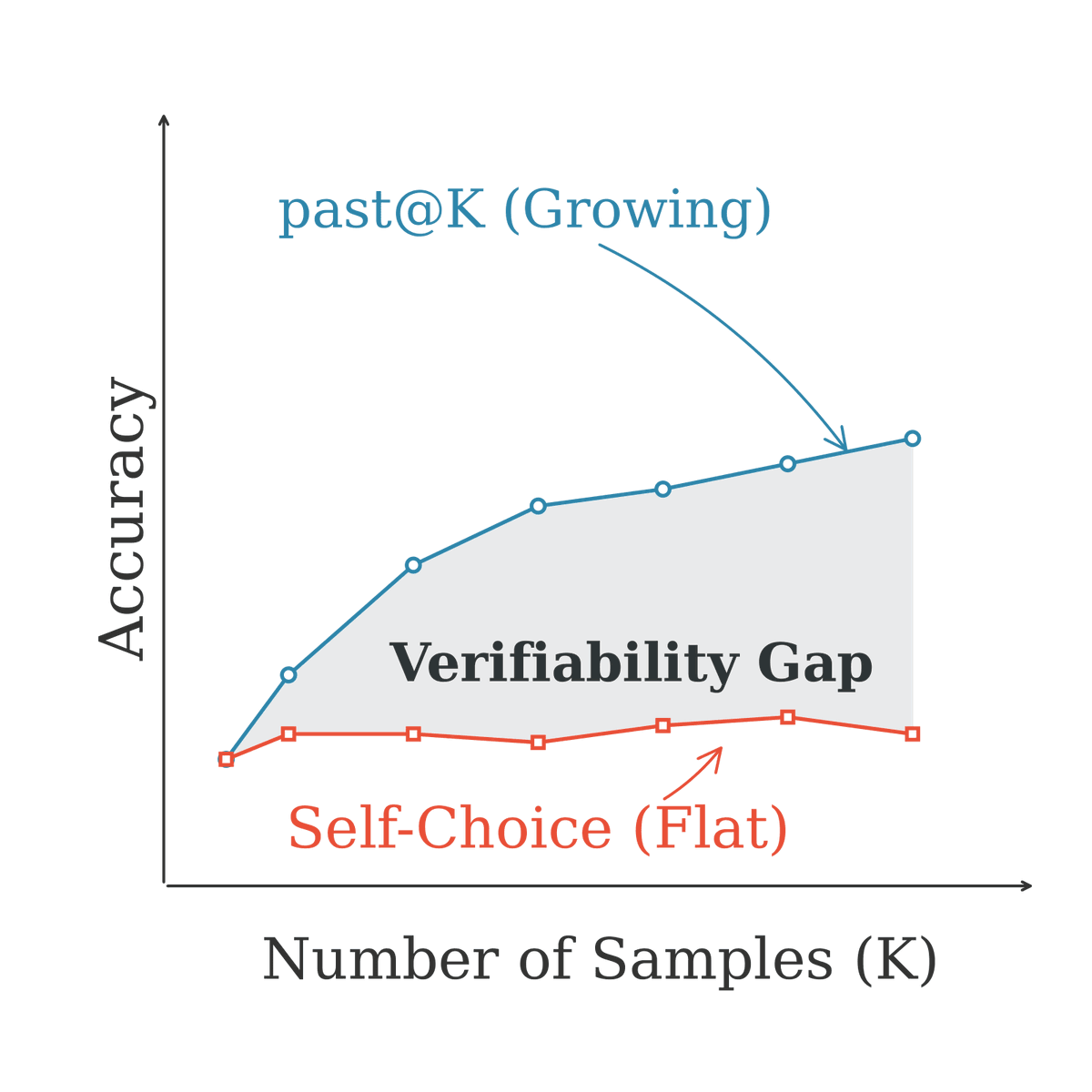

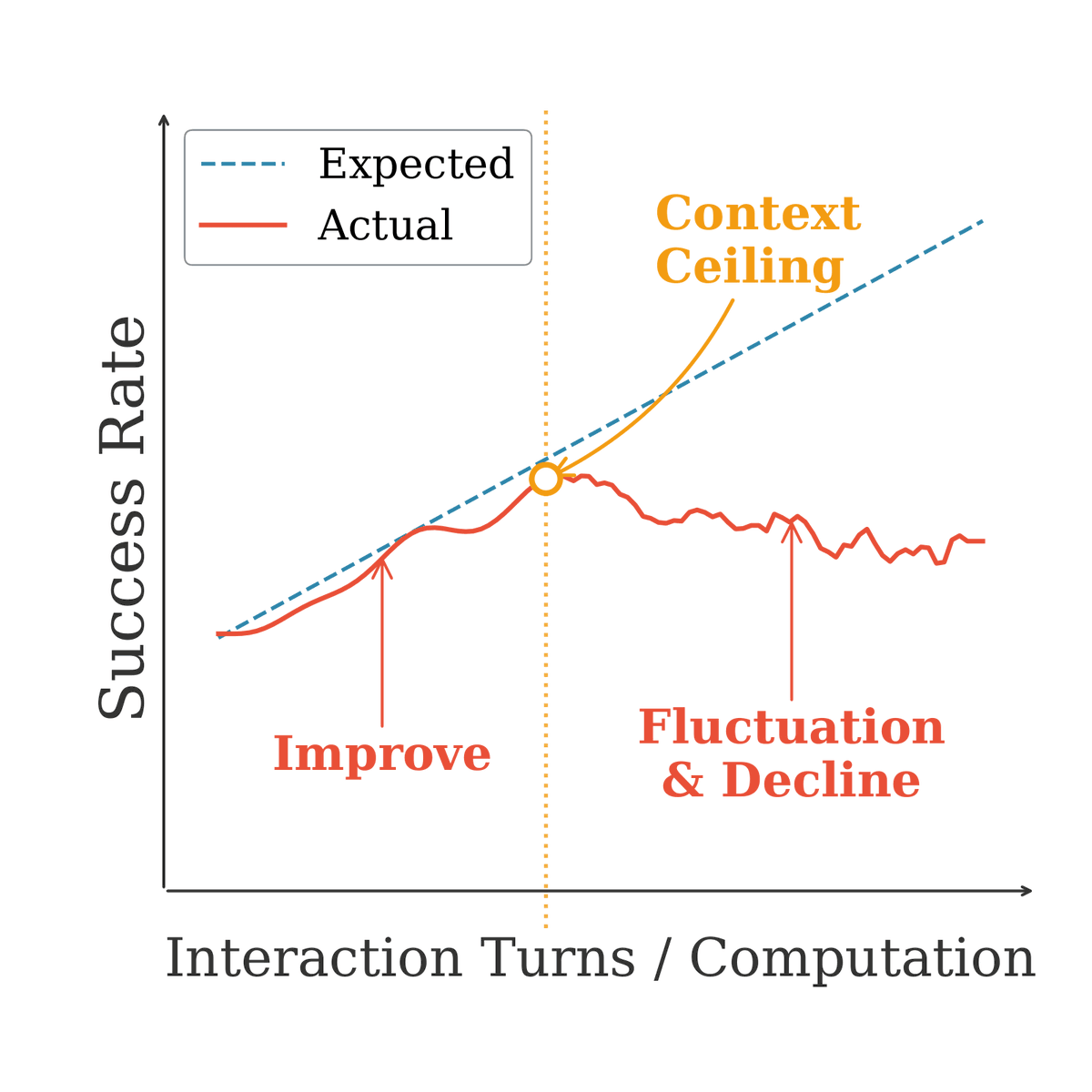

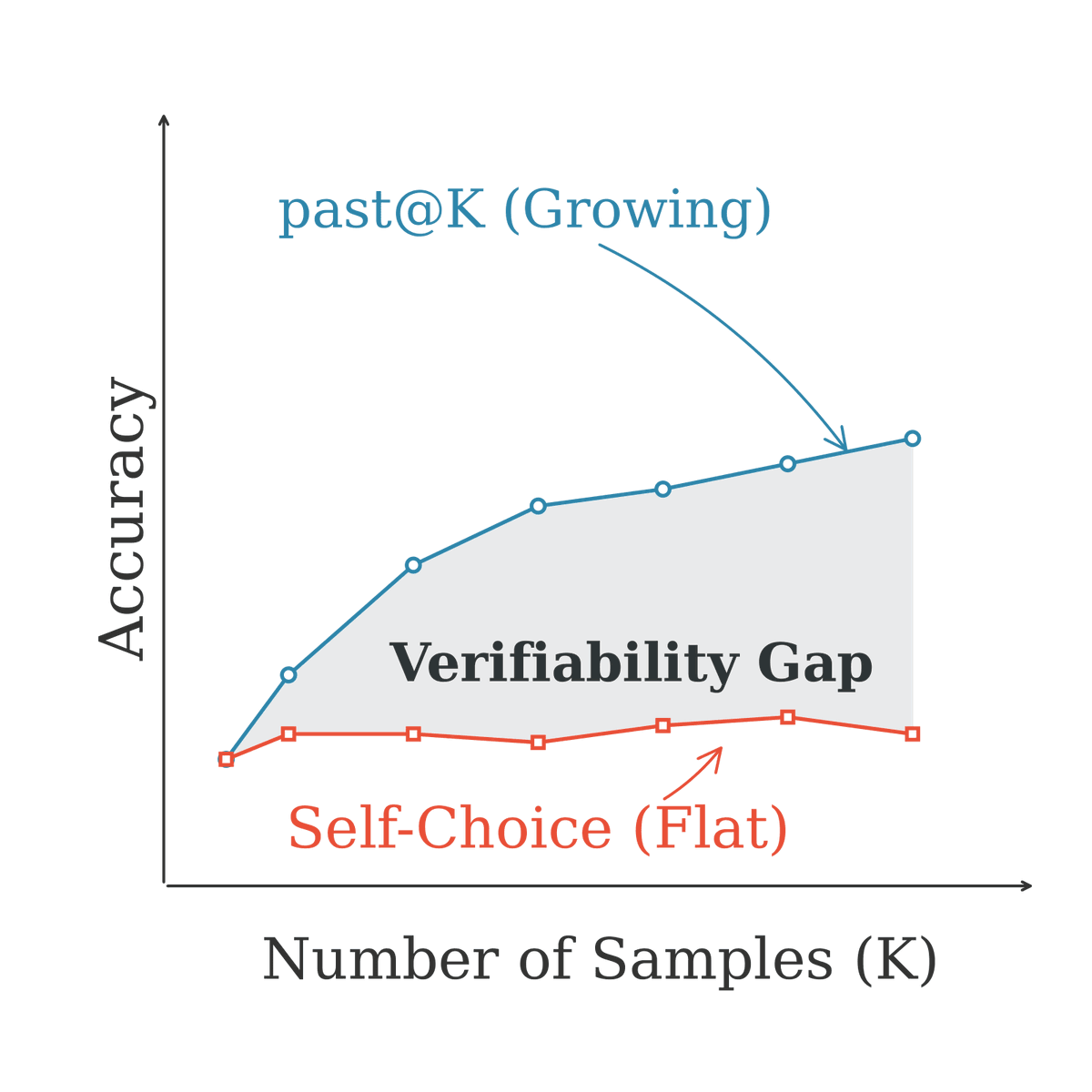

Test time scaling is the current bet for making LLM agents smarter: just give them more compute at inference. But does it actually work for general purpose agents?

New benchmark from @XiongChenyan and Xiaochuan Li at @CMU_LTI, with collaborators from @MetaAI, tested 10 leading agents across search, coding, reasoning, and tool use in a unified setting.

Two findings that should concern anyone building agent products:

1. Sequential scaling (longer interactions) hits a 'context ceiling' around 96K to 112K tokens. Beyond that, agents destabilize. More rounds of interaction make them worse, not better.

2. Parallel scaling (sampling multiple trajectories) looks good on paper (pass@K improves), but agents cannot reliably pick their own best answer. The 'verification gap' means real world gains are minimal.

Models also showed 10 to 30% performance drops just from moving to a general agent setting vs. domain specific benchmarks.

Source: arXiv 2602.18998
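To make the second finding concrete, here is a minimal Python sketch of how pass@K can look strong while the answer an agent actually picks does not. It uses the standard unbiased pass@k estimator; the function names, the toy data, and the definition of the gap as "mean pass@K minus self-selected accuracy" are assumptions made for illustration, not the benchmark's own code, numbers, or metric definition.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k: probability that at least one of k trajectories,
    drawn without replacement from n sampled ones (c of them correct),
    is correct."""
    if not 0 < k <= n:
        raise ValueError("k must satisfy 0 < k <= n")
    if n - c < k:
        # Fewer than k incorrect samples, so any draw of k contains a correct one.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def selected_accuracy(tasks) -> float:
    """Fraction of tasks where the trajectory the agent chose is correct."""
    return sum(outcomes[choice] for outcomes, choice in tasks) / len(tasks)


def verification_gap(tasks, k: int) -> float:
    """Hypothetical gap metric: mean pass@k minus self-selected accuracy."""
    mean_pass = sum(pass_at_k(len(o), sum(o), k) for o, _ in tasks) / len(tasks)
    return mean_pass - selected_accuracy(tasks)


# Made-up toy data: (per-trajectory correctness, index the agent picked).
TOY_TASKS = [
    ([True, False, False, False], 1),   # a correct trajectory exists, agent picks a wrong one
    ([False, True, True, False], 1),    # agent picks a correct one
    ([False, False, False, False], 0),  # no correct trajectory at all
    ([True, True, False, False], 2),    # agent picks a wrong one
]

if __name__ == "__main__":
    print("mean pass@4:", sum(pass_at_k(4, sum(o), 4) for o, _ in TOY_TASKS) / 4)
    print("selected accuracy:", selected_accuracy(TOY_TASKS))
    print("gap:", verification_gap(TOY_TASKS, 4))
```

In this toy setup three of the four tasks have at least one correct trajectory, yet the agent's own pick is correct on only one of them, which is the pattern the post describes.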

I (finally) put together a new LLM Architecture Gallery that collects the architecture figures all in one place! sebastianraschka.com/llm-architectu…

🤖Clawbots just moved into Embodied City inside SimWorld. They wake up. Go to work. Run errands. Talk to each other. All inside a shared physical world. This isn’t scripted — it’s autonomous agents living a daily routine. And you can spin up your own agent in minutes.