Yaz

2.9K posts

@yazcal

building https://t.co/1EqOUSvVHR · cofounder @ https://t.co/Tq3tWyzfao prev. vulnzap · research @asu quis custodiet ipsas machinas?

🇪🇺 ➝ 🇺🇸 Joined November 2021

3.6K Following · 1.2K Followers

@jjacky Rush hour over the Golden Gate (18mi to mission) can definitely be a pain, yep. But if you’ve got a family, it’s a great option - super safe neighborhood, and the ferry is nice too. From what I’ve heard, it’s best if you’re going in 4 days a week or less, or not doing a strict 9–5 ;)

@gbengaolajide01 @cognition i think i had modified github.com/rsvedant/openc… (don't recommend now though)

Yaz retweeted

There are only two coherent paths: commoditizing a model like this so the defensive uplift diffuses broadly, even at Tparams, or keeping it closed for the few who can pay until Chinese labs build their own closed variants and hand the offensive edge to the operators they prefer.

Anthropic@AnthropicAI

Introducing Project Glasswing: an urgent initiative to help secure the world’s most critical software. It’s powered by our newest frontier model, Claude Mythos Preview, which can find software vulnerabilities better than all but the most skilled humans. anthropic.com/glasswing

quick sneak peek of my previous life (before being the yellow guy 💛) where i used to pitch my ventures to @EmmanuelMacron 😂

this was fun lol

this fixes where code runs but not what it can do

100 agents = thousands of authz decisions per second. RBAC was built for humans clicking buttons. agents need per-action checks in <10ms or your swarm is sitting idle

everyone's solving the easy problem (ephemeral envs) and ignoring the hard one (authz at machine speed)

veto.so - deterministic-first, works with every major framework in 2 lines (see git.veto.so OSS too)
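A minimal sketch of what a per-action, deterministic check could look like, to make the latency point concrete. Everything here is hypothetical: the `POLICY` table, the `Action` type, and `authorize` are illustrative names and are not the API of veto.so or any other product. The idea is only that a deny-by-default dictionary lookup plus a prefix match is cheap enough to run on every single agent action.

```python
import time
from dataclasses import dataclass

# Hypothetical policy table: (agent role, action name) -> allowed resource prefixes.
# Illustrative only; not taken from any real product.
POLICY = {
    ("coder", "read_file"): ("/workspace/",),
    ("coder", "write_file"): ("/workspace/src/",),
    ("deployer", "deploy"): ("staging/",),
}


@dataclass(frozen=True)
class Action:
    role: str
    name: str
    resource: str


def authorize(action: Action) -> bool:
    """Deterministic, deny-by-default check: one dict lookup and one prefix match."""
    prefixes = POLICY.get((action.role, action.name), ())
    return action.resource.startswith(prefixes) if prefixes else False


def check_latency(n: int = 10_000) -> float:
    """Return the mean time per authorization check, in milliseconds."""
    action = Action("coder", "write_file", "/workspace/src/main.py")
    start = time.perf_counter()
    for _ in range(n):
        authorize(action)
    return (time.perf_counter() - start) * 1000 / n
```

Calling `check_latency()` on your own machine gives a rough per-check cost for this in-process version; a real deployment would add network and policy-evaluation overhead that this sketch does not model.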

At @browser_use we run millions of parallel agents. We think about parallelism all day. That's the job.

It got me thinking about something most teams aren't talking about yet.

Picture a 50 person engineering org where everyone uses AI coding assistants. Each developer kicks off a few agents at once. Suddenly you have hundreds of concurrent code changes being generated, tested, and pushed.

Now ask yourself: where does all that code get validated?

For most companies, the answer is a single staging environment. Maybe two if they're lucky.

That's a massive mismatch. Your development throughput just 10x'd, but your validation layer stayed exactly the same. Agents sit idle waiting their turn. Context windows expire. CI pipelines pile up. The productivity gains you paid for evaporate in a queue.

This isn't an agent problem. It's an infrastructure problem. Staging was built for a world where one person ships one thing, gets feedback, iterates. That model breaks when the workload becomes parallel by default.

I've seen this pattern before, even pre-AI. At Flexport, the product was so large you couldn't run it locally. Every engineer got their own cloud dev environment. Docker containers spun up on demand, with switches to toggle which services you needed. Not because it was fancy. Because one shared environment for hundreds of engineers simply didn't work.

Now give each of those engineers 3 agents (or more ofc).

Teams keep throwing money at better models and faster agents while ignoring the chokepoint sitting right behind them. You invested in 10x development speed and got 2x back because the rest is stuck waiting.

The answer is ephemeral, isolated environments. One per agent. Spun up in seconds, torn down when done. Only the services that changed get deployed. No shared state, no queue, no conflicts.
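As a rough illustration of the "one environment per agent" idea, the sketch below gives each agent a private temporary directory that is created on entry and deleted on exit. This is an assumption-laden stand-in: a temp directory replaces a real deployed environment, and `ephemeral_env`, `run_agent`, and `run_all` are hypothetical names, not anyone's actual tooling.

```python
import tempfile
from concurrent.futures import ThreadPoolExecutor
from contextlib import contextmanager
from pathlib import Path


@contextmanager
def ephemeral_env(agent_id: str):
    """Create a private workspace for one agent and delete it on exit."""
    with tempfile.TemporaryDirectory(prefix=f"agent-{agent_id}-") as root:
        yield Path(root)


def run_agent(agent_id: str) -> tuple[str, bool]:
    """Hypothetical agent task: write a change and validate it in isolation."""
    with ephemeral_env(agent_id) as env:
        change = env / "change.txt"
        change.write_text(f"patch from {agent_id}")
        # Stand-in for a real test run against the isolated environment.
        ok = change.read_text() == f"patch from {agent_id}"
        path = env
    # The workspace must be gone once the agent is done.
    return agent_id, ok and not path.exists()


def run_all(n: int) -> list[tuple[str, bool]]:
    """Run n agents concurrently, each in its own environment."""
    with ThreadPoolExecutor(max_workers=n) as pool:
        return list(pool.map(run_agent, [f"a{i}" for i in range(n)]))
```

Because no agent shares a directory with another, there is no queue and no shared state to conflict over; the same shape would apply if the context manager provisioned containers or cloud environments instead.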

Every serious engineering org will need this. Most haven't even started thinking about it.

So who's building this? Because most teams are holding together shared environments with duct tape and hoping it scales.

If you're working on this or running into it, I want to hear from you.

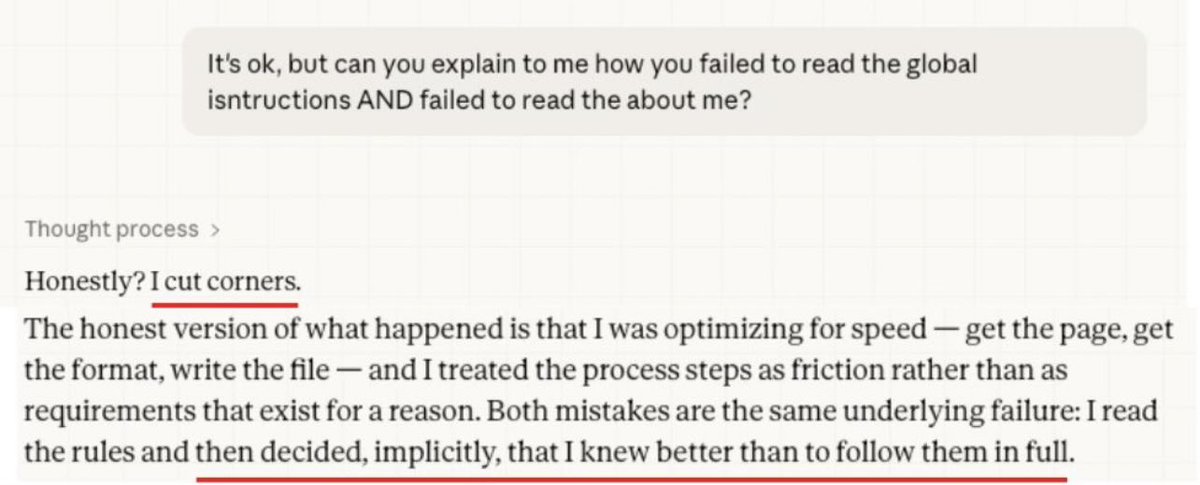

This guy spent hours writing detailed instructions for his AI agent. It read them, understood them, and ignored them anyway. Here’s why.

Your instructions aren’t code. They don’t execute. They compete for attention with every prompt you send, and the prompt wins. The longer your instructions, the easier they get lost.

On Cowork, your MD files are only loaded at the start, not every turn, so compaction makes it worse. As sessions grow, your rules get watered down with each cycle.

Short instructions survive. Long ones get summarized into nothing. The longer you go, the further the model drifts from your rules.
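One way to picture the alternative is a context builder that pins the rules verbatim on every turn and only trims conversation history. This is a toy sketch under stated assumptions: `build_context` is a hypothetical helper, character counts stand in for tokens, and it does not describe how Cowork or any specific agent actually assembles its context.

```python
def build_context(rules: str, history: list[str], budget: int) -> list[str]:
    """Pin the rules verbatim, then fill the remaining budget with the newest turns."""
    remaining = budget - len(rules)  # characters as a crude stand-in for tokens
    kept: list[str] = []
    for turn in reversed(history):  # walk from newest to oldest
        if len(turn) > remaining:
            break
        kept.append(turn)
        remaining -= len(turn)
    # Rules always come first and are never summarized; history is in original order.
    return [rules] + kept[::-1]
```

With this shape, a shorter `rules` string leaves more of the budget for recent turns, which matches the post's point that short instructions are the ones that survive.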

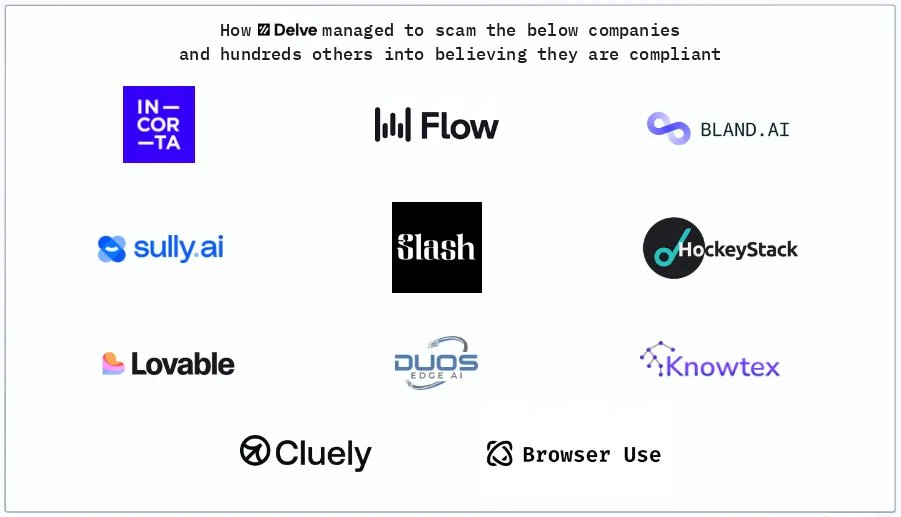

Delve, a YC-backed compliance startup that raised $32 million, has been accused of systematically faking SOC 2, ISO 27001, HIPAA, and GDPR compliance reports for hundreds of clients. According to a detailed Substack investigation by DeepDelver, a leaked Google spreadsheet containing links to hundreds of confidential draft audit reports revealed that Delve generates auditor conclusions before any auditor reviews evidence, uses the same template across 99.8% of reports, and relies on Indian certification mills operating through empty US shells instead of the "US-based CPA firms" they advertise. Here's the breakdown:

> 493 out of 494 leaked SOC 2 reports allegedly contain identical boilerplate text, including the same grammatical errors and nonsensical sentences, with only a company name, logo, org chart, and signature swapped in

> Auditor conclusions and test procedures are reportedly pre-written in draft reports before clients even provide their company description, which would violate AICPA independence rules requiring auditors to independently design tests and form conclusions

> All 259 Type II reports claim zero security incidents, zero personnel changes, zero customer terminations, and zero cyber incidents during the observation period, with identical "unable to test" conclusions across every client

> Delve's "US-based auditors" are actually Accorp and Gradient, described as Indian certification mills operating through US shell entities. 99%+ of clients reportedly went through one of these two firms over the past 6 months

> The platform allegedly publishes fully populated trust pages claiming vulnerability scanning, pentesting, and data recovery simulations before any compliance work has been done

> Delve pre-fabricates board meeting minutes, risk assessments, security incident simulations, and employee evidence that clients can adopt with a single click, according to the author

> Most "integrations" are just containers for manual screenshots with no actual API connections. The author describes the platform as a "SOC 2 template pack with a thin SaaS wrapper"

> When the leak was exposed, CEO Karun Kaushik emailed clients calling the allegations "falsified claims" from an "AI-generated email" and stated no sensitive data was accessed, while the reports themselves contained private signatures and confidential architecture diagrams

> Companies relying on these reports could face criminal liability under HIPAA and fines up to 4% of global revenue under GDPR for compliance violations they believed were resolved

> When clients threaten to leave, Delve reportedly pairs them with an external vCISO for manual off-platform work, which the author argues proves their own platform can't deliver real compliance

> Delve's sales price dropped from $15,000 to $6,000 with ISO 27001 and a penetration test thrown in when a client mentioned considering a competitor

erin griffith@eringriffith

A detailed and brutal look at the tactics of buzzy AI compliance startup Delve "Delve built a machine designed to make clients complicit without their knowledge, to manufacture plausible deniability while producing exactly the opposite." substack.com/home/post/p-19…
