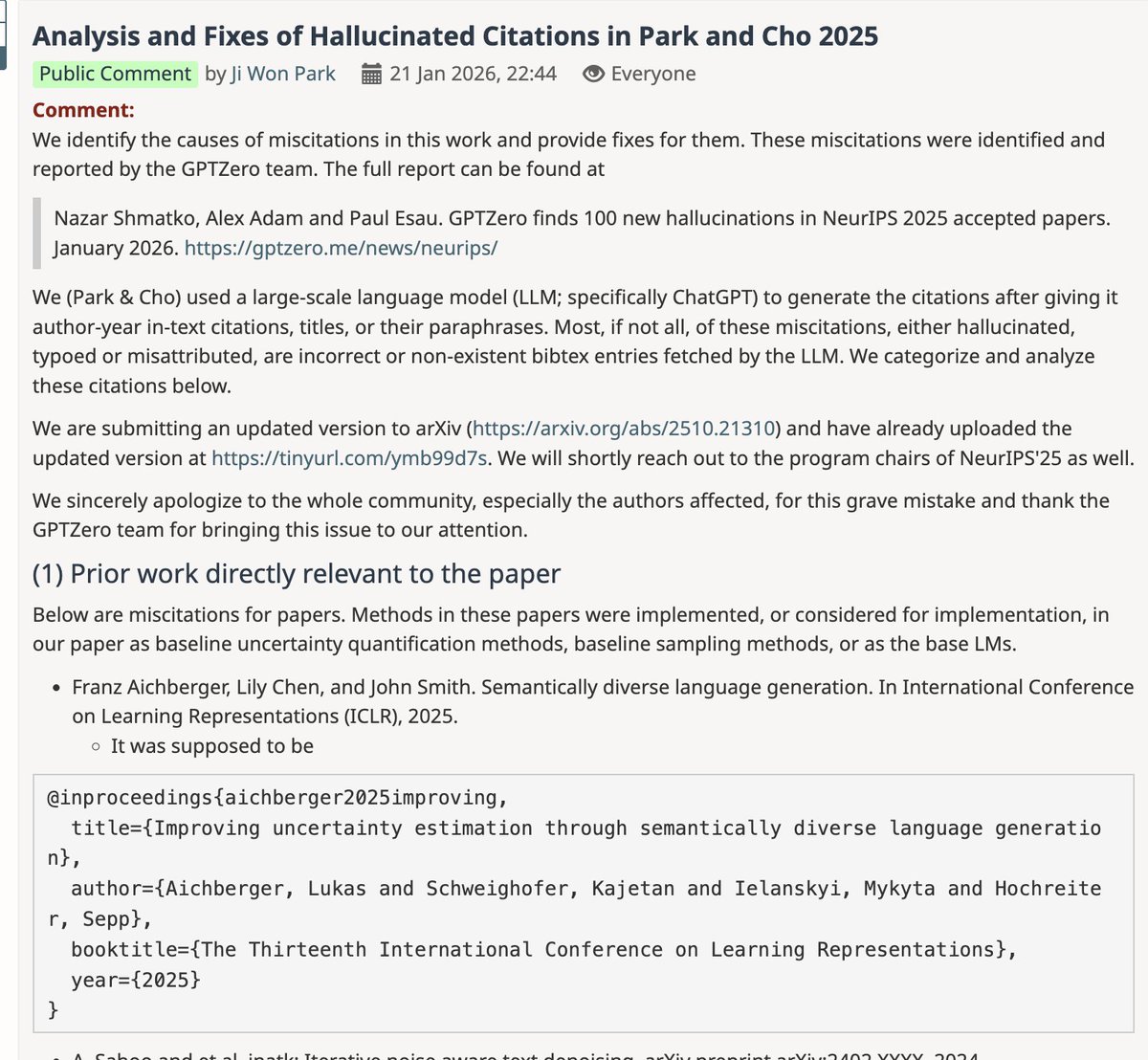

Yunmin Cha retweeted

New #NVIDIA Paper

We introduce Motive, a motion-centric, gradient-based data attribution method that traces which training videos help or hurt video generation.

By isolating temporal dynamics from static appearance, Motive identifies which training videos shape motion in video generation.

🔗 research.nvidia.com/labs/sil/proje…

1/10
