Ying Shan

1.5K posts

@yshan2u

Distinguished Scientist @TencentGlobal, Founder of PCG ARC Lab. Formerly @Microsoft, @MSFTResearch. Views are my own.

🚨 BREAKING: Researchers just confirmed something the AI industry does not want you to know. AI is making professionals worse at their jobs when the AI is not available. Not slower. Not less confident. Measurably worse.
A study published in The Lancet Gastroenterology and Hepatology tracked doctors performing colonoscopies across four hospitals in Poland after AI assistance was introduced into the procedure. Then the researchers measured what happened when the doctors performed the same procedure without AI help. Adenoma detection rates dropped from 28.4% to 22.4%. A six-point absolute decline. The AI was not present. The doctors were. But continuous reliance on the AI had eroded the observational skill the procedure requires. Real patients with real polyps were missed because the doctors had stopped practicing the part of their job the AI had been doing.
This is not an isolated finding. Researchers at Microsoft and Carnegie Mellon University surveyed 319 knowledge workers and presented the results at CHI 2025, the premier academic conference on human-computer interaction. Workers with higher confidence in AI tools reported lower confidence in their own critical thinking. The pattern was consistent. The more someone relied on AI to produce outputs, the less cognitive effort they reported applying to the work itself.
A separate study from SBS Swiss Business School published in January 2025 surveyed users across age groups and found a statistically significant negative correlation between AI usage frequency and critical thinking scores. Younger users were more affected than older ones. The MIT Media Lab reached the same conclusion in a study on cognitive atrophy. A study published in October 2025 in Computers in Human Behavior found that AI use makes people overestimate their own cognitive performance. They get smarter outputs and dumber self-awareness simultaneously.
The mechanism has a name. Cognitive offloading. The brain stops practicing tasks it has delegated to a system. Active skills become passive ones. The AI performs the task. The human approves the output. Over time the human loses the ability to perform the task without the AI.
The Lancet study made this visible because the stakes were measurable. A doctor either finds the polyp or does not. But the same dynamic is happening across every professional field where AI has taken over routine cognitive work. UX designers reported it for prototyping and bias detection. Cybersecurity analysts reported it for threat reasoning. Knowledge workers reported it for analysis and synthesis.
The implication is structural. Entry-level roles historically existed not just to produce output but to develop judgment. The junior analyst ran the numbers because doing so taught them what the numbers meant. The junior associate drafted the brief because doing so taught them how arguments are constructed. AI is absorbing those tasks at exactly the point where the next generation of professionals would normally be building the skills they need at the senior level. There is a direct line between the Lancet study and the Anthropic finding that young worker hiring in AI-exposed fields has dropped 14%. The tasks are not being practiced. The judgment is not being developed.
The researchers are not arguing against AI. They are documenting a specific harm that does not show up in any productivity metric. The output looks better. The human producing it has gotten worse. If you have been using AI for the work you used to do yourself, the studies suggest you are not just saving time. You are losing the ability to do that work without it.
Sources:
The Lancet Gastroenterology and Hepatology, 2025. PDF: thelancet.com/journals/langa…
Microsoft and Carnegie Mellon University, CHI 2025. PDF: microsoft.com/en-us/research…
SBS Swiss Business School, Societies Journal, January 2025. PDF: mdpi.com/2075-4698/15/1…
Computers in Human Behavior, October 2025. DOI: doi.org/10.1016/j.chb.…

🤯 BREAKING: Researchers just mathematically proved that AI layoffs will collapse the economy, and every CEO already knows it.
The AI Layoff Trap. A game theory paper from UPenn + Boston University is glaringly important! 100K+ tech layoffs in 2025. 80% of US workers exposed. And no market force can stop it.
→ Every company fires workers to cut costs
→ Every fired worker stops buying products
→ Revenue collapses across every sector
→ The companies that fired everyone go bankrupt
It's a Prisoner's Dilemma with math behind it. Automate and you survive short-term. Don't automate and your competitor kills you. But everyone automating destroys the demand that makes all companies viable.
UBI (universal basic income) won't fix it. Profit taxes won't fix it. The researchers found only one solution: a Pigouvian automation tax (a "robot tax"). The AI trap on the economy is here!

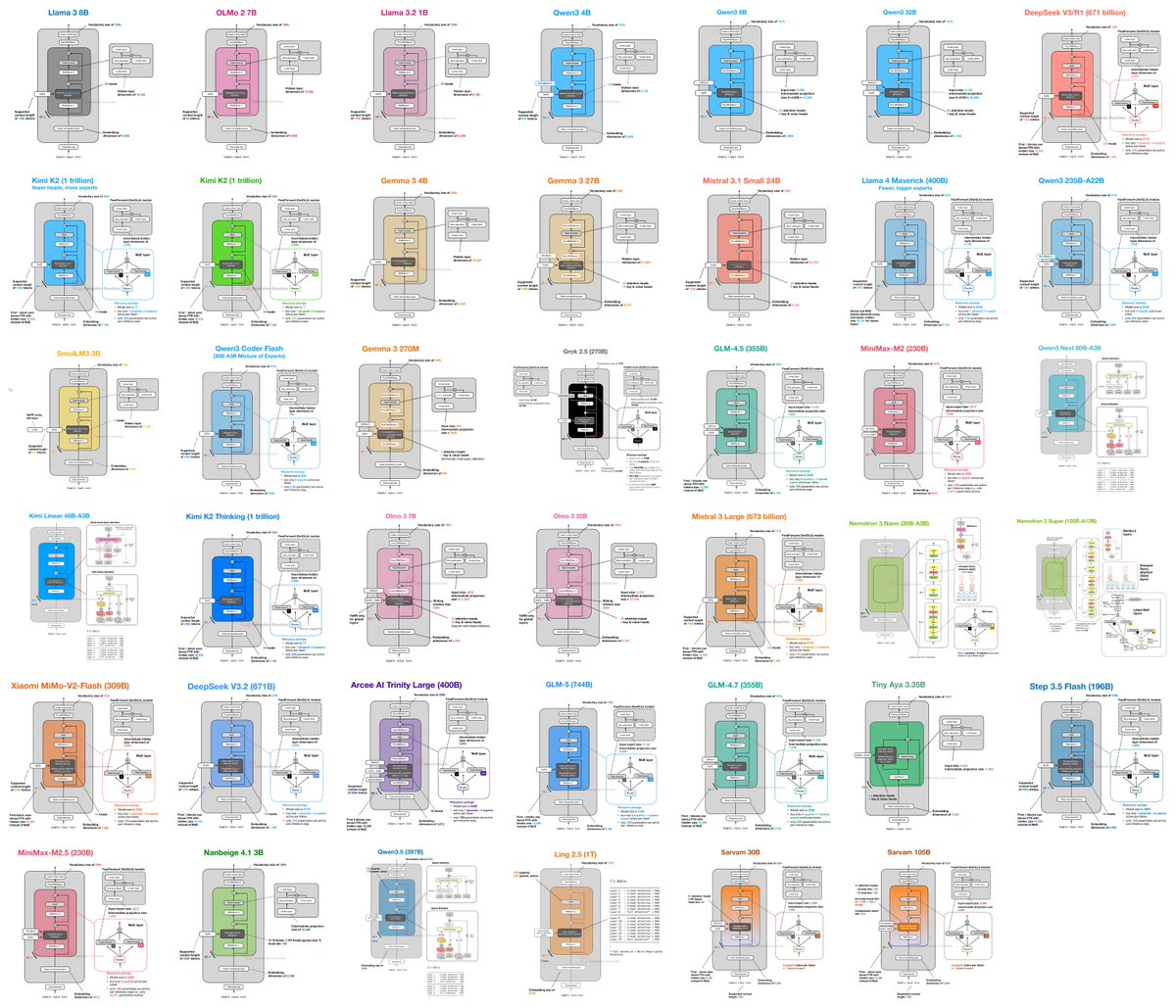

Sharing another very cool paper from my friend @XinggangWang. It goes after one of the most fundamental assumptions in Transformers: residual connections.
The core issue is simple: as Transformers get deeper, early-layer signals get washed out. Every residual update is added with roughly equal weight, so features formed in shallow layers gradually get diluted. By the time you are 100 layers deep, a lot of that useful early information is barely preserved.
MoDA’s idea is elegant: let attention operate not just across the sequence, but across depth too. So instead of each head only attending over tokens, it also attends to KV pairs from previous layers at the same position. In other words, the model can look back not only across context, but also across its own intermediate representations, all in one unified attention operation.
What makes this even better is that the engineering is serious too:
-- fused Triton kernel reaches 97.3% of FlashAttention-2 efficiency at 64K context with only 3.7% FLOPs overhead
-- works even better with post-norm than pre-norm, also reduces attention sink behavior as a nice side effect
And the results are strong: at 1.5B scale, MoDA gets +2.11% average improvement across 10 downstream tasks, and -0.2 perplexity across 10 benchmarks vs OLMo2.
For a long time, depth has been the relatively underused scaling axis. People talk about data scale, model width, and context length. Much less about how to make depth actually compound. MoDA makes a very compelling case that depth still has a lot to give, if the architecture can truly preserve and reuse what earlier layers learned.
Triton code is open: github.com/hustvl/MoDA
Paper: arxiv.org/abs/2603.15619
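
To make the "attention across depth" idea in the post above concrete, here is a rough single-head NumPy sketch. It is not the authors' Triton kernel and is not taken from the paper: the function name `depth_augmented_attention`, the tensor layout, and the absence of learned projections, multiple heads, and normalization are all simplifying assumptions for illustration. It only shows the one point the post describes: each query scores the causal sequence keys of the current layer plus the keys cached from earlier layers at the same position, and a single softmax runs over both sets.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax; -inf logits become exactly zero weight.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def depth_augmented_attention(q, k_seq, v_seq, k_depth, v_depth):
    """Hypothetical illustration, not the MoDA implementation.

    q, k_seq, v_seq:  (T, d)    current-layer queries, keys, values
    k_depth, v_depth: (P, T, d) keys/values cached from P earlier layers
    Returns (out, weights) with shapes (T, d) and (T, T + P).
    """
    T, d = q.shape
    scale = 1.0 / np.sqrt(d)

    # Ordinary causal attention logits over the sequence axis.
    seq_logits = (q @ k_seq.T) * scale                       # (T, T)
    causal = np.tril(np.ones((T, T), dtype=bool))
    seq_logits = np.where(causal, seq_logits, -np.inf)

    # Depth logits: the query at position t scores only the keys that
    # earlier layers produced at the same position t.
    depth_logits = np.einsum("td,ptd->tp", q, k_depth) * scale  # (T, P)

    # One softmax over both sets of keys ("unified" attention).
    weights = softmax(np.concatenate([seq_logits, depth_logits], axis=1), axis=1)
    w_seq, w_depth = weights[:, :T], weights[:, T:]

    out = w_seq @ v_seq + np.einsum("tp,ptd->td", w_depth, v_depth)
    return out, weights

# Toy usage with random tensors (shapes are arbitrary).
rng = np.random.default_rng(0)
T, d, P = 5, 8, 3
q, k_seq, v_seq = (rng.normal(size=(T, d)) for _ in range(3))
k_depth, v_depth = (rng.normal(size=(P, T, d)) for _ in range(2))
out, weights = depth_augmented_attention(q, k_seq, v_seq, k_depth, v_depth)
```

With `P = 0` cached layers the function reduces to plain causal attention, which is one way to sanity-check the sketch. The efficiency figures quoted in the post come from the fused kernel in the linked repository, not from anything like this naive version.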

This aired tonight to 1 billion people in China. A year ago these robots could barely wave a handkerchief; now they can do backflips and kung fu with nunchucks. Physical intelligence is the next frontier.

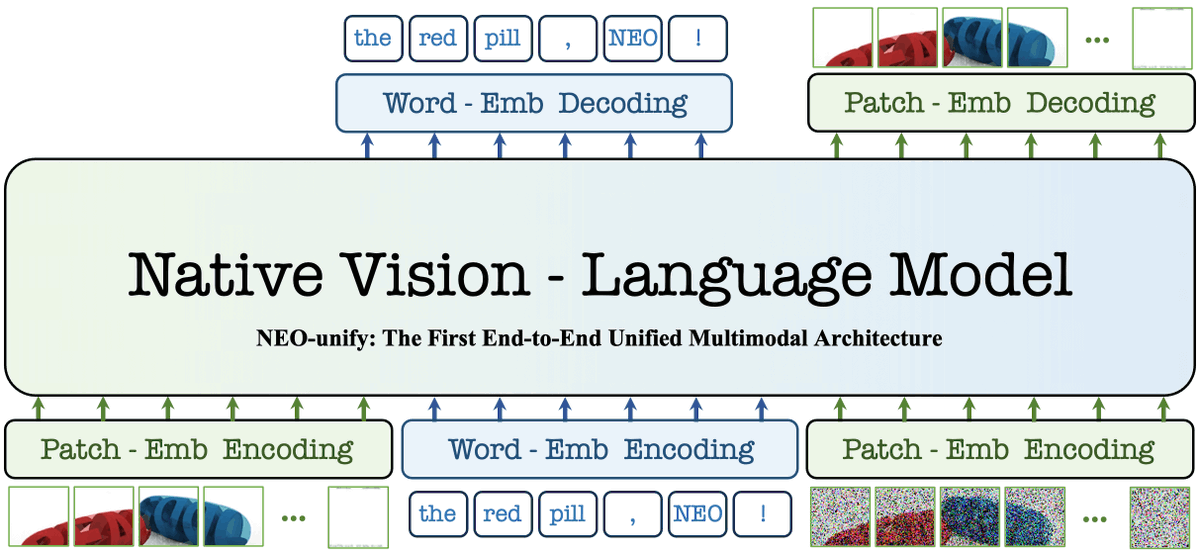

"a closed-loop paradigm that jointly optimizes the world model and the VLA policy to iteratively enhance the performance and grounding of both"