Zack Gow

@zackgow

Cofounder & CTO @ orita | Data Scientist & ML Engineer | some guy on the internet

Today feels big. My third grader earned another stripe on his BJJ belt and then casually finished the last lesson of his Calc BC course. This kid, who just over a year ago claimed he hated math, fell in love with the subject when he started @_MathAcademy_. He became thirsty for more and more math. He has been setting his own goals, and they have vastly exceeded anything I would have dared set for him. He finished 6th through 12th grade math in just over a year. He hates reviews 😂 and loves new lessons. He doesn't like calculations but loves concepts. He takes math notebooks to restaurants so he can toy with proofs while he waits for his food. And he cannot wait for the MA Abstract Algebra course (@ninja_maths, counting on you!)

“Why AGI Will Not Happen” @Tim_Dettmers timdettmers.com/2025/12/10/why…
This essay is worth reading. Discusses diminishing returns (and risks) of scaling. The contrast between West and East: “Winner Takes All” approach of building the biggest thing vs a long-term focus on practicality.
“The purpose of this blog post is to address what I see as very sloppy thinking, thinking that is created in an echo chamber, particularly in the Bay Area, where the same ideas amplify themselves without critical awareness. This amplification of bad ideas and thinking exuded by the rationalist and EA movements, is a big problem in shaping a beneficial future for everyone.”
“A key problem with ideas, particularly those coming from the Bay Area, is that they often live entirely in the idea space. Most people who think about AGI, superintelligence, scaling laws, and hardware improvements treat these concepts as abstract ideas that can be discussed like philosophical thought experiments. In fact, a lot of the thinking about superintelligence and AGI comes from Oxford-style philosophy. Oxford, the birthplace of effective altruism, mixed with the rationality culture from the Bay Area, gave rise to a strong distortion of how to clearly think about certain ideas.”

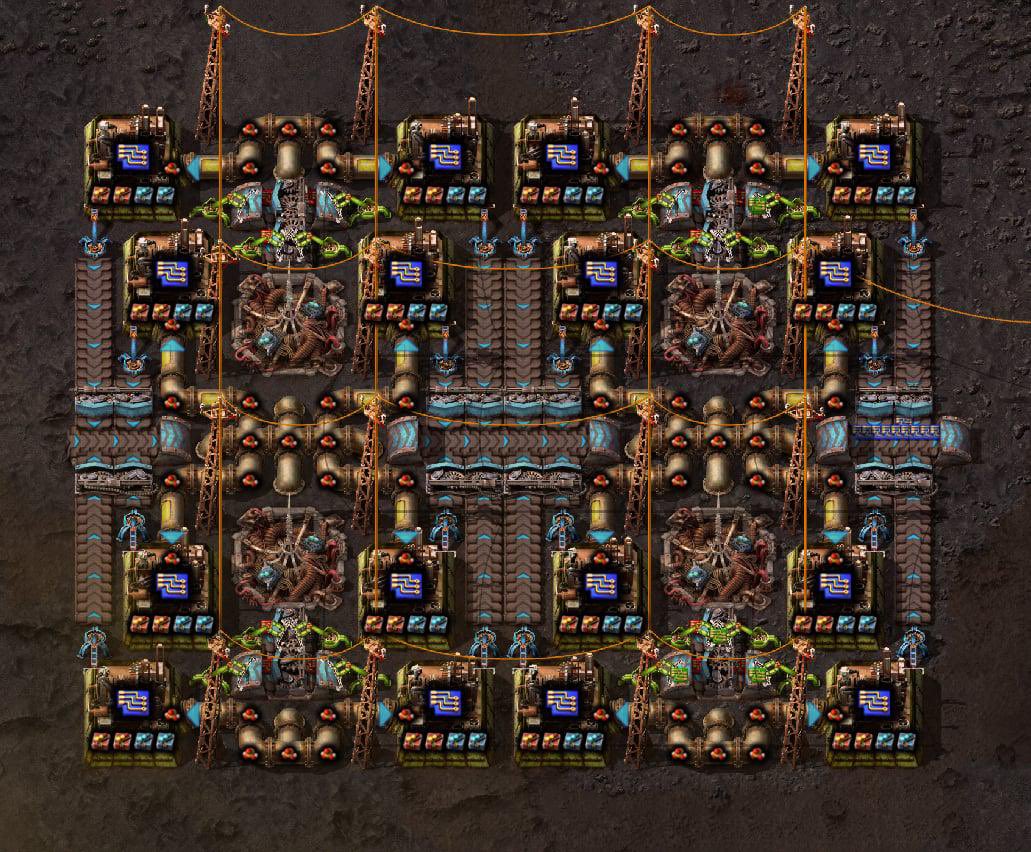

I was talking with my bro about how he uses Claude Code, and realized he was operating the agents without a radar view. That's just silly.

I am both surprised and not surprised that the US Postal Service website's banner alerts are managed by someone simply commenting and un-commenting the HTML. Random indents and all.