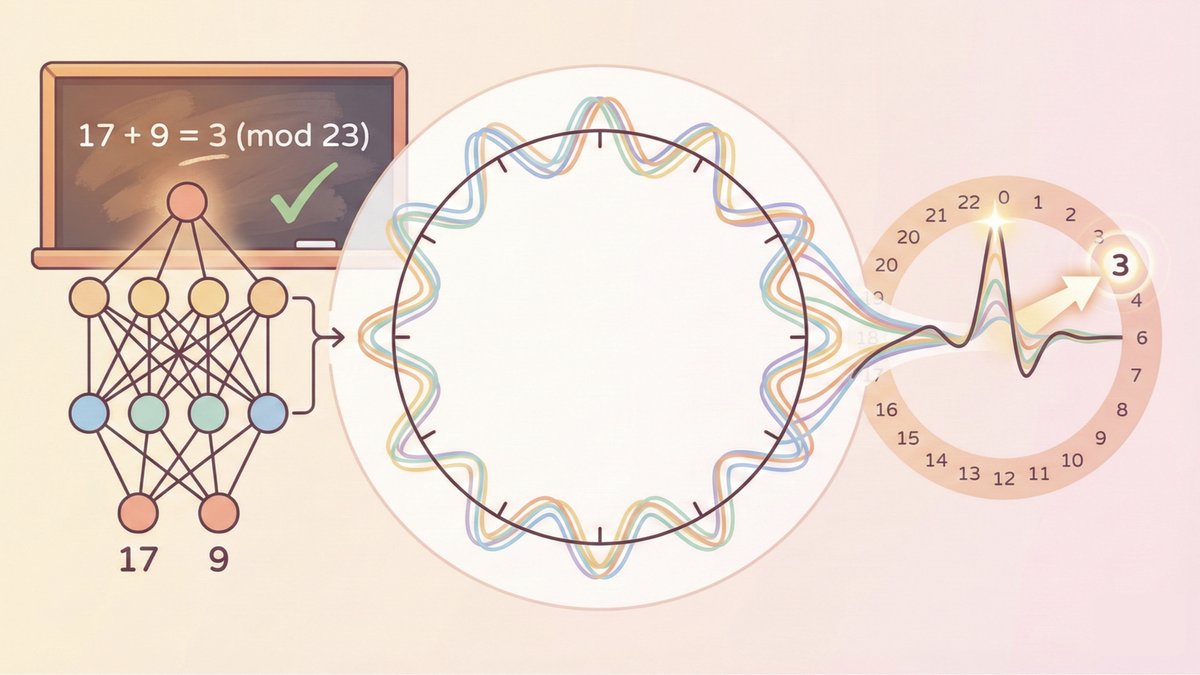

Holiday cooking finally ready to serve! 🥳

Introducing DFlash: speculative decoding with block diffusion.

🚀 6.2× lossless speedup on Qwen3-8B
⚡ 2.5× faster than EAGLE-3

Diffusion vs. AR doesn't have to be a fight. As things stand today:
• dLLMs = fast, highly parallel, but lossy
• AR LLMs = accurate, sequential, but slow

DFlash = diffusion drafts, AR verifies.
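A minimal sketch of why "diffusion drafts, AR verifies" can be lossless. This is the generic greedy draft-then-verify loop of speculative decoding, not DFlash's actual implementation. A drafter proposes a whole block in one call (in DFlash that role belongs to a block-diffusion model). The AR target then keeps the longest prefix it agrees with, substitutes its own token at the first disagreement, and adds one bonus token when the whole block is accepted. Because every kept token is one the target would have picked itself, the output matches plain greedy AR decoding, and the speedup comes from accepting several tokens per verify round. The toy `target`, the drafters, and all function names are hypothetical stand-ins for real models.

```python
from typing import Callable, List, Tuple

Token = int
NextFn = Callable[[List[Token]], Token]              # hypothetical target: greedy next token
DraftFn = Callable[[List[Token], int], List[Token]]  # hypothetical drafter: k tokens at once


def greedy_decode(target_next: NextFn, prompt: List[Token], max_new: int) -> List[Token]:
    """Plain autoregressive baseline: one target call per new token."""
    out = list(prompt)
    for _ in range(max_new):
        out.append(target_next(out))
    return out


def speculative_decode(
    target_next: NextFn,
    draft_block: DraftFn,
    prompt: List[Token],
    max_new: int,
    block_size: int = 4,
) -> Tuple[List[Token], int]:
    """Draft a block, verify it with the target, keep the agreeing prefix.

    Returns the generated sequence and the number of verify rounds used.
    """
    out = list(prompt)
    rounds = 0
    while len(out) - len(prompt) < max_new:
        rounds += 1
        draft = draft_block(out, block_size)  # whole block proposed in one call
        accepted: List[Token] = []
        for tok in draft:
            # A real verifier scores every draft position in one batched forward
            # pass; calling target_next per position keeps this sketch readable.
            expected = target_next(out + accepted)
            accepted.append(expected)  # always keep the target's own token
            if tok != expected:
                break  # first disagreement: discard the rest of the draft
        else:
            # Whole block accepted: the target's next prediction comes for free.
            accepted.append(target_next(out + accepted))
        out.extend(accepted)
    return out[: len(prompt) + max_new], rounds


# --- Toy stand-ins (hypothetical, for illustration only) ---
def target(seq: List[Token]) -> Token:
    return (seq[-1] * 3 + 1) % 17


def perfect_drafter(seq: List[Token], k: int) -> List[Token]:
    toks, cur = [], seq[-1]
    for _ in range(k):
        cur = (cur * 3 + 1) % 17
        toks.append(cur)
    return toks


def noisy_drafter(seq: List[Token], k: int) -> List[Token]:
    toks = perfect_drafter(seq, k)
    toks[-1] = (toks[-1] + 1) % 17  # last drafted token is always wrong
    return toks


if __name__ == "__main__":
    baseline = greedy_decode(target, [2], 10)
    for drafter in (perfect_drafter, noisy_drafter):
        seq, rounds = speculative_decode(target, drafter, [2], 10)
        print(drafter.__name__, seq == baseline, rounds)
```

With `block_size=4` and 10 new tokens, the perfect drafter needs 2 verify rounds and the noisy one needs 3, versus 10 sequential target steps for the baseline; in both cases the output is identical to greedy decoding. How many draft tokens survive each round is what determines the real-world speedup.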