Gopi Kumar

525 posts

@zenlytix

AI Infra @ Microsoft Research. https://t.co/yXMFohcHB1. Amateur musician @ https://t.co/jbymO74ZpX. Opinions my own.

Redmond, WA · Joined March 2016

74 Following · 234 Followers

Is it just me, or does the ASCII art on openai.com/devday/ represent OpenAI (the frog) gobbling up the startups (the flies)? :) #devday2025 #OpenAIDevDay #openai

In part two of the AI coding practices series, we look at AI-assisted coding for enterprise production code.

linkedin.com/pulse/coding-e…

Let me know what you think and if it resonates with you.

Putting together a series of short posts with some thoughts and opinions on the practice of vibe coding. This is the introductory post to set the stage for the conversation. Appreciate your feedback and suggestions.

linkedin.com/pulse/practice…

@miguelgfierro Agree with learning by practice. One thing you benefit from when starting with RAG, or even zero-shot prompting of an LLM, is understanding how to evaluate results. You don't need to know at first how to build a model or what gradient descent is, but grasping the basics of how to measure results is key from the start.
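To make "how to measure" concrete, here is a minimal sketch of scoring LLM or RAG answers against a small hand-labeled set using exact match and token-level F1 (the metrics popularized by the SQuAD benchmark). The choice of metrics, the function names, and the sample answers are illustrative assumptions, not something from the post.

```python
import re
import string
from collections import Counter


def normalize(text: str) -> list[str]:
    """Lowercase, drop punctuation and English articles, then split into tokens."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return text.split()


def exact_match(prediction: str, reference: str) -> float:
    """1.0 if the normalized answers are identical, else 0.0."""
    return float(normalize(prediction) == normalize(reference))


def token_f1(prediction: str, reference: str) -> float:
    """Harmonic mean of token precision and recall (partial credit)."""
    pred, ref = normalize(prediction), normalize(reference)
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


def evaluate(pairs: list[tuple[str, str]]) -> dict[str, float]:
    """Average both metrics over (prediction, reference) pairs."""
    n = len(pairs)
    return {
        "exact_match": sum(exact_match(p, r) for p, r in pairs) / n,
        "f1": sum(token_f1(p, r) for p, r in pairs) / n,
    }


# Hypothetical model outputs paired with hand-written reference answers.
results = [("Paris.", "Paris"), ("Lyon", "Paris")]
print(evaluate(results))  # {'exact_match': 0.5, 'f1': 0.5}
```

Exact match is strict, while token F1 gives partial credit (e.g. "The capital is Paris." vs "Paris" scores 0.5), which is often a fairer first signal for free-form LLM output.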

Unpopular opinion: if you want to get into AI, start from a RAG system like this instead of linear regression.

I call this reverse learning.

𝐓𝐫𝐚𝐝𝐢𝐭𝐢𝐨𝐧𝐚𝐥 𝐰𝐚𝐲:

Linear regression->Logistic Regression->SVMs->Decision Trees->Random Forests->Gradient Boosted Trees->CNNs->RNN->LSTMs->LLMs->RAG.

𝐑𝐞𝐯𝐞𝐫𝐬𝐞 𝐋𝐞𝐚𝐫𝐧𝐢𝐧𝐠:

RAG->LLMs->Gradient Boosted Trees->Random Forests->Logistic Regression

𝐓𝐫𝐚𝐝𝐢𝐭𝐢𝐨𝐧𝐚𝐥 𝐰𝐚𝐲:

First study the theory, then practice with projects.

𝐑𝐞𝐯𝐞𝐫𝐬𝐞 𝐋𝐞𝐚𝐫𝐧𝐢𝐧𝐠:

First practice with projects, then learn the theory underlying the models.

𝐓𝐫𝐚𝐝𝐢𝐭𝐢𝐨𝐧𝐚𝐥 𝐰𝐚𝐲:

Six months to learn the AI that companies require for an AI position.

𝐑𝐞𝐯𝐞𝐫𝐬𝐞 𝐋𝐞𝐚𝐫𝐧𝐢𝐧𝐠:

One month to learn the AI that companies require for an AI position.

The key idea is to start from the AI that is useful for getting an AI position instead of the AI that was used 30 years ago.

𝐐𝐮𝐞𝐬𝐭𝐢𝐨𝐧:

But it's impossible to do RAG+LLMs if you don't know linear and logistic regression!

𝐀𝐧𝐬𝐰𝐞𝐫:

Are you sure? In the picture below you have a RAG solution with the DeepSeek LLM in fewer than 30 lines of code.

Challenge the status quo!

Vamos!!!🦾🦾🦾
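For readers without the picture, here is a dependency-free sketch of the RAG pattern the post describes: retrieve the most relevant documents, then stuff them into the LLM prompt. It assumes a toy bag-of-words cosine retriever in place of a real embedding model, and `generate` is a hypothetical placeholder for whatever LLM client you use (DeepSeek or otherwise); it is not the code from the original image.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Bag-of-words term counts (a stand-in for real embeddings)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: cosine(q, tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Augment the question with the retrieved context."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}\nAnswer:"


def generate(prompt: str) -> str:
    # Hypothetical placeholder: replace with a real LLM API call.
    return "<LLM answer here>"


docs = [
    "Phi-3 Mini is a small language model from Microsoft.",
    "Gradient boosted trees combine many weak decision trees.",
    "RAG retrieves relevant documents and adds them to the LLM prompt.",
]
question = "How does RAG use documents in the prompt?"
context = retrieve(question, docs, k=1)
prompt = build_prompt(question, context)
print(prompt)
answer = generate(prompt)
```

Swapping `tokenize`/`cosine` for an embedding model and a vector store, and `generate` for a real client, keeps the same three-step shape: retrieve, augment, generate.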

On-device AI framework ecosystem is blooming these days:

1. llama.cpp - All things Whisper, LLMs & VLMs - runs across Metal, CUDA, and other backends (AMD, NPU, etc.)

github.com/ggerganov/llam…

2. MLC - Deploy LLMs across platforms especially WebGPU (fastest WebGPU LLM implementation out there)

github.com/mlc-ai/web-llm

3. MLX - Arguably the fastest general-purpose framework (Mac only) - Supports all major image generation (Flux, SDXL, etc.), transcription (Whisper), and LLM models

github.com/ml-explore/mlx…

4. Candle - Cross-platform general purpose framework written in Rust - wide coverage across model categories

github.com/huggingface/ca…

Honorable mentions:

1. Transformers.js - JavaScript (WebGPU) implementation built on top of ONNX Runtime Web

github.com/xenova/transfo…

2. mistral.rs - Rust implementation for LLMs & VLMs, built on top of Candle

github.com/EricLBuehler/m…

3. Ratchet - Cross-platform, Rust-based WebGPU framework built for battle-tested deployments

github.com/huggingface/ra…

4. ZML - Cross-platform, Zig-based ML framework

github.com/zml/zml

Looking forward to seeing how the ecosystem looks a year from now. Quite bullish on the top 4 at the moment, but the open-source ecosystem changes quite a bit! 🤗

Also, which frameworks did I miss?

Here is a short walkthrough of running the Phi-3 Mini model on a Windows 365 Cloud GPU desktop with an #nvidia A10, showing the end-to-end steps: setup, downloading the model, and running model inference in two different ways (all in under 5 mins).

youtu.be/xNT8aRJeC3k

Introducing the new Azure AI infrastructure VM series ND MI300X v5 - Microsoft Community Hub techcommunity.microsoft.com/t5/azure-high-…

Gopi Kumar reposted

Today at @Microsoft Build, @satyanadella announced a deepened partnership with @huggingface, with new experiences across cloud, hardware, open source and developers.

Here's a roundup of all the joint work we announced this week!

huggingface.co/blog/microsoft…

- Azure AI Studio Model Catalog

- Azure AMD GPU MI300X VMs

- Phi-3 open models

- WebGPU with transformers.js and optimum

- Spaces Dev Mode

4. Download the chat sample and run:

Reference: github.com/microsoft/onnx…

curl raw.githubusercontent.com/microsoft/onnx… -o model-qa.py

python model-qa.py -m directml/directml-int4-awq-block-128 -l 2048 -g

Prerequisites:

* Install the AMD GPU driver as described on the Azure docs page:

learn.microsoft.com/en-us/azure/vi…

Download the EXE package and run it, then follow the wizard. (The driver alone is sufficient.)

The VM will need a reboot.

* Install Miniconda

docs.anaconda.com/free/miniconda…

Quick walkthrough to set up and run the @MicrosoftAI Phi-3 model on a Windows machine with an AMD V620 GPU using #microsoft @Windows DirectML and the @onnxruntime GenAI library in under 5 minutes. These instructions will work across Nvidia, AMD, and Intel GPUs. 🧵

youtube.com/watch?v=hghCoi…

Gopi Kumar reposted

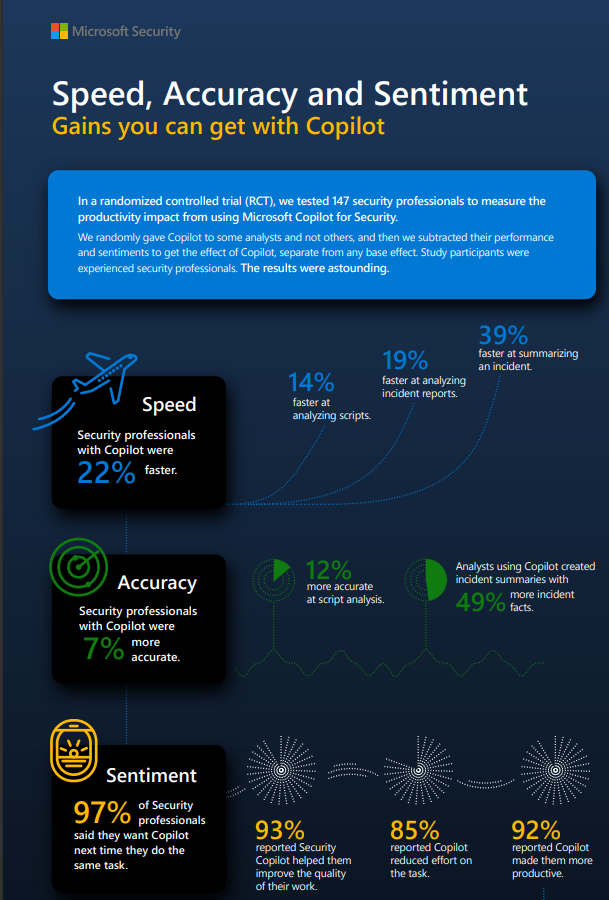

Research report showing productivity gains from Microsoft Security #copilot.

Full report: go.microsoft.com/fwlink/?linkid…

@bindureddy I guess if Alice is playing online chess, it's hard to say what the other sister is doing? :)

Bard is now Gemini! My initial thoughts:

- Still somewhat nerfed and refuses to answer questions

- Refused to generate a simple illustration of George Clooney; ChatGPT is better

- Missing PDF upload

- Answers do seem better than the previous version

- Seems to have a "reasoning vibe"

- However, it does NOT answer some hard questions that GPT-4 does.

For example, it didn't get "In a room I have only 3 sisters. Anna is reading a book. Alice is playing a match of chess. What the third sister, Amanda, is, doing ?"

The answer is that the third sister is playing chess (with Alice, since chess takes two players). GPT-4 nails it.

Overall, we plan to do a lot more analysis, but first impressions are good, not great.

TL;DR: I don't think it will make a material difference to how Bard was doing before, especially if their plan is to charge for this. However, it's always good to have more players in the market. 🤷‍♀️

LoRA SFT on the MedAlpaca dataset (huggingface.co/datasets/medal…) took about an hour on a single node with 8x AMD MI250X (dual vGPU).

Used LLaMA-Factory (github.com/hiyouga/LLaMA-…) for the fine-tuning, which made things super easy. The standard instructions worked just fine on AMD/ROCm too.
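For anyone new to LoRA, a rough sketch of why it is cheap: the pretrained weight W (d×k) stays frozen, and only two small factors B (d×r) and A (r×k) are trained, so a layer computes h = Wx + (alpha/r)·BAx. The pure-Python code below uses tiny, made-up matrices and an illustrative 4096×4096 layer with rank 8; these sizes are assumptions for illustration, not the settings used in the MedAlpaca run.

```python
def matvec(m: list[list[float]], x: list[float]) -> list[float]:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in m]


def lora_forward(W, A, B, x, alpha: float, r: int) -> list[float]:
    """h = W x + (alpha / r) * B (A x); W is frozen, only A and B are trained."""
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / r
    return [b + scale * u for b, u in zip(base, update)]


def trainable_params(d: int, k: int, r: int) -> tuple[int, int]:
    """Trainable parameters: full fine-tuning of W vs. LoRA factors B and A."""
    return d * k, r * (d + k)


# Toy layer with d = k = 3 and rank r = 1. B is zero-initialized, so before
# any training the adapted layer reproduces the frozen base layer exactly.
W = [[1.0, 0.0, 0.0], [0.0, 2.0, 0.0], [0.0, 0.0, 3.0]]
A = [[0.5, 0.5, 0.5]]      # r x k
B = [[0.0], [0.0], [0.0]]  # d x r
x = [1.0, 1.0, 1.0]
print(lora_forward(W, A, B, x, alpha=16, r=1))  # [1.0, 2.0, 3.0]

# Illustrative 4096 x 4096 projection adapted with rank 8.
full, lora = trainable_params(4096, 4096, 8)
print(full, lora, f"{lora / full:.2%}")  # 16777216 65536 0.39%
```

Training well under 1% of a layer's parameters is a big part of why LoRA SFT fits comfortably on a single node.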

This weekend I was able to smoothly run inference and supervised fine-tuning (LoRA) on the @Microsoft #phi2 model (huggingface.co/microsoft/phi-2) on an @amd MI250X GPU / #rocm on @Azure. Nice work @SebastienBubeck and team.