Zhe Wang


@zhexwang

Frontier AI @GoogleDeepMind. Generative modelling, Robotics and AI4Science.

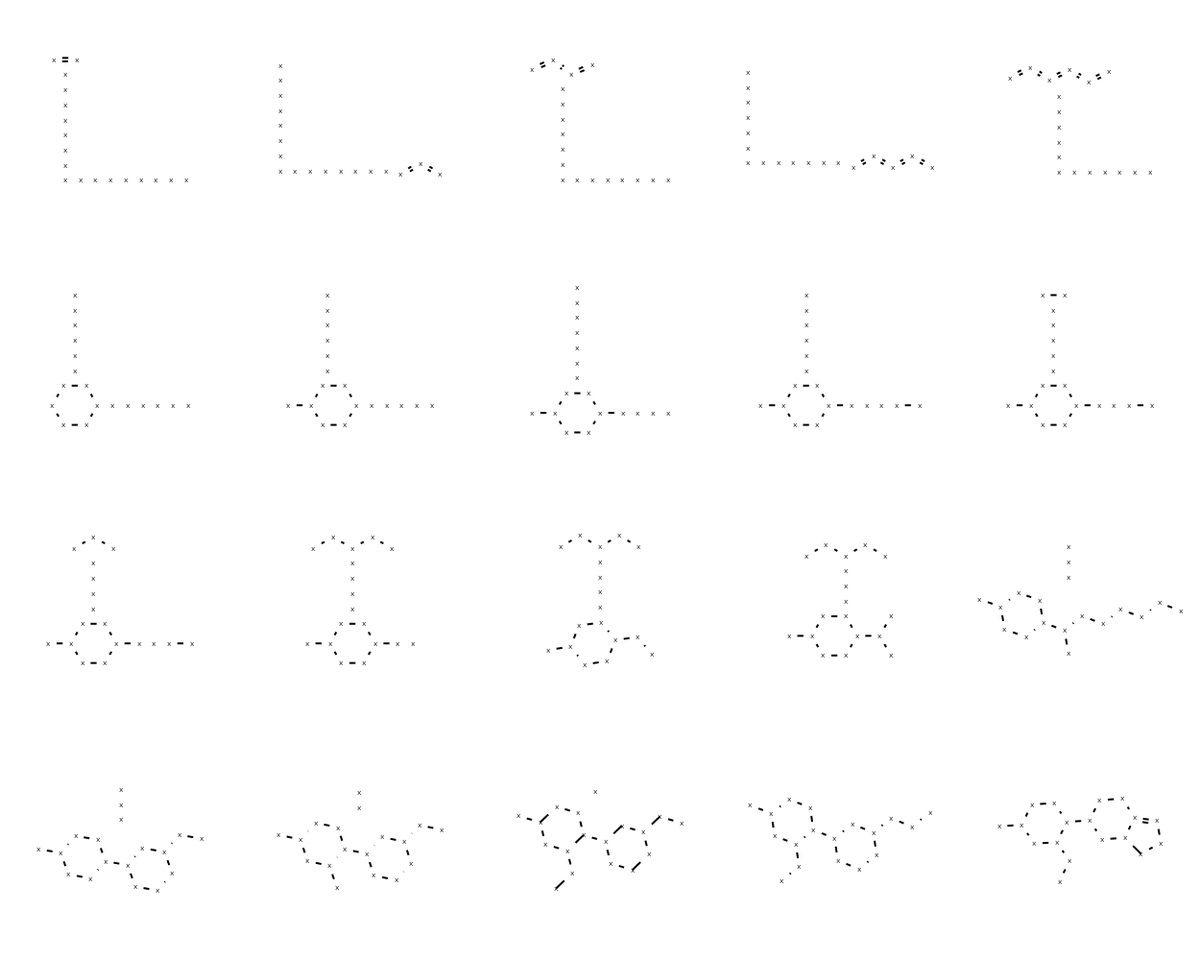

Wrote up some notes introducing discrete diffusion models, covering the theory of time-inhomogeneous CTMCs via generators and time-evolution systems. What motivated me was how hard it was to find a single useful reference that laid out the theory (e.g. the Kolmogorov equations) in one place. I hope they're helpful to others.

It takes a village to build an awesome 🦾 🧠 . We are #hiring! Come transform robotics at Google DeepMind. I've received a lot of requests about applied and engineering roles, and yes, we are hiring for those too! Robotics engineering: lnkd.in/gDt6CeAq Applied ML SWEs: lnkd.in/gCmY69XQ Research Scientist: lnkd.in/g47zS2Xz

Super thrilled to share that our AI has now reached silver-medalist level in math at #imo2024 (1 point away from 🥇)! Since January, we not only have a much stronger version of #AlphaGeometry but also an entirely new system, #AlphaProof, capable of solving many more Olympiad problems. This is a large-scale project that I was fortunate to co-lead at @GoogleDeepMind! See our blog & NYT article below! Blog: dpmd.ai/imo-silver NYT: nytimes.com/2024/07/25/sci…

🚀 Call for Papers: ICLR 2025 Workshop on World Models! 🌍🤖 📅 Submission Deadline: 10th Feb 2025 23:59 AOE 🌐 Website: sites.google.com/view/worldmode… We invite submissions on understanding, modeling, and scaling #WorldModels—from knowledge extraction to model-based RL, multimodal world models, and their applications in AI, robotics, and scientific discovery. 📩 Join us in shaping the future of AI-driven world modeling! #ICLR2025 #WorldModels #AI #ML #RL