Peichen Zhong

@zhongpc

Moved to LinkedIn: https://t.co/kYc30jlZ5n

Microsoft researchers introduce MatterGen, a model that can discover new materials tailored to specific needs, such as efficient solar cells or CO2 recycling, moving materials discovery beyond trial-and-error experiments. msft.it/6012U8zX8

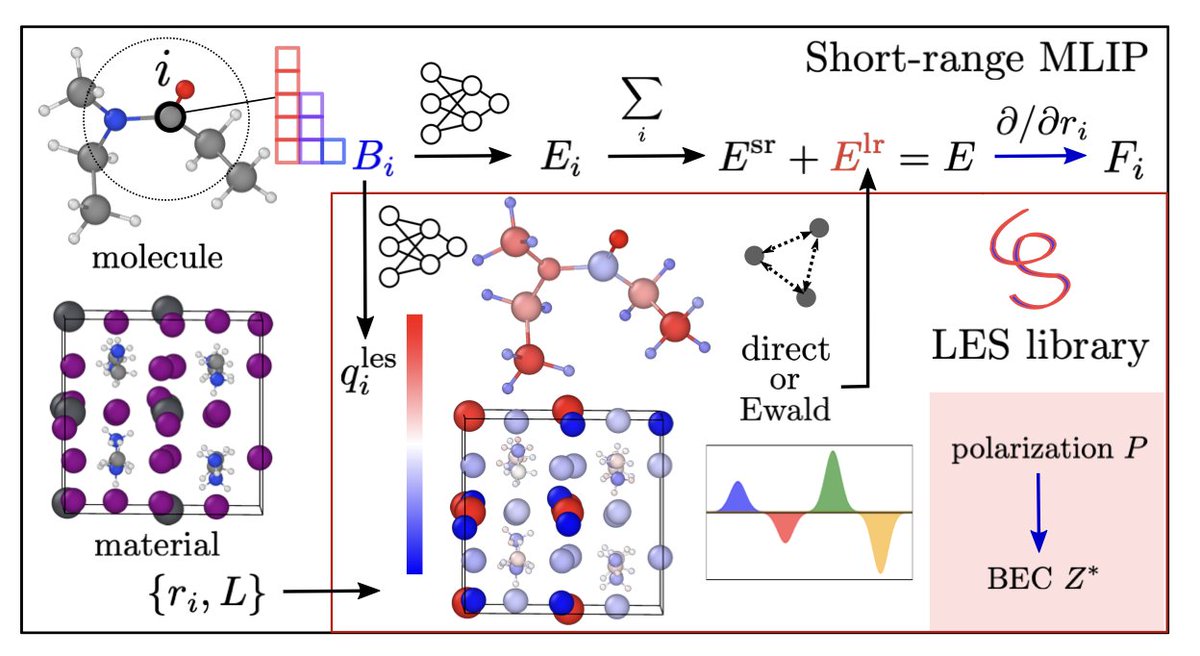

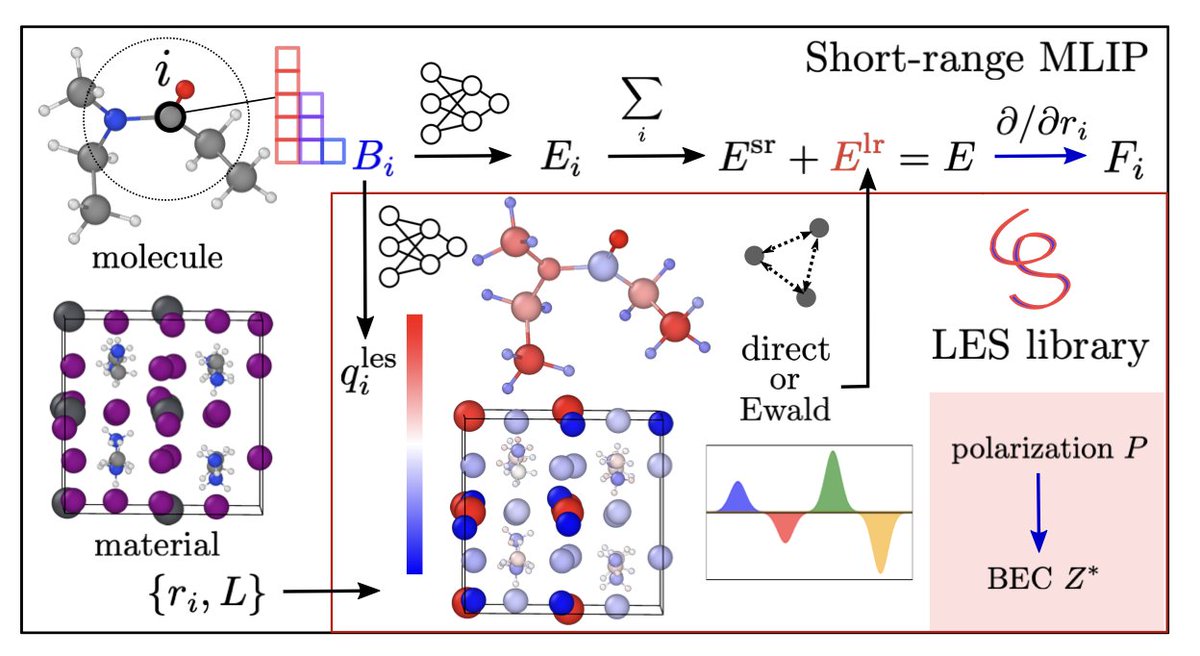

Long-range ML potentials strike again! 🚀 We benchmarked LES on diverse systems: molecules, solutions, and interfaces. Learning only from energies and forces, LES gives the most accurate potential energy surfaces, along with physical charges, dipoles, and quadrupoles! arxiv.org/abs/2412.15455

Today we’re previewing NeuralPLexer3 Beta: demonstrating unprecedented accuracy and speed in predicting protein-drug complexes, with instant and accurate structural insights across the full range of protein classes and drug molecules. Learn more: iambic.ai/post/np3-previ…