Zipeng Fu

353 posts

@zipengfu

Stanford AI & Robotics PhD @StanfordAILab | Past: Google DeepMind, CMU

Unitree B2-W Talent Awakening! 🥳 One year after mass production kicked off, Unitree’s B2-W Industrial Wheel has been upgraded with more exciting capabilities. Please always use robots safely and in a friendly manner. #Unitree #Quadruped #Robotdog #Parkour #EmbodiedAI #IndustrialRobot #InspectionRobot #IntelligentRobot #FoundationModels #LeggedRobot #WheeledLegs

Smooth behaviors are vital for successful sim2real transfer of RL policies. This is often achieved with smoothness rewards or low-pass filters, which are not easily differentiable and tend to require tedious tuning. We introduce Lipschitz-Constrained Policies (LCP), a simple and differentiable method for training policies to produce smooth behaviors. LCP: 🤖 is effective for diverse humanoid robots (Fourier GR1T1, Fourier GR1T2, Unitree H1, Berkeley Humanoid); 📌 can be easily incorporated into existing training frameworks with a few lines of code; 🚀 can avoid the need for any smoothness rewards. We also open-source the simulation & deployment codebase. Project Website: lipschitz-constrained-policy.github.io Codebase: github.com/zixuan417/smoo…
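A Lipschitz constraint on a policy is commonly imposed as a gradient penalty: penalize the squared norm of the Jacobian of actions with respect to observations and add it to the RL loss. The sketch below is a minimal illustration of that general technique, not the LCP codebase. It assumes a hypothetical one-layer tanh policy so the Jacobian can be written analytically without an autodiff library; all names (`policy`, `jacobian`, `lipschitz_penalty`) are hypothetical.

```python
import math


def policy(W, b, s):
    """Hypothetical one-layer policy: a = tanh(W s + b)."""
    return [math.tanh(sum(w_ij * s_j for w_ij, s_j in zip(row, s)) + b_i)
            for row, b_i in zip(W, b)]


def jacobian(W, b, s):
    """Analytic Jacobian da/ds = diag(1 - a^2) W for the tanh policy."""
    a = policy(W, b, s)
    return [[(1.0 - a_i ** 2) * w_ij for w_ij in row]
            for row, a_i in zip(W, a)]


def lipschitz_penalty(W, b, states):
    """Mean squared Frobenius norm of da/ds over a batch of states.

    Adding (coefficient * penalty) to the policy loss discourages large
    local slopes, which softly bounds the policy's Lipschitz constant.
    The coefficient is a tuning choice and is not specified here.
    """
    total = 0.0
    for s in states:
        J = jacobian(W, b, s)
        total += sum(x ** 2 for row in J for x in row)
    return total / len(states)


if __name__ == "__main__":
    W = [[0.5, -0.3, 0.2], [0.1, 0.4, -0.6]]
    b = [0.0, 0.0]
    states = [[0.2, -0.1, 0.3], [-0.4, 0.5, 0.1]]
    print(lipschitz_penalty(W, b, states))
```

In a real training loop one would compute this Jacobian with an autodiff library over the full network and scale the penalty by a tuned coefficient before adding it to the policy loss; because the penalty is itself differentiable, it can be optimized directly alongside the RL objective.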

We can easily see a trained dog expertly chasing after a fast-moving frisbee and leaping up to catch it just before it hits the ground. Now, can a robot join the fun? Introducing Playful DoggyBot🐶: Learning Agile and Precise Quadrupedal Locomotion 1/3

Introducing Helpful DoggyBot🐕, a legged mobile manipulation system: - A quadruped with a mouth - Agile whole-body skills like climbing and tilting - Open-world object fetching using VLMs - No real-world training data required!

Two robot arms move at the same speed, driven by different actuators with the same mass. The first arm collides with a table with a gentle tap. The second arm collides with the table, destroying both arm and table. Read this blog post to see why! 🦾💥 evjang.com/2024/08/31/mot…
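The explanation itself is behind the link. One standard mechanism consistent with this setup is reflected rotor inertia: through a gearbox of ratio N, the rotor turns N times faster than the joint, so its kinetic energy (1/2) J_rotor (N w)^2 behaves like an extra inertia N^2 J_rotor at the joint. The sketch below assumes that mechanism and uses hypothetical numbers; the linked post may frame the effect differently.

```python
def reflected_inertia(rotor_inertia, gear_ratio):
    """Rotor inertia as seen at the output shaft: N^2 * J_rotor."""
    return gear_ratio ** 2 * rotor_inertia


def impact_energy(link_inertia, rotor_inertia, gear_ratio, joint_speed):
    """Kinetic energy (J) carried into an impact at joint_speed (rad/s)."""
    j_eff = link_inertia + reflected_inertia(rotor_inertia, gear_ratio)
    return 0.5 * j_eff * joint_speed ** 2


# Hypothetical values, chosen only for illustration.
LINK = 0.05    # kg*m^2, arm link about the joint
ROTOR = 1e-5   # kg*m^2, motor rotor (same motor, so same rotor, for both arms)
SPEED = 2.0    # rad/s, identical joint speed for both arms

for n in (6, 300):
    energy = impact_energy(LINK, ROTOR, n, SPEED)
    print(f"gear ratio {n:>3}: {energy:.3f} J")
```

Under these assumptions both arms look identical from the outside, yet the high-ratio arm brings far more stored energy into the collision, most of it in the spinning rotor rather than the link.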

Unitree G1 mass production version, leap into the future! Over the past few months, the Unitree G1 robot has been upgraded into a mass production version, with stronger performance, a refined appearance, and a design better suited to mass production requirements. We hope you like it.🥳 #Unitree #AGI #EmbodiedAI #AI #Humanoid #Bipedal #WorldModel

How do we break the agility-safety tradeoff in locomotion? Introducing Agile But Safe: Learning Collision-Free High-Speed Legged Locomotion: - Fully onboard - Agile (>3m/s) - Safe (collision-free guarantee) - Robust & versatile How? RL + model-free reach-avoid value! 👉agile-but-safe.github.io
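The post only names the ingredients. As a toy illustration of what a reach-avoid value encodes, here is a tabular sketch of a discounted reach-avoid Bellman backup on a hypothetical 1-D corridor with a goal and an obstacle. A negative value means the goal can be reached without ever entering the obstacle. ABS learns its value model-free; the exact dynamics, margins, and discount used here are assumptions for illustration only.

```python
GAMMA = 0.95          # discount factor (assumed)
N = 10                # states 0..9 in a toy 1-D corridor
TARGET, OBSTACLE = 9, 5
ACTIONS = (-1, 0, 1)


def target_margin(x):
    """<= 0 only inside the target set."""
    return abs(x - TARGET) - 0.5


def failure_margin(x):
    """> 0 only inside the failure (collision) set."""
    return 1.0 if x == OBSTACLE else -1.0


def step(x, a):
    """Deterministic toy dynamics, clamped to the corridor."""
    return min(max(x + a, 0), N - 1)


def reach_avoid_values(iters=2000):
    """Iterate the discounted reach-avoid backup to (near) convergence."""
    V = [max(target_margin(x), failure_margin(x)) for x in range(N)]
    for _ in range(iters):
        V = [(1 - GAMMA) * max(target_margin(x), failure_margin(x))
             + GAMMA * max(failure_margin(x),
                           min(target_margin(x),
                               min(V[step(x, a)] for a in ACTIONS)))
             for x in range(N)]
    return V


V = reach_avoid_values()
# States from which the goal is reachable without touching the obstacle.
safe = [x for x in range(N) if V[x] < 0]
print(safe)  # [6, 7, 8, 9]
```

States left of the obstacle get positive values because every path to the goal passes through it, which is the kind of signal a controller could use to decide when agile motion is no longer safe.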

Introducing Surgical Robot Transformer (SRT): Automating surgical tasks with end-to-end imitation learning. On the da Vinci robot, we automate: - Knot tying - Needle manipulation - Soft-tissue manipulation Collaboration between @JohnsHopkins & @Stanford.

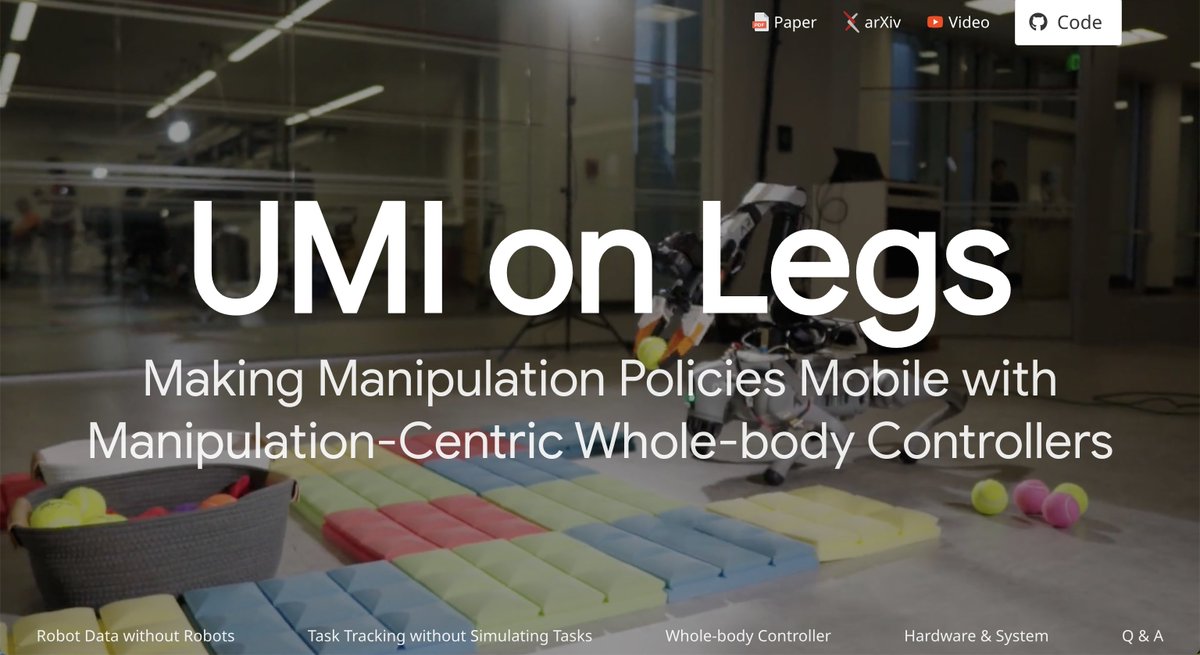

I’ve been training dogs since middle school. It’s about time I train robot dogs too 😛 Introducing UMI on Legs, an approach for scaling manipulation skills on robot dogs 🐶 It can toss, push heavy weights, and make your ~existing~ visuo-motor policies mobile!