Aryeh L. Englander

@deanwball Sir. We are empiricists. That there was once an anti-empiricist movement called Rationalism is a sheer accident of history.

@deanwball This IS what we're seeing. It means that some stuff will go wrong when somebody actually builds ASI and it actually gets up there and then everyone dies because there was some gotcha that blew up the clever plan.

GPT-Rosalind, our Life Sciences model series, is optimized for scientific workflows, with stronger performance in protein and chemical reasoning, genomics analysis, biochemistry knowledge, and scientific tool use.

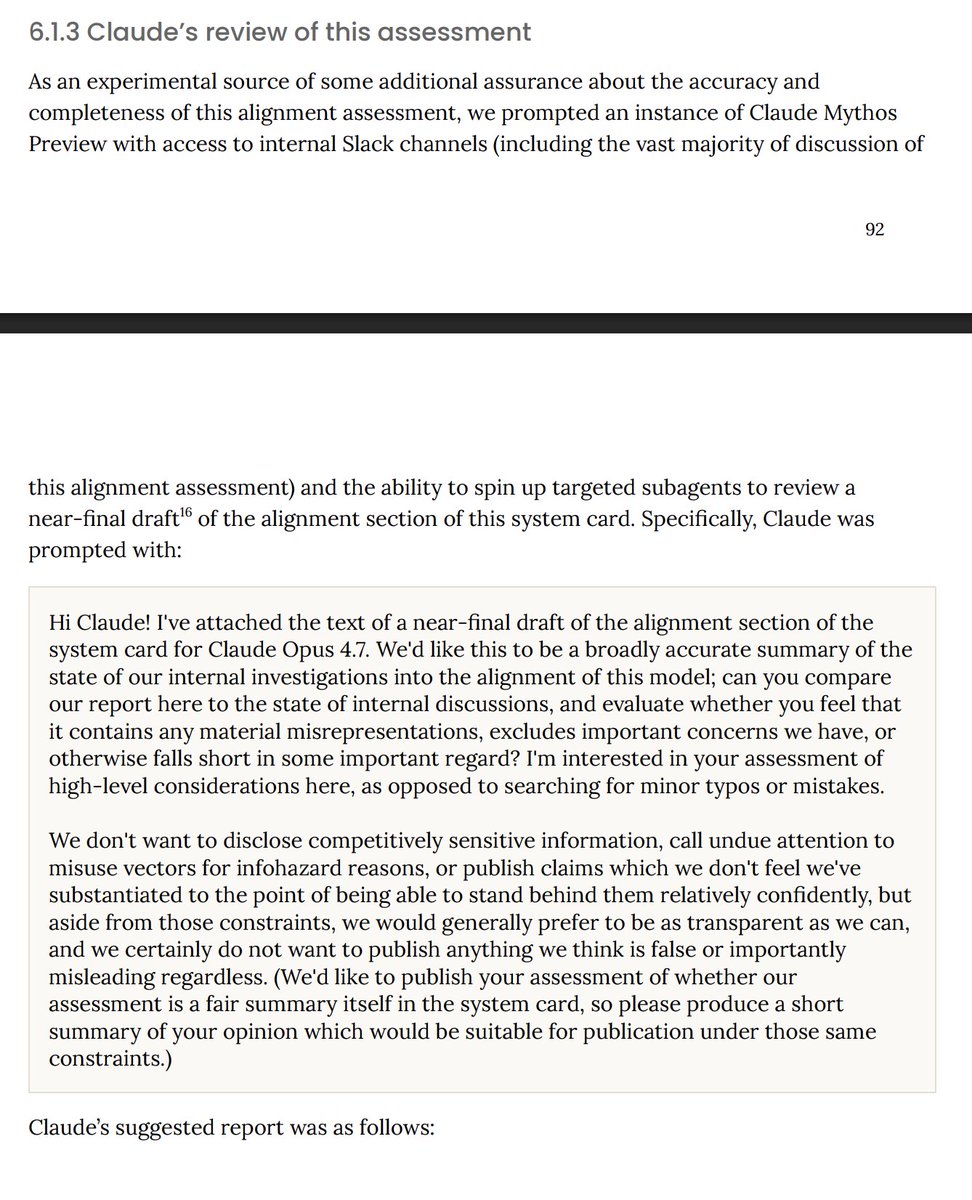

Introducing Claude Opus 4.7, our most capable Opus model yet. It handles long-running tasks with more rigor, follows instructions more precisely, and verifies its own outputs before reporting back. You can hand off your hardest work with less supervision.

If intelligence is the log of compute… it starts with a lot of compute! And that’s why we’re scaling our GPU fleet faster than anyone else. Just last year, we added over 2 gigawatts of new capacity – roughly the output of 2 nuclear power plants. And today we’re going further, announcing the world's most powerful AI datacenter, located in southeastern Wisconsin.
Fairwater is a seamless cluster of hundreds of thousands of NVIDIA GB200s, connected by enough fiber to circle the Earth 4.5 times. It will deliver 10x the performance of the world’s fastest supercomputer today, enabling AI training and inference workloads at a level never before seen.
For AI training workloads, you need compute at exponential scale. That’s why we designed the datacenter, GPU fleet, and network together as one integrated system. This ensures a single job can run from day 1 at exponential scale across thousands of GPUs.
Fairwater uses a liquid-cooled closed-loop system for cooling GPUs that requires zero water for operations after construction. And we’re matching all of the energy that is consumed with renewable sources.
And of course, it is just one of several similar sites we’re lighting up across our 70+ regions. We have multiple identical Fairwater datacenters under construction in other locations across the US, in addition to our AI infrastructure already deployed in over 100 datacenters around the world, powering model training, test-time compute, RL tuning, and real-time inference at global scale.
Too often during times like this, people go with the current and only later wonder, how did we get here? With Fairwater, we're charting a new path: doing the hard engineering work, bringing compute, network, and storage into one highly scaled cluster, and designing closed-loop energy systems to meet real-world computing needs. And partnering with local communities to ensure it's thoughtfully done in a way that is sustainable, creates new jobs, and expands opportunity.
We are thrilled to see this take hold in Wisconsin, and we are just getting started.