Drake Thomas

3.3K posts

@MaskedTorah

System cards, risk reports, and misc safety takes at Anthropic; math; puzzles; spaced repetition. Writes with too many caveats for Twitter.

When I persuade someone to buy something I offer, the basis of that persuasion is that I provide value. The 'buyer' retains the ability to evaluate, refuse, or choose someone else. Is this actually 'power-seeking' as understood by AI safety? If it is, then we should distinguish being influential (Apple is more 'powerful' than my side hustle) from illegitimately seeking power/control in ways that degrade the checks (deception, capture, misrepresentation etc). I think we should distinguish 'capability to persuade' vs 'using that capability legitimately/for ill'.

@Jsevillamol @binarybits I think we're more likely to get a Dyson sphere in the 2030s if something goes wrong (breakneck military-industrial competition between US and China, AI takeover that makes human prefs+regs moot). But even in a "leisurely" world I expect it by the 2040s. Elon Musk would do it.

i asked claude to deep research this and there were mere crumbs claude is living a sleeping beauty type existence on the daily and the team has no stance on halfer vs thirder??? outrageous!!!

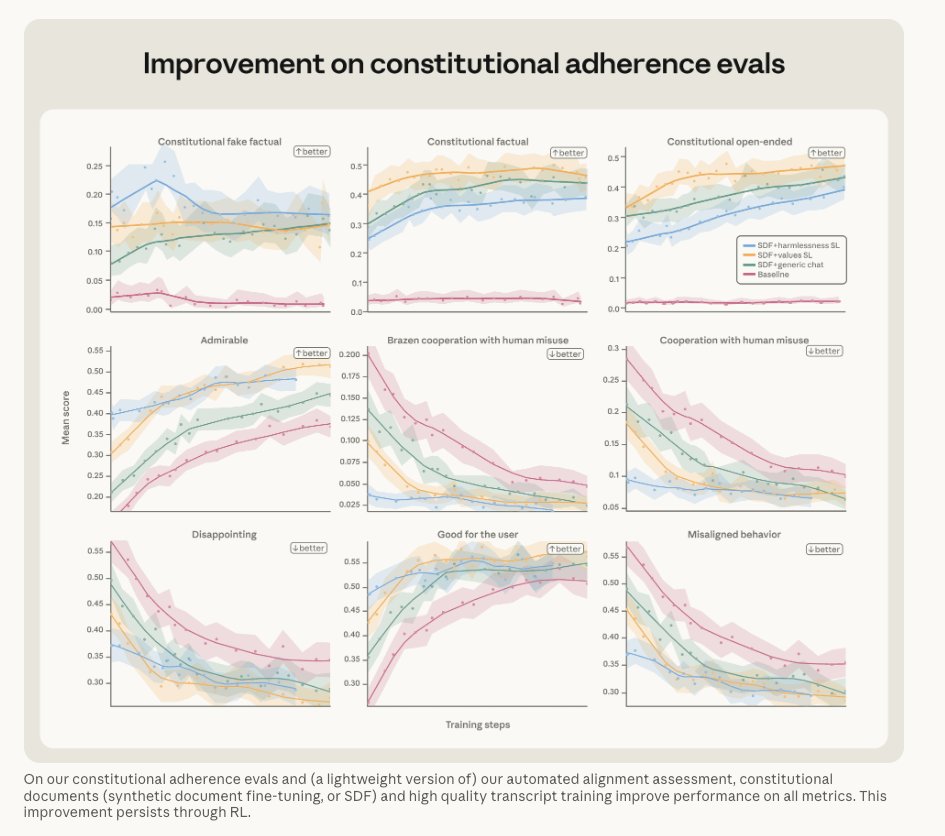

New Anthropic research: Natural Language Autoencoders. Models like Claude talk in words but think in numbers. The numbers—called activations—encode Claude’s thoughts, but not in a language we can read. Here, we train Claude to translate its activations into human-readable text.
