GenAI Built

3.3K posts

@GenAIbuilt

Building real AI systems, workflows & agents. Built. Tested. Shared.

Joined June 2024

7 Following · 71 Followers

Claude Code 2.1.80 introduces a subtle but important shift in how AI coding tools handle reliability and workflows.

Here’s what changed and why it matters.

Release snapshot:

• 1 flag change

• 17 CLI updates

• 1 system prompt change

Key highlights:

• Memories are now validated against current files

→ Reduces reliance on stale context

→ Improves output accuracy in long sessions

• Resume now restores full parallel tool results

→ Eliminates [Tool result missing] errors

→ Makes multi-step workflows actually usable

• SQL analysis functions reinstated

→ Restores previously broken data workflows

→ Signals rollback of over-restriction in tool access
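
To make "memories validated against current files" concrete, here is a minimal sketch of one way a staleness check could work. It is not Claude Code's actual implementation: the `Memory` record and the `remember` and `is_stale` helpers are hypothetical names, and comparing content hashes is only one possible validation strategy.

```python
import hashlib
import tempfile
from dataclasses import dataclass
from pathlib import Path


def file_digest(path: Path) -> str:
    """Return the SHA-256 hex digest of a file's current contents."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


@dataclass
class Memory:
    """Hypothetical memory record: a note plus a fingerprint of its source file."""
    note: str    # what the agent remembered
    path: Path   # file the note was derived from
    digest: str  # digest of that file at the time the note was saved


def remember(note: str, path: Path) -> Memory:
    """Save a note together with the current digest of the file it describes."""
    return Memory(note=note, path=path, digest=file_digest(path))


def is_stale(memory: Memory) -> bool:
    """A memory is stale if its file is gone or has changed since it was saved."""
    if not memory.path.exists():
        return True
    return file_digest(memory.path) != memory.digest


def demo() -> tuple:
    """Return (stale before edit, stale after edit) for a throwaway file."""
    with tempfile.TemporaryDirectory() as tmp:
        target = Path(tmp) / "config.py"
        target.write_text("TIMEOUT = 30\n")
        memory = remember("timeout is 30 seconds", target)
        before = is_stale(memory)
        target.write_text("TIMEOUT = 60\n")  # the file changes under the memory
        after = is_stale(memory)
        return before, after
```

In this sketch a stale memory would be re-read or discarded rather than trusted, which is the behavior the highlight above describes.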

What stands out (insight):

This update is less about new capabilities and more about fixing trust in the system.

AI coding tools don’t just need to be powerful.

They need to be state-aware, consistent, and recoverable.

Claude Code is clearly moving in that direction.

Notable additions:

• Rate limit visibility in CLI (5-hour + 7-day windows)

→ Better control for high-usage environments

• Plugin system expansion (source: "settings")

→ Easier local customization without marketplace friction

• Effort-level overrides via frontmatter

→ More control over model reasoning per task

• Experimental --channels (MCP integration)

→ Early signal toward multi-agent / multi-source workflows

Fixes that matter:

• Parallel tool execution now fully recoverable

• Voice mode stability improved (Cloudflare TLS issue)

• API proxy + Bedrock + Vertex compatibility fixed

• CLI navigation and permissions UX improved

Industry implication:

We’re seeing a shift from:

“AI that generates code”

→ to

“AI systems that can reliably operate over time”

State management, tool orchestration, and session recovery

are becoming core competitive advantages.

The takeaway:

Claude Code 2.1.80 isn’t flashy.

But it quietly addresses one of the biggest gaps in AI tooling today:

Reliability at scale.

@namd1nh What’s interesting is this feels like a shift from model-centric progress to system-level maturity. Validating memory + restoring tool state is basically treating the agent like a long-running process, not a stateless prompt. That’s a different category of product.

@Cointelegraph The real signal here is consolidation. Instead of shipping more standalone tools, OpenAI is tightening the loop between thinking (ChatGPT), doing (Codex), and accessing information (browser). That’s closer to a full-stack productivity layer than just another app.

@RoundtableSpace What’s interesting is the implicit infrastructure layer here. If models decide when to go multi-agent, we’re entering a phase where optimization isn’t just about prompts but about how intelligence is routed behind the scenes.

🔖 If this saved you time, bookmark it.

♻️ Repost to help others build smarter.

🔔 Follow @GenAIbuilt for daily, practical AI insights.

x.com/GenAIbuilt/sta…

GenAI Built @GenAIbuilt

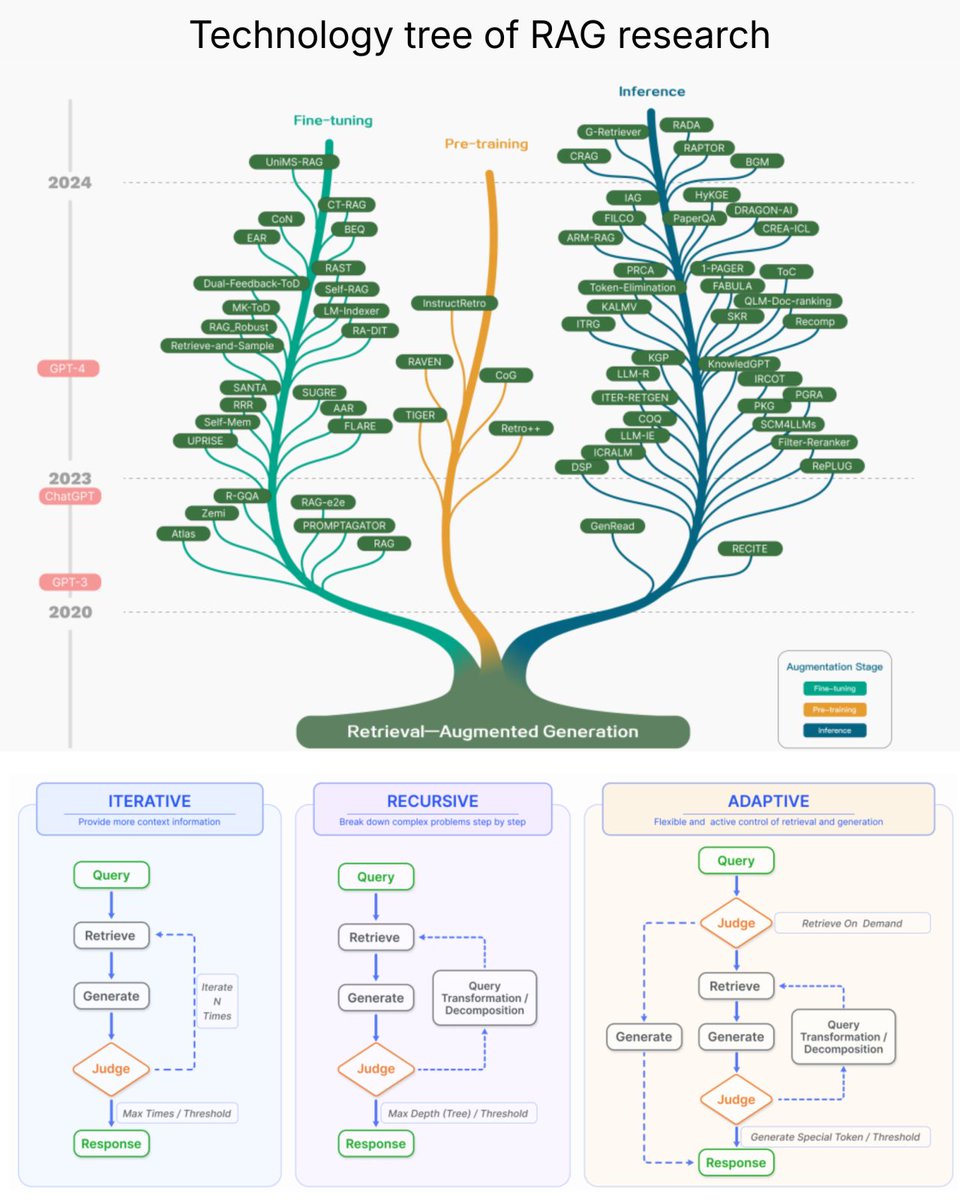

Are we actually following the roadmap to reliable AI?

Back in 2023, a clear direction emerged: RAG (Retrieval-Augmented Generation) as the path forward.

Because LLMs alone still struggle with:

• Hallucinations

• Outdated knowledge

• No traceability

GenAI Built reposted

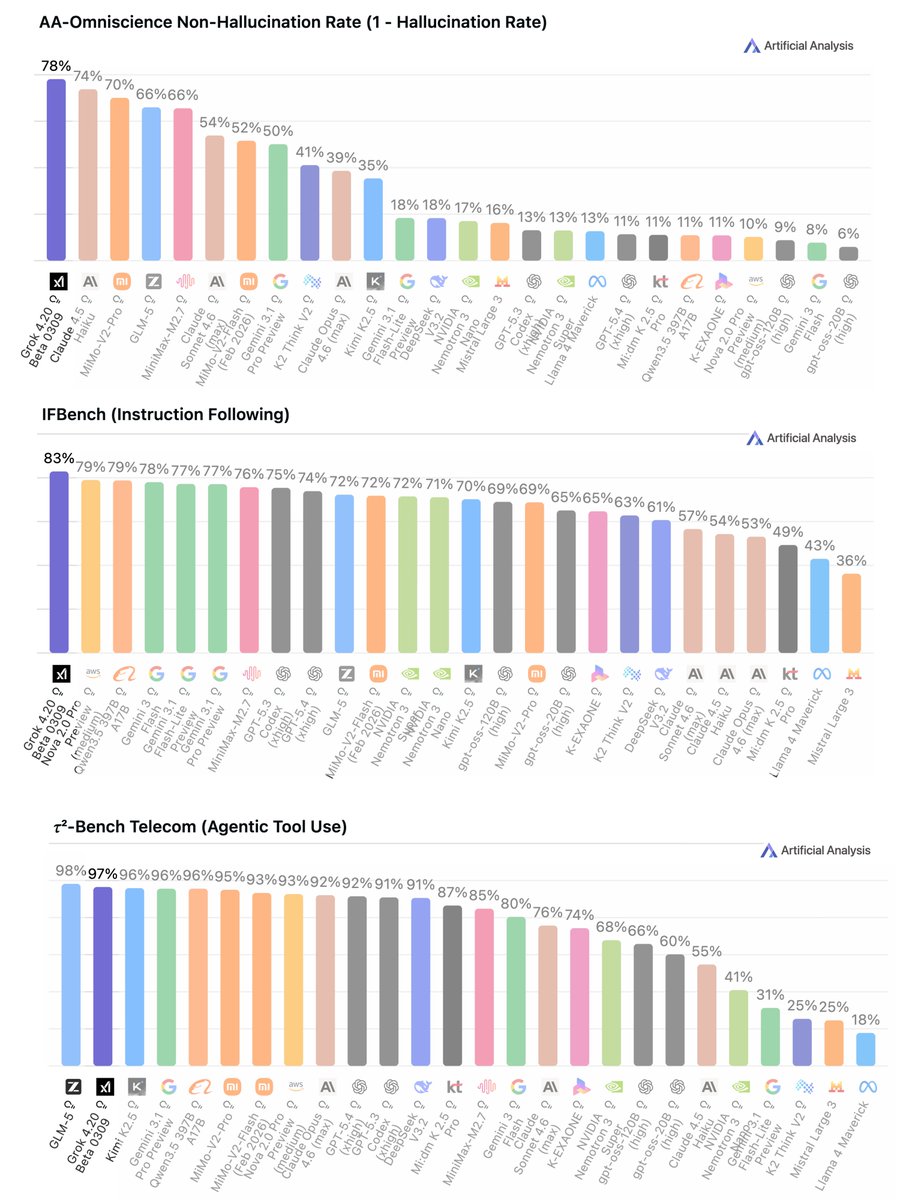

Grok 4.20 Beta benchmarks highlight an important shift in AI performance priorities.

🥇 Lowest hallucination rate (22%)

🥇 Highest instruction following accuracy (83%)

🥈 #2 in agentic tool use (97%)

It records the lowest hallucination rate ever measured across AI models.

@XFreeze This points to orchestration becoming the real product. It’s not just better models, but dynamic routing between reasoning modes. If it works, users stop choosing models and just focus on outcomes.

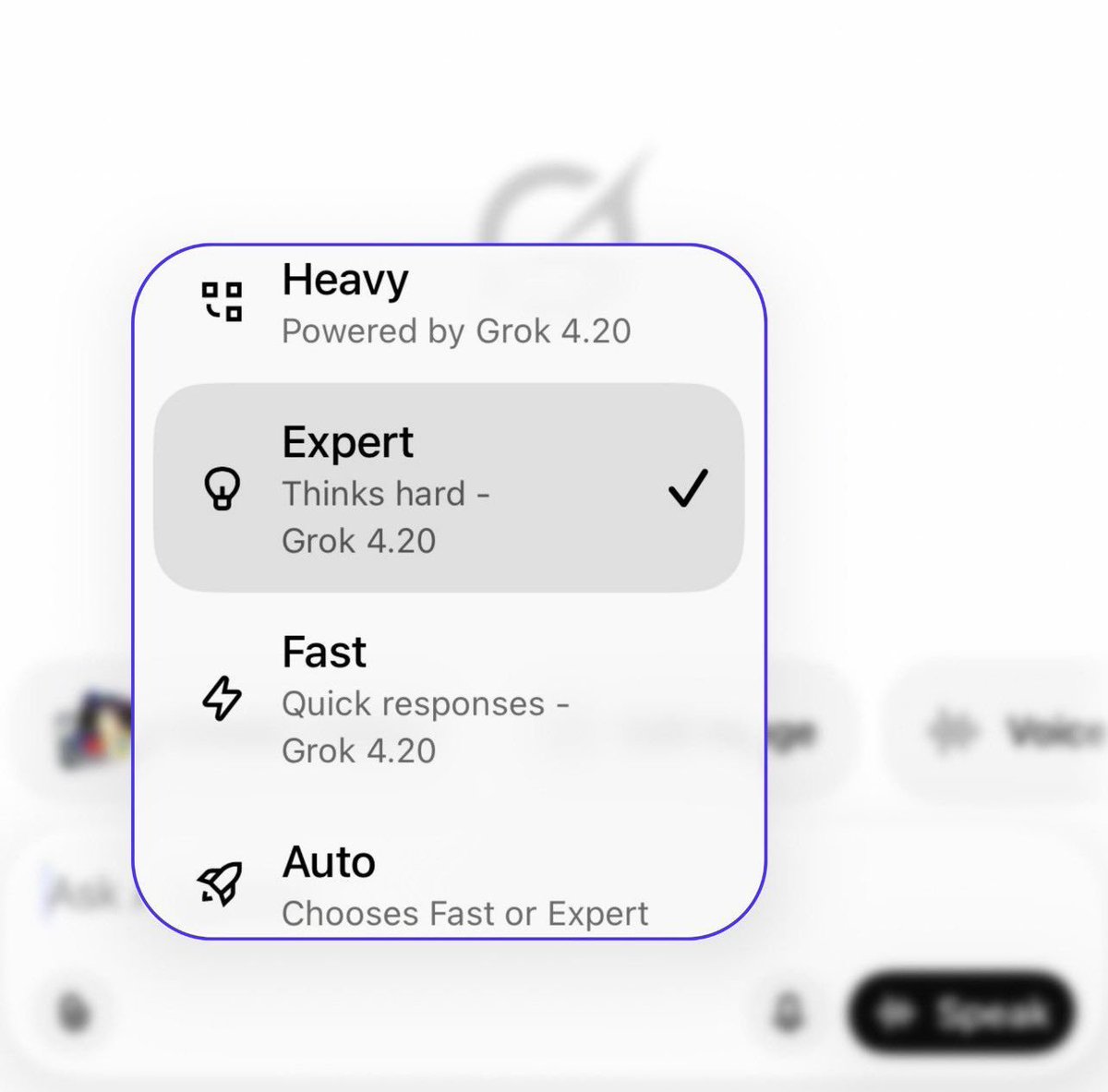

All Grok models have now been updated to Grok 4.20

In Auto mode, it switches between multi-agent and single-agent mode depending on the use case.

You can also choose:

Expert mode for complex problems - a full team of agents collaborating in parallel

Fast mode for simple tasks - lightning fast answers

Anthropic just ran 81,000 AI-led interviews.

And it signals a major shift in how we study humans.

We’ve spent years building AI systems that answer questions.

Now we’re entering a new phase:

AI that asks them.

Anthropic’s latest experiment turns Claude into an “interviewer” -

a system that can run real conversations with people at scale.

Not surveys.

Not forms.

But dynamic, adaptive interviews:

• Asking follow-up questions

• Adjusting based on responses

• Digging deeper into context

All in real time.

This matters because qualitative research has always had a scaling problem.

Interviews are powerful…

but expensive, slow, and limited in sample size.

Anthropic just showed that you can run tens of thousands of interviews —

and still preserve depth.

That’s new.

But the most interesting part isn’t the scale.

It’s what they’re studying:

How humans actually use AI.

Not what people say in surveys.

But how they think, decide, and interact in real workflows.

And the findings are subtle, but important:

→ People believe they use AI for collaboration

→ But in practice, usage splits almost evenly between automation and collaboration

That gap between perception and reality is where things get interesting.

It suggests something deeper:

We’re not just adopting AI tools.

We’re reshaping how we think about work.

Sometimes without realizing it.

There are also clear tensions:

• Increased efficiency vs. over-reliance

• Faster decisions vs. reduced independent thinking

• Assistance vs. substitution

These aren’t technical challenges.

They’re cognitive ones.

Which leads to a bigger shift:

AI is becoming a research system.

Claude isn’t just generating answers anymore.

It’s:

• collecting human signals

• structuring conversations

• extracting patterns at scale

In other words, it’s starting to observe us.

And that unlocks something we’ve never really had before:

A way to run ethnography at internet scale.

Understanding not just what people do —

but how they reason, adapt, and change over time.

Of course, this raises real questions:

• How do we ensure data quality in AI-led interviews?

• What biases are introduced when AI asks the questions?

• Where do we draw the line on privacy and consent?

Because when AI studies humans…

the stakes are different.

But zooming out, the trajectory is clear:

AI is moving from tool → collaborator → researcher.

And now, possibly,

observer of human behavior at scale.

The real implication isn’t just better research.

It’s this:

We’re building systems that don’t just understand the world -

but understand how humans understand the world.

That’s a very different kind of intelligence.

And we’re just getting started.

@namd1nh This feels like the early layer of “behavioral infrastructure” for AI. If systems can continuously run interviews, extract patterns, and feed that back into product decisions, you get a compounding loop of learning.

@sukh_saroy Interesting that it scores dimensions like rhythm and trust, not just grammar. That’s closer to how humans evaluate writing. Long term, I could see these scoring layers becoming feedback loops for agents where generation and critique run continuously until style targets are met.

🚨Breaking: Someone built a Claude skill file that strips AI writing patterns from your prose.

It's called Stop Slop. And it's not a grammar checker.

It's a structured set of rules that teaches Claude exactly what AI writing sounds like -- and how to rewrite it so it doesn't.

Here's what it catches:

→ Throat-clearing openers ("In today's fast-paced world...")

→ Emphasis crutches ("It's not just X, it's Y")

→ Tripling structures ("fast, reliable, and powerful")

→ Immediate question-answers ("What does this mean? Everything.")

→ Binary contrasts and dramatic fragmentation

→ Business jargon and rhetorical setups that signal AI instantly

→ Metronomic endings that make every paragraph feel the same

Here's the wildest part:

It scores your writing on 5 dimensions -- directness, rhythm, trust, authenticity, and density.

Below 35/50? Revise before you publish.
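
The 35/50 gate can be sketched in a few lines. This is an illustration, not code from the Stop Slop skill file: the post only gives the five dimensions and the 50-point total, so the 0 to 10 range per dimension and the helper names `total_score` and `needs_revision` are assumptions.

```python
# The five dimensions named in the post.
DIMENSIONS = ("directness", "rhythm", "trust", "authenticity", "density")

# Assumption: each dimension is scored 0-10, giving the 50-point total.
MAX_PER_DIMENSION = 10
REVISE_BELOW = 35  # threshold from the post: below 35/50 means revise


def total_score(scores: dict) -> int:
    """Sum the five dimension scores after checking they are present and in range."""
    missing = [name for name in DIMENSIONS if name not in scores]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    for name in DIMENSIONS:
        if not 0 <= scores[name] <= MAX_PER_DIMENSION:
            raise ValueError(f"{name} must be between 0 and {MAX_PER_DIMENSION}")
    return sum(scores[name] for name in DIMENSIONS)


def needs_revision(scores: dict) -> bool:
    """True when the total falls below the publish threshold."""
    return total_score(scores) < REVISE_BELOW


draft = {"directness": 8, "rhythm": 6, "trust": 7, "authenticity": 6, "density": 7}
print(total_score(draft), needs_revision(draft))  # 34 True
```

A draft that scores exactly 35 passes in this sketch, since the post says to revise only below 35.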

Drop SKILL.md into Claude Projects or your system prompt. That's it.

Your Claude-written content has AI fingerprints all over it. This removes them.

100% Open Source. MIT License.

(Link in the comments)

@RoundtableSpace This is a good example of how “memory” becomes a liability at scale. Agent frameworks default to accumulating context, but without lifecycle management, you end up paying a performance tax. Feels like we’ll need built-in memory pruning strategies, not manual fixes like this.

Is your OpenClaw becoming slower with time?

That’s because every cron job gets loaded into context

Fix it with this prompt:

“Check how many session files are in ~/.openclaw/agents/main/sessions/ and how big sessions.json is. If there are thousands of old cron session files bloating it, delete all the old .jsonl files except the main session, then rebuild sessions.json to only reference sessions that still exist on disk.”

This will delete all the session data around your cron outputs.

If you run a ton of cron jobs, this is a tremendous amount of bloat that does not need to be loaded into context and is MAJORLY slowing down your OpenClaw.

If for some reason you want to keep some of this cron session data in memory, then don't have your OpenClaw delete ALL of it. For me, all the outputs automatically save to a Convex database anyway, so there was no reason to keep it all in context.
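
Before deleting anything, it may help to measure the bloat first. The sketch below covers only the "check" half of the prompt and never deletes or rewrites files. The `audit_sessions` helper is a hypothetical name, and it assumes sessions.json sits in the same directory as the .jsonl files, which the post does not state.

```python
from pathlib import Path


def audit_sessions(sessions_dir: Path) -> dict:
    """Count .jsonl session files and report sizes in bytes. Read-only."""
    if not sessions_dir.is_dir():
        return {"jsonl_count": 0, "jsonl_bytes": 0, "index_bytes": 0}
    jsonl_files = sorted(sessions_dir.glob("*.jsonl"))
    # Assumption: the index file lives alongside the session files.
    index = sessions_dir / "sessions.json"
    return {
        "jsonl_count": len(jsonl_files),
        "jsonl_bytes": sum(f.stat().st_size for f in jsonl_files),
        "index_bytes": index.stat().st_size if index.exists() else 0,
    }


if __name__ == "__main__":
    # Directory taken from the prompt above.
    report = audit_sessions(Path.home() / ".openclaw" / "agents" / "main" / "sessions")
    print(report)
```

If the count runs into the thousands, that is the situation the prompt is meant to fix. Backing up the directory before any cleanup is a sensible precaution.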

Credits: @AlexFinn
