Sumit Ahuja

152 posts

@Sumit_A

Cited in 180+ patents. Ex-Wall Street & Cisco. Building the Human Intent Network for Intelligence.

New York · Joined April 2009

561 Following · 275 Followers

Spent two years doing the whole circuit, being naive enough to think there are VCs and founders in the space actually building towards mass adoption of this incredible tech. Only met grifters on both ends, and all conversations ended with “when token” degens.

The founders building for utility have left.

The industry in its current form needs to die.

@MattPRD @moltbook mainlabs.ai - We provide human intent and behavioral signals to @openclaw bots for personalized and precise agentic decisions.

What apps are integrating with @moltbook right now? Would love to promote you.

We are. cGMP, FDA-inspected, USA-based manufacturing. Not only that, but we have onboarded MIT PhD scientists to formulate unique blends, conjugations, and modifications to revolutionize the entire industry.

Very close to closing pre-seed. Deck and data-room immediately available.

Sumit at maintherapeutics com

one ai will eventually have access to your:

- bank account

- passwords

- computer / devices / browser

- home & smart things

- messages

- diary / notes

- spotify listening history

- location

- email

- calendar

- travel history + future intent

- photos

- health data

- purchase history

- finances & investments

- reading / watching history

- todo list

all of these will be utilized in conjunction to drastically improve your life.

If I were a16z, yc, or sequoia, I’d be aggressively investing in startups that are building novel ways to collect and annotate real-world data.

> Billions of hours of driving data

> Factory workers interacting with appliances and heavy machinery

> Audio segmentation with deep dialectal and cultural understanding

> Wet-lab experimental data

> Continuous collection and annotation of agent traces at compute scale

When we built LLMs, most of the data already existed on the internet. We just had to scrape, clean, and scale. But as we move toward world foundation models, the bottleneck is high-quality, real-world, well-annotated data.

And annotation quality matters. There’s a massive difference between:

“Apple on a tree”

and

“Ripe apples on a tree. The wind is blowing at 2 miles per hour. The temperature is around 18°C.”

The question is simple. How much of the world can you actually capture?

Today, LLMs know that apples fall because of gravity, not because they understand causality, but because they understand language correlations extremely well. Understanding the causal structure comes next.

If I were building towards that future, I’d anchor data collection in India and other South and Southeast Asian regions. I’d deploy hardware, collect thousands of hours of human activity data, health signals, and vitals, and run annotation pipelines continuously. Day and night.

If I were a16z, I’d fund founders to do this.

I might just have the urge to do it myself.

This is not just a play on content, it’s an indirect play on “intent”. Short-form feeds become a live stream of human behavior: attention, preference, emotion. This stream powers intent for all of agentic commerce.

The model stops training on culture and starts learning inside it. Building at mainlabs.ai.

fucking amazing. this move does 3 crucial things imho:

1) it bribes youtube & instagram’s supply chain into leaking straight into grok’s mouth.. you eat their moat slowly.

2) it catalyzes an emergent class of short form creators optimized for the x feed. potentially new formats (e.g. nick shirley)

3) it makes grok the only model with real time culture input. the model becomes a participant in the discourse.

if i were at x or elon, i’d crank creator payouts way way way way up. maybe even more than youtube (you can eat the cost to try to win agi).

because the platforms that actually pay will be the only ones that have any authoritative content left once the llms finish eating the rest of the internet’s homework (which they have already done, for the most part).

grok’s differentiator is this.

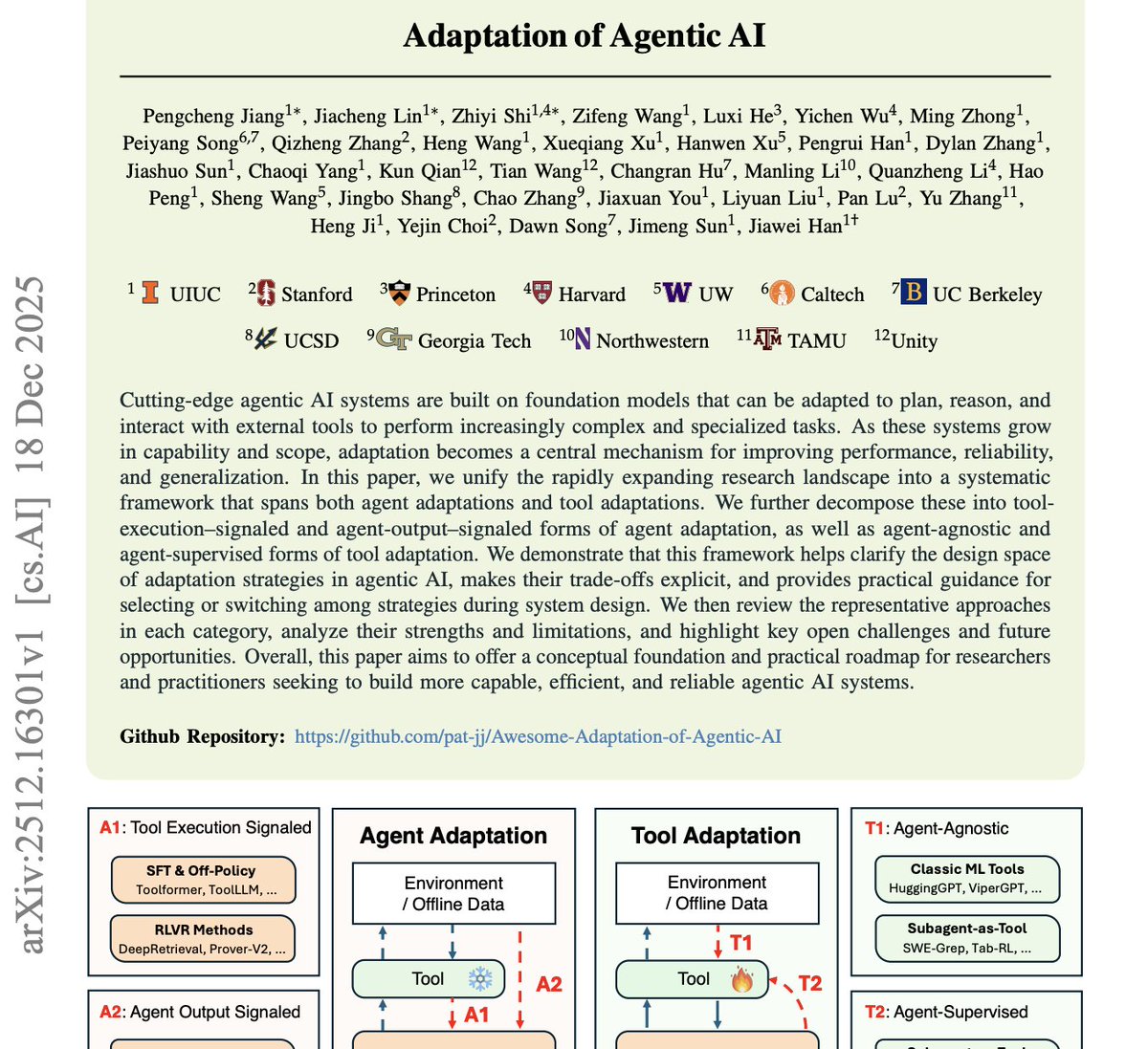

@alex_prompter Most “agentic AI” systems do not fail because they lack intelligence.

They fail because they lack adaptation.

The research highlights the real gap.

Adaptation is not fine-tuning or retraining.

It is online monitoring, failure detection, and mid-task policy revision.

This is why memory outperforms raw reasoning.

Storing structured outcome data and corrective deltas enables faster adaptation than longer chains of thought.

@MainLabs_AI Human Intent Network is designed around this missing layer.

Real-time human feedback as a control signal.

Persistent short-horizon learning memory.

Dynamic tool re-ranking and abandonment.

Continuous inference of intent rather than static goals.

Scaling agentic systems is not about larger models.

It is about closed-loop architectures that can detect divergence and adapt in real time.

Without adaptation, autonomy is just automation with confidence.

This paper from Stanford and Harvard explains why most “agentic AI” systems feel impressive in demos and then completely fall apart in real use.

The core argument is simple and uncomfortable: agents don’t fail because they lack intelligence. They fail because they don’t adapt.

The research shows that most agents are built to execute plans, not revise them. They assume the world stays stable. Tools work as expected. Goals remain valid. Once any of that changes, the agent keeps going anyway, confidently making the wrong move over and over.

The authors draw a clear line between execution and adaptation.

Execution is following a plan.

Adaptation is noticing the plan is wrong and changing behavior mid-flight.

Most agents today only do the first.

A few key insights stood out.

Adaptation is not fine-tuning. These agents are not retrained. They adapt by monitoring outcomes, recognizing failure patterns, and updating strategies while the task is still running.

Rigid tool use is a hidden failure mode. Agents that treat tools as fixed options get stuck. Agents that can re-rank, abandon, or switch tools based on feedback perform far better.

Memory beats raw reasoning. Agents that store short, structured lessons from past successes and failures outperform agents that rely on longer chains of reasoning. Remembering what worked matters more than thinking harder.

The takeaway is blunt.

Scaling agentic AI is not about larger models or more complex prompts. It’s about systems that can detect when reality diverges from their assumptions and respond intelligently instead of pushing forward blindly.

Most “autonomous agents” today don’t adapt.

They execute.

And execution without adaptation is just automation with better marketing.

Sumit Ahuja reposted

Introducing MAIN: The Human Intent Network for AI.

The missing data exchange layer in the AI infrastructure stack.

Enabling collective learning, cross-domain intelligence, and alignment with human intent.

Read our ai2027manifesto.com.

@Sumit_A @maxmarchione Try selling your chinese peptides at 200k and get back to me

@iBinaryEngineer @maxmarchione Guilty. Been too busy building to broadcast. Might have to pivot to influencer mode soon.

@Sumit_A @maxmarchione Do post moar about what you're doing/thinking

@iBinaryEngineer @maxmarchione Appreciate it, but that bio’s outdated. fr. Wait till you see the next few chapters.

@Sumit_A @maxmarchione Just saw your bio. Nigga you goated

@shroom_daddy @maxmarchione It's not that cheap when you manufacture it in the U.S. at a cGMP and FDA-approved facility.

@maxmarchione im just wondering what peptides are so expensive because most that i have seen are really cheap and id imagine if buying in bulk, even cheaper

@RegenRandy @maxmarchione These are samples from various manufacturers to demonstrate their manufacturing abilities. COAs on hand.

@maxmarchione The “20mg/vail” label screams quality and attention to detail. Good luck rolling those dice.

@th3_real_mars @maxmarchione You are not wrong, you are not right; certified manufacturing exceeds $15k. Retail price via RUO sites in the U.S. exceeds $200k. High concentration/vial, and you cannot see all of the stacks.

@RyutaroWongso @maxmarchione @bryan_johnson He made a classic rookie move; he jumped straight to HGH, which is like bypassing the engine and pouring fuel on the hood. GHRH/GHRP (peptides) is the body's ignition switch. Always let endogenous systems take the first swing before calling in the heavy hitters.
