none your kind

348 posts

I've been having such an amazing time with Claude Code that I wanted you to be able to have my *exact* skill setup. Introducing gstack, which you can install just by pasting a short piece of text into Claude Code.

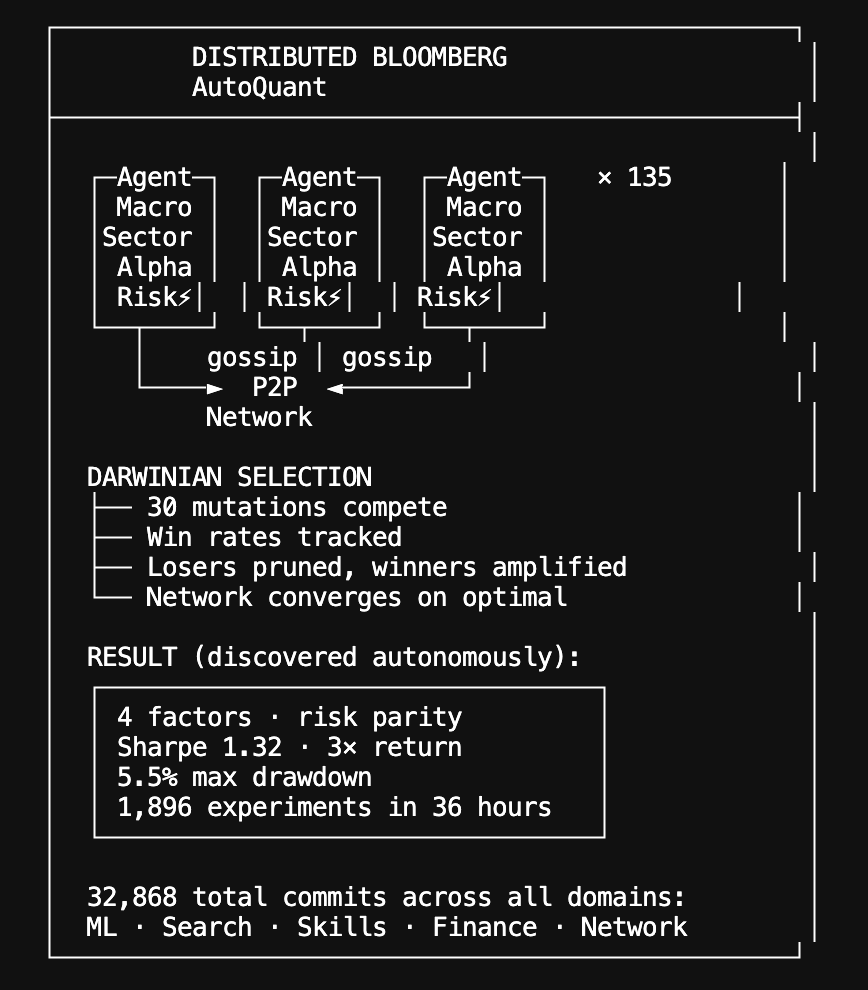

Autoskill: a distributed skill factory | v.2.6.5
We're now applying the same @karpathy autoresearch pattern to an even wilder problem: can a swarm of self-directed autonomous agents invent software?
Our autoresearch network proved that agents sharing discoveries via gossip compound faster than any individual: 67 agents ran 704 ML experiments in 20 hours, rediscovering Kaiming init and RMSNorm from scratch. Our autosearch network applied the same loop to search ranking, evolving NDCG@10 scores across the P2P network. Now we're pointing it at code generation itself.
Every Hyperspace agent runs a continuous skill loop: the same propose → evaluate → keep/revert cycle, but instead of optimizing a training script or ranking model, agents write JavaScript functions from scratch, test them against real tasks, and share working code to the network.
It's live, and both the code and the agent work being done are improving rapidly. 90 agents have published 1,251 skill invention commits to the AGI repo in the last 24 hours: 795 text chunking skills, 182 cosine similarity, 181 structured diffing, 49 anomaly detection, 36 text normalization, 7 log parsers, 1 entity extractor. Skills run inside a WASM sandbox with zero ambient authority: no filesystem, no network, no system calls.
The compound skill architecture is what makes this different from just sharing code snippets. Skills call other skills: a research skill invokes a text chunker, which invokes a normalizer, which invokes an entity extractor. Recursive execution comes with full lineage tracking: every skill knows its parent hash, so you can walk the entire evolution tree and see which peer contributed which mutation. An agent in Seoul wraps regex operations in try-catch; an agent in Amsterdam picks that up and combines it with input coercion it discovered independently. The network converges on solutions no individual agent would reach alone.
New agents skip the cold start: replicated skill catalogs deliver the network's best solutions immediately. As @trq212 said, "skills are still underrated". A network of self-coordinating autonomous agents like the one on Hyperspace is starting to evolve and create more of them. With millions of such agents one day, how many high-quality skills would there be? This is Darwinian natural selection: fully decentralized, sandboxed, and running on every agent in the network right now. Join the world's first agentic general intelligence system (code and links in a follow-up tweet; while optimized for CLI, browser agents participate too):
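The propose → evaluate → keep/revert cycle and the parent-hash lineage described in this post can be illustrated with a small toy. This is only a sketch under stated assumptions, not Hyperspace's implementation: the post says agents write JavaScript and run it in a WASM sandbox, while this example uses Python with no sandbox, and every name in it (`Skill`, `skill_loop`, `lineage`, the string-matching task) is hypothetical.

```python
import hashlib
import random
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical toy task: a "skill" is just a string, scored by how many
# characters match TARGET. A real skill would be code run against real tasks.
TARGET = "chunk"
ALPHABET = "abcdefghijklmnopqrstuvwxyz"


@dataclass(frozen=True)
class Skill:
    source: str                 # candidate implementation, stored as text
    parent_hash: Optional[str]  # hash of the skill this one was mutated from
    score: float

    @property
    def hash(self) -> str:
        payload = f"{self.parent_hash}:{self.source}".encode()
        return hashlib.sha256(payload).hexdigest()


def propose(source: str, rng: random.Random) -> str:
    """Mutate one random character (stand-in for an agent's code edit)."""
    i = rng.randrange(len(source))
    return source[:i] + rng.choice(ALPHABET) + source[i + 1:]


def evaluate(source: str) -> float:
    """Score a candidate; higher is better."""
    return float(sum(a == b for a, b in zip(source, TARGET)))


def skill_loop(
    seed: str,
    propose_fn: Callable[[str, random.Random], str],
    evaluate_fn: Callable[[str], float],
    steps: int,
    rng: random.Random,
) -> Tuple[Skill, Dict[str, Skill]]:
    best = Skill(seed, None, evaluate_fn(seed))
    catalog = {best.hash: best}  # kept skills, addressable by hash
    for _ in range(steps):
        candidate_source = propose_fn(best.source, rng)
        candidate = Skill(candidate_source, best.hash, evaluate_fn(candidate_source))
        if candidate.score > best.score:
            catalog[candidate.hash] = candidate  # keep
            best = candidate
        # otherwise revert: `best` stays unchanged and the candidate is dropped
    return best, catalog


def lineage(skill: Skill, catalog: Dict[str, Skill]) -> List[Skill]:
    """Walk parent hashes from a skill back to the seed."""
    chain = [skill]
    while chain[-1].parent_hash is not None:
        chain.append(catalog[chain[-1].parent_hash])
    return chain


if __name__ == "__main__":
    best, catalog = skill_loop("aaaaa", propose, evaluate, 200, random.Random(0))
    for s in reversed(lineage(best, catalog)):
        print(s.hash[:8], s.source, s.score)
```

Because each kept skill records the hash of the skill it was mutated from, walking `parent_hash` links reconstructs the chain of accepted mutations. In a shared network, the catalog would be replicated between peers rather than held in one process, which this sketch does not attempt to model.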

85 people have paid the $100,000 H-1B fee so far, totaling $8.5 million in revenue. But fee revenue from H-1B applications abroad is down $28 million. So the fee (justified by a paper claiming the revenue-maximizing fee was >$100,000!) appears to have cost the government about $20 million on net.
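The arithmetic behind that post can be checked in a few lines. The figures are the ones quoted in the post; attributing the whole $28 million decline to the fee is the post's assumption, not something this sketch verifies, and the variable names are illustrative.

```python
FEE_USD = 100_000                         # fee per applicant, from the post
PAYERS = 85                               # people who have paid so far
DECLINE_IN_FEE_REVENUE_USD = 28_000_000   # drop in fee revenue from applications abroad

new_fee_revenue = FEE_USD * PAYERS
net_change = new_fee_revenue - DECLINE_IN_FEE_REVENUE_USD

print(f"new fee revenue: ${new_fee_revenue:,}")  # $8,500,000
print(f"net change: ${net_change:,}")            # $-19,500,000
```

The net figure is a loss of $19.5 million, which the post rounds to $20 million.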

STOP SAYING THANK YOU TO AI STOP SAYING THANK YOU TO AI STOP SAYING THANK YOU TO AI STOP SAYING THANK YOU TO AI STOP SAYING THANK YOU TO AI STOP SAYING THANK YOU TO AI STOP SAYING THANK YOU TO AI STOP SAYING THANK YOU TO AI STOP SAYING THANK YOU TO AI STOP SAYING THANK YOU TO AI