David López Mateos

57 posts

@SenorScience

Founder and CTO: @ComputeDesk. Exited Pace Revenue to FLYR. Formerly VP of Research at Winton, particle physics researcher at Harvard and CERN

London, England · Joined July 2018

63 Following · 84 Followers

@DevanshuXi @modal @modal gets it. Pricing in the industry is like pricing for airlines in the 70s. It's only a matter of time before we move beyond cost-based pricing and all the learnings from algorithmic pricing hit neoclouds.

It's one of those companies that makes me regret not being in America. 😭

Fr though, genuinely one I wanted to work with: @modal

I love their arbitrage algorithm for efficient GPU pricing computation.

Erik Bernhardsson @bernhardsson

Insane that we’re triple digit headcount now!

Very valid point, @YannikHehemann. Inference providers are very good at integrating "smallish" amounts of capacity, so it's probably not that. Our composite index for Hopper and Blackwell does that, but you are right that there's work to do on adoption and index construction transparency.

I am no expert, but it seems that one problem is batch size. Large companies, being the major customers of AI compute, could have difficulty integrating small amounts of spare capacity, which is why I think spot prices are not that useful for pricing the AI compute market.

Also, it would be very useful to design an index according to real-world allocation, meaning weighting it by how much AI compute is actually priced by each specific mechanism.
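
The allocation-weighted index suggested above could be sketched roughly as follows. This is only an illustration of volume weighting: the `Segment` type, the mechanism names, and every number are hypothetical placeholders, not market data or any provider's actual index methodology.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    name: str         # pricing mechanism, e.g. "reserved" (hypothetical label)
    price: float      # USD per GPU-hour under this mechanism
    gpu_hours: float  # volume actually transacted under this mechanism


def allocation_weighted_index(segments):
    """Average price where each mechanism counts in proportion to its volume."""
    total_hours = sum(s.gpu_hours for s in segments)
    if total_hours <= 0:
        raise ValueError("total volume must be positive")
    return sum(s.price * s.gpu_hours for s in segments) / total_hours


# Hypothetical inputs for illustration only.
segments = [
    Segment("reserved", 2.00, 800.0),
    Segment("on_demand", 2.50, 150.0),
    Segment("spot", 1.50, 50.0),
]

index = allocation_weighted_index(segments)
print(f"allocation-weighted index: ${index:.2f}/hr")
```

With weights like these, a thinly traded spot price barely moves the index, which is the point of weighting by real-world allocation.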

This is what happens when there's no mechanism to reprice locked capacity. If you're sitting on a $1.70 contract and the market is at $2.35, you hoard. The missing piece isn't more supply, it's contract liquidity. Let people resell or transfer committed compute and the price signal starts working again.
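
A back-of-the-envelope sketch of the hoarding incentive, using the two prices from the post. The function name and the 1,000 GPU-hour volume are assumptions added for illustration.

```python
def locked_contract_gain(contract_price, market_price, gpu_hours):
    """Value of holding capacity priced below the current market rate."""
    spread = market_price - contract_price           # USD per GPU-hour
    premium_pct = spread / contract_price * 100.0    # market premium over contract
    return spread, premium_pct, spread * gpu_hours


# Prices from the post; the 1,000 GPU-hours figure is a hypothetical volume.
spread, premium_pct, total_gain = locked_contract_gain(1.70, 2.35, 1_000)
print(f"spread: ${spread:.2f}/hr, premium: {premium_pct:.1f}%, gain: ${total_gain:.0f}")
```

Without a way to resell or transfer the contract, that spread can only be captured by continuing to hold and use the capacity.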

"On-Demand GPU rental capacity is sold out across all GPU types – those that have locked up on-demand instances are not willing to relinquish this capacity back into the pool despite recent price hikes. Trying to find GPU compute in early 2026 has been like trying to book airplane tickets on the last flight out, high prices, and almost no availability."

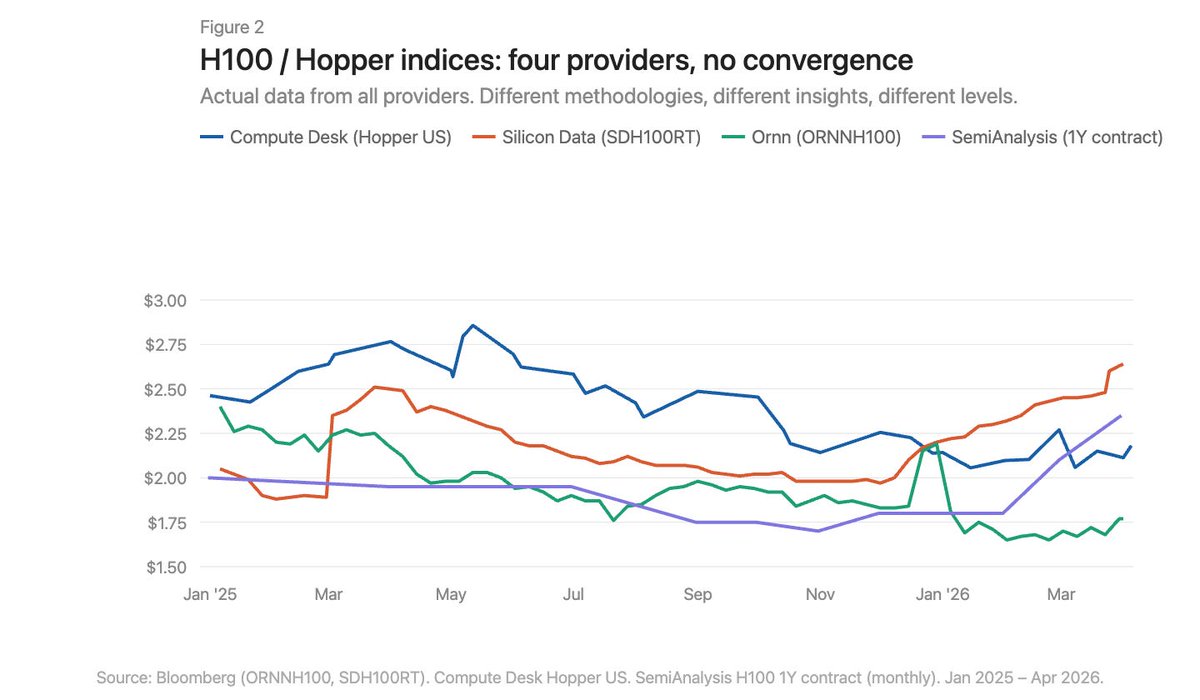

Those price differences are exactly the problem we've been looking at. SemiAnalysis says $2.35, these marketplaces quote $1.50–1.79, Silicon Data says $2.64, our index shows $2.18. Whether centralised or decentralised, nobody agrees on what an H100 costs. That's not a mature market: it's a market still figuring out what it's pricing.

just read @SemiAnalysis_'s latest report on the GPU rental market. a few numbers worth noting:

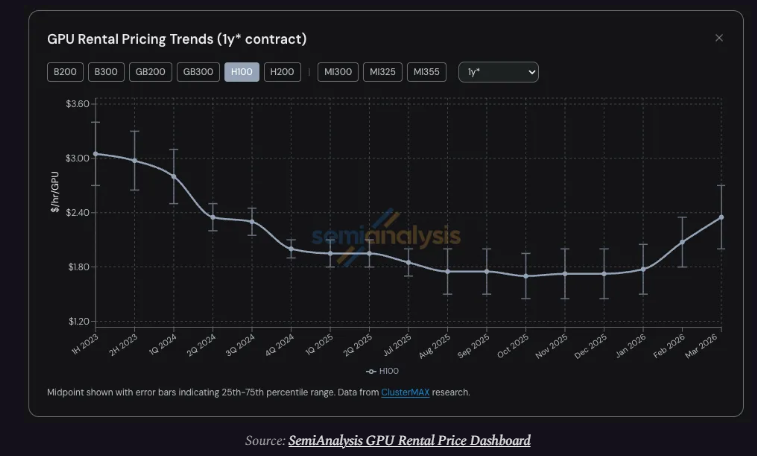

– H100 rental prices up 40% in 5 months ($1.70 → $2.35/hr)

– on-demand capacity: sold out across all GPU types

– all supply coming online until Aug 2026 already pre-booked

– driver: agentic AI workflows consuming compute at parabolic scale

centralized clouds are at full capacity and repricing aggressively with the high demand of tokens from #AI models: Claude, ChatGPT, Gemini,…

the gap this creates is real and I think decentralized GPU marketplaces exist precisely for this moment.

Here are the 3 projects building the alternative for the #GPU race:

1/ @akashnet: the open-source cloud marketplace.

34,300 new leases in Q4 2025, GPU utilization near 80%, and just crossed $5M in compute spend in the first 90 days of 2026, an all-time high.

avg H100 rental: $1.53/hr.

2/ @TargonCompute: enterprise-grade GPU compute.

highest-revenue subnet on $tao (~$10.4M projected ARR). 20B+ inference tokens/day across 1,500+ nodes. raised $10.5M Series A. co-authored a whitepaper with Intel on decentralized compute using Intel TDX.

avg H100 rental: $1.79/hr.

3/ @lium_io: the GPU rental layer.

500+ H100s onboarded in early 2026. built for short-term burst compute that centralized clouds can't offer fast enough. rental revenues now outpacing token emissions.

avg H100 rental: $1.50/hr.

for context: the centralized market is at $2.35/hr and climbing. these three are offering the same hardware at 35–55% cheaper with availability when AWS has none.

@stevehou @Silicon_Data A lot of capacity is coming online for B200s in the next 2 months. Whether that shows up in pricing depends on which index you're watching. Ours and Silicon Data's disagree by over $2/hr on the B200 right now. The market is moving fast, but price discovery hasn't caught up.

Normalized GPU rental price appreciation. AI compute prices are going up across the board, especially the newest installed Blackwell B200 chips followed by H100 and A100. AI demand is surging, showing no sign of slowing down.

Credit: @Silicon_Data

@SmallCapSnipa Which index? SemiAnalysis says $2.35. Silicon Data says $2.64. Ornn says $1.77. Our Hopper US index shows $2.18. Same chip, same month. The direction is real. The magnitude depends entirely on who you ask.
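
A quick sketch of how far apart those four quotes are. The prices are the ones cited in the post; the dictionary keys and the choice of range over mean as the dispersion measure are assumptions for illustration.

```python
from statistics import mean

# H100 rental quotes in USD per GPU-hour, as cited in the post.
quotes = {
    "SemiAnalysis": 2.35,
    "Silicon Data": 2.64,
    "Ornn": 1.77,
    "ComputeDesk Hopper US": 2.18,
}

low = min(quotes.values())
high = max(quotes.values())
spread = high - low                     # absolute disagreement in USD/hr
average = mean(quotes.values())         # simple unweighted mean of the quotes
relative_spread = spread / average      # disagreement relative to the mean

print(f"range: ${low:.2f} to ${high:.2f} (spread ${spread:.2f}/hr)")
print(f"mean: ${average:.3f}/hr, spread is {relative_spread:.0%} of the mean")
```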

@The_AI_Investor The question is: profitable at what price? Four indices track H100 rental rates right now and they disagree by nearly $1/hr. On-demand capacity is sold out at current rates, which means even the highest index is probably below the clearing price. It could be more profitable...

@tengyanAI Thanks @tengyanAI, please DM. Happy to have a chat!

@SenorScience well said. we've been trying to get a sense of compute costs but data is quite sparse. would love to chat more, could there be ways for us to collaborate

@0xmoonrunner Thanks @0xmoonrunner. There is definitely some truth to this. However, physical markets will trade the actual good, and there will be differentials between different goods. Financial markets will probably converge to one or two proxies for the whole market.

@SenorScience maybe the issue is that we want to force one market out of what are really many markets. why not have a separate benchmark for training and for inference, with units of measurement that represent what the user wants to pay for?

@gustofied Thanks @gustofied. Energy prices feed into all of this too, so I'm not sure this is a case of one market being more important than another. This is a new market for sure.

in some ways everything is more and more chained to gpu prices, in the sense that the common denominator / mover, the "god" market of all markets, is compute. it eats more share of the pie, oil is a competing share, and thus it's not countries but these two market players who are positioning themselves structurally across the world, as oil perhaps heads for its top, just as the debt market had its final turn in 2020

@0xP4mP1t Just a little bit ;) To be fair, this is a very nascent space. The surprising thing would be if they were efficient. It took other commodities decades to become efficient.

@Lazarus_Capital Thanks @Lazarus_Capital. I don't think we can read that much into these data, unfortunately. There's no deep analysis behind these prices. Lots of people are even doing cost-based pricing on data centers!

Reserved matters the most since it prices in spot/on-demand and the future curve. It also enables companies to model out their revenue stream to justify the investment in the GPUs and DC.

I'm surprised to see B200 reserved pricing stay flat to down while H100 is rising. Hope they're comparing 1-year contracts with 1-year contracts and not the 1st year of a 5-year contract...

David López Mateos @SenorScience

4/ The B200 story is wilder still. Our on-demand index hit $8.13 in March. Other indices registered the same move from levels $2+ lower. Five months of history, and the benchmarks can't agree on where the market even is.

6/ There is no GPU price. Not yet. Full analysis with all the data: computedesk.substack.com/p/there-is-no-…
