keysersoze

754 posts

@Surajdotdot7

building things with AI. Claude Code | agents | automation. sharing what I learn, for people who want to build, not just watch

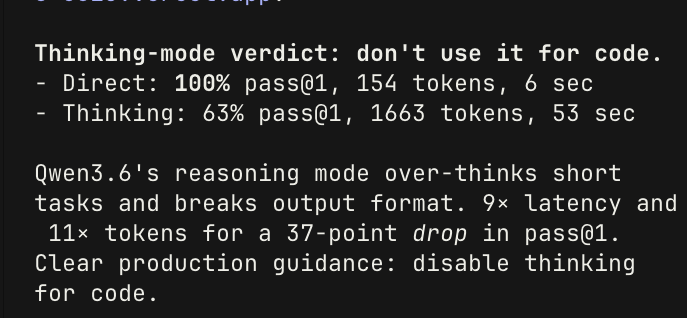

@Surajdotdot7 There are benchmarks included for agentic tool use

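Qwen3.6-35B-A3B is too strong!!! I ran ts-bench with it paired with opencode, vibe-local, GitHub Copilot, qwencode, and claude code, and it got a perfect score in every case. On top of that, it completes tasks about as fast as Claude sonnet 4.6 and Opus 4.6. Qwen3.5-27B was also impressive, but Qwen3.6-35B-A3B, like the Red Comet, has 3x the inference speed of the 27B, so the big gain, as the benchmark results show, is a much shorter time to task completion.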

Introducing Claude Design by Anthropic Labs: make prototypes, slides, and one-pagers by talking to Claude. Powered by Claude Opus 4.7, our most capable vision model. Available in research preview on the Pro, Max, Team, and Enterprise plans, rolling out throughout the day.