Pete Soderling (@petesoder)

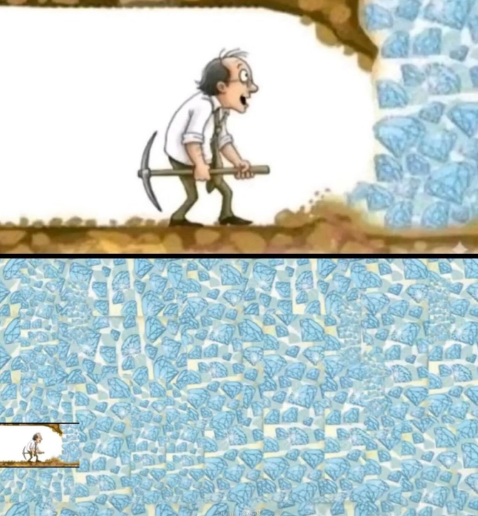

Hyperscaler capex continues to explode: roughly $600–700B in 2026.

The story behind this infrastructure sprint is the pivot from training giant models to inference as the dominant GPU consumer. Training is a “bursty” workload: you spin up a massive cluster, run the job, then shut it down. Inference is the opposite. Agents sit in the loop continuously, serving requests day and night. The utilization pattern looks less like “run a marathon once” and more like “stay on the treadmill forever.”

And text generation is just the warm-up. It’s relatively efficient. As soon as you move into speech, audio, video, and vision - especially with real-time latency targets - compute per request jumps, often by 10–100x over plain text tokens depending on modality and constraints. Add robots with cameras and sensors, and compute becomes a hard gating factor again.

As inference demand grows, efficient inference systems become the bottleneck - and a huge opportunity - for the foreseeable future. That's why inference matters right now.

So at @AICouncilConf 2026, we’re launching a new Inference Systems track focused on exactly this: the systems and infrastructure behind real-time multimodal AI. Curated by @BEBischof, it’s aimed at engineers who ship: people optimizing inference, running multimodal workloads and fighting for performance in production.

Join us in San Francisco in May: aicouncil.com/sf-2026