Ke Wang

@wangkeml

PhD student in Machine Learning @ EPFL

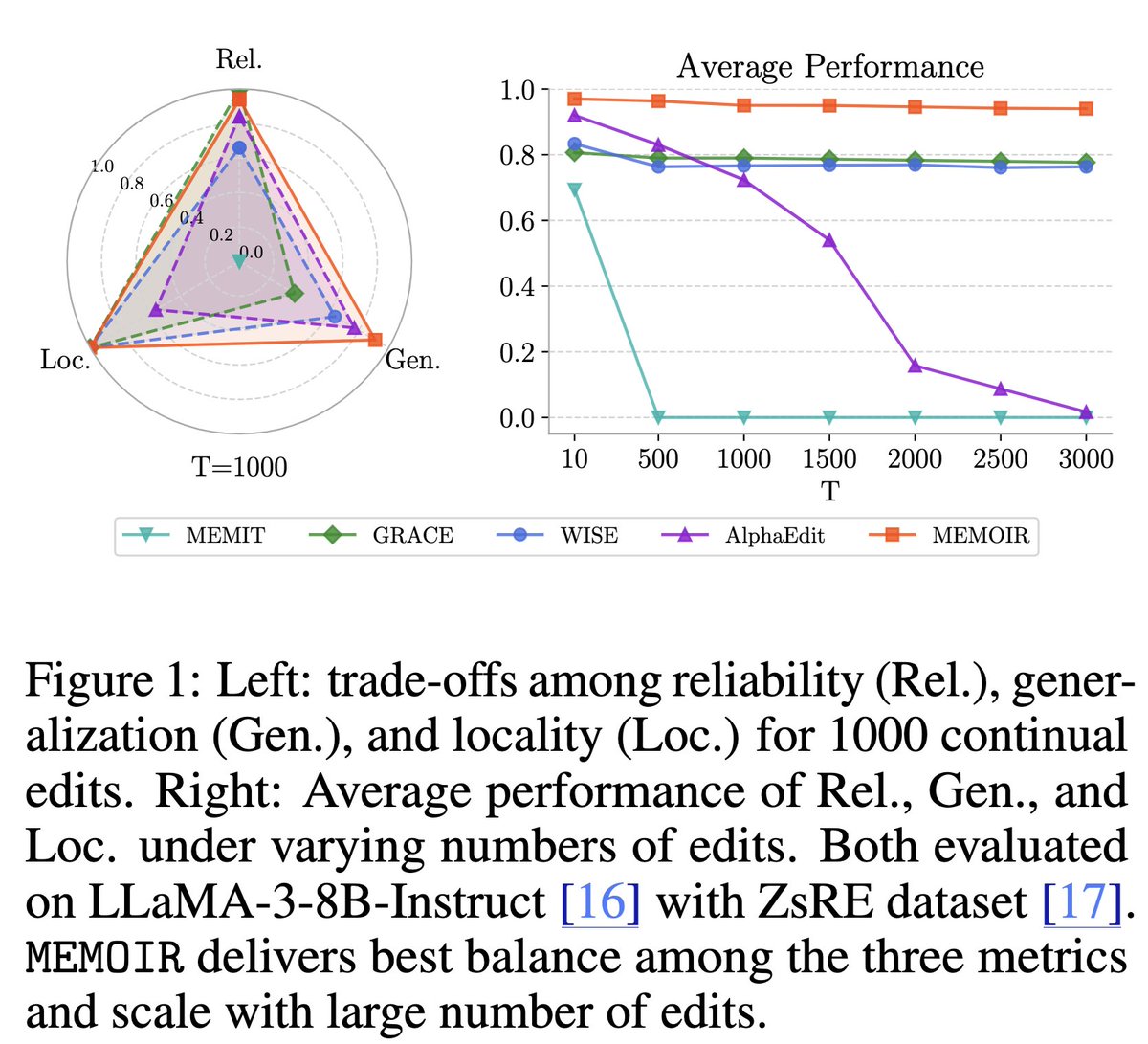

How can we inject new knowledge into LLMs without full retraining, forgetting, or breaking past edits? We introduce MEMOIR 📖— a scalable framework for lifelong model editing that reliably rewrites thousands of facts sequentially using a residual memory module. 🔥 🧵1/7

🚀 Presenting #DeFoG: our discrete flow-matching framework for graph generation! Catch our #ICML2025 oral presentation today (3:30 – 3:45 PM, in West Exhibition Hall C) and drop by the poster right after (4:30 – 7:00). Come chat graphs & generative models! @manuelmlmadeira

New Online! Decoding the interactions and functions of non-coding RNA with artificial intelligence bit.ly/4kNblk6

Are you interested in graph generation, from molecular discovery 🧪 to social networks 🌐? You’ll love DeFoG 🌬️😶‍🌫️, our new framework that delivers state-of-the-art performance in diverse graph generation tasks with unmatched efficiency! 🤩 📄: arxiv.org/abs/2410.04263 🧵1/9