Ani Aggarwal

25 posts

@AnirudAgg

Vision AI researcher | Applying for 2026 PhD | CS + Math from UMD | I like vision

Super excited to introduce ✨ AnyUp: Universal Feature Upsampling 🔎 Upsample any feature - really any feature - with the same upsampler, no need for cumbersome retraining. SOTA feature upsampling results while being feature-agnostic at inference time.

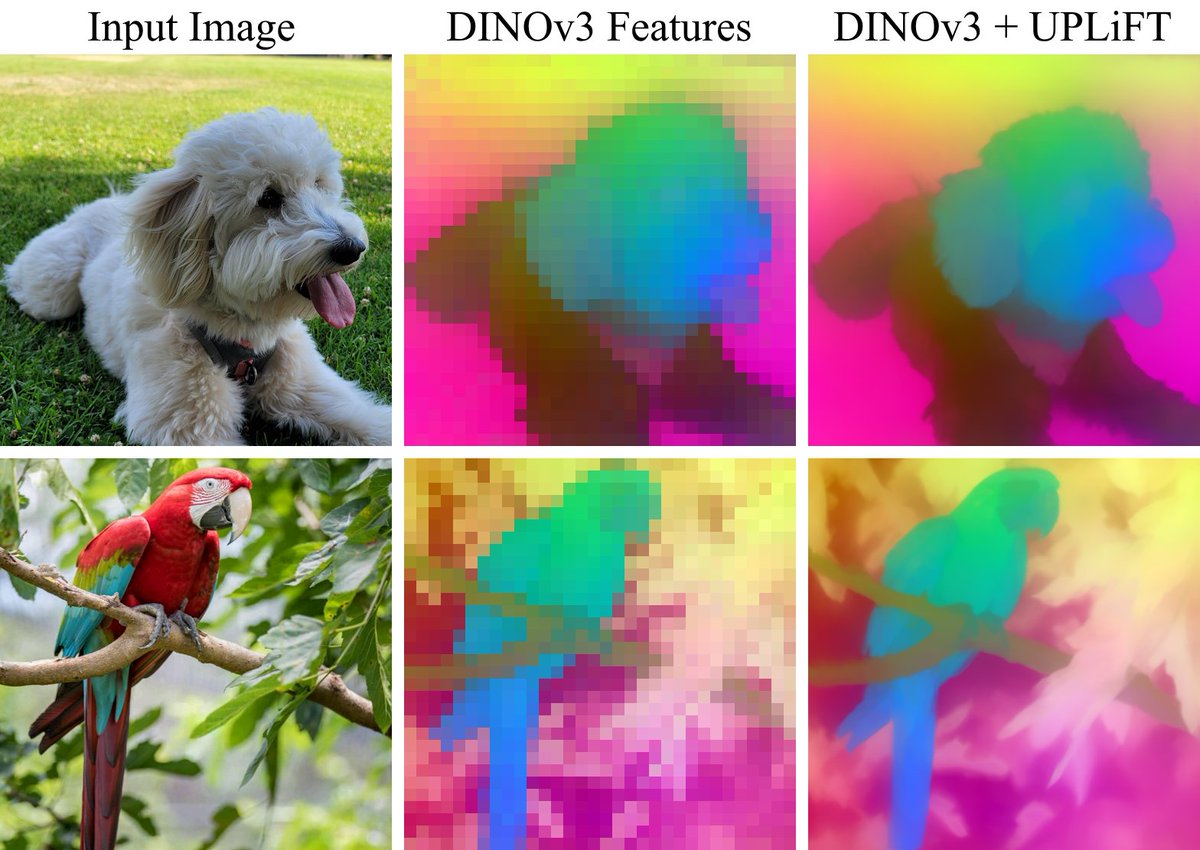

Excited to announce that UPLiFT has been accepted to #CVPR2026! You can also try out UPLiFT right now to extract pixel-dense DINOv3 features with our pretrained models linked below! Code: github.com/mwalmer-umd/UP… Paper: arxiv.org/abs/2601.17950 Website: cs.umd.edu/~mwalmer/uplif…

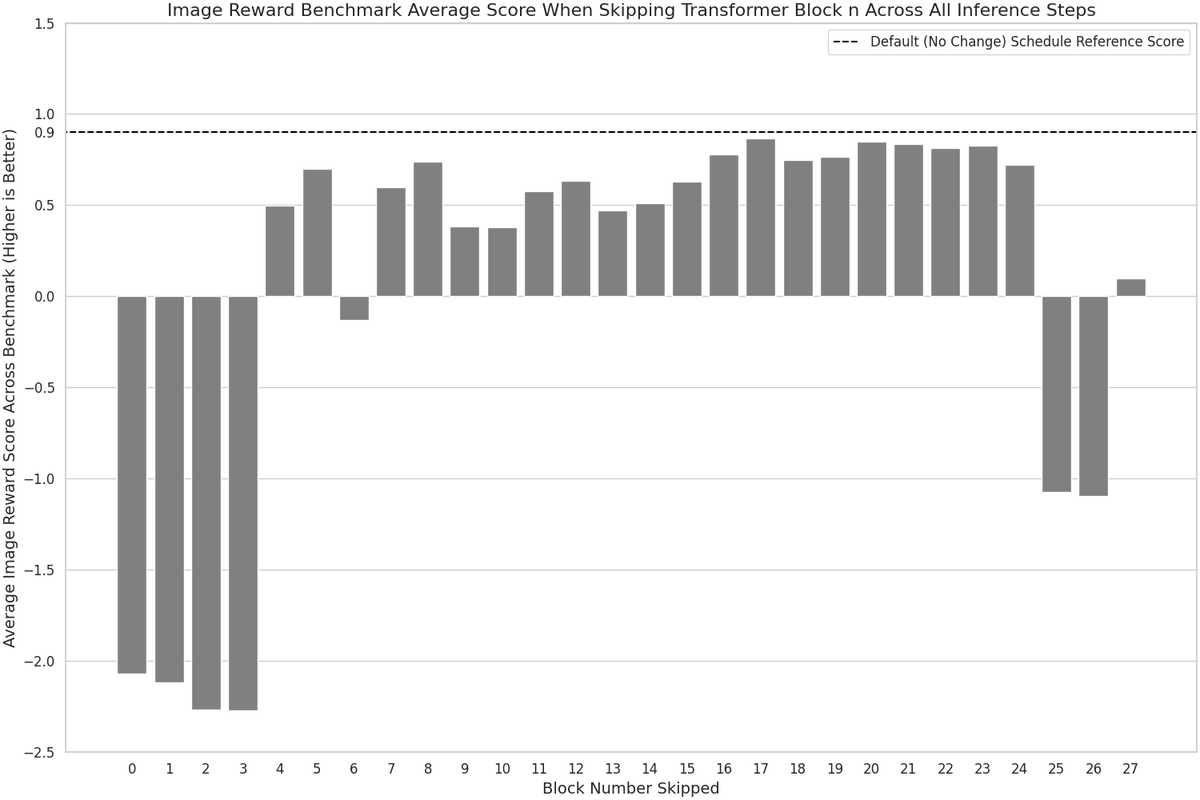

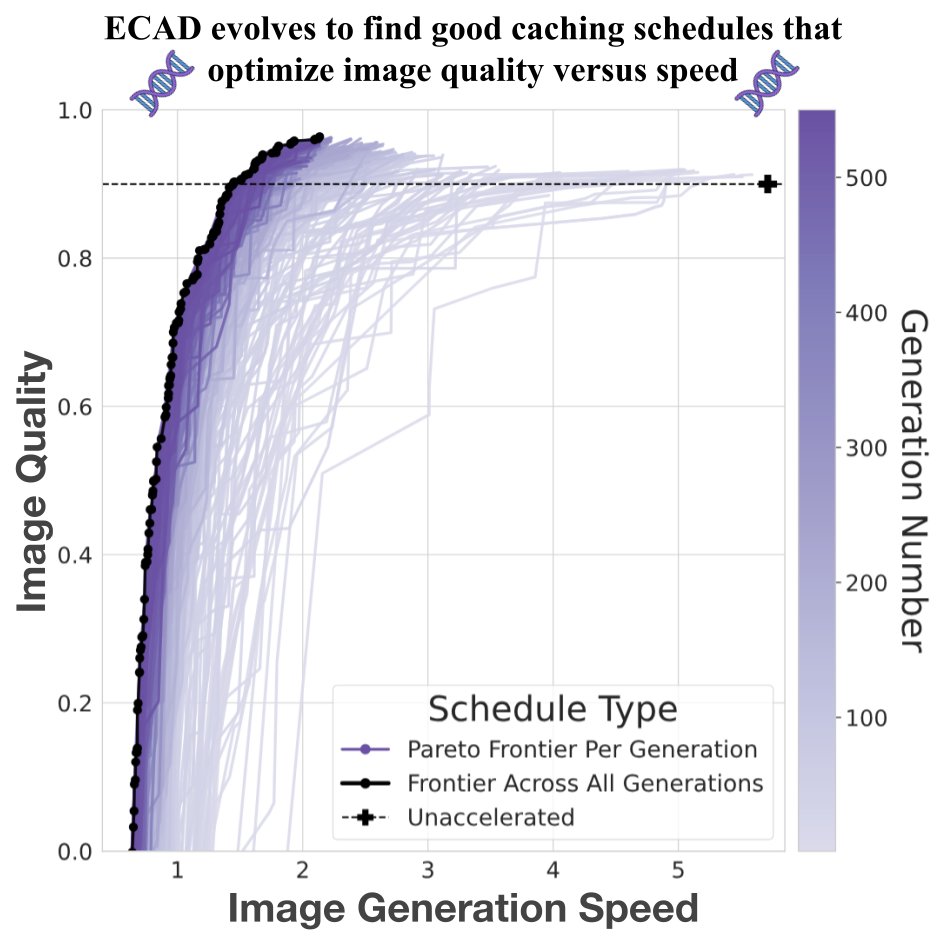

🧵 Your DiT, faster. Introducing ECAD: we reframe diffusion model caching as multi-objective optimization and evolve Pareto-optimal schedules via a genetic algorithm, achieving a 4.47 FID improvement at 2.58× speedup, with no retraining or tuning. 🔗 aniaggarwal.github.io/ecad #MachineLearning
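
For readers unfamiliar with "Pareto-optimal" in the post above, here is a minimal sketch of picking the non-dominated candidates when trading image quality against speed. It is not ECAD's code: the `(fid, speedup)` tuples, the function names, and all numbers are hypothetical, and the genetic algorithm that would generate candidate schedules is left out.

```python
from typing import List, Tuple

# Hypothetical candidate: (fid, speedup). Lower FID is better, higher speedup is better.
Candidate = Tuple[float, float]


def dominates(a: Candidate, b: Candidate) -> bool:
    """True if `a` is at least as good as `b` on both objectives and strictly better on one."""
    no_worse = a[0] <= b[0] and a[1] >= b[1]
    strictly_better = a[0] < b[0] or a[1] > b[1]
    return no_worse and strictly_better


def pareto_front(candidates: List[Candidate]) -> List[Candidate]:
    """Keep only the candidates that no other candidate dominates."""
    return [c for c in candidates if not any(dominates(o, c) for o in candidates)]


# Made-up values for illustration only, not results from any paper.
candidates = [(10.0, 1.5), (8.0, 2.0), (9.0, 1.8), (7.5, 1.2), (12.0, 2.6)]
front = pareto_front(candidates)
print(front)
```

In an evolutionary search, a filter like this would typically be applied to each generation so that only schedules offering a genuine quality/speed trade-off survive.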

We’re excited to announce UPLiFT, our lightweight, pixel-dense feature upsampler. UPLiFT boosts feature density, preserves semantics, and has better efficiency scaling than recent SOTA methods. See all links in the thread below. Coauthors: @_sakshams_ @AnirudAgg @abhi2610 🧵[1/6]

How can we create a single navigation policy that works for different robots in diverse environments AND can reach navigation goals with high precision? Happy to share our new paper, "VAMOS: A Hierarchical Vision-Language-Action Model for Capability-Modulated and Steerable Navigation"! 📜 Paper: arxiv.org/abs/2510.20818 🌐 Website: vamos-vla.github.io