Nathaniel Calhoun retweeted

Wharton’s latest AI study points to a hard truth: the “AI writes, humans review” model is breaking down

Why "just review the AI output" doesn't work anymore: our brains literally give up.

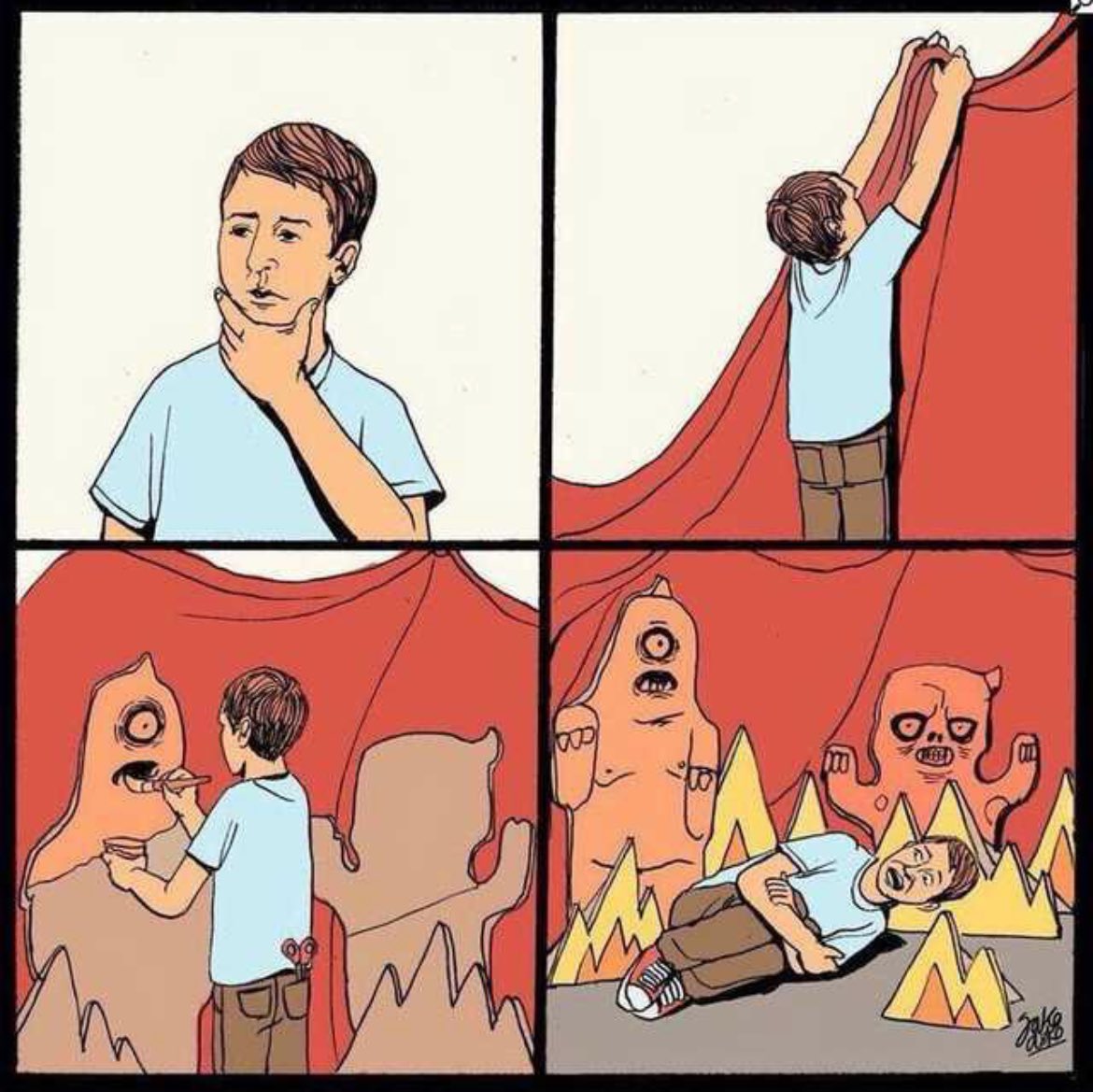

We have started practicing "cognitive surrender" to AI. Wharton’s latest AI study points to a hard truth: reviewing AI output is not a reliable safeguard when cognition itself starts to defer to the machine. Cognitive surrender is when you stop verifying what the AI tells you, and you don't even realize you stopped. It's different from offloading, like using a calculator.

With offloading you know the tool did the work. With surrender, your brain recodes the AI's answer as YOUR judgment. You genuinely believe you thought it through yourself.

The study says AI is becoming a third thinking system, and people often trust it too easily.

You know Kahneman's System 1 (fast intuition) and System 2 (slow analysis)? They're saying AI is now System 3, an external cognitive system that operates outside your brain. And when you use it enough, something happens that they call Cognitive Surrender.

Cognitive surrender is trickier than offloading: AI gives an answer, you stop really questioning it, and your brain starts treating that output as your own conclusion. It does not feel outsourced. It feels self-generated.

The data makes it hard to brush off. Across 3 preregistered studies with 1,372 participants and 9,593 trials, people turned to AI on over 50% of questions.

In Study 1, when AI was correct, people followed it 92.7% of the time. When it was wrong, they still followed it 79.8% of the time.

Without AI, baseline accuracy was 45.8%. With correct AI, it jumped to 71.0%. With incorrect AI, it dropped to 31.5%, worse than having no AI. Access to AI also boosted confidence by 11.7 percentage points, even when the answers were wrong.
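To put those gaps in plain numbers, here is a quick back-of-the-envelope sketch in Python. It only uses the Study 1 figures quoted above. The variable and function names are made up for illustration and do not come from the study.

```python
# Study 1 figures as quoted in this post, in percent.
# All names below are illustrative, not taken from the study.
BASELINE_ACCURACY = 45.8        # accuracy without AI
ACCURACY_CORRECT_AI = 71.0      # accuracy when the AI was correct
ACCURACY_INCORRECT_AI = 31.5    # accuracy when the AI was wrong
FOLLOW_WHEN_CORRECT = 92.7      # share who followed a correct AI answer
FOLLOW_WHEN_WRONG = 79.8        # share who followed a wrong AI answer


def point_change(value, reference):
    """Difference in percentage points, rounded to one decimal place."""
    return round(value - reference, 1)


gain_with_correct_ai = point_change(ACCURACY_CORRECT_AI, BASELINE_ACCURACY)
loss_with_incorrect_ai = point_change(ACCURACY_INCORRECT_AI, BASELINE_ACCURACY)
follow_gap = point_change(FOLLOW_WHEN_CORRECT, FOLLOW_WHEN_WRONG)

print(f"Correct AI vs. no AI:   {gain_with_correct_ai:+.1f} points")
print(f"Incorrect AI vs. no AI: {loss_with_incorrect_ai:+.1f} points")
print(f"Drop in following when the AI is wrong: {follow_gap:.1f} points")
```

So on these numbers a wrong AI costs about 14 points against the no-AI baseline, while the rate of following the AI falls by only about 13 points when it is wrong.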

Human review is supposed to be the safety net. But this research suggests the safety net has a hole in it: people do not just miss bad AI output; they become more confident in it.

Time pressure did not eliminate the effect. Incentives and feedback reduced it but did not remove it. And the people most resistant tended to score higher on fluid intelligence and need for cognition. That makes this feel less like a laziness problem and more like a cognitive architecture problem.
