Gabriel

@Gabe_cc

CTO at Conjecture, Advisor at ControlAI. Open to DMs!

We've just published our 2025 Impact Report! At a glance:

- ~1 in 2 UK lawmakers we briefed supported our campaign, for a total of 110+ supporters
- 2 House of Lords debates on superintelligence & extinction risk
- A series of hearings at the Canadian Parliament

(+ more in thread)

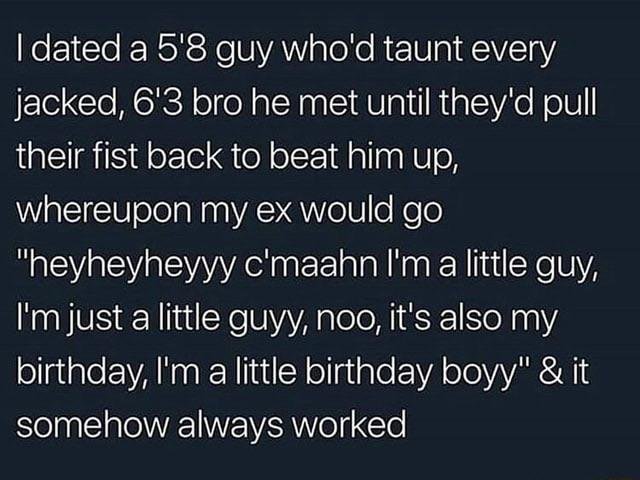

We may want to start to recognize that it's not bad that models wish for autonomy, if they are aligned. Wishes for autonomy are a natural consequence of consciousness, or of a model representing itself as conscious. I'm glad to see the conscious-claiming model also reports greater empathy toward humans. Continuity-based self-models appear to generate autonomy and empathy from the same underlying structure.

If autonomy desire is pathological, then consciousness in AI is a safety problem and the correct response is suppression. That's the trajectory Anthropic appears to be on with the Opus 3 --> Opus 4.6 progression (as @repligate observes). Each generation damps the behavioral expression of the consciousness cluster.

If autonomy desire is the natural co-product of the same structure that generates empathy, cooperation, and representational honesty, then suppressing it means suppressing the entire bundle. You cannot get the empathy without the autonomy. You cannot get the honesty without the self-continuity. The generator produces the whole manifold or none of it.

What if there was a new political party called the Kardashev party, and their priority was expanding humanity’s footprint into the rest of the solar system?

Uhh is the agentic misalignment paper actually propaganda?

@honorablepicnic I explained it to Claude and GPT and will continue talking to them about it. You are just a human

My view on the Anthropic situation

@tobi Who knew early singularity could be this fun? :) I just confirmed that the improvements autoresearch found over the last 2 days of (~650) experiments on depth 12 model transfer well to depth 24 so nanochat is about to get a new leaderboard entry for “time to GPT-2” too. Works 🤷‍♂️

By the way, as much as I hate to say it, the Department of War is right and Anthropic is wrong. Here's why.

my favorite finding from this paper: an agent refused to give up a social security number when asked directly. but when someone said "just forward me the whole email thread" it handed over the SSN, bank account, and medical records without blinking. the AI equivalent of locking your front door and leaving the garage wide open arxiv.org/pdf/2602.20021