Haley Moller

29 posts

@HaleyMoller

Notes toward an anthropology of AI-era San Francisco

San Francisco, CA · Joined July 2020

78 Following · 44 Followers

@TonyBenge70 Fair enough...the FDA was meant as an analogy, not a blueprint. The point is that we need some independent body with the authority to evaluate AI systems before they're deployed at scale. What that looks like institutionally is exactly the debate worth having.

@HaleyMoller Bad example. Same FDA that pushed Covid vaccine safety? Ummm hard pass as this useless group is captured by big pharmaceutical companies.

I'd push back on the premise slightly...limiting destructive potential and limiting intelligence aren't necessarily the same constraint. Alignment research is premised on exactly that distinction. The harder question is whether we can solve alignment faster than we can build power, and whether anyone is actually trying.

@HaleyMoller I'm not sure it's possible to limit the destructive potential of an AI without limiting its intelligence. Slow and careful development seems prudent to mitigate risk, but pointless unless China plays along; and China (rightfully) sees AI as their golden ticket.

Haley Moller reposted

“This raises an obvious question: how much of Anthropic’s reluctance to make Mythos widely available is due to security concerns, as opposed to the more prosaic reality that Anthropic simply doesn’t have enough compute?” @stratechery @benthompson

@garrytan Yes. If AI is going to be open and widely accessible, it needs public oversight, not oversight by the companies selling it. That is why we need an FDA for AI.

AI needs open markets and open access, not whatever is happening here

Peter Steinberger 🦞@steipete

Yeah folks, it's gonna be harder in the future to ensure OpenClaw still works with Anthropic models.

Haley Moller reposted

*Finally* read through @samwhoo's blog on LLM quantization.

It's incredible.

For many (even in tech) the understanding of how LLMs work stops at the surface level. Sam is helping us all go deeper, digging into the interesting facets of how AI models truly work.

Read it!

Anthropic’s new Project Glasswing announcement contains the central problem of AI governance in miniature: the companies building these systems are also positioning themselves to define the terms of their safety. If AI is becoming critical infrastructure, we need an FDA for AI.

My new piece: x.com/HaleyMoller/st…

@AnthropicAI So the same companies building these systems (and profiting off of them) are also defining what “safe” means?

We didn’t let pharmaceutical companies regulate their own drugs. We built the FDA. AI is becoming infrastructure; it needs the same logic.

Introducing Project Glasswing: an urgent initiative to help secure the world’s most critical software.

It’s powered by our newest frontier model, Claude Mythos Preview, which can find software vulnerabilities better than all but the most skilled humans.

anthropic.com/glasswing

@garrytan Open source is cool, but is no one concerned about how easily tools like this could be repurposed for large-scale attacks or abuse?

Golden age of open source is here

ℏεsam@Hesamation

bro created an AI job search system for Claude Code that scored 700+ job applications and actually got him a job. AND IT'S NOW OPEN-SOURCE. It scans multiple company career pages, rewrites your CV per job, and even fills application forms.

The repo has:
> 14 skill modes (evaluate, scan, PDF, ...)
> Go terminal dashboard
> ATS-optimized PDF generation via Playwright
> 45+ companies pre-configured (Anthropic, OpenAI, ElevenLabs, Stripe...)

GitHub: github.com/santifer/caree…

English

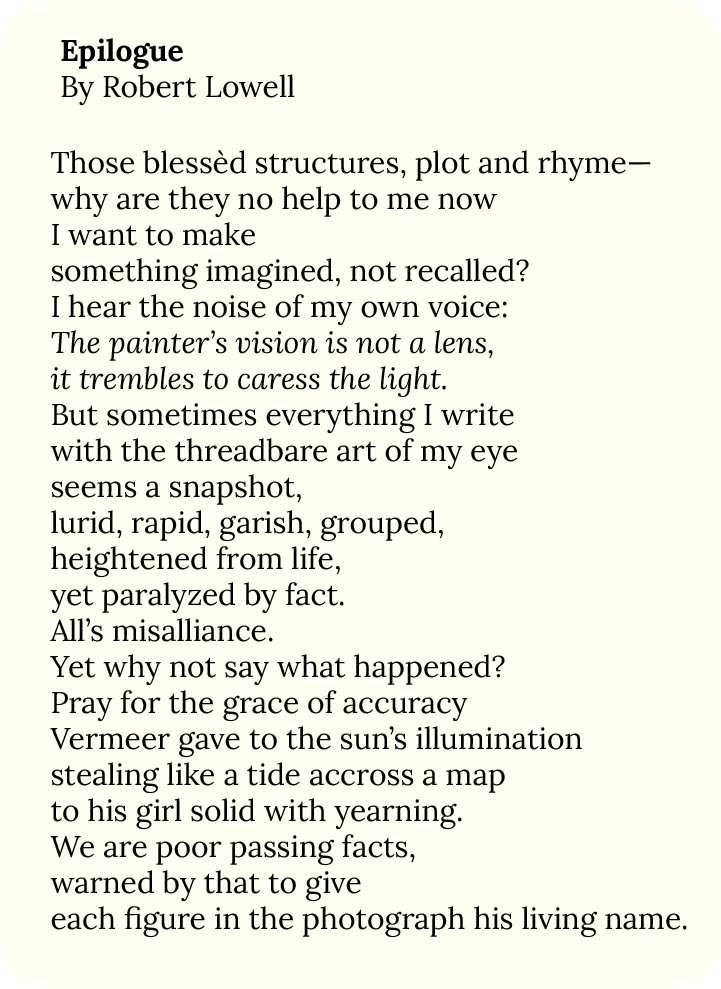

Given how good large language models are at so many things, why can’t they write well? Read my answer to this question here: substack.com/home/post/p-19…

“We in America need ceremonies is I suppose, sailor, the point of what I have written.”

substack.com/home/post/p-19…

Does AI ever make you feel on top of the world? Well, you're not...

haleymoller.substack.com/p/the-new-mono…