Pinned Tweet

Hanjung Kim

75 posts

@KimD0ing

Research Scientist Intern @nvidia GEAR | Ph.D. student @ Yonsei University | prev. @nyuuniversity

Santa Clara, CA | Joined February 2023

289 Following | 179 Followers

Hanjung Kim retweeted

Robotics: coding agents’ next frontier.

So how good are they?

We introduce CaP-X: an open-source framework and benchmark for coding agents, where they write code for robot perception and control, execute it on sim and real robots, observe the outcomes, and iteratively improve code reliability.

From @NVIDIA @Berkeley_AI @CMU_Robotics @StanfordAILab

capgym.github.io

🧵

Hanjung Kim retweeted

SAM 3.1 is here 🚀

7x faster with 128 objects, without sacrificing any quality

Glad to have contributed as part of the SAM team

Special kudos to our intern @hkchengrex for the amazing contribution! 🙌

AI at Meta @AIatMeta

We’re releasing SAM 3.1: a drop-in update to SAM 3 that introduces object multiplexing to significantly improve video processing efficiency without sacrificing accuracy. We’re sharing this update with the community to help make high-performance applications feasible on smaller, more accessible hardware.

🔗 Model Checkpoint: go.meta.me/8dd321

🔗 Codebase: go.meta.me/b0a9fb

Hanjung Kim retweeted

🎓I defended my PhD at @OhioState!

Grateful to my advisor @ysu_nlp and all my collaborators along the way :)

Excited to be starting at @nvidia (just in time for #NVIDIAGTC😆) and continuing my research on spatial intelligence in multimodal foundation models.

Hanjung Kim retweeted

We trained a humanoid with 22-DoF dexterous hands to assemble model cars, operate syringes, sort poker cards, fold/roll shirts, all learned primarily from 20,000+ hours of egocentric human video with no robot in the loop.

Humans are the most scalable embodiment on the planet. We discovered a near-perfect log-linear scaling law (R² = 0.998) between human video volume and action prediction loss, and this loss directly predicts real-robot success rate.
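A log-linear relationship of this kind has the form loss = a + b · ln(hours). The snippet below is a minimal sketch of how such a fit and its R² could be computed. It is not the EgoScale code: the function name `fit_log_linear` and the data points are hypothetical and chosen only for illustration.

```python
import math

def fit_log_linear(hours, losses):
    """Ordinary least-squares fit of loss = a + b * ln(hours).

    Returns (a, b, r_squared). Hypothetical helper for illustration only.
    """
    xs = [math.log(h) for h in hours]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(losses) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, losses))
    b = sxy / sxx                      # slope: loss change per unit of ln(hours)
    a = mean_y - b * mean_x            # intercept
    ss_res = sum((y - (a + b * x)) ** 2 for x, y in zip(xs, losses))
    ss_tot = sum((y - mean_y) ** 2 for y in losses)
    return a, b, 1.0 - ss_res / ss_tot

# Synthetic (made-up) data: an exact log-linear trend plus small perturbations.
hours = [1_000, 2_000, 5_000, 10_000, 20_000]
perturbation = [0.002, -0.001, 0.001, -0.002, 0.0]
losses = [2.0 - 0.1 * math.log(h) + p for h, p in zip(hours, perturbation)]

a, b, r2 = fit_log_linear(hours, losses)
print(f"intercept={a:.3f} slope={b:.3f} R^2={r2:.4f}")
```

A negative slope means the loss falls by a fixed amount each time the data volume is multiplied by a constant factor, which is what a log-linear law describes.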

Humanoid robots will be the end game, because they are the practical form factor with minimal embodiment gap from humans. Call it the Bitter Lesson of robot hardware: the kinematic similarity lets us simply retarget human finger motion onto dexterous robot hand joints. No learned embeddings, no fancy transfer algorithms needed. Relative wrist motion + retargeted 22-DoF finger actions serve as a unified action space that carries through from pre-training to robot execution.

Our recipe is called "EgoScale":

- Pre-train GR00T N1.5 on 20K hours of human video, mid-train with only 4 hours (!) of robot play data with Sharpa hands. 54% gains over training from scratch across 5 highly dexterous tasks.

- Most surprising result: a *single* teleop demo is sufficient to learn a never-before-seen task. Our recipe enables extreme data efficiency.

- Although we pre-train in 22-DoF hand joint space, the policy transfers to a Unitree G1 with 7-DoF tri-finger hands. 30%+ gains over training on G1 data alone.

The scalable path to robot dexterity was never more robots. It was always us.

Deep dives in thread:

Hanjung Kim retweeted

Announcing DreamDojo: our open-source, interactive world model that takes robot motor controls and generates the future in pixels. No engine, no meshes, no hand-authored dynamics. It's Simulation 2.0. Time for robotics to take the bitter lesson pill.

Real-world robot learning is bottlenecked by time, wear, safety, and resets. If we want Physical AI to move at pretraining speed, we need a simulator that adapts to pretraining scale with as little human engineering as possible.

Our key insights: (1) human egocentric videos are a scalable source of first-person physics; (2) latent actions make them "robot-readable" across different hardware; (3) real-time inference unlocks live teleop, policy eval, and test-time planning *inside* a dream.

We pre-train on 44K hours of human videos: cheap, abundant, and collected with zero robot-in-the-loop. Humans have already explored the combinatorics: we grasp, pour, fold, assemble, fail, retry—across cluttered scenes, shifting viewpoints, changing light, and hour-long task chains—at a scale no robot fleet could match. The missing piece: these videos have no action labels. So we introduce latent actions: a unified representation inferred directly from videos that captures "what changed between world states" without knowing the underlying hardware. This lets us train on any first-person video as if it came with motor commands attached.

As a result, DreamDojo generalizes zero-shot to objects and environments never seen in any robot training set, because humans saw them first.

Next, we post-train onto each robot to fit its specific hardware. Think of it as separating "how the world looks and behaves" from "how this particular robot actuates." The base model follows the general physical rules, then "snaps onto" the robot's unique mechanics. It's kind of like loading a new character and scene assets into Unreal Engine, but it is done through gradient descent and generalizes far beyond the post-training dataset.

A world simulator is only useful if it runs fast enough to close the loop. We train a real-time version of DreamDojo that runs at 10 FPS, stable for over a minute of continuous rollout. This unlocks exciting possibilities:

- Live teleoperation *inside* a dream. Connect a VR controller, stream actions into DreamDojo, and teleop a virtual robot in real time. We demo this on Unitree G1 with a PICO headset and one RTX 5090.

- Policy evaluation. You can benchmark a policy checkpoint in DreamDojo instead of the real world. The simulated success rates strongly correlate with real-world results - accurate enough to rank checkpoints without burning a single motor.

- Model-based planning. Sample multiple action proposals → simulate them all in parallel → pick the best future. Gains +17% real-world success out of the box on a fruit packing task.
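The sample, simulate, and pick loop in the planning bullet can be sketched as a best-of-N search. This is a toy illustration, not DreamDojo's implementation: `plan_best_of_n`, `propose`, `simulate`, `score`, and the 1-D state are all hypothetical stand-ins, and the serial loop takes the place of the parallel rollouts described above.

```python
import random

def plan_best_of_n(state, propose, simulate, score, num_proposals=8, rng=None):
    """Sample action sequences, roll each out in a model, keep the best one."""
    rng = rng or random.Random(0)
    best_actions, best_score = None, float("-inf")
    for _ in range(num_proposals):
        actions = propose(state, rng)       # candidate action sequence
        future = simulate(state, actions)   # imagined outcome from the model
        s = score(future)                   # higher is better
        if s > best_score:
            best_actions, best_score = actions, s
    return best_actions, best_score

# Toy stand-ins (assumptions): a scalar state that the actions shift additively.
GOAL = 2.0

def propose(state, rng, horizon=5):
    return [rng.uniform(-1.0, 1.0) for _ in range(horizon)]

def simulate(state, actions):
    return state + sum(actions)

def score(future):
    return -abs(future - GOAL)              # closer to the goal scores higher

actions, value = plan_best_of_n(0.0, propose, simulate, score, num_proposals=16)
print(f"best score among 16 proposals: {value:.3f}")
```

In a real system, `simulate` would be a learned world model and `score` a task-specific value or success estimate. Both choices are outside what the post specifies.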

We open-source everything!! Weights, code, post-training dataset, eval set, and whitepaper with tons of details to reproduce. DreamDojo is based on NVIDIA Cosmos, which is open-weight too.

2026 is the year of World Models for physical AI. We want you to build with us. Happy scaling!

Links in thread:

Hanjung Kim retweeted

SONIC is now open-source!

Generalist whole-body teleoperation for EVERYONE!

Our team has long been building comprehensive pipelines for whole-body control, kinematic planner, and teleoperation, and they will all be shared.

This will be a continuous update; inference code + model already there, training code and gr00t integration coming soon!

Code: github.com/NVlabs/GR00T-W…

Docs: nvlabs.github.io/GR00T-WholeBod…

Site: nvlabs.github.io/GEAR-SONIC/

Hanjung Kim retweeted

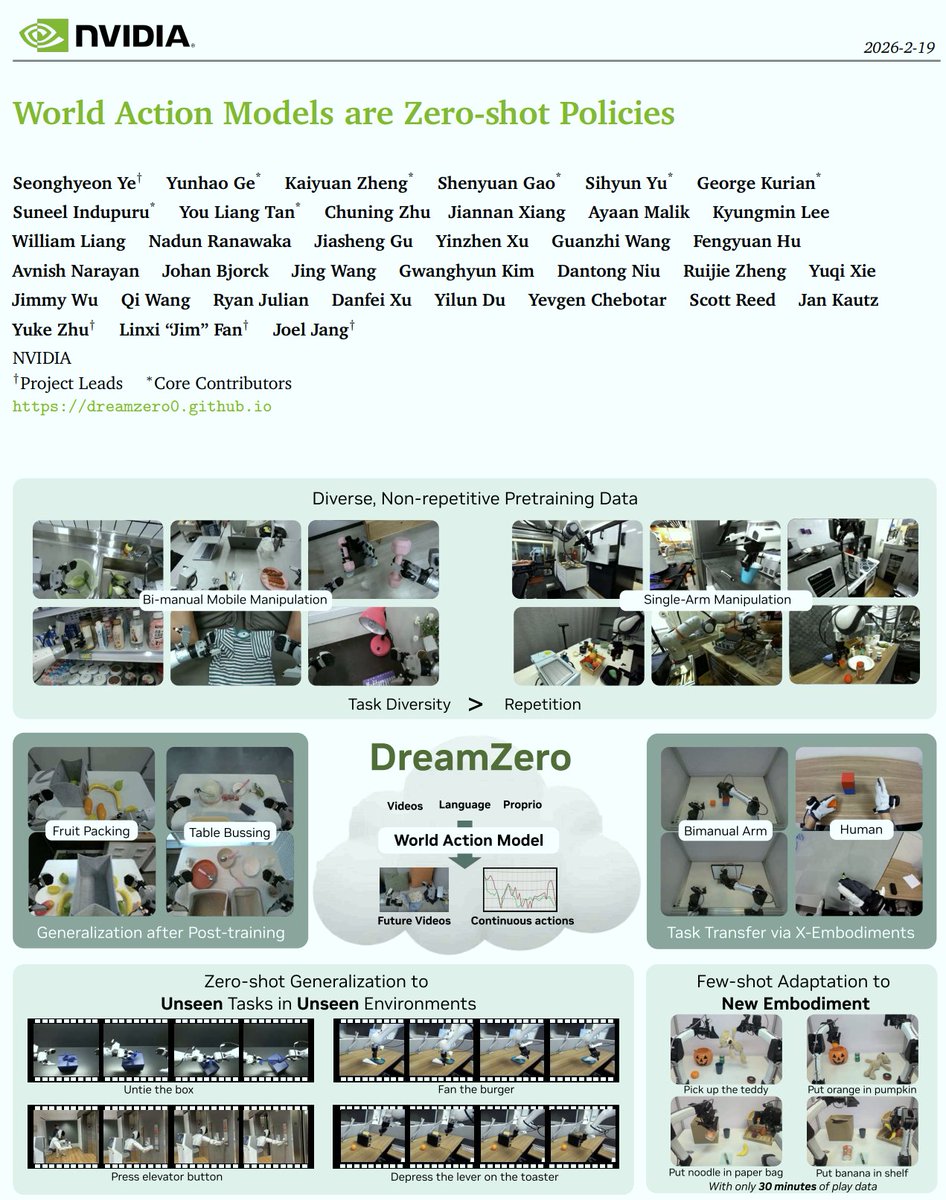

VLAs (from VLMs) ❌ => WAMs (from Video Models) ✅

Why WAMs?

1️⃣ World Physics: VLMs know the internet, but Video Models implicitly model the physical laws essential for manipulation.

2️⃣ The "GPT Direction": VLAs are like BERT (rely heavily on task-specific post-training). WAMs are like GPT (pre-train & prompt), unlocking incredible zero-shot transfer!

What I want to see in 2026:

📈 Scaling Laws: We will see much clearer scaling laws for robotics compared to VLAs.

🤝 Human-to-Robot Transfer: Unlocking massive transfer capabilities using video as a shared representation space.

🤖 Zero-Shot Mastery: Moving from short-horizon tasks to long-horizon, dexterous manipulation without task-specific demonstrations.

We recently open-sourced the checkpoints, training and inference code.

Dive into the research! 👇

📄 Paper: arxiv.org/abs/2602.15922

💻 Code: github.com/dreamzero0/dre…

🤗 HF: huggingface.co/GEAR-Dreams/Dr…

Hanjung Kim retweeted

Robot foundation models are limited by costly real data, while simulation data is plentiful but visually mismatched to reality. We present Point Bridge, a method that enables zero-shot sim-to-real transfer for robot learning with minimal visual alignment.

pointbridge3d.github.io

Hanjung Kim retweeted

Some exciting takeaways in addition to Brent's post:

• We show flow policies working for sim2real humanoid locomotion & motion tracking without distillation or shortcut models.

• The same recipe works for both from-scratch RL and BC → RL fine-tuning for manipulation, with no bells and whistles.

Code will be released: github.com/amazon-far/fpo…

Brent Yi @brenthyi

New project! Flow Policy Gradients for Robot Control

tl;dr: a simple online RL recipe for training and fine-tuning flow policies for robots

Co-led w/ @redstone_hong: hongsukchoi.github.io/fpo-control

Hanjung Kim retweeted

Introducing DreamZero 🤖🌎 from @nvidia

> A 14B “World Action Model” that achieves zero-shot generalization to unseen tasks & few-shot adaptation to new robots

> The key? Jointly predicting video & actions in the same diffusion forward pass

Project Page: dreamzero0.github.io

🧵 (1/10)

Hanjung Kim retweeted

Very happy to share that I moved to the Bay Area and joined the Gemini team at @googledeepmind! Grateful to be working with a great team on long horizons, RL for LLMs, and agents.

I'm looking forward to seeing old friends again and making new ones, DMs are open :)

Hanjung Kim retweeted

We just released AINA, a framework for learning robot policies from Aria 2 demos, and are now open-sourcing the code: github.com/facebookresear…. It includes:

✅ Aria 2 data processing into 3D observations, as shown

✅ Training of point-based policies

✅ Calibration

Give it a try!
